TITLE: Search, Abstractions, and Big Epistemological Questions

AUTHOR: Eugene Wallingford

DATE: September 11, 2015 3:55 PM

DESC:

-----

BODY:

Andy Soltis is an American grandmaster who writes a monthly

column for Chess Life called "Chess to Enjoy". He

has also written several good books, both recreational and

educational. In his August 2015 column, Soltis talks about

a couple of odd ways in which computers interact with humans

in the chess world, ways that raise bigger questions about

teaching and the nature of knowledge.

As most people know, computer programs -- even commodity

programs one can buy at the store -- now play chess better

than the best human players. Less than twenty years ago,

Deep Blue

first defeated world champion Garry Kasparov in a single

game. A year later, Deep Blue defeated Kasparov in a closely

contested six-game match. By 2005, computers were crushing

Top Ten players with regularity. These days, world champion

Magnus Carlsen is no match for his chess computer.

Yet there are still moments where humans shine through. Soltis

opens with a story in which two GMs were playing a game the

computers thought Black was winning, when suddenly Black

resigned. Surprised journalists asked the winner, GM Vassily

Ivanchuk, what had happened. It was easy, he said: it only

looked like Black was winning. Well beyond the computers'

search limits, it was White that had a textbook win.

How could the human players see this? Were they searching

deeper than the computers? No. They understood the position

at a higher level, using abstractions such as "being in the

square" and passed pawns splitting a King like "pants".

(We chessplayers are an odd lot.)
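To make the first of those abstractions concrete, here is a minimal sketch of the "rule of the square" in Python. It assumes a lone defending king chasing a single White passed pawn, with no other pieces interfering; the function name and the (file, rank) encoding are hypothetical choices for illustration, not anything from Soltis's column. The point is that a human answers "can the king catch the pawn?" with one comparison rather than a search.

```python
def in_the_square(pawn, king, defender_to_move):
    """Return True if a lone defending king can catch a White passed pawn.

    Squares are (file, rank) pairs with values 1..8. Assumes no other
    pieces matter, which is the simplification the abstraction makes.
    """
    pawn_file, pawn_rank = pawn
    king_file, king_rank = king
    # A pawn on its starting rank may advance two squares, so treat it
    # as if it already stood on the third rank.
    effective_rank = max(pawn_rank, 3)
    moves_to_promote = 8 - effective_rank
    # A king moves one square in any direction, so its distance to the
    # promotion square is the larger of the file and rank differences.
    king_distance = max(abs(king_file - pawn_file), 8 - king_rank)
    # The defender gets one extra step if it moves first.
    budget = moves_to_promote + (1 if defender_to_move else 0)
    return king_distance <= budget


# Pawn on a4, king on e4, pawn to move: the king is inside the square.
print(in_the_square((1, 4), (5, 4), defender_to_move=False))
# King on f4 instead: outside the square unless the king moves first.
print(in_the_square((1, 4), (6, 4), defender_to_move=False))
print(in_the_square((1, 4), (6, 4), defender_to_move=True))
```

A search-based program would reach the same verdict only by playing the race out move by move.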

When you can define 'flexibility' in 12 bits,

it will go into the program.

Attempts to program computers to play chess using such abstract

ideas did not work all that well. Concepts like king safety

and piece activity proved difficult to implement in code, but

eventually found their way into the programs. More abstract

concepts like "flexibility", "initiative", and "harmony" have

proven all but impossible to implement. Chess programs got

better -- quickly -- when two things happened: (1) programmers

began to focus on search, implementing metrics that could be

applied rapidly to millions of positions, and (2) computer

chips got much, much faster.
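The search-plus-fast-metric idea can be sketched in a few lines of Python. This is a toy, not how any particular engine is written: the tree, the move names, and the static scores below are all invented for illustration, and real programs add refinements such as alpha-beta pruning. It shows a depth-limited negamax search applying a cheap evaluation at its horizon, and how the verdict changes once the search looks one ply deeper.

```python
# A tiny hand-made game tree: each position maps to the positions
# reachable from it. All names and scores here are hypothetical.
TREE = {
    "root": ["grab", "quiet"],
    "grab": ["trap"],
    "quiet": ["hold"],
    "trap": [],
    "hold": [],
}

# Static scores from the point of view of the side to move in that
# position. After "grab" the opponent is a pawn down (-1 for them),
# but one ply later the grabber stands much worse (-5).
STATIC = {"root": 0, "grab": -1, "quiet": 0, "trap": -5, "hold": 0}


def negamax(node, depth):
    """Score `node` for the side to move, looking `depth` plies ahead."""
    children = TREE[node]
    if depth == 0 or not children:
        # At the search horizon, fall back on the fast metric.
        return STATIC[node]
    # A position is as good for us as the best reply is bad for them.
    return max(-negamax(child, depth - 1) for child in children)


print(negamax("root", 1))  # shallow search likes the pawn grab
print(negamax("root", 2))  # one ply deeper, the grab is refuted
```

With a one-ply horizon the program scores the position at +1 because it sees only the won pawn; at two plies it sees the trap and settles for the quiet move at 0. Anything beyond the horizon is invisible to it.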

The result is that chess programs can beat us by seeing farther

down the tree of possibilities than we do. They make moves

that surprise us, puzzle us, and even offend our sense of

beauty: "Fischer or Tal would have played this move;

it is much more elegant." But they win, easily -- except when

they don't. Then we explain why, using ideas that express an

understanding of the game that even the best chessplaying

computers don't seem to have.

This points out one of the odd ways computers relate to us in

the world of chess. Chess computers crush us all, including

grandmasters, using moves we wouldn't make and many of us do

not understand. But good chessplayers do understand

why moves are good or bad, once they figure it out. As Soltis

says:

And we can put the explanation in words. This is why chess

teaching is changing in the computer age. A good coach has

to be a good translator. His students can get their machine

to tell them the best move in any position, but they need

words to make sense of it.

Teaching computer science at the university is affected by

a similar phenomenon. My students can find on the web code

samples to solve any problem they have, but they don't always

understand them. This problem existed in the age of the book,

too, but the web makes available so much material, often

undifferentiated and unexplained, so, so quickly.

The inverse of computers making good moves we don't understand

brings with it another oddity, one that plays to a different

side of our egos. When a chess computer loses -- gasp! -- or

fails to understand why a human-selected move is better than

the moves it recommends, we explain it using words that make

sense of the human move. These are, of course, the same words and

concepts that fail us most of the time when we are looking for

a move to beat the infernal machine. Confirmation bias lives

on.

Soltis doesn't stop here, though. He realizes that this

strange split raises a deeper question:

Maybe it's one that only philosophers care about, but I'll

ask it anyway:

Are concepts like "flexibility" real? Or are they just

artificial constructs, created by and suitable only for

feeble, carbon-based minds?

(Philosophers are not the only ones who care. I do. But

then, the epistemology course I took in grad school remains

one of my two favorite courses ever. The second was cognitive

psychology.)

We can implement some of our ideas about chess in programs,

and those ideas have helped us create machines we can no

longer defeat over the board. But maybe some of our concepts

are simply fictions, "just so" stories we tell ourselves

when we feel the need to understand something we really don't.

I don't think so, but the pragmatist in me keeps pushing for

better evidence.

Back when I did research in artificial intelligence, I always

chafed at the idea of neural networks. They seemed to be a

fine model of how our brains worked at the lowest level, but

the results they gave did not satisfy me. I couldn't ask

them "why?" and receive an answer at the conceptual level at

which we humans seem to live. I could not have a conversation

with them in words that helped me understand their solutions,

or their failures.

Now we live in a world of "deep learning", in which Google

Translate can do a dandy job of translating a foreign phrase

for me but never tell me why it is right, or explain the

subtleties of choosing one word instead of another. Add more

data, and it translates even better. But I still want the

sort of explanation that Ivanchuk gave about his win or the

sort of story Soltis can tell about why a computer program

only drew a game because it saddled itself with an inflexible

pawn structure.

Perhaps we have reached the limits of my rationality. More

likely, though, is that we will keep pushing forward, bringing

more human concepts and abstractions within the bounds of what

programs can represent, do, and say. Researchers like

Douglas Hofstadter

continue the search, and I'm glad. There are still plenty of

important questions to ask about the nature of knowledge, and

computer science is right in the middle of asking and answering

them.

~~~~

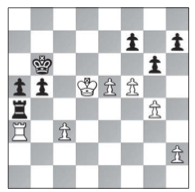

IMAGE 1. The critical position in Ivanchuk-Jobava, Wijk aan

Zee 2015, the game to which Soltis refers in his

story. Source:

Chess Life,

August 2015, Page 17.

IMAGE 2. The cover of Andy Soltis's classic Pawn

Structure Chess. Source: the book's page at

Amazon.com.

IMAGE 3. A bust of Aristotle, who confronted Plato's ideas

about the nature of ideals. Source:

Classical Wisdom Weekly.

-----