March 30, 2007 6:51 PM

A Hint for Idealess Web Entrepreneurs

I'm still catching up on blog reading and just ran across this from Marc Hedlund:

One of my favorite business model suggestions for entrepreneurs is, find an old UNIX command that hasn't yet been implemented on the web, and fix that. talk and finger became ICQ, LISTSERV became Yahoo! Groups, ls became (the original) Yahoo!, find and grep became Google, rn became Bloglines, pine became Gmail, mount is becoming S3, and bash is becoming Yahoo! Pipes. I didn't get until tonight that Twitter is wall for the web.

Show of hands -- how many of you have used every one of those Unix commands? The rise of Linux means that my students don't necessarily think of me as a dinosaur for having used all of them!

I wonder when rsync will be the next big thing on the web. Or has someone already done that one, too?

Then Noel Welsh points out a common thread:

The real lesson, I think, is that the basics of human nature are pretty constant. A lot of the examples above are about giving people a way to talk. It's not a novel idea, it's just the manifestation that changes.

Alan Kay is right -- perhaps the greatest impact of computing will ultimately be as a new medium of communication, not as computation per se. Just this week an old friend from OOPSLA and SIGCSE dropped me a line after stumbling upon Knowing and Doing via a Google search for an Alan Kay quote. He wrote, "Your blog illustrates the unique and personal nature of the medium..." And I'm a pretty pedestrian blogger way out on the long tail of the blogosphere.

This isn't to say that computation qua computation isn't exceedingly important. I have a colleague who continually reminds us young whippersnappers about the value added by scientific applications of computing, and he's quite right. But it's interesting to watch the evolution of the web as a communication channel, and as our discipline lays the foundation for a new way to speak we make possible the sort of paradigm shift that Kay foretells. And this paradigm shift will put the lie to the software world's idea that moving from C to C++ is a "paradigm shift". To reach Kay's goal, though, we need to make the leap from social software to everyman-as-programmer, though that sort of programming may look nothing like what we call programming today.

March 30, 2007 12:06 PM

One of Those Weeks

I honestly feel like my best work is still ahead of me.

I'm just not sure I can catch up to it.

I owe this gem, which pretty much sums up how I have felt all week, to comedian Drew Hastings, courtesy of the often bawdy but, to my tastes, always funny Bob and Tom Show. Hastings is nearly a decade older than I, but I think we all have this sense sooner or later. Let's hope it passes!

I owe you some computing content, so here is an interview with Fran Allen, who recently received the 2006 Turing Award. She challenges us to recruit women more effectively ("Could the problem be us?") and to help our programming languages and compilers catch up with advances in supercomputing ("Only the bold should apply!")

March 29, 2007 4:20 PM

It Seemed Like a Good Idea at the Time

At SIGCSE a couple of weeks ago, I attended an interesting pair of complementary panel sessions. I wrote about one, Ten Things I Wish They Would Have Told Me..., in near-real time. Its complement was a panel called "It Seemed Like a Good Idea at the Time". Here, courageous instructors got up in front of a large room full of their peers to do what for many is unthinkable: tell everyone about an idea they had that failed in practice. When the currency of your profession is creating good ideas, telling everyone about one of your bad ideas is unusual. Telling everyone that you implemented your bad idea and watched it explode, well, that's where the courage comes in.

My favorite story from the panel was from the poor guy who turned his students loose writing Java mail agents -- on his small college's production network. He even showed a short video of one of the college's IT guys describing the effects of the experiment, in a perfect deadpan. Hilarious.

We all like good examples that we can imitate. That's why we are all drawn to panels such as "Ten Things..." -- for material to steal. But other than the macabre humor we see in watching someone else's train wreck, what's the value in a panel full of bad examples?

The most immediate answer is that we may have had the same idea, and we can learn from someone else's bad example. We may decide to pitch the idea entirely, or to tweak our idea based on the bad results of our panelist. This is useful, but the space of ideas -- good and bad -- is large. There are lots of ways to tweak a bad idea, and not all of them result in a good idea. And knowing that an idea is bad leaves us with the real question unanswered: Just what should we do?

(The risk that the cocky among us face is the attitude that says, "Well, I can make that work. Just watch." This is the source of great material for the next "It Seemed Like a Good Idea at the Time" panel!)

All this said, I think that failed attempts are invaluable -- if we examine them in the right way. Someone at SIGCSE pointed out that negative examples help us to create a framework in which to validate hypotheses. This is how science works from failed experiments. This idea isn't news to those of us who like to traffic in patterns. Bad attempts put us on the road to a pattern. We discover the pattern by using the negative example to identify the context in which our problem lies and the forces that drive a solution. Sometimes a bad idea really was a good idea -- had it only been applied in the proper context, where the forces at play would have resolved themselves differently. We usually only see these patterns after looking at many, many examples, both good and bad, and figuring out what makes them tick.

A lot of CS instruction aims to expose students to lots of examples, in class and in programming assignments. Too often, though, we leave students to discover context and forces on their own, or to learn them implicitly. This is one of the motivations of my work on elementary patterns: to help guide students in the process of finding patterns in their own and other people's experiences.

March 26, 2007 8:14 PM

The End of a Good Blog

Between travels and work and home life, I've fallen way behind in reading my favorite blogs. I fired up NetNewsWire Lite this afternoon in a stray moment just before heading home and checked my Academic CS channel. When I saw the blog title "The End", I figured that complexity theorist Lance Fortnow had written about the passing of John Backus. Sadly, though, he has called an end to his blog, Computational Complexity. Lance is one of the best CS bloggers around, and he has taught me a lot about the theory of computation and the world of theoretical computer scientists. Theory was one of my favorite areas to study in graduate school, but I don't have time to keep up on its conferences, issues, and researchers full time. These days I rely on the several good blogs in this space to keep me abreast. With Computational Complexity's demise, I'll have one less source to turn to. Besides, like the best bloggers, Lance was a writer worth reading regardless of his topic.

I know how he must feel, though... His blog is 4.5 years and 958 entries old, while mine is not yet 3 years old and still shy of 500 posts. There are days and weeks where time is so scarce that not writing becomes easy. Not writing becomes a habit, and pretty soon I almost have to force myself to write. So far, whenever I get back to writing regularly, the urge to write re-exerts itself and all is well with Knowing and Doing again. Fortunately, I still feel the need to write as I learn. But I can imagine a day when the light will remain dim, and writing out of obligation will not seem right.

Fortunately, we all still have good academic CS blogs to read, among my favorites being the theory blogs Ernie's 3D Pancakes and The Geomblog. But I'll miss reading Lance's stuff.

March 25, 2007 9:35 AM

Another Way Life is Like Running

Seven miles on heavy legs this morning had these passages on my mind:

To me it was a relief just to realize it might be ok to be discontented. The idea that a successful person should be happy has thousands of years of momentum behind it. If I was any good, why didn't I have the easy confidence winners are supposed to have? But that, I now believe, is like a runner asking "If I'm such a good athlete, why do I feel so tired?" Good runners still get tired; they just get tired at higher speeds. ...

If you feel exhausted, it's not necessarily because there's something wrong with you. Maybe you're just running fast.

As a runner trying to work back into shape, I appreciate this reminder. As a person trying to be a good husband and father, I appreciate it. As a student of computing and programming who faces an unending flow of new knowledge to be gained, I appreciate it. As a department head who can't seem to get off the treadmill of daily grind, I appreciate it.

You should read the whole piece in which these words appear, another essay from the wisdom of Paul Graham. Don't worry; it's not about running.

Oh, and as for seven miles on heavy legs this morning: The sun is out, the temperature is up, and spring rains fell overnight. However slow I felt, the run was a glorious reminder that spring has returned.

March 22, 2007 6:53 PM

Patterns in Space and Sound -- Merce Cunningham

A couple of nights ago I was able to see a performance by the Merce Cunningham Dance Company here on campus. This was my first exposure to Cunningham, who is known for his exploration of patterns in space and sound. My knowledge of the dance world is limited, but I would call this "abstract dance". My wife, who has some background in dance, might call it something else, but not "classical"!

The company performed two pieces for us. The first was called eyeSpace, and it seemed the more avant garde of the two. The second, called Split Sides, exemplifies Cunningham's experimental mindset quite well. From the company's web site:

Split Sides is a work for the full company of fourteen dancers. Each design element was made in two parts, by one or two artists, or, in the case of the music, by two bands. The order in which each element is presented is determined by chance procedure at the time of the performance. Mathematically, there are thirty-two different possible versions of Split Sides.

And a mathematical chance it was. At intermission, the performing arts center's director came out on stage with five people, most local dancers, and a stand on which to roll a die. Each of the five assistants in turn rolled the die, to select the order of the five design elements in question: the pieces, the music, the costumes, the backgrounds, and a fifth element that I've forgotten. This ritual heightened the suspense for the audience, even though most of us probably had never seen Split Sides before, and must have added a little spice for the dancers, who do this piece on tour over and over.
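The thirty-two in the company's description is just 2 x 2 x 2 x 2 x 2: five design elements, each with two halves that can be presented in either order. Here is a minimal sketch that enumerates the possibilities. The element labels and the "A"/"B" names for the halves are my own, for illustration only, and the fifth element gets a placeholder name because I don't remember what it was.

```python
from itertools import product

# Labels are illustrative assumptions. The fifth design element is a
# placeholder, since the post does not name it.
ELEMENTS = ["dance", "music", "costumes", "backgrounds", "fifth element"]

# Each element was made in two halves; chance decides which half goes first.
ORDERS = [("A", "B"), ("B", "A")]


def all_versions(elements):
    """Return every assignment of a half-ordering to each design element."""
    return [
        dict(zip(elements, choice))
        for choice in product(ORDERS, repeat=len(elements))
    ]


versions = all_versions(ELEMENTS)
print(len(versions))  # 2 ** 5 == 32
```

Each roll of the die at intermission picks one of the two orderings for one element, so five rolls select exactly one of these thirty-two dictionaries.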

In the end, I preferred the second dance and the second piece of music (by Radiohead), but I don't know to what extent this enjoyment derived from one of the elements or the two together. Overall, I enjoyed the whole show quite a bit.

Not being educated in dance, my take on this sort of performance is often different from the take of someone who is. In practice, I find that I enjoy abstract dance even more than classical. Perhaps this comes down to me being a computer scientist, an abstract thinker who enjoys getting lost in the patterns I see and hear on stage. A lot of fun comes in watching the symmetries being broken as the dance progresses and new patterns emerge.

Folks trained in music may sometimes feel differently, if only because the patterns we see in abstract dance are not the patterns they might expect to see!

Seeing the Merce company perform reminded me of a quote about musician Philip Glass, which I ran across in the newspaper while in Carefree for ChiliPLoP:

... repetition makes the music difficult to play.

"As a musician, you look at a Philip Glass score and it looks like absolutely nothing," says Mark Dix, violist with the Phoenix Symphony, who has played Glass music, including his Third String Quartet.

"It looks like it requires no technique, nothing demanding. However, in rehearsal, we immediately discovered the difficulty of playing something so repetitive over so long a time. There is a lot of room for error, just in counting. It's very easy to get lost, so your concentration level has to be very high to perform his music."

When we work in the common patterns of our discipline -- whether in dance, music, or software -- we free our attention to focus on the rest of the details of our task. When we work outside those patterns, we are forced to attend to details that we have likely forgotten even existed. That may make us uncomfortable, enough so that we return to the structure of the pattern language we know. That's not necessarily a bad thing, for it allows us to be productive in our work.

But there can be good in the discomfort of the broken pattern. One certainly learns to appreciate the patterns when they are gone. The experience can remind us why they are useful, and worth whatever effort they may require. The experience can also help us to see the boundaries of their usefulness, and maybe consider a combination, or see a new pattern.

Another possible benefit of working without the usual patterns is hidden in Dix's comments above. Without the patterns, we have to concentrate. This provides a mechanism whereby we attend to details and hone our concentration, our attention to detail. I think it also allows us to focus on a new technique. Programming at the extremes, without an if-statement, say, forces you to exercise the other techniques you know. The result may be that you are a better user of polymorphism even after you return to the familiar patterns that include imperative selection.
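To make the if-free exercise concrete, here is a small sketch of my own, not taken from any particular course or book. The shape classes and the total_area function are hypothetical names chosen for illustration. The selection that an if-statement would make on a type tag is made instead by the objects themselves, through dynamic dispatch.

```python
import math


class Circle:
    def __init__(self, radius):
        self.radius = radius

    def area(self):
        return math.pi * self.radius ** 2


class Rectangle:
    def __init__(self, width, height):
        self.width = width
        self.height = height

    def area(self):
        return self.width * self.height


def total_area(shapes):
    # No "if shape is a circle ... else ..." here. Each object answers the
    # area() message in its own way, so the choice is made by dispatch.
    return sum(shape.area() for shape in shapes)


print(total_area([Rectangle(2, 3), Rectangle(1, 1)]))  # 7
```

Adding a new kind of shape means adding a class, not editing a chain of conditionals, which is the habit the exercise is meant to build.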

And I can still enjoy abstract dance and music as an outsider.

There is another, more direct connection between Cunningham's appearance and software. He has worked with developers to create a new kind of choreography software called DanceForms 1.0. While his troupe was in town, they asked the university to try to arrange visits with computer science classes to discuss their work. We had originally planned for them to visit our User Interface Design course and our CS I course (which has a media computation theme), but schedule changes on our end prevented that. I had looked forward to hearing Cunningham discuss what makes his product special, and to see how they had created "palettes of dance movement" that could be composed into dances. That sounds like a language, even if it doesn't have any curly braces.

Posted by Eugene Wallingford | Permalink | Categories: Patterns, Software Development, Teaching and Learning

March 21, 2007 4:35 PM

END DO

What prompted me to finally write about Frances Allen winning the Turing Award was a bit of sad news. One of the pioneers of computing, John Backus, has died. Like Allen, Backus also worked in the area of programming languages. He is most famous as the creator of Fortran, as reported in the Times piece:

Fortran changed the terms of communication between humans and computers, moving up a level to a language that was more comprehensible by humans. So Fortran, in computing vernacular, is considered the first successful higher-level language.

I most often think of Backus's contribution in terms of the compiler for Fortran. His motivation to write the compiler and design the language was that shared by many computer scientists through history: laziness. Here is my favorite quote from the CNN piece:

"Much of my work has come from being lazy," Backus told Think, the IBM employee magazine, in 1979. "I didn't like writing programs, and so, when I was working on the IBM 701 (an early computer), writing programs for computing missile trajectories, I started work on a programming system to make it easier to write programs."

Work on a programming system to make it easier to write programs... This is the beginning of computer science as we know it!

Backus's work laid the foundation for Fran Allen's work; in fact, her last big project was called PTRAN, an homage to Fortran that stands for Parallel TRANslation.

One of my favorite Backus papers is his Turing Award essay, Can Programming Be Liberated from the von Neumann Style? (subtitled: A Functional Style and its Algebra of Programs). After all his years working on language for programmers and translators for machines, he had reached a conclusion that the mainstream computing world is still catching up to, that a functional programming style may serve us best. Every computer scientist should read it.

This isn't the first time I've written of the passing of a Turing Award winner. A couple of years ago, I commented on Kenneth Iverson, also closely associated with his own programming language, APL. Ironically, APL offers a most extreme form of liberation from the von Neumann machine. Thinking of Iverson and Backus together at this time seems especially fitting.

The Fortran programmers among us know what the title means. RIP.

March 21, 2007 2:03 PM

Turing Award History: Fran Allen

For the last month or so, I have been meaning to write about a historic announcement in the computing world, that of Frances Allen receiving the Turing Award. This is the closest thing to a Nobel Prize that there is in computing. The reason that this announcement is historic? Allen is the first woman to win a Turing. Women have long made seminal contributions to computer science, something that most computer scientists know and appreciate. But it's a milestone when these contributions receive prestigious and public acknowledgment. You can also listen to a short interview with John Hennessy, the well-known computer scientist who is now president of Stanford, in which he puts Allen's award into context.

In one of her interviews since receiving the award, Allen reminds us that women these days are underrepresented in computing compared to when she began her career in the 1950s. Women were better represented in computing than in most other science and technology disciplines up through 1985 or 1990, at which time their numbers began to plummet -- precipitously. This is a trend that most of us in computing would like to reverse, and one that Allen has been working to correct since retiring from IBM five years ago.

Let's not allow the social implication of Allen's award to steal attention from the quality and importance of the technical work she did. The chair of the Turing Award committee said, "Fran Allen's work has led to remarkable advances in compiler design and machine architecture that are at the foundation of modern high-performance computing." Her primary contributions came in the areas of compiler design and program optimization, techniques for making compilers that produce better executable code. Her early work is the theoretical foundation for modern optimizers that work independent of particular source languages and target machines. Her later work focused on optimization of programs for parallel computers, which contributed to high-performance computing for weather simulation and bioinformatics. I found one of her seminal papers in the ACM digital library: Control Flow Analysis; check it out.

Thinking about this award helps us to remember the "applied" value that derives from basic scientific research in an area as theoretical as compiler optimization can be. By making it possible to write really good compilers, we make it possible to create higher-level programming languages. This makes programmers more productive and also widens the potential population of programmers. By advancing parallel high-speed computing, we make it possible to study much larger problems and thus address important social and scientific questions. This latter point is an important one in the context of trying to make computing more attractive to women, who seem to be more interested in careers that "advance the public good" in obvious ways. Allen herself has stated her hope that high-performance computing's role in medical and scientific research will attract women back into our profession.

March 15, 2007 4:55 PM

Writing about Doing

Last week I ran across this quote by noted rocker Elvis Costello:

Writing about music is like dancing about architecture -- it's really a stupid thing to want to do.

My immediate reaction was an intense no. I'm not a dancer, so my reaction was almost exclusively to the idea of writing about music or, by extension, other creative activities. Writing is the residue of thinking, an outward manifestation of the mind exploring the world. It is also, we hope, occasionally a sign of the mind growing, and those who read can share in the growth.

I don't imagine that dancing is at all like writing in this respect.

Perhaps Costello meant specifically writing about music and other pure arts. But I did study architecture for a while, and so I know that architecture is not a pure art. It blends the artistic and purely creative with an unrelenting practical element: human livability. People have to be able to use the spaces that architects create. This duality means that there are two levels at which one can comment on architecture, the artistic and the functional. Costello might not think much of people writing about the former, but he may allow for the value in people writing about the latter.

I may be overthinking this short quote, but I think it might have made more sense for Costello to have made this analogy: "Writing about music is like designing a house about dancing ...". But that doesn't have any of the zip of his original!

I can think of one way in which Costello's original makes some sense. Perhaps it is taken out of context, and implicit in the context is the notion of only writing about music. When someone is only a critic of an art form, and not a doer of the art form, there is a real danger of becoming disconnected from what practitioners think, feel, and do. When the critic is disconnected from the reality of the domain, the writing loses some or all of its value. I still think it is possible for an especially able mind to write about without doing, but that is a rare mind indeed.

What does all this have to do with a blog about software and teaching? I find great value in many people's writing about software and about teaching. I've learned a lot about how to build more expressive, more concise, and more powerful software from people who have shared their experiences writing such software. The whole software patterns movement is founded upon the idea that we should share our experiences of what works, when and why. The pedagogical patterns community and the SIGCSE community do the same for teachers. Patterns really do have to be founded in experience, so "only" writing patterns without practicing the craft turns out to be a hollow exercise for both the reader and the writer, but writing about the craft is an essential way for us to share knowledge. I think we can share knowledge both of the practical, functional parts of software and teaching and of the artistic element -- what it is to make software that people want to read and reuse, to make courses that people want to take. In these arts, beauty affects functionality in a way that we often forget.

I don't yet have an appetite for dancing about software, but my mind is open on the subject.

March 13, 2007 1:55 PM

Yannis's Law on Programmer Productivity

I'm communications chair for OOPSLA 2007 and was this morning updating the CFP for the research papers track, adding URLs to each member of the program committee. Chair David Bacon has assembled quite a diverse committee, in terms of affiliation, continent, gender, and research background. While verifying the URLs by hand, I visited Yannis Smaragdakis's home page and ran across his self-proclaimed Yannis's Law. This law complements Moore's Law in the world of software:

Programmer productivity doubles every 6 years.

I have long been skeptical of claims that there is a "software crisis", that as hardware advances give us incredibly more computing power our ability to create software grows slowly, or even stagnates. When I look at the tools that programmers have today, and at what students graduate college knowing, I can't take seriously the notion that programmers today are less productive than those who worked twenty or more years ago.

We have made steady advances in the tools available to mainstream programmers over the last thirty years, from frameworks and libraries, to patterns, to integrated environments such as .NET and Eclipse, down to the languages we use, like Perl, Ruby, and Python. All help us to produce more and better code in less time than we could manage even back when I graduated college in the mid-1980s.

Certainly, we produced far fewer CS graduates and employed far fewer programmers thirty years ago, and so we should not be surprised that that cohort was -- on average -- perhaps stronger than the group we produce today. But we have widened the channel of students who study CS in recent decades, and these kids do all right in industry. When you take this democratization of the pool of programmers into account, I think we have done all right in terms of increasing productivity of programmers.

I agree with Smaragdakis's claim that a decent programmer working with standard tools of the day should be able to produce Parnas's KWIC index system in a couple of hours. I suspect that a decent undergrad could do so as well.
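For a sense of how small the problem looks with today's tools, here is a minimal sketch of a KWIC (Key Word In Context) index in Python. It follows the usual statement of the problem: take a set of lines, generate every circular shift of each line, and list all the shifts in alphabetical order. The function names are my own, and the sketch makes simplifying assumptions that a real system would not: words are split on whitespace, sorting ignores case, and everything fits in memory.

```python
def circular_shifts(line):
    """Return every rotation of the words in a line, one per starting word."""
    words = line.split()
    return [" ".join(words[i:] + words[:i]) for i in range(len(words))]


def kwic(lines):
    """Return all circular shifts of all lines, sorted alphabetically."""
    shifts = [shift for line in lines for shift in circular_shifts(line)]
    return sorted(shifts, key=str.lower)


for entry in kwic(["the quick fox", "a dog"]):
    print(entry)
```

The hard parts of Parnas's paper were never the code itself but the decisions about how to decompose it into modules. Still, the fact that the core fits in a dozen lines says something about how far our languages and libraries have come.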

Building large software systems is clearly a difficult task, one usually dominated by human issues and communication issues. But we do our industry a disservice when we fail to see how far we have come.

March 12, 2007 12:34 PM

SIGCSE Day 3: Jonathan Schaeffer and the Chinook Story

The last session at SIGCSE was the luncheon keynote address by Jonathan Schaeffer, who is a computer scientist at the University of Alberta. Schaeffer is best known as the creator of Chinook, a computer program that in the mid-1990s became the second-best checkers player in the history of the universe and that, within three to five months, will solve the game completely. If you visit Chinook's page, you can even play a game!

I'm not going to write the sort of entry that I wrote about Grady Booch's talk or Jeannette Wing's talk, because frankly I couldn't do it justice. Schaeffer told us the story of creating Chinook, from the time he decided to make an assault on checkers, through Chinook's rise and monumental battles with world champion Marion Tinsley, up to today. What you need to do is to go read One Jump Ahead, Schaeffer's book that tells the story of Chinook up to 1997 in much more detail. Don't worry if you aren't a computer scientist; the book is aimed at a non-technical audience, and in this I think Schaeffer succeeds. In his talk, he said that writing the book was the hardest thing he has ever done -- harder than creating Chinook itself! -- because of the burden of trying to make his story interesting and understandable to the general reader.

If you are a CS professor or student, you'll still learn a lot from the book. Even though it is non-technical, Schaeffer does a pretty good job introducing the technical challenges that faced his team, from writing software to play the game in parallel across as many processors as he could muster, to building databases of the endgame positions so that the program could play endings perfectly. (A couple of these databases are large by today's standards. Just try to recall how large a billion billion entry table must have seemed in 1994!) He also helps us to feel what he must have felt when non-intellectual problems arose, such as a power failure in the lab that had been computing a database for weeks, or a mix-up at the hotel where Chinook was playing its world championship match that resulted in oven-like temperatures in the playing room. This snafu may account for one of Chinook's losses in that match.

As a computer scientist, what I found most compelling about the talk was reading about the dreams, goals, and daily routine of a regular computer scientist. Schaeffer is clearly a bright and talented guy, but he tells his story as one of an Everyman -- a guy with a big ego who obsessively pursued a research goal, whose goal came to have as much of a human element as a technical one. He has added to our body of knowledge, as well as our lore. I think that non-technical readers can appreciate the human intrigue in the Chinook-versus-Tinsley story as well. It's a thriller of a sort, with no violence in its path.

I knew a bit about checkers before I read the book. Back in college, I was trying to get my roommate to join me in a campus-wide chess tournament that would play out over several weeks. I was a chessplayer, but he was only casual, so he decided one way to add a bit of spice was for both of us to enter the checkers part of the same tournament. Neither of us knew much about checkers other than how to move the pieces. The dutiful students that we were, we went to Bracken Library and checked out several books on checkers strategy and studied them before the tournament. That's where I learned that checkers has a much narrower search space than chess, and that many of its critical variations are incredibly narrow and also incredibly deep. This helped me to appreciate how Tinsley, the human champion, once computed a variation over 40 moves long at the table while playing Chinook. (Schaeffer did a wonderful job explaining the fear this struck in him and his team: How can we beat this guy? He's more of a machine than our program!)

That said, knowing how to play checkers will help as you read the book, but it's not essential. If you do know, dig out a checkers board and play along with some of the game scores as you read. To me, that added to the fun.

Reading the book is worth the effort if only to learn about Chinook's nemesis, Marion Tinsley (Chinook page | Wikipedia page), the 20th-century checkers player (and math Ph.D. from Ohio State) who until the time of his death was the best checkers player in the world, almost certainly the best checkers player in history, and in many ways unparalleled by any competitor in any other game or sport I know of. Until his first match against Chinook, Tinsley lost only 3 games in 42 years. He retired through the 1960s because he was so much better than his competition that competition was no fun. The appearance of Chinook on the scene, rather than bothering or worrying him (as it did most in the checkers establishment, and as the appearance of master-level chess programs did at first in the chess world), reinvigorated Tinsley, as it now gave him an opponent that played at his level and, even better, had no fear of him. By Tinsley's standard, guys like Michael Jordan, Tiger Woods, and even Lance Armstrong are just part of the pack in their respective sports. Armstrong's prolonged dominance of the Tour de France is close, but Tinsley won every match he played and nearly every game, not just in the single premier event each year.

The book is good, but the keynote talk was compelling in its own way. Schaeffer isn't the sort of electric speaker that holds his audience by force of personality. He really seemed like a regular guy, but one telling the story of his own passions, in a way that gripped even someone who knew the ending all the way to the end. (His t-shirt with pivotal game positions on both front and back was a nice bit of showmanship!) And one story that I don't remember from the book was even better in person: He talked about how he used to lie in bed next to his wife and fantasize... about Marion Tinsley, and beating him, and how hard that would be. One night his wife looked over and asked, "Are you thinking about him again?"

Seeing this talk reminded me of why I love AI and loved doing AI, and why I love being a computer scientist. There is great passion in being a scientist and programmer, tackling a seemingly insurmountable problem and doggedly fighting it to the end, through little triumphs and little setbacks along the way. Two thumbs up to the SIGCSE committee for its choice. This was a great way to end SIGCSE 2007, which I think was one of the better SIGCSEs in recent years.

March 10, 2007 12:39 PM

SIGCSE This and That

Here are some miscellaneous ideas from throughout the conference...

Breadth in Year 1

On Friday, Jeff Forbes and Dan Garcia presented the results of their survey of introductory computer science curricula across the country. The survey was methodologically flawed in many ways, which makes it not so useful for drawing any formal conclusions. But I did notice a couple of interesting ways that schools arrange their first-year courses. For instance, Cal Tech teaches seven different languages in their intro sequence. Students must take three -- two required by the department, and a third chosen from a menu of five options. Talk about having students with different experiences in the upper-division courses! I wonder how well their students learn all of these languages (some are small, like Scheme and Haskell), and I wonder how well this would work at other schools.

Many Open Doors

In the same session, I learned that Georgia Tech offers three different versions of CS1: the standard course for CS majors, a robotics-themed course for engineers, and the media computation course that I adopted last fall for my intro course. Even better, they let CS majors take any of the CS1s to satisfy their degree requirement.

This is the sort of first-year curriculum that we are moving to at UNI. For a variety of reasons, we have had a hard time arriving at a common CS1/CS2 sequence that satisfies all of our faculty. We've had parallel tracks in Java and C/C++ for the last few years, and we've decided to make this idea of alternate routes into the major a feature of our program, rather than a curiosity (or a bug!). Next year, we will offer three different CS1/CS2 sequences. Our idea is that with "many open doors", more different kinds of students may find what appeals to them about CS and pursue a major or minor. Recruitment, retention, faculty engagement -- I have high hopes that this broadening of our CS1 options will help our department get better.

No Buzz

Last year, the buzz at SIGCSE was "Python rising". That seemed a natural follow-up to SIGCSE 2005, where the buzz seemed to be "Java falling". But this year, neither of these trends seems to have gained steam. Python is out there seeing some adoptions, but Java remains strong, and it doesn't seem to be going anywhere fast.

I don't feel a buzz at SIGCSE this year. The conference has been useful to me in many ways, and I've enjoyed many sessions. But there doesn't seem to be energy building behind any particular something that points to a different sort of future.

That said, I do notice the return of games to the world of curriculum. Paper sessions on games. Special sessions on games. Game development books at every publisher's booth. (Where did they come from? Books take a long time to write!) Even still, I don't feel a buzz.

The idea that causes the most buzz for me personally is the talk of computational thinking, and what that means for computer science as a major and as a discipline for all.

RetroChic CS

I am sitting in a session on nifty assignments just now. The assignments have ranged from nifty to less so, but the last takes us all back to the '70s and '80s. It is Dave Reed's explorations with ASCII art, modernized as ASCIImations. He started his talk with a quote that seems a fitting close to this entry:

Boring is the new nifty.

-- Stuart Reges, 2006

Up next: the closing luncheon and keynote talk by Jonathan Schaeffer, of Chinook fame.

March 10, 2007 10:18 AM

SIGCSE Day 1, Continued: Teaching Honors

In the Teaching Tips session on Thursday, Stuart Reges suggested offering an honors section of CS1. I agree. Many people think that teaching honors students requires more work, or more material, but it doesn't really. It does require deeper engagement, because the students will want to go deeper. From the instructor's perspective, the result is better interaction and the opportunity to talk and be more like a real computer scientist. I've worked with honors students before, and it is a blast when you get even an ordinary group. Unfortunately, we only recently started an honors program at my university, and in that time CS1 enrollments have been too small to afford an honors section.

David Gries commented on Stuart's suggestion, saying something to the effect, "It seems to me that it's the weaker students who need this experience, not the better ones." I also agree with this sentiment. But unless Gries means that we should never create targeted opportunities for our better students, I don't think that my agreement with both is a conflict.

Teaching honors students offers an instructor a great opportunity to experiment with new ideas. I may not want to risk trying a different way of teaching -- say, all exercise-driven, no lecture -- with a group of fifty students in a regular section. If things go wrong, I may have a hard time recovering the semester, and the average and weaker students are the ones with the most to lose. But in a smaller section of more capable students, I can try it out, confident that the students will help me smooth off the rough edges of my new approach and identify the places I need to rethink.

When I have a new idea worked out, I can then transfer it into my regular sections with more personal comfort -- and reasonable assurance that I won't be harming any students in the process of my own learning!

In the worst case of an approach "failing", honors students are better able to roll with the punches and recover with me. Every experienced teacher knows that there are students who will learn what they need in a course no matter what the instructor does, by working on their own, thinking about the important issues, and asking questions. This is the sort of student one usually sees in an honors section.

As Stuart points out, one of the joys of teaching an honors section is that you can discuss whatever you find interesting -- say, a book on computing or a computer scientist, or a current topic in computing that you can relate back to your course. To be honest, I do this in most of my courses anyway, from CS1 to senior project courses. I have to be aware of time constraints imposed by the curriculum, so I can't wax poetic any time I like. (But as Owen Astrachan told us on Day 1, we should not paralyze ourselves with the need to cover more, more, more!) Some of my favorite course sessions in Programming Languages, Algorithms, Object-Oriented Programming, Intelligent Systems, and, yes, CS1 have resulted directly from reading I've done outside of class -- and from attending sessions at OOPSLA, PLoP, and SIGCSE.

All of our students need a good experience. Teaching honors -- or as if you were teaching honors -- is one way to move in that direction.

March 09, 2007 9:11 PM

SIGCSE Day 2: Read'n', Writ'n', 'Rithmetic ... and Cod'n'

Back in 2005, Grady Booch gave a masterful invited talk to close OOPSLA 2005, on his project to preserve software architectures. Since then, he has undergone open-heart surgery to repair a congenital defect, and it was good to see him back in hale condition. He's still working on his software architecture project, but he came to SIGCSE to speak to us as CS educators. If you read Grady's blog, you'll know that he blogged about speaking to us back on March 5. (Does his blog have permalinks to sessions that I am missing?) There, he said:

My next event is SIGCSE in Kentucky, where I'll be giving a keynote titled Read'n, Writ'n, 'Rithmetic...and Code'n. The gap between the technological haves and have-nots is growing and the gap between academia and the industries that create these software-intensive systems continues to be much lamented. The ACM has pioneered recommendations for curricula, and while there is much to praise about these recommendations, that academia/industry gap remains. I'll be offering some observations from industry why that is so (and what might be done about it).

And that's what he did.

A whole generation of kids has grown up not knowing a time without the Internet. Between the net, the web, iPods, cell phones, video games with AI characters... they think they know computing. But there is so much more!

Grady recently spent some time working with grade-school students on computing. He did a lot of the usual things, such as robots, but he also took a step that "walked the walk" from his OOPSLA talk -- he showed his students the code for Quake. Their eyes got big. There is real depth to this video game thing!

Grady is a voracious reader, "especially in spaces outside our discipline". He is a deep believer in broadening the mind by reading. This advice doesn't end with classic literature and seminal scientific works; it extends to the importance of reading code. Software is everywhere. Why shouldn't we read it to learn to understand our discipline? Our CS students will spend far more of their lives reading code than writing it, so why don't we ask them to read it? It is a great way to learn from the masters.

According to a back-of-the-envelope calculation by Grady and Richard Gabriel, 38 billion lines of new and modified code are created each year. Code is everywhere.

While there may be part of computer science that is science, that is not what Booch sees on a daily basis. In industry, computing is an engineering problem, the resolution of forces in constructing an artifact. Some of the forces are static, but most are dynamic.

What sort of curriculum might we develop to assist with this industrial engineering problem? Booch referred to the IEEE Computer Society's Software Engineering Body of Knowledge (SWEBOK) project in light of his own software architecture effort. He termed SWEBOK "noble but failed", because the software engineering community was unable to reach a consensus on the essential topics -- even on the glossary of terms! If we cannot identify the essential knowledge, we cannot create a curriculum to help our students learn it.

He then moved on to curriculum design. As a classical guy, he turned to the historical record, the ACM 1968 curriculum recommendations. Where were we then?

Very different from now. A primary emphasis in the '68 curriculum was on mathematical and physical scientific computing -- applications. We hadn't laid much of the foundation of computer science at that time, and the limitations of both the theoretical foundations and physical hardware shaped the needs of the discipline and thus the curriculum. Today, Grady asserts that the real problems of our discipline are more about people than physical limits. Hardware is cheap. Every programmer can buy all the computing power she needs. The programmer's time, on the other hand, is still quite expensive.

What about ACM 2005? As an outsider, Grady says, good work! He likes the way the problem has been decomposed into categories, and the systematic way it covers the space. But he also asks about the reality of university curricula; are we really teaching this material in this way?

But he sees room for improvement and so offered some constructive suggestions for different ways to look at the problem. For example, the topical categories seem limited. The real world of computing is much more diverse than our curriculum. Consider...

Grady has worked with SkyTV. Most of their software, built in a web-centric world, is less than 90 days old. Their software is disposable! Most of their people are young, perhaps averaging 28 years old or so.

He has also worked with people at the London Underground. Their software is old, and their programmers are old (er, older). They face a legacy problem like no other, both in their software and in their physical systems. I am reminded of my interactions with colleagues from Lucent, who work with massive, old physical switching systems driven by massive, old programs that no one person can understand.

What common theme do SkyTV and London Underground folks share? Building software is a team sport.

Finally, Grady looked at the ACM K-12 curriculum guidelines. He was so glad to see them, so glad to see that we are teaching the ubiquitous presence of computing in contemporary life to our young! But we are showing them only the fringes of the discipline -- the applications and the details of the OS du jour. Where do we teach them our deep ideas, the beauty and nobility of our discipline?

As he shifted into the home stretch of the talk, Grady pointed us all to a blog posting he'd come across called The Missing Curriculum for Programmers and High Tech Workers, written by a relatively recent Canadian CS grad working in software. He liked this developer's list and highlighted for us many of the points that caught his fancy as potential modifications to our curricula, such as:

- Sometimes, working harder or longer won't get the job done.

- Learn a scripting language!

- Documentation is essential, but it must be tied to code.

- Learn the patterns of organizational behavior.

- Learn about many other distinctly human elements of the profession, like meetings (how to stay awake, how to avoid them), hygiene (friend or foe?), and planning for the future.

One last suggestion for our consideration involved his Handbook of Software Architecture. There, he has created categories of architectures that he considers the genres of our discipline. Are these genres that our students should know about? Here is a challenging thought experiment: what if these genres were the categories of our curriculum guidelines? I think this is a fascinating idea, even if it ultimately failed. How would a CS curriculum change if it were organized exclusively around the types of systems we build, rather than mostly on the abstractions of our discipline? Perhaps that would misrepresent CS as science, but what would it mean for those programs that are really about software development, the sort of engineering that dominates industry?

Grady said that he learned a few lessons from his excursion into the land of computing curriculum about what (else) we need to teach. Nearly all of his lessons are the sort of things non-educators seem always to say to us: Teach "essential skills" like abstraction and teamwork, and teach "metaskills" like the ability to learn. I don't diminish these remarks as not valuable, but I don't think these folks realize that we do actually try to teach these, but they are hard to learn, especially in the typical school setting, and so hard to teach. We can address the need to teach a scripting language by, well, adding a scripting language to the curriculum in place of something less relevant these days. But newbies in industry don't abstract well because they haven't gotten it yet, not because we aren't trying.

The one metaskill on his list that we really shouldn't forget, but sometimes do, is "passion, beauty, joy, and awe". This is what I love about Grady -- he considers these metaskills, not squishy non-technical effluvium. I do, too.

During his talk, Grady frequently used the phrase "right and noble" to describe the efforts he sees folks in our industry making, including in CS education. You might think that this would grow tiresome, but it didn't. It was uplifting.

It is both a privilege and a responsibility, says Grady, to be a software developer. It is a privilege because we are able to change the world in so many, so pervasive, so fundamental ways. It is a responsibility for exactly the same reason. We should keep this in mind, and be sure that our students know this, too.

At the end of his talk, he made one final plug that I must relay. He says that patterns are the coolest, most important thing to have happened in the software world over the last decade. You should be teaching them. (I do!)

And I can't help passing on one last comment of my own. Just as he did at OOPSLA 2005, before giving his talk he passed by my table and said hello. When he saw my iBook, he said almost exactly the same thing he said back then: "Hey, another Apple guy! Cool."

Posted by Eugene Wallingford | Permalink | Categories: Computing, Software Development, Teaching and Learning

March 09, 2007 4:02 PM

SIGCSE Day 1: Computational Thinking

I have written before about Chazelle's efforts to pitch computer science as an algorithmic science that matters to everyone, but I have been remiss in not yet writing about Jeannette Wing's Communications of the ACM paper on Computational Thinking. (Her slides are also available online.) Fortunately, there was a panel session on computational thinking today at which Wing and several like-minded folks shared their ideas and courses.

Wing's dream is that, like the three Rs, every child learns to think like a computer scientist. As Alan Kay keeps telling us, our discipline fundamentally changes how people think and write -- or should. Wing is trying to find ways to communicate computational thinking and its importance to everyone. In this post, I summarize some of her presentation.

Two "A"s of computational thinking distinguish it from other sciences:

- abstraction: Computational thinkers work at multiple levels of abstraction. Other scientists do, too, but their bread-and-butter is at a single layer. Computational thinkers work at multiple levels at the same time.

- automation: Computational thinkers mechanize their abstractions. This gives us two more "A"s -- the ability and audacity to scale up rapidly, to build large systems.

Wing gave a long, long list of examples of computational thinking. A few will sound familiar to readers of this and similar blogs: We ask and answer these questions: How difficult is it to solve this problem? How can we best solve it? We reformulate problems in terms of other problems that we already understand. We choose ways to represent data and process. We think of data as code, code as data. We look for opportunities to solve problems by decomposing them and by applying abstractions.

If only we look, we can see evidence of the effects of computational thinking in other disciplines. There are plenty of surface examples, but look deeper. Machine learning has revolutionized statistics; math and statistics departments are hiring specialists in neural nets and data mining into their statistics positions. Biology is beginning to move beyond the easy computational problems, such as data mining, to the modeling of biological processes with algorithms. Game theory is a central mechanism in economics.

Computational thinking is bigger than what most people think of as computer science. It is about conceptualizing, not programming; about ideas, not artifacts. In this regard, Wing has two messages for the general public:

- Intellectually challenging and engaging scientific problems in computer science remain to be solved. Our only limits are curiosity and creativity.

- One can major in computer science and do anything -- just like English, political science, and mathematics.

You can find more of Wing's ideas at her Computational Thinking web site.

Wing was only one participant on this panel. The other folks offered some interesting ideas as well, but her energy carried the session. The one other presentation that made my list of ideas to try was Tom Cortina's description of his cool Principles of Computation course at Carnegie Mellon. What stuck with me were some of his non-programming, non-computing examples of important computing concepts, such as:

- booking flights to give four talks over break, and then thirty-nine (the Traveling Salesman Problem and computational complexity)

- washing and drying five loads of laundry (pipelining), which even the least mathematical students can understand: you can dry one load while washing another!

- four-way stops in the road (races and deadlocks), including a great story of the difference between New York drivers (race) and Pittsburgh drivers (deadlock)
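Two of these examples reduce to small calculations, sketched here in Python. The wash and dry times are made-up numbers for illustration, and the function names are my own, not anything from Cortina's course. The laundry functions compare doing each load start to finish with overlapping the washer and dryer; the flight function counts the orders in which the talk cities could be visited, which is what makes thirty-nine talks so much harder to plan than four.

```python
from math import factorial

def sequential_minutes(loads, wash, dry):
    """Total time when each load is washed and dried before the next starts."""
    return loads * (wash + dry)

def pipelined_minutes(loads, wash, dry):
    """Total time when the washer starts the next load while the dryer runs."""
    wash_done = 0  # time at which the washer finishes the current load
    dry_done = 0   # time at which the dryer finishes the current load
    for _ in range(loads):
        wash_done += wash
        # A load can enter the dryer only when it is washed and the dryer is free.
        dry_start = max(wash_done, dry_done)
        dry_done = dry_start + dry
    return dry_done

def visit_orders(cities):
    """Number of different orders in which to visit the given number of cities."""
    return factorial(cities)

# Hypothetical times: 30 minutes to wash, 45 minutes to dry.
print(sequential_minutes(5, 30, 45))  # five loads, one at a time
print(pipelined_minutes(5, 30, 45))   # five loads, overlapped
print(visit_orders(4))                # four talks
print(visit_orders(39))               # thirty-nine talks
```

With these assumed times the pipelined schedule is limited by the slower machine, the dryer, which is the point of the laundry story.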

My department is beginning to implement courses aimed at bringing computational thinking to the broader university community, including an experimental Computational Modeling and Simulation course next fall. Perhaps we can incorporate some of these ideas into our course, and see how they play in Cedar Falls.

March 09, 2007 10:17 AM

SIGCSE Day 1: A Conference First

My roommate and I are staying in the Radisson Riverfront, which is right across the river from downtown Cincinnati at the nexus of the Ohio River and I-75. The view from our 14th-floor room is good.

So there I was, settling in after a long day at the conference. About 10 PM, the phone rings. Odd. The caller asks for me. Odd again. It's the front desk. The local police have asked all guests to come down to the lobby.

Apparently, someone had shot a bullet into a 12th-floor room. From somewhere outside.

Odd.

Down we went to the lobby. We heard lots of curious smalltalk, and no small amount of impatience at being inconvenienced by this interruption. Some commented that we hardly seemed safer all herded into one place, in front of wide glass lobby windows open to the interstate exit.

After 25 minutes or so, the chief of the Covington Police Department called us together. "Welcome to Covington!" he offered in good humor. He explained what had happened, what the police had done to investigate, and that they now believed us to be safe. He thanked us for our patience and wished us a good visit. I can imagine that he is a good sort of person to have as a police chief -- a big part of the job is communicating with people who are in varying states of distress.

The rest of the night passed without event.

Let's see if OOPSLA can top this.

March 08, 2007 8:03 PM

SIGCSE Day 1: Media Computation BoF

A BoF is a "birds of a feather" session. At many conferences, BoFs are a way for people with similar interests to get together during down time in the conference to share ideas, promote an idea, or gather information as part of a bigger project.

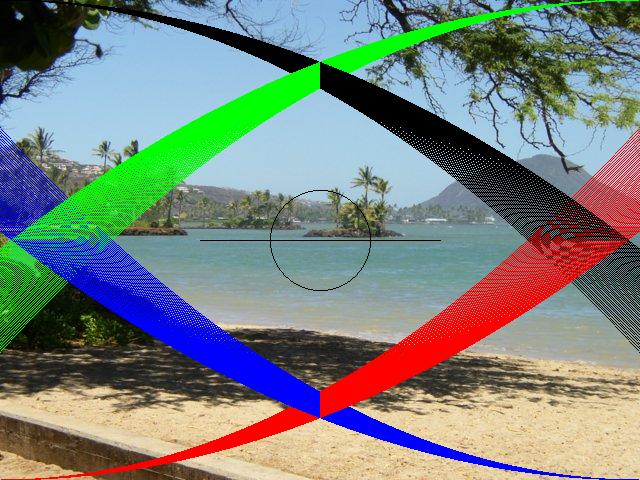

Tonight I attended a BoF on the media computation approach I used in CS I last semester. The developers of this approach, Mark Guzdial and Barbara Ericson, organized the session, called "A Media Computation Art Gallery and Discussion", to showcase work done by students in the many college and high school courses that use their approach. You can access the movies, sounds, and video shown at this on-line gallery.

The picture to the right was an entry I submitted, an image generated by a program written by my CS I student Keller McBride. This picture demonstrates the sort of creativity that our students have, just waiting to get out when given a platform. I don't know how novel the assignment itself was, but here's the idea. Throughout the course, students do almost all of their work using a set of classes designed for them, which hide many of the details of Java image and sound. In one lab exercise, students played with standard Java graphics programming using java.awt.Graphics objects. That API gives programmers some power, but it has always seemed more complicated than is truly necessary. My 8-year-old daughter ought to be able to draw pictures, too! So, while we were studying files and text processing, I decided to try an assignment that blended files, text, and images. I asked my students to write an interpreter for a simple straight-line language with commands like this:

line 10 20 300 400

circle 100 200 50

The former draws a line from (10, 20) to (300, 400), and the latter a circle whose center point is (100, 200) and whose radius is 50.
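For readers who want to see the shape of such an interpreter, here is a minimal sketch. It is written in Python rather than the Java my students used, and it stops short of drawing: it only turns each command into a description of a shape. The function names and the tuple layout are my own inventions for this sketch, not part of the course materials.

```python
# Number of integer arguments each command expects.
EXPECTED_ARGS = {"line": 4, "circle": 3}

def parse_command(text):
    """Split one command into its name and a tuple of integer arguments."""
    parts = text.split()
    name = parts[0]
    args = tuple(int(p) for p in parts[1:])
    if name not in EXPECTED_ARGS:
        raise ValueError(f"unknown command: {name}")
    if len(args) != EXPECTED_ARGS[name]:
        raise ValueError(f"{name} takes {EXPECTED_ARGS[name]} arguments")
    return name, args

def interpret(program):
    """Run a straight-line program, returning the shapes it describes."""
    shapes = []
    for raw in program.splitlines():
        if not raw.strip():
            continue  # skip blank lines between commands
        name, args = parse_command(raw)
        if name == "line":
            x1, y1, x2, y2 = args
            shapes.append(("line", (x1, y1), (x2, y2)))
        else:  # circle: center point and radius
            cx, cy, radius = args
            shapes.append(("circle", (cx, cy), radius))
    return shapes

program = """
line 10 20 300 400

circle 100 200 50
"""
shapes = interpret(program)
print(shapes)
```

A student's own command would need only a new entry in the argument table and a new branch in the loop.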

This is the sort of assignment that lies right in my sweet spot for encouraging students to think about programming languages and the programs that process them. Even a CS I student can begin to appreciate this central theme of computing!

Students were obligated to implement the line and circle commands, and then to create and implement a command of their own choosing. I expected squares and ovals, which I received, and text, which I did not. Keller implemented something I never expected: a colorSpray command that takes a density argument and then produces four sprays, one from each corner. I describe the effect as shaking a paint brush full of color and watching the color disperse in an arc from the brush, becoming less dense as the paint moves away from the shaker.

This was a CS1 course, so I wasn't expecting anything too fancy. Keller even discounted the complexity of his code in a program comment:

* My Color Spray method can only be modified by how many arcs it creates, not really fancy, but I did write it from scratch, and I think it's cool.

I do, too. The code uses nested loops, one determinate and one indeterminate, and does some neat arithmetic to generate the spray effect. This is real programming, in the sense that it requires discovering equations to build a model of something the programmer understands at a particular level. It required experimentation and thought. If all my students could get so excited by an assignment or two each semester, our CS program would be a much better place.

At the BoF, one attendee asked how he should respond to colleagues at his university who ask "Why teach this? Why not just teach Photoshop?" Mark's answer was right on mark for one audience. Great artists understand their tools and their media at a deep level. This approach helps future Photoshop users really understand the tool they will be using. And, as another attendee pointed out, many artists bump up against the edges of Photoshop and want to learn a little programming precisely so they can create filters and effects that Adobe didn't ship in their software. The answer to this question for a CS or technical audience ought to be obvious -- students can encounter so many of the great ideas of computing in this approach; it motivates many students so much more than "convert Celsius to Fahrenheit"; and it is relevant to students' everyday experiences with media, computation, and data.

The CS education community owes Mark and Barb a hand for their work developing this wonderful idea through to complete, flexible, and usable instructional materials -- in two different languages, no less! I'm looking forward to their next step, a data structures course that builds on top of the CS 1 approach. We may have a chance to try it out in Fall 2008, if our faculty approve a third offering of media computation CS I next spring.

March 08, 2007 4:36 PM

SIGCSE Day 1: Teaching Tips We Wish We'd Known...

Once again, things that could've been

brought to my attention yesterday!

-- Adam Sandler, in "The Wedding Singer"

After a long day driving and a short morning run, I joined the conference in time for the first set of parallel sessions. I was pleased to see a great way for me to start, a panel session called "Teaching Tips We Wish They'd Told Us Before We Started". That's a great title, but the list of panelists is like a Who's Who of big names from SIGCSE: Owen Astrachan, Dan Garcia, Nick Parlante, and Stuart Reges. These guys are reflective, curious, and creative, and one of the best ways to learn is to listen to reflective masters talk about what they do. The only shortcoming of the group is that they all teach at big schools, with large sections, recitations, and teaching assistants. But still!

The session was a staccato of one-minute presentations of teaching tips across a set of generic categories. Some applied to all of us: lecturing, writing exams, designing projects and homework assignments, and grading. Some applied only to those at big schools: managing staff and running recitation sections. Most were presented in an entertaining way.

(This is a bit long... Feel free at any time to skip down to the section on "meta" tips and be done.)

Lecturing

Some of these tips are ideas that I've found to work well in my own courses. I love to write code on the fly, and I've pretty much lost my reluctance to make a mistake in front of the students. Students are pretty good at understanding that everyone makes mistakes, and they like to see the prof make a mistake and then recover from it. They can learn as much from the reaction and recovery as from the particular code we write. Owen has long championed showing student code in class, but I have done so only under limited circumstances, such as code reviews of a big CS II program. I should try collecting some student code and using the document camera soon.

Nick's tips were more about behavior than practice. First, don't roll your eyes about any part of the course. If the instructor displays a bad attitude about some part of the curriculum, the student may well learn the same. What's worse in my mind is that students often learn to dismiss the instructor as limited in understanding or as a jingoistic advocate for particular ideas. That loss of respect diminishes the positive effect that an instructor can have on students.

My favorite tip from Nick was "a mistake I've made, recast as a tip": don't be lulled by your own outline. I've done this, too. I pull out the old lecture notes for the day, give them a quick scan, and think to myself, "Oh, yeah, I remember this." Then I go in the classroom and fall flat. Scanning your outline != real preparation. It is too easy to remember the surface feelings of a lecture without recapturing the details of the session, the depth of the topic, or the passion I need to make a case.

Dan's tips may reflect his relative youth. First, show video on the screen during the set-up time between sessions. He suggests videos from SIGGRAPH to motivate students to think beyond what they see in their intro courses to what computing can really be. Second, try podcasting your lectures. He pointed us to itunes.berkeley.edu, which allows you to go to Apple's iStore and download podcasts of lectures from a variety of Berkeley's courses, among them his own CS 61C Machine Structures. Podcasting may sound more difficult to do than it is... One of my colleagues has experimented with a simple digital voice recorder and the free, open-source editing software Audacity. This approach doesn't produce sound studio-quality casts, but it does make your work available to students in a new channel. Dan gave one extra tip: think about what audiocasting means for how you present. "Like this" and "here" lose their value when students can't see what you are doing!

Perhaps the best quip from this part of the panel came from the omnipresent Joe Bergin. Paraphrase: "The more finely crafted and perfected your lecture, and the message that you want to communicate, the worse you will do." Amen. That perfect lecture is perfect for you, not your students. Go into class with an idea or two to communicate, have a plan, and then be prepared for whatever may happen.

Writing Exams

Lots of good advice here. We all eventually learn important lessons in exam writing by doing it wrong, like to do every problem before putting it on an exam (every problem -- no problem is so straightforward that you can't be wrong about it, and you will), and not to overestimate what students can do in 50 minutes (guilty as charged). But there were some nice nuggets that expose the idiosyncrasies of these good teachers:

- Bring your laptop to the exam. (Dan) You can use it to display the time left in the period, or to display real-time corrections and clarifications.

- Write the rubric for your question first. (Owen) You'll learn a lot about what the question should be doing, which helps you to write it better. (If this isn't test-driven programming, I don't know what it is!)

- When writing a CS1 exam, allocate 60% of the points to mechanical questions, 30% to problem solving, and 10% to a "super hard" question. (Stuart) The first part of the exam gives every student a chance to succeed through hard work and practice; the second gives all students a chance to demonstrate the higher-order skills that go beyond mechanical tasks; and the third challenges and engages the best students without punishing those students who haven't reached that level of understanding yet.

- Use "program mystery" questions. (Stuart) These questions are like mental calisthenics that ask students to understand algorithmic ideas beyond the surface. I liked his example:

What is the effect of the expression b = (b == false) for the boolean b?

- Distribute sample questions. (Stuart) When you do, you'll get an extra week of learning out of your students. They take sample exams seriously enough to do them!

- Omit the backstory. (Nick) The complex set-up may work on a homework assignment, where students have time to think and a chance to ask for clarifications. But on an exam, the story is just cognitive overload, and as likely to interfere with student performance as to help.
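For readers who want to try Stuart's "program mystery" expression for themselves, here is a minimal sketch in Python. The panel's example reads like Java or C++; the Python spelling `False` and the function name `mystery` are my own assumptions for illustration, not something from the panel.

```python
def mystery(b: bool) -> bool:
    """Apply the panel's expression, b = (b == false), to a boolean."""
    # Comparing b to False yields True when b is False and False when b is True,
    # so the assignment flips b. It has the same effect as `b = not b`.
    b = (b == False)  # noqa: E712 (kept in this form to mirror the exam question)
    return b


if __name__ == "__main__":
    for value in (True, False):
        print(value, "->", mystery(value))
```

Running it on both possible values is the point of the exercise: students have to see past the odd-looking comparison to the simple idea underneath.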

Someone in the audience offered a practice I sometimes use. Let students take home their graded exam and correct their answers, and then give them 25% credit toward their grade. I usually only do this when exam scores are significantly lower than I or my students expected, as a way to build up morale a bit and to be sure that students have an incentive to plug the gaps in their understanding with a little extra work.

Designing Projects and Homework Assignments

Don't belittle student work. Even if you mean your comment as separate from the person, students see the belittling of their work as belittling them. And definitely take care how you comment on student work in public! Work hard to provide constructive feedback.

Use real examples wherever possible. Watch for the real world "intruding" on old standards. Owen gave the example of his old, boring "count the occurrences of words in a text file" problem, which has now become hip in the form of sites such as TagCrowd.
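Owen's "count the occurrences of words" problem is small enough to sketch here. This is only one possible take in Python, not his assignment: the function names `word_counts` and `top_words`, the rule for what counts as a word, and the sample sentence are all assumptions of mine. It works on a string rather than a file to stay self-contained.

```python
import re
from collections import Counter


def word_counts(text: str) -> Counter:
    """Count how often each word occurs, ignoring case and punctuation."""
    # Assumption: a "word" is a run of letters or apostrophes.
    words = re.findall(r"[a-z']+", text.lower())
    return Counter(words)


def top_words(text: str, n: int = 3) -> list:
    """Return the n most frequent words, the raw material for a tag cloud."""
    return word_counts(text).most_common(n)


if __name__ == "__main__":
    sample = "The cat saw the hat. The end."
    print(top_words(sample, 2))
```

A tag-cloud site would then scale each word's font size by its count, which is the part that makes the old problem feel new.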

Make every assignment due at 11:59 PM. This eliminates the sort of begging for extensions during the daytime that often leads to a loss of dignity, while giving students extra time in the evening when they have one last chance to work. But even if the students work right up to the deadline, they will still be "on cycle" for sleep. I've been in the habit of using 8:00 AM for my deadlines in recent semesters, but I think I might try midnight to see if I notice any effect on student behavior.

Do not assign too many math problems. "Math appeals to a certain tribe. You are probably in the tribe, so you don't 'hear the accent'."

Grading

Use an absolute grading scale, with no curve. (I do that.) At the end of the semester, bump up grades at the margins if you think that gives a fairer grade. (I do that.) Never bump grades down. (I do that.) Allow performance on later exams to "clobber" poor performance on earlier exams. I do this, but with a squooshy "I'll take that into account" policy.

Stuart reminded us not to kill our CS1 students. If you are going to bump students' grades upward, do it right away. That takes away some of the uncertainty and fear associated with that grade, and they are already feeling enough uncertainty and fear. When you do mess up, say, with an exam that is too hard or too long (or both), be honest, admit your mistake, and make amends. Students will forgive almost anything, if you are honest and fair.

From the audience, Rich Pattis offered a bit of symmetry on grading grace periods. Many instructors allow students to submit assignments late, with a penalty. Rich suggests that we offer bonuses for early submission. In his experience, even a small bonus encourages many students to start doing their work earlier. Implicit in this suggestion is that when they don't get done early for the bonus, because they need more time to get it, they have more time to get it! I'm adding this to my toolbox effective today.
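To make the symmetry concrete, here is a rough sketch of how an early bonus and a late penalty might sit side by side in a grading script. Rich gave no numbers, so every value here (one point per early day, a three-point cap, five points per late day) is a hypothetical of mine, as is the function name `adjusted_score`.

```python
def adjusted_score(raw: float, days_early: int,
                   bonus_per_day: float = 1.0,
                   max_bonus: float = 3.0,
                   penalty_per_day: float = 5.0) -> float:
    """Adjust a raw score for submission timing.

    days_early > 0 means the work came in before the deadline,
    days_early < 0 means it came in late, and 0 means on time.
    All default values are illustrative assumptions.
    """
    if days_early > 0:
        # Small, capped bonus for starting (and finishing) early.
        return raw + min(days_early * bonus_per_day, max_bonus)
    if days_early < 0:
        # Late penalty, never dropping the score below zero.
        return max(0.0, raw - (-days_early) * penalty_per_day)
    return raw


if __name__ == "__main__":
    for days in (2, 0, -1):
        print(days, adjusted_score(80, days))
```

Keeping the bonus small and capped matches the spirit of the suggestion: it is meant as a nudge to start early, not a new way to earn a grade.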

I won't say much about the tips for managing staff and designing recitation sections. But the big lesson here was to value people. Undergraduates are an underutilized yet powerful resource. (We've found this in our CS I, II, and III labs, too.) Empower your TAs. Be human with them.

Going Meta

The last section was general advice, going meta. Teachers in most disciplines, not just computer science, can learn from these tips.

Team teach. An instructor can learn so much from working with another teacher and watching another teacher work. Even better is to debrief with one another. (If this isn't pair programming, I don't know what it is!)

Make notes as you learn how to teach your course. Keep a per-lecture diary of ideas that you have in class. I have been doing this for a decade or so. When I leave a session with a new idea or a feeling that I could have done better, I like to make an entry in a log of ideas, tagged with the session number, or the assignment or exam number. The next time I teach the course, I can review this log when prepping the course, to help me plan ahead for last time's speed bumps, and then use it when prepping the session or assignment in question during the semester.

The rest of these meta tips are from Owen Astrachan. I admire his concrete ideas about teaching, and his abstract lessons as well.

Stop being driven by the need to cover material. Your students won't remember most of the details anyway. How many details do you remember from any particular course you took as an undergrad? Breaking this habit frees you to do more.

Owen gave three tips in the same vein. Know your students' names. Eat where your students eat occasionally. Cultivate relationships with non-CS faculty. The vein: People matter, and being a person makes you a better teacher.

Learn something new: a whole new area, such as computational genomics; a new hot topic in computing, such as Ruby on Rails or Ajax; or even a relatively little technique you don't already know, such as suffix arrays. Learning new things reminds you what it's like to learn something new, which is where your students are every day. Learning new things lets you infuse your teaching with fresh ideas, maybe ideas that are more relevant to today's students. Learning new things lets you infuse your teaching with fresh attitude, which is an essence of good teaching.

----

From this panel I can conclude at least two things. One is that I'm getting old... I've been teaching CS long enough to have discovered many of these practices in my own classroom, often from my students. The other is that I still have plenty to learn. Some of these ideas may show up in one of my courses soon!

If you'd like to learn more of these teaching tips, or add your own to the collective wisdom, check out the community wiki via this link.

March 07, 2007 11:04 PM

Thinking Hard to Understand

Heard on my drive to SIGCSE today:

These experiences have caused him to think very hard about what he is doing and where he is going. And the result of all this thinking is that he now understands he doesn't know what he is doing or where he is going.

This quote is about Ray Porter, a character in Steve Martin's novella Shopgirl, which I listened to on my drive to SIGCSE today. While I am in most ways nothing at all like the divorced, 50-something Porter, I can certainly appreciate his sudden need to think very hard and his sudden realization that he is essentially clueless. Over the course of my career, I had grown to feel comfortable in my roles as an academic, as teacher and scholar. When I switched into the Big Office Downstairs, I just assumed that things would proceed as usual.

But after a year and a half as department head, I experience occasional moments of "midterm crisis", in which I think that I don't really know what I'm doing or where I'm going. I often have a pretty good 50,000-foot view of what I want, but down in the trenches I usually feel a little disoriented. With experience, things have gotten better, but a year filled with academic program review, two time-consuming searches, and a curriculum revision has sapped my reservoirs of creativity and energy.

At least now I think I understand that I don't know what I am doing or where I am going. You know what they say about acceptance being the first step toward recovery!

By the way, I do recommend Shopgirl. I have read the book and then listened to it several times on tape while driving to PLoPs, SIGCSEs, and ICFPs. For some reason, Martin's writing holds my attention, and the story is sweet enough that I can get over constantly wondering, "Why does she put up with this?" Martin is a surprisingly good writer; though he is never going to win a Nobel Prize, he can spin a decent short yarn. My first exposure to his literary work was Picasso at the Lapin Agile, a stage play about a fantasy meeting between Picasso and Einstein at a Paris bar around the turn of the century.

Oh, and as for Shopgirl -- I haven't seen the movie yet! I'm glad that I read the book first, and then heard it read before seeing the film version. Just knowing that Martin and the glorious Claire Danes play the leading roles has changed my experience of the book...

March 06, 2007 10:19 AM

Getting Back to Normal

This "slow and steady" thing is starting to pay off. Last week, I ran a week of four-milers, six of them. That doesn't seem like much after four years of marathon training, but...

While recording mileage in my running log, I noticed that this was the first time I had run four days in a row since January 2-5, and the first time I had run five in a row since November 27-December 1. These weren't fast miles, for the most part, as I let the "run come to me" every day. But then on Friday, I had a pleasant surprise. I found myself falling into a steady, comfortable run -- at sub-marathon goal pace. It felt great! Even better, I didn't feel too sore or tired over the weekend, and so was able to finish off my week outdoors on the ice.

This week, my goal is a week of five-milers, six of them. I ran right at MP on the track this morning, with the rest of my runs for the week planned outdoors. Tomorrow, I head to SIGCSE 2007, in Covington, Kentucky ("the other Cincinnati"). My more technical readers can look forward to some CS-specific writing in the next few days.

March 02, 2007 4:09 PM

A Missed Opportunity

The people always know what's wrong

... but given the wrong leadership,

they won't ever talk to you about it.

Jason Yip

A month or so ago, I wrote about my involvement in a search for a college sysadmin. That search proceeds slowly. In the meantime, the second search I mentioned -- for an Assistant Vice President of Information Technology of the university -- has wrapped up with a disappointing result: a closed search. This means that the university decided not to hire any of the candidates in our applicant pool. If you have ever served on a search committee and put in the many hours required to do a good job, only to have it closed at the end, you know how disappointing this can be.

Why close the search? The campus community couldn't get 100% behind any of the candidates. The search exposed some of the rifts on campus, within the IT community and between that community and the arms of the university it serves. The administration felt it better to put our house in better order before trying to bring someone in from the outside to lead the organization forward.

Any time a search is closed, there is a sense of disappointment at the time spent for no tangible gain. In this case, though, that sense is offset somewhat by a sense of learning. This search was a discovery process for the campus and new president, and that is valuable in its own right, if intangible. But there is a second sort of disappointment, too. Many people on campus felt that one of the candidates was just what the university needed -- a leader with a strong technical background and a strong vision of the future of information technology. Thus the lament "a missed opportunity".

Personally, I learned a lot about university politics by participating in this search, as well as developing a better sense of what sort of person one must seek in a university CIO. This person needs deep technical skills not because he or she will be doing technology hands-on, but because he or she must have a deep enough understanding of technology to evaluate ideas, set priorities, select and mentor a staff, and educate administration on the needs, opportunities, and threats that confront the university. Of course, deep technical knowledge isn't enough, as communication can often dominate the technical in most non-technical people's minds. At least in the immediate moment, that is; when the technology choices are bad, suddenly the technical side of the leader matters a lot.

I shouldn't say much about the details of the search publicly, but let's leave it at this: The quip quoted above from Jason Yip lit up my screen when I first read it, and because of this search. When people know what's wrong but can't or won't tell you, you have a big problem.

Striving for excellence leaves one prone to occasional or even frequent disappointment. Back to the drawing board.