December 31, 2007 3:20 PM

2007 Running Year in Review

1492.

I was planning to run this morning, even though Monday is often a day of rest for me. There were two reasons. The first was purely sentimental. Today is the last day of 2007, and it is always kinda cool to mark big days with a run. The second reason was more compelling. It will be cold here tomorrow, certainly in the single digits Fahrenheit and perhaps as low as 0 degrees, with gusty winds. I figured that I would enjoy my run more at a balmy 15 degrees than a frigid 5. This would be my usual Tuesday run, an easy five-miler that falls between a Sunday long run and a faster Wednesday 8-miler, usually run on the track. I would run it on Monday instead and take Tuesday, January 1, as a day of rest, kicking off a new year's running bright and early on Wednesday morning.

But then I sat down last night to update my running log for the previous week, and saw that number.

1492.

As of December 30, I had run 1,492.0 miles. It was a surprise to me, as I had not expected to have put so many miles in the books this month. But the last three weeks have been good running, with 35, 37, and 39 miles, respectively. I'm getting back to a good groove after my latest marathon, though on most of my easy days I still feel a little slow.

In any case, upon seeing 1492.0 in my end-of-week column, no longer could I plan to run the same old five miles on New Year's Eve! With a mere three miles more, I could reach the nice, round total of 1500 miles for 2007.

So December 31 became an 8-mile run.

1500 shouldn't excite me so much. In 2006, I ran 1932.9 miles, in 2005, I ran my all-time high of 2137.7 miles, and in 2004, I made a huge jump in mileage with 1907.0 miles. We have to go back to the year of my first marathon, 2003, to find so few miles on the books, 1281.8 miles.

But 2007 was different. January was tough, beginning with a lingering illness that knocked me off the road entirely for the last week of January and the first of February. I slowly built my mileage back up -- partly to be safe and partly because I didn't feel strong enough to build faster. Then I lost whole weeks to the same lingering "under the weather" in April and May, and a week to bad hamstrings in the middle of July.

From then on, I was as healthy as I'd been in a long while. My marathon training went pretty well, though I never quite found the speed I had lost from 2006. I could run fast a couple of days a week, but the other days were "just miles". Post-marathon has been pretty good, too, with only a few days missed. The last three weeks have seemed positively normal.

So 2007 was simply a tougher year all-around, and the small accomplishment of 1500 miles felt like a good way to ring out the old year and look toward a new one. And indeed it was.

Looking back at my log, 2003 looks a lot like 2007 in many ways. I was under the weather and off the road for January and February. In March, I began to recover with some long walks in the Arizona desert of ChiliPLoP'03; my colleague and good friend Robert Duvall may recall these walks as well. I then ran once each of the last two weeks, for a total of 6 miles as of March 31. I began to train for my first half marathon on April 1 and put in 284.7 miles over the next three months. Then came training for the Chicago marathon, with an until then unheard-of 545.7 miles over three months. My post-marathon mileage was nearly a carbon copy of 2007. Interesting.

My mileage was down this year, but what about performance in races? Though I never reached my strongest levels of 2005 or 2006, race times were not too bad. I ran my 3rd-best 5K ever in September, my 3rd-best half-marathon in June, and my 2nd-best marathon ever at the end of October. With a little more intestinal luck in Washington, I might have PRed the marathon, despite running a more physically demanding course. So, as I usually find at the end of each year, I can't complain much. This year raised new challenges, but isn't that what most years do for us?

As I type this now, I feel a distinct urge to start 2008 "right" -- on the road. An easy, easy three miles sounds good... I can handle half an hour of -5 degrees Fahrenheit, even with the stiff winds forecast for the night and morning. I'll bundle up, lace up the Asics, and savor the crispest of winter air as it fills my lungs with the hope of a year of new challenges. Perhaps that is what I'll do.

There are plenty of ways to warm up after I return to the house to celebrate the New Year with my family.

December 21, 2007 4:05 PM

A Panoply of Languages

As we wind down into Christmas break, I have been thinking about a lot of different languages.

First, this has been a week of anniversaries. We celebrated the 20th anniversary of Perl (well, as much as that is a cause for celebration). And BBC World ran a program celebrating the 50th birthday of Fortran, in the same year that we lost John Backus, its creator.

Second, I am looking forward to my spring teaching assignment. Rather than teaching one 3-credit course, I will be teaching three 1-credit courses. Our 810:151 course introduces our upper-division students to languages that they may not encounter in their other courses. In some departments, students see a smorgasbord of languages in the Programming Languages course, but we use an interpreter-building approach to teach language principles in that course. (We do expose them in that course to functional programming in Scheme, but more deeply.)

I like that way of teaching Programming Languages, but I also miss the experience of exposing students to the beauty of several different languages. In the spring, I'll be teaching five-week modules on Unix shell programming, PHP, and Ruby. Shell scripting and especially Ruby are favorites of mine, but I've never had a chance to teach them. PHP was thrown in because we thought students interested in web development might be interested. These courses will be built around small and medium-sized projects that explore the power and shortcomings of each language. This will be fun for me!

As a result, I've been looking through a lot of books, both to recommend to students and to find good examples. I even did something I don't do often enough... I bought a book, Brian Marick's Everyday Scripting with Ruby. Publishers send exam copies to instructors who use a text in a course, and I'm sent many, many others to examine for course adoption. In this case, though, I really wanted the book for myself, irrespective of using it in a course, so I decided to support the author and publisher with my own dollars.

Steve Yegge got me to thinking about languages, too, in one of his recent articles. The article is about the pitfalls of large code bases but, while I may have something to say about that topic in the future, what jumped out to me while reading this week were two passages on programming languages. One mentioned Ruby:

Java programmers often wonder why Martin Fowler "left" Java to go to Ruby. Although I don't know Martin, I think it's safe to speculate that "something bad" happened to him while using Java. Amusingly (for everyone except perhaps Martin himself), I think that his "something bad" may well have been the act of creating the book Refactoring, which showed Java programmers how to make their closets bigger and more organized, while showing Martin that he really wanted more stuff in a nice, comfortable, closet-sized closet.

For all I know, Yegge's speculation is spot on, but I think it's safe to speculate that he is one of the better fantasy writers in the technical world these days. His fantasies usually have a point worth thinking about, though, even when they are wrong.

This is actually the best piece of language advice in the article, taken at its most general level and not a slam at Java in particular:

But you should take anything a "Java programmer" tells you with a hefty grain of salt, because an "X programmer", for any value of X, is a weak player. You have to cross-train to be a decent athlete these days. Programmers need to be fluent in multiple languages with fundamentally different "character" before they can make truly informed design decisions.

We tell our students that all the time, and it's one of the reasons I'm looking forward to three 5-week courses in the spring. I get to help a small group of our undergrads cross-train, to stretch their language and project muscles in new directions. That one of the courses helps them to master a basic tool and another exposes them to one of the more expressive and powerful languages in current use is almost a bonus for me.

Finally, I'm feeling the itch -- sometimes felt as a need, other times purely as desire -- to upgrade the tool I use to do my blogging. Should I really upgrade, to a newer version of my current software? (v3.3 >> v2.8...) Should I hack my own upgrade? (It is 100% shell script...) Should I roll my own, just for the fun of it? (Ruby would be perfect...) Language choices abound.

Merry Christmas!

Posted by Eugene Wallingford | Permalink | Categories: Computing, Software Development, Teaching and Learning

December 20, 2007 1:45 PM

An Unexpected Christmas Gift

Some students do listen to what we say in class.

Back when I taught Artificial Intelligence every year, I used to relate a story from Russell and Norvig when talking about the role knowledge plays in how an agent can learn. Here is the quote that was my inspiration, from Pages 687-688 of their 2nd edition:

Sometimes one leaps to general conclusions after only one observation. Gary Larson once drew a cartoon in which a bespectacled caveman, Zog, is roasting his lizard on the end of a pointed stick. He is watched by an amazed crowd of his less intellectual contemporaries, who have been using their bare hands to hold their victuals over the fire. This enlightening experience is enough to convince the watchers of a general principle of painless cooking.

I continued to use this story long after I had moved on from this textbook, because it is a wonderful example of explanation-based learning.

Unfortunately, Russell and Norvig did not include the cartoon, and I couldn't find it anywhere. So I just told the story and then said to the class -- every class of AI students to go through my university over a ten-year stretch -- that I hoped to find it some day.

As of yesterday, I can, thanks to a former student. Ryan heard me on that day in his AI course and never forgot. He looked for that cartoon in many of the ways I have over the years, by googling and by thumbing through Gary Larson collections in the book stores. Not too long ago, he found it via a mix of the two methods and tracked it down in print. Yesterday, on one of his annual visits (he's a local), he brought me a gift-wrapped copy. And I was happy!

Sadly, I still can't show you or any of my former students who read my blog. Sorry. I once posted another Gary Larson cartoon in a blog entry, with a link to the author's web site, only to eventually receive a pre-cease-and-desist e-mail asking me to pull the cartoon from the entry. I'll not play with that fire again. This is almost another illustration of the playful message of the very cartoon in question: learning not to stick one's hand into the flame from a single example. But not quite -- it's really an example of learning from negative feedback.

Thanks to Ryan nonetheless, for remembering an old prof's story from many years ago and for thinking of him during this Christmas season! Both the book and the remembering make excellent gifts.

December 20, 2007 11:30 AM

Increasing Your Sustainable Pace

Sustainable pace is part of the fabric of agile methods. The principles behind the Agile Manifesto include:

Agile processes promote sustainable development. The sponsors, developers, and users should be able to maintain a constant pace indefinitely.

When I hear people talk about sustainable pace, they are usually discussing time -- how many hours per week a healthy developer and a healthy team can produce value in their software. The human mind and body can work so hard for only so long, and trying to work hard for longer leads to problems, as well as a decline in productivity.

The first edition of XP had a practice called 40-hour work week that embodied this notion. The practice was later renamed sustainable pace to reflect that 40 hours is an arbitrary and often unrealistic limit. (Most university faculty certainly don't stop at 40 hours. By self-report at my school, the average work week is in the low-50s.) But the principle is the same.

Does this mean that we cannot become more productive?

Recall that pace -- rate -- is a function of two variables:

rate = distance / time

Productivity is like distance. One way to cover more distance is to put in more time. Another is to increase the amount of work you can do in a given period of time.
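Here is a tiny sketch of that arithmetic in Python. The numbers are made up purely for illustration, and the function name `distance_covered` is my own invention, not anything from the agile literature. It simply rearranges the formula above as distance = rate * time and compares the two ways of covering more ground.

```python
def distance_covered(rate, hours):
    """Work done (the 'distance') at a given rate over a given time."""
    return rate * hours

# Illustrative numbers only, not measurements of any real team.
baseline = distance_covered(rate=1.0, hours=40)      # a normal week
more_time = distance_covered(rate=1.0, hours=50)     # same pace, longer week
faster_pace = distance_covered(rate=1.25, hours=40)  # better pace, same week

print(baseline, more_time, faster_pace)
```

With these assumed numbers, a 25% improvement in rate covers the same distance as ten extra hours a week, without touching the hours at all.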

This is an idea close to the heart of runners. While I have written against running all out, all the time, I know that the motivation found in that mantra is to get faster. Interval training, fartleks, hill work, and sustained fast pace on long runs are all intended to help a runner get stronger and faster.

How can software developers "get faster"? I think that one answer lies in the tools they use.

When I use a testing framework and automate my tests, I am able to work faster, because I am not spending time running tests by hand. When I use a build tool, I am able to work faster, because I am not spending time recompiling files and managing build dependencies. When I use a powerful -- and programmable -- editing tool, whether it is Eclipse or Emacs, I am able to work faster, because I am not spending time putzing around for the sake of the tool.

And, yes, when I use a more powerful programming language, I am able to work faster, because I am not spending time expressing thoughts in the low-level terms of a language that limits my code.

So, programmers can increase their sustainable pace by learning tools that make them more productive. They can learn more about the tools they already use. They can extend their tools to do more. And they can write new tools when existing tools aren't good enough.

Perhaps it is not surprising that I had this thought while running a pace that I can't sustain for more than a few miles right now. But I hope in a few months that a half marathon at this pace will be comfortable!

December 18, 2007 4:40 PM

Notes on a SECANT Workshop: Table of Contents

[Nothing new here for regular readers... This post implements an idea that I saw on Brian Marick's blog and liked: a table of contents for a set of conference posts coupled with cross-links as notes at the top of each entry. I have done a table of contents before, for OOPSLA 2005 -- though, sadly, not for 2004 or 2006 -- but I like the addition of the link back from entries to the index. This may help readers follow my entries, especially when they are out of order, and it may help me when I occasionally want to link back to the workshop as a unit.]

This set of entries records my experiences at the SECANT 2007 workshop November 17-18, hosted by the Purdue Department of Computer Science.

Primary entries:

- Workshop Intro: Teaching Science and Computing -- on building a community

- Workshop 1: Creating a Dialogue Between Science and CS -- How can we help scientists and CS folks work together?

- Workshop 2: Exception Gnomes, Garbage Collection Fairies, and Problems -- on a hodgepodge of sessions around the intersection of science ed and computing

- Workshop 3: The Next Generation -- what scientists are doing out in the world and how computer scientists are helping them

- Workshop 4: Programming Scientists -- Should scientists learn to program? And, if so, how?

- Workshop 5: Wrap-Up -- on how to cause change and disseminate results

Ancillary entries:

- A Program is an Idea -- going farther on why scientists, and everyone else, should learn to program

The next few items on the newsfeed will be these entries, updated with the "transcript" cross-link. [Done]

December 18, 2007 2:12 PM

Post-Semester This and That

Now that things have wound down for the semester, I hope to do some mental clean-up and some CS. As much as I enjoyed the SECANT workshop last month (blogged in detail ending here), travel that late in a semester compresses the rest of the term into an uncomfortably small box. That said, going to conferences and workshops is essential:

Wherever you work, most of the smart people are somewhere else.

I saw that quote attributed to Bill Joy in an article by Tim Bray. Joy was speaking of decentralization and the web, but it applies to the pre-web network that makes up any scholarly discipline. Even with the web, it's good to get out of the tower every so often and participate in an old-fashioned conversation.

One part of the semester clean-up will be assessing the state of my department head backlog. Most days, I add more things to my to-do list than I am able to cross off. Some of them are must-dos, details, and others are ideas, dreams. By the end of the semester, I have to be honest that many of the latter won't be done, soon if ever. I don't do a hard delete of most of these items; I just push them onto a "possibilities" list that can grow as large as it likes without affecting my mental hygiene.

I recently told my dean that, after two and a half years as head, I had almost come to peace with what I have taken to calling "time management by burying". He just smiled and said that the favorite part of his semester is doing just that, looking at his backlog and saying to himself, "Well, guess I'm not going to do that" as he deleted it from the list for good. Maybe I should be more ruthless myself. Or maybe that works better if you are a dean...

I've been following the story of the University of Michigan hiring West Virginia University's head football coach. Whatever one thinks of the situation -- and I think it brings shame to both Michigan and its new coach -- there was a very pragmatic piece of advice to be learned about managing people from one Pittsburgh Post-Gazette sports article about it. Says Bob Reynolds, former chief operating officer of Fidelity Investments:

I've been the COO of a 45,000-person company. When somebody's producing, you ask, 'What can I do for you to make your life better?' Not 'What can I do to make your life more miserable?'

That's a good thought for an academic department head to start each day with. And maybe a CS instructor, too.

December 17, 2007 5:02 PM

An Unexpected Opportunity

I had to drive to Des Moines for a luncheon today. Four hours driving, round-trip, for a 1.25-hour lunch -- the things I do for my employer! The purpose of the trip was university outreach: I was asked to represent the university at a lunch meeting of the Greater Des Moines Committee, in place of our president and dean.

The luncheon was valuable for making connections to the movers and shakers in the capital city, and for talking to business leaders about computer science enrollments, math and science in the K-12 schools, and IT policy for the state. The lunch speaker, Ted Crosbie, the chief technology officer of Iowa, gave a good talk on economic development and the future of the state's technology efforts.

But was it all worth four hours on the road? Probably so, but I will give a firm Yes, for an unexpected reason.

A couple of minutes after I took my seat for lunch, former Iowa Governor Terry Branstad (1983-1999) sat down at our table. He struck up a nice conversation. Then, a couple of minutes later, former Iowa Governor Robert Ray (1969-1983) joined us. Very cool. I was impressed at how involved and informed these retired public officials remain in the affairs of the state, especially in economic development. The latter is, of course, something of great importance to my department and its students, as well as the university as a whole.

Then on the drive home, I saw a bald eagle soar majestically over a small riverbed. A thing of beauty.

December 13, 2007 2:47 PM

Computing in Yet Another Discipline

Last month there was lots of talk here about how computing changes science. In that discussion I mentioned economics and finance as other disciplines that will be fundamentally different in the future as computation and massive data stores affect methodology. Here is another example, in a social science -- or perhaps the humanities, depending on your viewpoint.

Sergei Golitsinski is one of my MS students in Computer Science. Before catching the CS bug, via web design and web site construction, he had nearly completed an MA in Communication Studies (another CS!). For the last year or more, he has been at the thesis-writing stage in both departments. He finally defended his MA thesis yesterday afternoon.

The title of his thesis is "Significance of the General Public for Public Relations: A Study of the Blogosphere's Impact on the October 2006 Edelman/Wal-Mart Crisis". You'll have to read the thesis for the whole story, but here's a quick summary.

In October 2006, Edelman started a blog "as a publicity stunt on behalf of Wal-Mart" yet claimed it to be "an independent blog maintained by a couple traveling in their RV and writing stories about happy Wal-Mart employees". Eventually, bloggers got hold of the story and ran with it, creating a fuss that resulted in "significant negative consequences" for Edelman. Sergei collected data from these blogs and their comments, studied the graph of relationships among them, and argued that actions of the "general public" accounted for the effects felt by Edelman. This is significant in the PR world, it seems, because the PR world largely believes that the "general public" either does not exist or is insignificant. Only specific publics defined as coherent segments are able to effect change.

Sergei used publicly-available data from the blogosphere to drive an empirical study to support his claim that "new communication technologies have given the general public the power to cause direct negative consequences for organizations". Collecting data for an empirical study is not unusual in the Communication Studies world, but collecting it on this scale is unusual and using computer programs to study the data as a highly-interconnected graph is even more so.
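To give a flavor of what treating blog data as a graph can look like, here is a minimal sketch in Python. It is not Sergei's method or his data: the blog names, the `links` list, and the functions `in_degree` and `most_linked` are all hypothetical, made up for illustration. The sketch counts how many other blogs link to each blog, which is one of the simplest questions one might ask of such a graph.

```python
from collections import defaultdict

# Hypothetical link data: (source, target) means the source blog links to the target.
links = [
    ("blog_a", "blog_b"),
    ("blog_c", "blog_b"),
    ("blog_d", "blog_b"),
    ("blog_b", "blog_e"),
    ("blog_c", "blog_e"),
]

def in_degree(edges):
    """Count inbound links for every blog that appears in the edge list."""
    counts = defaultdict(int)
    for source, target in edges:
        counts[source] += 0  # register the source even if nothing links to it
        counts[target] += 1
    return dict(counts)

def most_linked(edges, n=1):
    """Return the n blogs with the most inbound links, ties broken by name."""
    degrees = in_degree(edges)
    return sorted(degrees, key=lambda blog: (-degrees[blog], blog))[:n]

print(most_linked(links, n=2))
```

A real study would of course involve far more data and far richer measures than a simple count of inbound links, but even this much is awkward to do by hand once the graph grows beyond a few dozen nodes.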

I was not on this thesis committee, but I did ask a question at his defense: Does this type of research make it possible to ask questions in communication studies that were heretofore not askable? I suspected that the answer was yes but hoped to hear some specific examples. I also hoped that these examples would help the Comm Studies folks in the room to see what computation will do to at least part of their discipline. His answer was okay, but not a grand slam; to be fair, I'm sure I caught him a bit off-guard.

From the questions that the Comm Studies committee members asked and the issues they discussed (mostly on the nature of "publics"), it wasn't clear whether they quite understand the full implication of the kind of work Sergei did. It will change how research is done, from statistical analyses of relatively small data sets to graph-theoretic analyses of large data sets. Computational research will make it possible to ask entirely new questions -- both ones that were askable before but not feasible to answer and ones that would not have been conceived before. This isn't news -- Duncan Watts's Small World Project is seminal work in this area -- but the time is right for this kind of work to explode into the mainstream.

What's up next for Sergei? He has tried a Ph.D. program in Computer Science and found it not to his interests; it seemed too inward-looking, focused on narrow mathematics and not big problems. He may well stay in Communications and pursue a Ph.D. there. As I've told him, he could be part of the vanguard in that discipline, helping to revolutionize methodology and ask some new and deeply interesting questions there.

December 12, 2007 1:02 PM

Not Your Father's Data Set

When I became department head, I started to receive a number of periodicals unrequested. One is Information Week. I never read it closely, but I usually browse at least the table of contents and perhaps a few of the news items and articles. (I'm an Apple/Mac/Steve Jobs junkie, if nothing else.)

The cover story of the December 10 issue is on the magazine's 2007 CIO of the Year, Tim Stanley of Harrah's Entertainment. This was an interesting look into a business for which IT is an integral component. One particular line grabbed my attention, in a sidebar labeled "Data Churn":

Our data warehouse is somewhat on the order of 20 Tbytes in terms of structure and architecture, but the level of turn of that information is many, many, many times that each day.

The database contains information on 42 million customers, and it turns data over multiple tens of terabytes a day.

Somehow, teaching our students to work with data sets of 10 or 50 or 100 integers or strings seems positively 1960s. It also doesn't seem to be all that motivating an approach for students carrying iPods with megapixel images and gigabytes of audio and video.

An application with 20 terabytes of data churning many times over each day could serve as a touchstone for almost an entire CS curriculum, from programming and data structures to architecture and programming languages, databases and theory. As students learn how to handle larger problems, they see how much more they need to learn in order to solve common IT problems of the day.

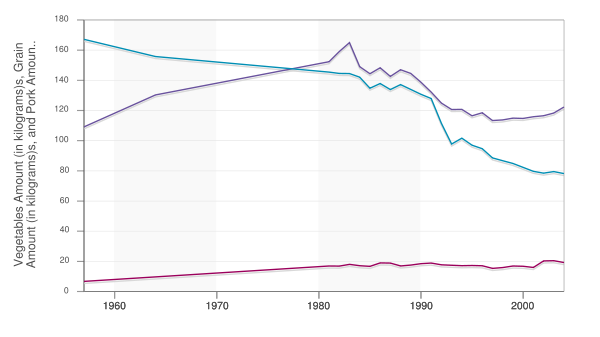

I'm not saying that we must use something on the order of Harrah's IT problem to do a good job or to motivate students, but we do need to meet our students -- and potential students -- closer to where they live technologically. And today we have so many opportunities -- Google, Google Maps, Amazon, Flickr, ... These APIs are plenty accessible to more advanced students. They might need a layer of encapsulation to be suitable for beginning students; that's something a couple of us have worked on occasionally at ChiliPLoP. But we all have even more options available these days, as data-sharing sites à la Flickr become more common. (See, for example, Swivel; that's where I found the graph shown above, derived from data available at the USDA's Economic Research Service website.)

December 11, 2007 1:46 PM

Thoughts While Killing Time with the Same Old Ills

I have to admit to not being very productive this morning, just doing some reading and relaxing. There are a few essential tasks to do yet this finals week, some administrative (e.g., making some adjustments to our teaching schedule based on course enrollments) and some teaching (e.g., grading compiler projects and writing a few benchmark programs). But finals week is a time when I can usually afford half a day to let my mind float aimlessly in the doldrums.

Of course, by "making some adjustments to our teaching schedule based on course enrollments", I mean canceling a couple of classes due to low enrollments and being sure that our faculty have other teaching or scholarly assignments for the spring. The theme of low enrollments is ongoing even as we saw a nontrivial bump in number of majors last fall. Even if a trend develops in that direction, we have to deal with smaller upper-division enrollments from small incoming classes of the recent past.

Last month, David Chisnall posted an article called Is Computer Science Dying?, which speculates on the decline in majors, the differences between CS and software development, and the cultural change that has turned prospective students' interests to other pursuits. There isn't anything all that new in the article, but it's a thoughtful piece of the sort that is, sadly, all too common these days. At least he is hopeful about the long-term viability of CS as an academic discipline, though he doesn't have much to say about how CS and its applied professional component might develop together -- or apart.

In that regard, I like several of Shriram Krishnamurthi's theses on undergraduate CS. When he speaks of the future -- from a vantage point of two years ago -- he recommends that CS programs admit enough flexibility that they can evolve in ways that we see make sense.

(I also like his suggestion that we underspecify the problems we set before students:

Whether students proceed to industrial positions or to graduate programs, they will have to deal with a world of ambiguous, inconsistent and flawed demands. Often the difficulty is in formulating the problem, not in solving it. Make your assignments less clear than they could be. Do you phrase your problems as tasks or as questions?

This is one of the ways students learn best from big projects!)

Shriram also mentions forging connections between CS and the social sciences and the arts. One group of folks who is doing that is Mark Guzdial's group at Georgia Tech, with their media computation approach to teaching computer science. This approach has been used enough at enough different schools that Mark now has some data on how well it might help to reverse the decline in CS enrollments, especially among women and other underrepresented groups. As great as the approach is, the initial evidence is not encouraging: "We haven't really changed students' attitudes about computer science as a field." Even students who find that they love to design solutions and implement their designs in programs retain the belief that CS is boring. Students who start a CS course with a favorable attitude toward computing leave the university with a favorable attitude; those who start with an unfavorable attitude leave with the same.

Sigh. Granted, media computation aims at the toughest audience to "sell", folks who most likely consider themselves non-technical. But it's still sad to think we haven't made headway at least in helping them to see the beauty and intellectual import of computing. Mark's not giving up -- on computing for all, or on programming as a fundamental activity -- and neither am I. With this project, the many CPATH projects, and Owen Astrachan's Problem Based Learning in Computer Science project, and so many others, I think we will make headway. And I think that many of the ideas we are now pursuing, such as domain-specific applications, problems, and projects, are a right path.

Some of us here think that the media computation approach is one path worth pursuing, so we are offering a CS1/CS2 track in media computation beginning next semester. This will be our third time, with the first two being in the Java version. (My course materials, from our first media comp effort, are still available on-line.) This time, we are offering the course in Python -- a decision we made before I ended up hearing so much about the language at the SECANT workshop I reported on last month. I'm an old Smalltalk guy, and a fan of Scheme and Lisp, who likes the feel of a small, uniform language. We have been discussing the idea of using a so-called scripting language in CS1 for a long time, at least as one path into our major, and the opportunity is right. We'll see how it goes...

The secret to happiness is low expectations.

-- Barry Schwartz

In addition to reading this morning, I also watched a short video of Barry Schwartz from TED a couple of years ago. I don't know how I stumbled upon a link to such an old talk, but I'm glad I did. The talk was short, colorful, and a succinct summary of the ideas from Schwartz's oft-cited The Paradox of Choice. Somehow, his line about low expectations seemed a nice punctuation mark to much of what I was thinking about CS this morning. I don't feel negative when I think this, just sobered by the challenge we face.

December 10, 2007 1:19 PM

It's a Wrap

My first run as an actor has ended without a Broadway call. Nonetheless I consider it to have been successful enough. My character didn't cause any major interruptions in the flow of our three performances, and I even got us back on track a time or two. Performing in front of a crowd -- especially a crowd that contained personal friends -- was enough different from giving a lecture or speaking in public that it wracked a few nerves. But getting a laugh from a real audience was also enough different from a laugh in a lecture, too, and the buzz could feed the rest of the performance.

My first post on this topic recorded some thoughts I had had on the relationship between developing software and directing a play. In those thoughts, the director or producer is cast as the software developer, or vice versa. In the last couple of weeks, my thoughts turned more often to my role as performer. Here are a few:

- Before each rehearsal and performance, I found myself spending important minutes setting up my environment: making sure props were in place, adjusting positions based on any results from the last time, and finding a place backstage for my time between scenes. This felt a lot like how I set up for time writing code, getting my tools in place.

- I wonder if there is any analogue in software development to the self-consciousness I felt as I began to perform on stage? Over time, the director helped me to morph some of the self-consciousness into a sensitivity to the scene and my fellow players.

After a while, experience helps push self-consciousness into the background. It was even possible to get into a flow where the self disappeared for a moment. I think I need more experience in character to have more experiences like that! But those moments were special.

- Once we got into performances, I noticed a need for the same almost contradictory combination of hubris and humility that a programmer needs. At some point, I had to know my lines and scenes so well that I could nail them blind. That drove a form of confidence that let me "own" my character in a scene. Yet I had to be careful not to become too cocky, because suddenly something would go wrong and I'd find myself exposed and not at all in control. So I spent a few minutes before every scene preparing, just refreshing my memory of cues and placements and notes the director had given. That bit of humility gave me more confidence than I otherwise could have had.

Most of the relationships I noticed between acting in the play and building software were really patterns of good teams. In every scene I depended upon the presence and performance of others -- and they depended on me. Being a good teammate mattered both on stage (while performing) and off (when preparing and when taking and giving feedback). "The key to acting," said our director, "is listening to other people." Funny how that is the key to so many things.

As I look back on this (first?) experience being a player in a stage production, I think that there is a lot to this notion that developing software is like producing a play -- and that producing a play is like developing software. The two media are so different, but they are both malleable, and both ultimately depend on their audiences (users).

Over the course of two weeks or so, the director did a lot of what I call refactoring. For example, he found the equivalent of duplicated code -- lines and even larger parts of scenes that don't move the story forward, given how the rest of the play is being staged. Removing duplicated stuff frees up stage real estate and time for making other additions and changes. He also aggressively sought and deleted dead space -- moments when no one was on stage (say, in the transition between scenes) or no action was taking place (say, when lighting changed). Dead space kills the energy of the show and distracts the viewer. Dead space is a little like dead code and over-designed code -- code that isn't contributing to the application. Cut it.

Every night after rehearsal and even shows, the director "ran notes" with us. This was a time after each "iteration" dedicated to debugging and refactoring. That's good practice in software.

One other connection jumped out to me yesterday. After we closed the show, I was chatting with Scott Smith, a local filmmaker who is the real-life husband of the woman who played my wife in the show. We were discussing how filmmaking has changed in the last decade or so. In the not-so-old days folks in video were strongly encouraged to become specialists in one of the stages: writing, directing, shooting, editing, and so on. Now, with the wide availability of relatively inexpensive equipment and digital tools, and economic pressures to deliver more complete services, even veterans such as Smith find themselves developing skills across the board, becoming not a jack-of-all-trades and master of none, but rather strong in all phases of the game.

I immediately thought of extreme programming's rapid development cycle that requires programmers to be not only writers of code but also writers of stories and tests, to be able to interact with clients and to grow designs and architectures. It's hard to be a master of all trades, but the sort of move we have seen in software and in filmmaking from specialist to generalist encourages a deep competence in all areas. Too often I have heard folks say "I am a generalist" as a way to explain their lack of expertise in any one area. But the new generalist is competent across the board, perhaps expert in multiple areas, and able to contribute meaningfully to the whole lifecycle.

One last idea. Just before our final show, the director gave us our daily pep talk. He said that some performers view the last show as an occasion to do something wacky -- to misplace someone's prop, or deliver a crazy line not from the script, or to affect some voice or mannerism on stage. That sounds like fun, he said, but remember: For the audience out in the seats today, this is the first show. They deserve to see the best version of the show that we can give. For some reason, I thought of software developers and users. Maybe my mind was just hyperactive at that moment when we were about to create our illusion. Maybe not.

December 07, 2007 4:41 PM

At the End of Week n

Classes are over. Next week, we do the semiannual ritual of finals week, which keeps many students on edge while at the same time releasing most of the tension in faculty. The tension for my compiler students will soon end, as the submission deadline is 39 minutes away as I type this sentence.

The compiler course has been a success in several ways, especially in the most important: students succeeded in writing a compiler. Two teams submitted their completed programs earlier this week -- early! -- and a couple of others have completed the project since. These compilers work from beginning to end, generating assembly language code that runs on a simple simulated machine. Some of the language design decisions contributed to this level of success, so I feel good. (And I already know several ways to do better next time!)

I've actually wasted far too much time this week writing programs in our toy functional language, just because I enjoy watching them run under the power of my students' compilers.

More unthinkable: There is a greater-than-0% chance that at least one team will implement tail call optimization before our final exam period next week. They don't have an exam to study for in my course -- the project is the reason we are together -- so maybe...

In lieu of an exam, we will debrief the project -- nothing as formal as a retrospective, but an opportunity to demo programs, discuss their design, and talk a bit about the experience of writing such a large, non-trivial program. I have never found or made the time to do this sort of studio work during the semester in the compilers course, as I have in my other senior project courses. This is perhaps another way for me to improve this course next time around.

The end of week n is a good place to be. This weekend holds a few non-academic challenges for me: a snowy 5K with little hope for the planned PR and my first performances in the theater. Tonight is opening night... which feels as much like a scary final exam as anything I've done in a long time. My students may have a small smile in their hearts just now.

December 05, 2007 2:42 PM

Catch What You're Thrown

Context You are in an Interactive Performance, perhaps a play, using Scripted Dialogue.

Problem The performer speaking before you delivers a line incorrectly. The new line does not change the substance of the play, but it interrupts the linguistic flow.

Example Instead of saying "until the first of the year", the performer says "for the rest of the year".

Forces You know your lines and want to deliver them correctly.

The author wrote the dialogue with a purpose in mind.

Delivering the line as you memorized it is the safest way for you to proceed, and also the safest route back on track.

BUT... Delivering the scripted line will call attention to the error. This may disconcert your partner. It will also break the mood for the audience.

So: Adapt your line to the setup. Respond in a way that is seamless to the audience, retains the key message of the story, and gets the dialogue back on track.

That is, catch what you are thrown.

Example Change your line to say "for the rest of the year?" instead of "until the first of the year?"

Related Patterns If the performer speaking before you misses a line entirely, or gets off the track of the Scripted Dialogue, deliver a Redirecting Line.

----

Postscript: This category of my blog is intended for software patterns and discussion thereof, but this is a pattern I just learned and felt a strong desire to write. I may well try to write Redirecting Line and maybe even the higher-level Scripted Dialogue and Interactive Performance patterns, if the mood strikes me and the time is available. I never thought of a pattern language of performance when I signed on for this gig... And just so you know, I was the performer who mis-delivered his line in the example given above, where I first encountered Catch What You're Thrown.

December 03, 2007 10:12 AM

Good News, Bad News

On Friday morning, I had my best speed workout in at least fourteen months. I ran 1600m and 800m intervals at target 5K pace, followed by a ladder of 800m-400m-200m at an equivalent 1 mile pace. The 800m at 1-mile pace was almost certainly the fastest half-mile I have ever run in my life. Afterwards I felt great. Life is good.

Things look perfect for a chance to PR a 5K on Saturday, December 8. This is a great chance to put all my marathon training to good use in a speedier (and shorter!) race.

On Saturday, we had a huge ice storm. Snow and cold are one sort of challenge, but I'll take my chances running fast in them. A half-inch of ice is an entirely different matter.

I guess this will be a "fun run" after all, if the race is even able to go.