March 31, 2010 3:22 PM

"Does Not Play Well With Others"

Today I ran across a recent article by Brian Hayes on his home-baked graphics. Readers compliment him all the time on the great graphics in his articles. How does he do it? they ask. The real answer is that he cares what they look like and puts a lot of time into them. But they want to know what tools he uses. The answer to that question is simple: He writes code!

His graphics code of choice is PostScript. But, while PostScript is a full-featured postfix programming language, it isn't the sort of language that many people want to write general-purpose code in. So Hayes took the next natural step for a programmer and built his own language processor:

... I therefore adopted the modus operandi of writing a program in my language of choice (usually some flavor of Lisp) and having that program write a PostScript program as its output. After doing this on an ad hoc basis a few times, it became clear that I should abstract out all the graphics-generating routines into a separate module. The result was a program I named lips (for Lisp-to-PostScript).

Most of what lips does is trivial syntactic translation, converting the parenthesized prefix notation of Lisp to the bracketless postfix of PostScript. Thus when I write (lineto x y) in Lisp, it comes out x y lineto in PostScript. The lips routines also take care of chores such as opening and closing files and writing the header and trailer lines required of a well-formed PostScript program.
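To make the translation Hayes describes concrete, here is a minimal sketch in Python. It is not his lips code, which is written in Lisp. Nested Python lists stand in for Lisp forms, and the names `to_postfix` and `emit_program` are hypothetical. The `%!PS` header and `showpage` trailer are assumptions about what a minimal well-formed program needs, not details taken from his article.

```python
def to_postfix(form):
    """Translate a prefix form into a PostScript-style postfix string.

    `form` is either an atom (a string or number) or a list
    [operator, arg1, arg2, ...] standing in for (operator arg1 arg2 ...).
    """
    if not isinstance(form, list):
        return str(form)
    operator, *args = form
    # Arguments come first, each translated recursively; the operator goes last.
    parts = [to_postfix(arg) for arg in args]
    parts.append(str(operator))
    return " ".join(parts)


def emit_program(forms):
    """Wrap translated forms in an assumed minimal header and trailer."""
    lines = ["%!PS"]                       # assumed header line
    lines.extend(to_postfix(form) for form in forms)
    lines.append("showpage")               # assumed trailer line
    return "\n".join(lines)


if __name__ == "__main__":
    print(to_postfix(["lineto", "x", "y"]))
    print(emit_program([["moveto", 0, 0], ["lineto", 72, 72], ["stroke"]]))
```

Because the translation is structurally recursive, a nested form such as `["lineto", ["add", "x", 10], "y"]` comes out as `x 10 add y lineto` with no extra work.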

Programmers write code to solve problems. More often than many people, including CS students, realize, programmers write a language processor or even create a little language of their own to make solving the problem more convenient. We have been covering the idea of syntactic abstractions in my programming languages course for the last few weeks, and Hayes offers us a wonderful example.

Hayes describes his process and programs in some detail, both lips and his homegrown plotting program plot. Still, he acknowledges that the world has changed since the 1980s. Nowadays, we have more and better graphics standards and more and better tools available to the ordinary programmer -- many for free.

All of which raises the question of why I bother to roll my own. I'll never keep up -- or even catch up -- with the efforts of major software companies or the huge community of open-source developers. In my own program, if I want something new -- treemaps? vector fields? the third dimension? -- nobody is going to code it for me. And, conversely, anything useful I might come up with will never benefit anyone but me.

Why, indeed? In my mind, it's enough simply to want to roll my own. But I also live in the real world, where time is a scarce resource and the list of things I want to do grows seemingly unchecked by any natural force. Why then? Hayes answers that question in a way that most every programmer I know will understand:

The trouble is, every time I try working with an external graphics package, I run into a terrible impedance mismatch that gives me a headache. Getting what I want out of other people's code turns out to be more work than writing my own. No doubt this reveals a character flaw: Does not play well with others.

That phrase stood me up in my seat when I read it. Does not play well with others. Yep, that's me.

Still again, Hayes recognizes that something will have to give:

In any case, the time for change is coming. My way of working is woefully out of date and out of fashion.

I don't doubt that Hayes will make a change. Programmers eventually get the itch even with their homebrew code. As technology shifts and the world changes, so do our needs. I suspect, though, that his answer will not be to start using someone else's tools. He is going to end up modifying his existing code, or writing new programs altogether. After all, he is a programmer.

March 30, 2010 8:57 PM

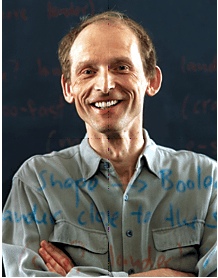

Matthias Felleisen Wins the Karl Karlstrom Award

Early today, Shriram Krishnamurthi announced on the PLT Scheme mailing list that Matthias Felleisen had won the Karl Karlstrom Outstanding Educator Award. The ACM presents this award annually

to an outstanding educator who is .. recognized for advancing new teaching methodologies, or effecting new curriculum development or expansion in Computer Science and Engineering; or making a significant contribution to the educational mission of the ACM.

A short blurb in the official announcement touts Felleisen "for his visionary and long-term contributions to K-12 outreach programs, innovative textbooks, and pedagogically motivated software". Krishnamurthi, in his message to the community born out of Felleisen's leadership and hard work, said it nicely:

Everyone on this list has been touched, directly or indirectly, by Matthias Felleisen's intellectual leadership: from his books to his software to his overarching vision and singular execution, as well as his demand that the rest of us live up to his extraordinary standards.

As an occasional Scheme programmer and a teacher of programmers, I have been touched by Felleisen's work regularly over the last 20 years. I first read "The Little Lisper" long before I knew Matthias, and it changed how I approached programming with inductive data types. I assign "The Little Schemer" as the only textbook for my programming languages course, which introduces and uses functional programming. I have always felt as if I could write my own materials to teach functional programming and the languages content of the course, but "The Little Schemer" is a tour de force that I want my students to read. Of course, we also use DrScheme and all of its tools for writing Scheme programs, though we barely scratch the surface of what it offers in our one-semester course.

We have never used Felleisen's book "How to Design Programs" in our introductory courses, but I consider its careful approach to teaching software design one of the most important intro CS innovations of the last twenty years. Back in the mid-1990s, when my department was making one of its frequent changes to the first-year curriculum, I called Matthias to ask his advice. Even after he learned that we were not likely to adopt his curriculum, he chatted with me for a while and offered me pedagogical advice and even strategic advice on making a case for a curriculum based in a principle outside any given language.

That's one of the ironic things about Felleisen's contribution: He is most closely associated with Scheme and tools built in and for Scheme, but his TeachScheme! project is explicitly not about Scheme. (The "!" is even pronounced "not", a programming pun using the standard C meaning of the symbol.) TeachScheme! uses Scheme as a tool for creating languages targeted at novices who progress through levels of understanding and complexity. Just today in class, I talked with my students about Scheme's mindset of bringing to users of a language the same power available to language creators. This makes it an ideal intellectual tool for implementing Felleisen's curriculum, even as its relative lack of popularity has almost certainly hindered adoption of the curriculum more widely.

As my department has begun to reach out to engage K-12 students and teachers, I have come to appreciate just how impressive the TeachScheme! outreach effort is. This sort of engagement requires not only a zeal for the content but also sustained labor. Felleisen has sustained both his zeal and his hard work, all the while building a really impressive group of graduate students and community supporters. The grad students all seem to earn their degrees, move on as faculty to other schools, and yet remain a part of the effort.

Closer to my own work, I continue to think about the design recipe, which is the backbone of the HtDP curriculum. I remain convinced that this idea is compatible with the notion of elementary patterns, and that the design recipe can be integrated with a pattern language of novice programs harmoniously to create an even more powerful model for teaching new programmers how to design programs.

As Krishnamurthi wrote to the PLT Scheme developer and user communities, Felleisen's energy and ideas have enriched my work. I'm happy to see the ACM honor him for his efforts.

March 29, 2010 7:25 PM

This and That, Volume 2

[A transcript of the SIGCSE 2010 conference: Table of Contents]

Some more miscellaneous thoughts from a few days at the conference...

Deja Vu All Over Again

Though it didn't reach the level of buzz, concurrency and its role in the CS curriculum made several appearances at SIGCSE this year. At a birds-of-a-feather session on concurrency in the curriculum, several faculty talked about the need to teach concurrent programming and thinking right away in CS1. Otherwise, we teach students a sequential paradigm that shapes how they view problems. We need to make a "paradigm shift" so that we don't "poison students' minds" with sequential thinking.

I closed my eyes and felt like I was back in 1996, when people were talking about object-oriented programming: objects first, early, and late, and poisoning students' minds with procedural thinking. Some things never change.

Professors on Parade

How many professors throw busy slides full of words and bullet points up on the projector, apologize for doing so, and then plow ahead anyway? Judging from SIGCSE, too many.

How many professors go on and on about the importance of active learning, then give straight lectures for 15, 45, or even 90 minutes? Judging from SIGCSE, too many.

Mismatches like these are signals that it's time to change what we say, or what we do. Old habits die hard, if at all.

Finally, anyone who thinks professors are that much different than students, take note. In several sessions, including Aho's talk on teaching compilers, I saw multiple faculty members in the audience using their cell phones to read e-mail, surf the web, and play games. Come on... We sometimes say, "So-and-so wrote the book on that", as a way to emphasize the person's contribution. Aho really did write the book on compilers. And you'd rather read e-mail?

I wonder how these faculty members didn't pay attention before we invented cell phones.

An Evening of Local Cuisine

Some people may not be all that excited by Milwaukee as a conference destination, but it is a sturdy Midwestern industrial town with deep cultural roots in its German and Polish communities. I'm not much of a beer guy, but the thought of going to a traditional old German restaurant appealed to me.

My last night in town, I had dinner at Mader's Restaurant, which dates to 1902 and features a fine collection of art, antiques, and suits of medieval armour "dating back to the 14th century". Over the years they have served political dignitaries such as the Kennedys and Ronald Reagan and entertainers such as Oliver Hardy (who, if the report is correct, ate enough pork shanks on his visit to maintain his already prodigious body weight).

I dined with Jim Leisy and Rick Mercer. We started the evening with a couple of appetizers, including herring on crostini. For dinner, I went with the Ritter schnitzel, which came with German mashed potatoes and julienne vegetables, plus a side order of spaetzle. I closed with a light creme brulee for dessert. After these delightful but calorie-laden dishes, I really should have run on Saturday morning!

Thanks to Jim and Rick for great company and a great meal.

March 28, 2010 6:15 PM

SIGCSE Day 3 -- Interdisciplinary Research

[A transcript of the SIGCSE 2010 conference: Table of Contents]

My SIGCSE seemed to come to an abrupt end Saturday morning. After a week of long days, I skipped a last run in Milwaukee and slept in a bit. The front desk messed up my bill, so I spent forty-five minutes wangling for my guaranteed rate. As a result, I missed the Nifty Assignments session and arrived just in time to meet with colleagues at the exhibits during the morning break. This left me time for one last session before my planned pre-luncheon departure.

I chose to attend the special session called Interdisciplinary Computing Education for the Challenges of the Future, with representatives of the National Science Foundation who have either carried out funded interdisciplinary research or who have funded and managed interdisciplinary programs. The purpose of the session was to discuss...

... the top challenges and potential solutions to the problem of educating students to develop interdisciplinary computing skills. This includes a computing perspective to interdisciplinary problems that enables us to think deeply about difficult problems and at the same time engage well under differing disciplinary perspectives.

This session contributed to the conference buzz of computer science looking outward, both for research and education. I found it quite interesting and, of course, think the problems it discussed are central to what people in CS should be thinking about these days. I only wish my mind had been more into the talk that morning.

Three ideas stayed with me as my conference closed:

- One panelist made a great comment in the spirit of looking outward. Paraphrase: While we in CS argue about what "computational thinking" means, we should embrace the diversity of computational thinking done out in the world and reach out to work with partners in many disciplines.

- Another panelist commented on the essential role that computing plays in other disciplines. He used biology as his example. Paraphrase: To be a biologist these days requires that you understand simulation, modeling, and how to work with large databases. Working with large databases is the defining characteristic of social science these days.

- Many of the issues that challenge computer scientists who want to engage in interdisciplinary research of this sort are ones we have encountered for a long time. For instance, how can a computer scientist find the time to gain all of the domain knowledge she needs?

Other challenges follow from how people on either side of the research view the computer scientist's role. Computer science faculties that make tenure and promotion decisions often do not see research value in interdisciplinary research. The folks on the applied side often contribute to this by viewing the computer scientist as a tool builder or support person, not as an integral contributor to solving the research problem. I have seen this problem firsthand while helping members of my department's faculty try to contribute to projects outside of our department.

This panel was a most excellent way to end my conference, with many thoughts about how to work with CS colleagues to develop projects that engage colleagues in other disciplines.

Pretty soon after the close of this session I was on the road home, where I repacked my bags and headed off for a few days of spring break with my family in St. Louis. This trip was a wonderful break, though ten days too early to see my UNI Panthers end their breakout season with an amazing run.

March 24, 2010 7:42 PM

SIGCSE Day 2 -- Al Aho on Teaching Compiler Construction

[A transcript of the SIGCSE 2010 conference: Table of Contents]

Early last year, I wrote a blog entry about using ideas from Al Aho's article, Teaching the Compilers Course, in the most recent offering of my course. When I saw that Aho was speaking at SIGCSE, I knew I had to go. As Rich Pattis told me in the hallway after the talk, when you get a chance to hear certain people speak, you do. Aho is one of those guys. (For me, so is Pattis.)

The talk was originally scheduled for Thursday, but persistent fog over southeast Wisconsin kept several people from arriving at the conference on time, including Aho. So the talk was rescheduled for Friday. I still had to see it, of course, so I skipped the attention-grabbing "If you ___, you might be a computational thinker".

Aho's talk covered much of the same ground as his inroads paper, which gave me the luxury of being able to listen more closely to his stories and elaborations than to the details. The talk did a nice job of putting the compiler course into its historical context and tried to explain why we might well teach a course very different -- yet in many ways similar -- to the course we taught forty, twenty-five, or even ten years ago.

He opened with lists of the top ten programming languages in 1970 and 2010. There was no overlap, which introduced Aho's first big point: the landscape of programming languages has changed in a big way since the beginning of our discipline, and there have been corresponding changes in the landscape of compilers. The dimensions of change are many: the number of languages, the diversity of languages, the number and kinds of applications we write. The growth in number and diversity applies not only to the programming languages we use, which are the source language to a compiler, but also to the target machines and the target languages produced by compilers.

From Aho's perspective, one of the most consequential changes in compiler construction has been the rise of massive compiler collections such as gcc and LLVM. In most environments, writing a compiler is no longer a matter of "writing a program" as much as a software engineering exercise: work with a large existing system, and add a new front end or back end.

So, what should we teach? Syntax and semantics are fairly well settled as a matter of theory. We can thus devote time to the less mathematical parts of the job, such as the art of writing grammars. Aho noted that in the 2000s, parsing natural languages is mostly a statistical process, not a grammatical one, thanks to massive databases of text and easy search. I wonder if parsing programming languages will ever move in this direction... What would that mean in terms of freer grammar, greater productivity, or confusion?

With the availability of modern tools, Aho advocates an agile "grow a language" approach. Using lex and yacc, students can quickly produce a compiler in approximately 20 lines of code. Due to the nature of syntax-directed translation, which is closely related to structural recursion, we can add new productions to a grammar with relative ease. This enables us to start small, to experiment with different ideas.
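As a rough illustration of the "grow a language" idea, here is a small sketch in Python. It swaps in a hand-written recursive-descent parser for lex and yacc, so it shows the spirit of syntax-directed translation rather than Aho's actual tooling. The grammar, the class name `Parser`, and the function names are all hypothetical. Each grammar production maps to one method, and the semantic action (here, evaluation) sits right beside the syntax it belongs to.

```python
import re

# Tokens for the starting language: integers, + - *, and parentheses.
TOKEN = re.compile(r"\s*(\d+|[-+*()])")


def tokenize(text):
    """Split the input into tokens, rejecting anything outside the language."""
    text = text.rstrip()
    tokens, pos = [], 0
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if match is None:
            raise SyntaxError(f"unexpected character at position {pos}")
        tokens.append(match.group(1))
        pos = match.end()
    return tokens


class Parser:
    def __init__(self, tokens):
        self.tokens = tokens
        self.pos = 0

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def advance(self):
        token = self.peek()
        self.pos += 1
        return token

    # expr -> term (('+' | '-') term)*
    def expr(self):
        value = self.term()
        while self.peek() in ("+", "-"):
            if self.advance() == "+":
                value += self.term()
            else:
                value -= self.term()
        return value

    # term -> factor ('*' factor)*
    def term(self):
        value = self.factor()
        while self.peek() == "*":
            self.advance()
            value *= self.factor()
        return value

    # factor -> NUMBER | '(' expr ')'
    def factor(self):
        token = self.advance()
        if token == "(":
            value = self.expr()
            if self.advance() != ")":
                raise SyntaxError("expected ')'")
            return value
        if token is None or not token.isdigit():
            raise SyntaxError(f"unexpected token {token!r}")
        return int(token)


def evaluate(text):
    """Parse and evaluate one expression, requiring all input to be consumed."""
    parser = Parser(tokenize(text))
    value = parser.expr()
    if parser.peek() is not None:
        raise SyntaxError(f"unexpected token {parser.peek()!r}")
    return value
```

Growing the language is then a local change: supporting division, for example, would mean adding `/` to `TOKEN` and one more branch in `term`, leaving the rest untouched.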

The Dragon book circa 2010 adds many new topics to its previous editions. It just keeps getting thicker! It covers much more material, both breadth and depth, than can be covered in the typical course, even with graduate students. This gives instructors lots of leeway in selecting a subset around which to build a course. The second edition already covers too much material for my undergrad course, and without enough of the examples that many students need these days. We end up selecting such a small subset of the material that the price of the book is too high for the number of pages we actually use.

The meat of the talk matched the meat of his paper: the compiler course he teaches these days. Here are a few tidbits.

On the Design of the Course

- Aho claims that, through all the years, every team has delivered a working system. He attributes this to experience teaching the course and the support they provide students.

- Each semester, he brings in at least one language designer as a guest speaker, someone like Stroustrup or Gosling. I'd love to do this but don't have quite the pull, connections, or geographical advantage of Aho. I'll have to be creative, as I was the last time I taught agile software development and arranged a phone conference with Ken Auer.

- Students in the course become experts in one language: the one they create. They become much more knowledgeable in several others: the languages they use to write, build, and test their compiler.

On System Development

- Aho sizes each student project at 3,000-6,000 LOC. He uses Boehm's model to derive a team size of 5, which fits nicely with his belief that 5 is the ideal team size.

- Every team member must produce at least 500 lines of code on the project. I have never had an explicit rule about this in the past, but experience in my last two courses with team projects tells me that I should.

- Aho lets teams choose their own technology, so that they can work in the way that makes them most comfortable. One serendipitous side effect of this choice is that it requires him to stay current with what's going on in the world.

- He also allows teams to build interpreters for complex languages, rather than full-blown compilers. He feels that the details of assembly language get in the way of other important lessons. (I have not made that leap yet.)

On Language Design

- One technique he uses to scope the project is to require students to identify an essential core of their language along with a list of extra features that they will implement if time permits. In 15 years, he says, no team has ever delivered an extra feature. That surprises me.

- In order to get students past the utopian dream of a perfect language, he requires each team to write two or three programs in their language to solve representative problems in the language's domain. This makes me think of test-first design -- but of the language, not the program!

- Aho believes that students come to appreciate our current languages more after designing a language and grappling with the friction between dreams and reality. I think this lesson generalizes to most forms of design and creation.

I am still thinking about how to allow students to design their own language and still have the time and energy to produce a working system in one semester. Perhaps I could become more involved early in the design process, something Aho and his suite of teaching assistants can do, or even lead the design conversation.

On Project Management

- "A little bit of process goes a long way" toward successful delivery and robust software. The key is finding the proper balance between too much process, which stifles developers, and too little, which paralyzes them.

- Aho has experimented with different mechanisms for organizing teams and selecting team leaders. Over time, he has found it best to let teams self-organize. This matches my experience as well, as long as I keep an eye out for obviously bad configurations.

- Aho devotes one lecture to project management, which I need to do again myself. Covering more content is a siren that scuttles more student learning than it buoys.

~~~~

Aho peppered his talk with several reminiscences. He told a short story about lex and how it was extended with regular expressions from egrep by Eric Schmidt, Google CEO. Schmidt worked for Aho as a summer intern. "He was the best intern I ever had." Another interesting tale recounted one of his doctoral students' efforts to build a compiler for a quantum computer. It was interesting, yes, but I need to learn more about quantum computing to really appreciate it!

My favorite story of the day was about awk, one of Unix's great little languages. Aho and his colleagues Weinberger and Kernighan wrote awk for their own simple data manipulation tasks. They figured they'd use it to write throwaway programs of 2-5 lines each. In that context, you can build a certain kind of language and be happy. But as Aho said, "A lot of the world is data processing." One day, a colleague came in to his office, quite perturbed at a bug he had found. This colleague had written a 10,000-line awk program to do computer-aided design. (If you have written any awk, you know just how fantabulous this feat is.) In a context where 10K-line programs are conceivable, you want a very different sort of language!

The awk team fixed the bug, but this time they "did it right". First, they built a regression test suite. (Agile Sighting 1: continuous testing.) Second, they created a new rule. To propose a new language feature for awk, you had to produce regression tests for it first. (Agile Sighting 2: test-first development.) Aho has built this lesson into his compiler course. Students must write their compiler test-first and instrument their build environments to ensure that the tests are run "all of the time". (Agile Sighting 3: continuous integration.)
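The "tests first" rule can be sketched in a few lines of Python. This is only an illustration of the practice, not the awk team's actual suite. The function `sum_field` is a hypothetical stand-in for a language feature (roughly what `{ s += $2 } END { print s }` does in awk), and the cases in `REGRESSION_CASES` are made up for the example. The point is that the table of expected results exists before, and independently of, the feature it guards.

```python
def sum_field(text, field):
    """Sum the 1-based whitespace-separated `field` over every line of `text`.

    Lines with too few fields contribute nothing. Unlike awk, this sketch
    assumes the selected field is always numeric.
    """
    total = 0.0
    for line in text.splitlines():
        fields = line.split()
        if len(fields) >= field:
            total += float(fields[field - 1])
    return total


# Each case: (input text, field number, expected result).
REGRESSION_CASES = [
    ("a 1\nb 2\nc 3", 2, 6.0),      # ordinary input
    ("", 1, 0.0),                   # empty input
    ("only-one-field", 2, 0.0),     # requested field is missing
]


def run_regression(cases):
    """Run every case and return a list of the ones that fail."""
    failures = []
    for text, field, expected in cases:
        actual = sum_field(text, field)
        if actual != expected:
            failures.append((text, field, expected, actual))
    return failures


if __name__ == "__main__":
    failed = run_regression(REGRESSION_CASES)
    print("all tests pass" if not failed else f"{len(failed)} failure(s): {failed}")
```

Running the whole table on every build is what turns a pile of examples into a regression suite: a fix for one bug cannot quietly reintroduce another.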

An added feature of Aho's talk over his paper was three short presentations from members of a student team that produced PixelPower, a language which extends C to work with a particular graphics library. They shared some of the valuable insights from their project experience:

- They designed their language to have big overlap with C. This way, they had an existing compiler that they understood well and could extend.

- The team leader decided to focus the team, not try to make everyone happy. This is a huge lesson to learn as soon as you can, one the students in my last compiler course learned perhaps a bit too late. "Getting things done," Aho's students said, "is more important than getting along."

- The team kept detailed notes of all their discussions and all their decisions. Documentation of process is in many ways much more important than documentation of code, which should be able to speak for itself. My latest team used a wiki for this purpose, which was a good idea they had early in the semester. If anything, they learned that they should have used it more frequently and more extensively.

One final note to close this long report. Aho had this to say about the success of his course:

If you make something a little better each semester, after a while it is pretty good. Through no fault of my own this course is very good now.

I think Aho's course is good precisely because he adopted this attitude about its design and implementation. This attitude serves us well when designing and implementing software, too: Many iterations. Lots of feedback. Collective ownership of the work product.

An hour well spent.

Posted by Eugene Wallingford | Permalink | Categories: Computing, Software Development, Teaching and Learning

March 22, 2010 4:35 PM

SIGCSE Day 2 -- Reimagining the First Course

[A transcript of the SIGCSE 2010 conference: Table of Contents]

The title of this panel looked awfully interesting, so I headed off to it despite not knowing just what it was about. It didn't occur to me until I saw the set of speakers at the table that it would be about an AP course! As I have written before, I don't deal much with AP, though most of my SIGCSE colleagues do. This session turned out to be a good way to spend ninety minutes, both as a window into some of the conference buzz and as a way to see what may be coming down the road in K-12 computer science in a few years.

What's up? A large and influential committee of folks from high schools, universities, and groups such as the ACM and NSF are designing a new course. It is intended as an alternative to the traditional CS1 course, not as a replacement. Rather than starting with programming or mathematics as the foundation of the course, the committee is first identifying a set of principles of computing and then designing a course to teach these principles. Panel leader Owen Astrachan said that they are engineering a course, given the national scale of the project and the complexity of creating something that works at lots of schools and for lots of students.

Later, I hope to discuss the seven big ideas and the seven essential practices of computational thinking that serve as the foundation for this course, but for now you should read them for yourself. At first blush, they seem like a reasonable effort to delineate what computing means in the world and thus what high school graduates these days should know about the technology that circumscribes their lives. They emphasize creativity, innovation, and connections across disciplines, all of which can be lost when we first teach students a programming language and whip out "Hello, World" and the Towers of Hanoi.

Universities have to be involved in the design and promotion of this new course because it is intended for advanced placement, and that means that it must earn college credit. Why does the AP angle matter? Right now, because it is the only high school CS course that counts at most universities. It turns out that advanced placement into a major matters less to many parents and HS students than the fact that the course carries university credit. Placement is the #1 reason that HS students take AP courses, but university credit is not too far behind.

For this reason, any new high school CS course that does not offer college credit will be hard to sell to any K-12 school district. (This is especially true in a context where even the existing AP CS is taught in only 7% of our high schools.) That's not too high a hurdle. At the university level, it is much easier to have an AP course approved for university or even major elective credit than it is to have a course approved for advanced placement in the major. So the panel encouraged university profs in the audience to do what they can at their institutions to prepare the way.

Someone on the panel may have mentioned the possibility of having a principles-based CS AP course count as a general education course. At my school we were successful a couple of years ago at having a CS course on simulation and modeling added as one of the courses that satisfies the "quantitative reasoning" requirement in our Liberal Arts Core. I wonder how successful we could be at having a course like the new course under development count for LAC credit. Given the current climate around our core, I doubt we could get a high school AP course to count, because it would not be a part of the shared experience our students have at the university.

The most surprising part of this panel was the vibe in the room. Proposals such as this one that tinker with the introductory course in CS usually draw a fair amount of skepticism and outright opposition. This one did not. The crowd seemed quite accepting, even when the panel turned its message into one of advocacy. They encouraged audience members to become advocates for this course and for AP CS more generally at their schools. They asked us not to tear down these efforts, but to join in and help make the course better. Finally, they asked us to join the College Board, the CS Teachers Association, and the ACM in presenting a united front to our universities, high schools, and state governments about the importance and role of computing in the K-12 curriculum. The audience seemed as if it was already on board.

In closing, there were two memorable quotes from the panel. First, Jan Cuny, currently a program officer for CISE at the National Science Foundation, addressed concern that all the talk these days about the "STEM disciplines" often leaves computing out of the explicit discussion:

There is a C in STEM. Nothing will happen in the S, the T, the E, or the M without the C.

I've been telling everyone at my university this for the last several years, and most are open to the broadening of the term when they are confronted with this truth.

Second, the front-runner for syllogism of the year is this gem from Owen Astrachan. Someone in the audience asked, "If this new course is not CS1, then is it CS0?" (CS0 is a common moniker for university courses taken by non-majors before they dive into the CS major-focused CS1 course.) Thus spake Owen:

This course comes before CS1.

0 is the only number less than 1.

Therefore, this course is CS0.

This was only half of Owen's answer, but it was the half that made me laugh.

March 20, 2010 9:04 PM

SIGCSE -- What's the Buzz?

[A transcript of the SIGCSE 2010 conference: Table of Contents]

Some years, I sense a definite undercurrent to the program and conversation at SIGCSE. In 2006, it was Python on the rise, especially as a possible language for CS1. Everywhere I turned, Python was part of the conversation or a possible answer to the questions people were asking. My friend Jim Leisy was already touting a forthcoming CS1 textbook that has since risen to the top of its niche. It pays for me to talk to people who are looking into the future!

Other years, the conference is a melange of many ideas with little or no discernible unifier. SIGCSE 2008 seemed to want to be about computing with big data, but even a couple of high-profile keynote addresses couldn't put that topic onto everyone's lips. 2007 brought lots of program content about "computational thinking", but there was no buzz. Big names and big talks don't create buzz; buzz comes from the people.

This year's wanna-be was concurrency. There were papers and special sessions about its role in the undergrad curriculum, and big guns Intel, Google, and Microsoft were talking it up. But conversation in the hallways and over drinks never seemed to gravitate toward concurrency. The one place where I saw a lot of people gathering to discuss it was a BoF on Thursday night, but even then the sense was more anticipation than activity. What are others doing? Maybe next year.

The real buzz this year was CS, and CS ed, looking outward. Consistent with recent workshops like SECANT, SIGCSE 2010 was full of talk about computer science interacting with other disciplines, especially science but also the arts. Some of this talk was about how CS can affect science education, and some was about how other disciplines can affect CS education.

But there was also a panel on integrating computing research and development with R&D in other sciences. While this may look like a step outside of SIGCSE's direct sphere of interest, it is essential that we keep the sights of our CS ed efforts out in the world where our graduates work. Increasingly, this is again in applications where computing is integral to how others do their jobs.

An even bigger outward-looking buzz coalesced around CS educators working with K-12 schools, teachers, and students. A big part of this involved teaching computer science in middle schools and high schools, including AP CS. But it also involved the broader task of teaching all students about "computational thinking": what it means, how to do it, and maybe even how to write programs that automate it. Such a focus on the general education of students is real evidence of looking outward. This isn't about creating more CS majors in college, though few at SIGCSE would object to that. It's about creating college students better prepared for every major and career they might pursue.

To me, this is a sign of how we in CS ed are maturing, going from a concern primarily for our own discipline to one for computing's larger role in the world. That is an ongoing theme of this blog, it seems, so perhaps I am suffering from confirmation bias. But I found it pretty exciting to see so many people working so hard to bring computing into classrooms and into research labs as a fundamental tool rather than as a separate discipline.

March 12, 2010 9:49 PM

SIGCSE This and That, Volume 1

[A transcript of the SIGCSE 2010 conference: Table of Contents]

Day 2 brought three sessions worth their own blog entries, but it was also a busy day meeting with colleagues. So those entries will have to wait until I have a few free minutes. For now, here are a few miscellaneous observations from conference life.

On Wednesday, I checked in at the table for attendees who had pre-registered for the conference. I told the volunteer my name, and he handed me my bag: conference badge, tickets to the reception and Saturday luncheon, and proceedings on CD -- all of which cost me in the neighborhood of $150. No one asked for identification. I thought, what a trusting registration.

This reminded me of picking up my office and building keys on my first day at my current job. The same story: "Hi, I'm Eugene", and they said, "Here are your keys." When I suggested to a colleague that this was perhaps too trusting, he scoffed. Isn't it better to work at a place where people trust you, at least until we have a problem with people who violate that trust? I could not dispute that.

The Milwaukee Bucks are playing at home tonight. At OOPSLA, some of my Canadian and Minnesotan colleagues and I have a tradition of attending a hockey game whenever we are in an NHL town. I'm as big a basketball fan as they are hockey fans, so maybe I should check out an NBA game at SIGCSE? The cheapest seat in the house is $40 or so and is far from the court. I would go if I had a posse to go with, but otherwise it's a rather expensive way to spend a night alone watching a game.

SIGCSE without my buddy Robert Duvall feels strange and lonely. But he has better things to do this week: he is a proud and happy new daddy. Congratulations, Robert!

While I was writing this entry, the spellchecker on my Mac flagged www.cs.indiana.edu and suggested I replace it with www.cs.iadiana.edu. Um, I know my home state of Indiana is part of flyover country to most Americans, but in what universe is iadiana an improvement?

People, listen to me: problem-solve is not a verb. It is not a word at all. Just say solve problems. It works just fine. Trust me.

March 11, 2010 8:33 PM

SIGCSE Day One -- The Most Influential CS Ed Papers

[A transcript of the SIGCSE 2010 conference: Table of Contents]

This panel aimed to start the discussion of how we might identify which CS education papers have had the greatest influence on our practice of CS education. Each panelist produced a short list of candidates and also suggested criteria and principles that the community might use over time. Mike Clancy made explicit the idea that we should consider both papers that affect how we teach and papers that affect what we teach.

This is an interesting process that most areas of CS eventually approach. A few years ago, OOPSLA began selecting a paper from the OOPSLA conference ten years prior that had had the most influence on OO theory or practice. That turns out to be a nice starting criterion for selection: wait ten years so that we have some perspective on a body of work and an opportunity to gather data on the effects of the work. Most people seem to think that ten years is long enough to wait.

You can see the list of papers, books, and websites offered by the panelists on this page. The most impassioned proposal was Eric Roberts's tale of how much Rich Pattis's Karel the Robot affects Stanford's intro programming classes to this day, over thirty years after Rich first created Karel.

I was glad to see several papers by Eliot Soloway and his students on the list. Early in my career, Soloway had a big effect on how I thought about novice programmers, design, and programming patterns. My patterns work was also influenced strongly by Linn and Clancy's The Case for Case Studies of Programming Problems, though I do not think I have capitalized on that work as much as I could have.

Mark Guzdial based his presentation on just this idea: our discipline in general does not fully capitalize on great work that has come before. So he decided to nominate the most important papers, not the most influential. What papers should we be using to improve our theory and practice?

- Cognitive Tutors: Lessons Learned, by Anderson, Corbett, Koedinger and Pelletier (1995): the most successful educational technology project in history

- Cognitive Objectives in a LOGO Debugging Curriculum: Instruction, Learning, and Transfer, by Klahr and Carver (1988): teaching students how to solve problems, not how to program

- Usability Analysis of Visual Programming Environments, by Green and Petre (1996): recent work has shown that visual programming is easier for beginners to learn, and the knowledge gained transfers to textual programming

I know Anderson's cognitive tutors work well, from the mid-1990s when I was preparing to move my AI research toward intelligent tutoring systems. The depth and breadth of that work is amazing.

Some of my favorite papers showed up as runners-up on various lists, including Gerald Weinberg's classic The Psychology of Programming. But I was especially thrilled when, in the post-panel discussion, Max Hailperin suggested Robert Floyd's Turing Award lecture, The Paradigms of Programming. I think this is one of the all-time great papers in CS history, with so many important ideas presented with such clarity. And, yes, I'm a fan.

March 11, 2010 7:47 PM

SIGCSE Day One -- What Should Everyone Know about Computation?

[A transcript of the SIGCSE 2010 conference: Table of Contents]

This afternoon session was a nice follow up to the morning session, though it had a focus beyond the interaction of computation and the sciences: What should every liberally-educated college graduate know about computation? This almost surely is not the course we start CS majors with, or even the course we might teach scientists who will apply computing in a technical fashion. We hope that every student graduates with an understanding of certain ideas from history, literature, math, and science. What about computation?

Michael Goldweber made an even broader analogy in his introduction. In the 1800s, knowledge about farming was pervasive throughout society, even among non-farmers. This was important for people, even city folk, to understand the world in which they lived. Just as agriculture once dominated our culture, so does technology now. To understand the world in which they live, people these days need to understand computation.

Ultimately, I found this session disappointing. We heard a devil's advocate argument against teaching any sort of "computer literacy"; a proposal that we teach all students what amounts to an applied, hand-waving algorithms course; and a course that teaches abstraction in contexts that connect with students. There was nothing wrong with these talks -- they were all entertaining enough -- but they didn't shed much new light on what is a difficult question to answer.

Henry Walker did say a few things that resonated with me. One, he reminded us that there is a difference between learning about science and doing science. We need to be careful to design courses that do one of these well. Two, he tried to explain why computer science is the right discipline for teaching problem solving as a liberal art, such as how a computer program can illustrate the consequences of specific choices, the interaction of effects, and especially the precision with which we must use language to describe processes in a computer program. Walker was the most explicit of the panelists in treating programming as fundamental to what we offer the world.

In a way unlike many other disciplines, writing programs can affect how we think in other areas. A member of the audience pointed out that CS also fundamentally changes other disciplines by creating new methodologies that are unlike anything that had been practical before. His example was the way in which Google processes and translates language. Big data and parallel processing have turned the world of linguistics away from the Chomskian approach and toward statistical models of understanding and generating language.

March 11, 2010 7:04 PM

SIGCSE Day One -- Computation and The Sciences

[A transcript of the SIGCSE 2010 conference: Table of Contents]

I chose this session over a paper session on Compilers and Languages. I can always check those papers out in the proceedings to see if they offer any ideas I can borrow. This session connects back to my interests in the role of computing across all disciplines, and especially to my previous attendance at the SECANT workshops on CS and science in 2007 and 2008. I'm eager to hear about how other schools are integrating CS with other disciplines, doing data-intensive computing with their students, and helping teachers integrate CS into their work. Two of these talks fit this bill.

In the first, Ali Erkan talked about the need to prepare today's students to work on large projects that span several disciplines. This is more difficult than simply teaching programming languages, data structures, and algorithms. It requires students to have disciplinary expertise beyond CS, the ability to do "systems thinking", and the ability to translate problems and solutions across the cultural boundaries of the disciplines. A first step is to have students work on problems that are bigger than the students of any single discipline can solve. (Astrachan's Law marches on!)

Erkan then described an experiment at Ithaca College in which four courses run as parallel threads against a common data set of satellite imagery: ecology, CS, thermodynamics, and calculus. Students from any course can consult students in the other courses for explanations from those disciplines. Computer science students in a data structures course use the data not only to solve the problem but also to illustrate ideas, such as memory usage of depth-first and breadth-first searches of a grid of pixels. They can also apply more advanced ideas, such as data analysis techniques to smooth curves and generate 3D graphs.
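To make the memory-usage idea concrete, here is a minimal sketch of my own, not code from the Ithaca courses. It walks a blank grid of pixels depth-first and breadth-first and records the largest number of pixels waiting on the stack or queue at any moment. The function names, the 4-connected neighborhood, and the starting corner are all assumptions chosen for illustration.

```python
from collections import deque


def neighbors(cell, width, height):
    """Yield the 4-connected neighbors of a pixel that lie inside the grid."""
    x, y = cell
    for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
        if 0 <= nx < width and 0 <= ny < height:
            yield nx, ny


def dfs_peak(width, height):
    """Depth-first walk from (0, 0); return (pixels visited, peak stack size)."""
    stack = [(0, 0)]
    visited = set()
    peak = 1
    while stack:
        peak = max(peak, len(stack))
        cell = stack.pop()
        if cell in visited:  # a pixel can be pushed more than once
            continue
        visited.add(cell)
        stack.extend(n for n in neighbors(cell, width, height) if n not in visited)
    return len(visited), peak


def bfs_peak(width, height):
    """Breadth-first walk from (0, 0); return (pixels visited, peak queue size)."""
    queue = deque([(0, 0)])
    seen = {(0, 0)}
    peak = 1
    while queue:
        peak = max(peak, len(queue))
        cell = queue.popleft()
        for n in neighbors(cell, width, height):
            if n not in seen:
                seen.add(n)
                queue.append(n)
    return len(seen), peak


if __name__ == "__main__":
    for size in (8, 32, 128):
        print(size, "DFS:", dfs_peak(size, size), "BFS:", bfs_peak(size, size))
```

Running it on a few grid sizes lets students watch how each peak grows as the image gets larger, which is the kind of comparison a satellite image makes tangible.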

I took away two neat points from this talk. The first was a link to The Cryosphere Today, a wonderful source of data on arctic and antarctic ice coverage for students to work with. The second was a reminder that writing programs to solve a problem or illustrate a data set helps students to understand the findings of other sciences. Raw data become real for them in writing and running their code.

In the second paper, Craig Struble described a three-day workshop for introducing computer science to high school science teachers. Struble and his colleagues at Marquette offered the workshop primarily for high school science teachers in southeast Wisconsin, building on the ideas described in A Novel Approach to K-12 CS Education: Linking Mathematics and Computer Science. The workshop had four kinds of sessions:

- tools: science, simulation, probability, Python, and VPython

- content: mathematics, physics, chemistry, and biology

- outreach: computing careers, lesson planning

- fun: CS unplugged activities, meals and other personal interaction with the HS teachers

This presentation echoed some of what we have been doing here. Wisconsin K-12 education presents the same challenge that we face in Iowa: there are very few CS courses in the middle- or high schools. The folks at Marquette decided to attack the challenge in the same way we have: introduce CS and the nebulous "computational thinking" through K-12 science classes. We are planning to offer a workshop for middle- and high school teachers. We are willing to reach an audience wider than science teachers and so will be showing teachers how to use Scratch to create simulations, to illustrate math concepts, and even to tell stories.

I am also wary of one of the things the Marquette group learned in follow-up with the teachers who attended their workshop. Most teachers liked it and learned a lot, but many are not able to incorporate what they learned into their classes. Some face time constraints from a prescribed curriculum, while others are limited by miscellaneous initiatives external to their curriculum that are foisted on them by their school. That is a serious concern for us as we try to help teachers do cool things with CS that change how they teach their usual material.

March 10, 2010 9:15 PM

SIGCSE DAY 0 -- New Educators Roundtable

[A transcript of the SIGCSE 2010 conference: Table of Contents]

I spent most of my afternoon at the New Educators Roundtable, as one of the veteran faculty seeding and guiding conversation. The veteran team came from big schools like Carnegie-Mellon, Stanford, and Washington, medium-sized schools like Creighton and UNI, and a small liberal-arts college, Union. The new educators themselves came from this range of schools and more (one teaches at Milwaukee Area Technical College up the street) and were otherwise an even more mixed lot, ranging from undergrads to university instructors with several years' experience. The one thing they all have in common is a remarkable passion for teaching. They inspired this old-timer with their energy for 100-hour work weeks and their desire to do great things in the classroom.

The conversation ranged as far and as wide as the participants' backgrounds and experience. Leaders Julie Zelenski and Dave Reed wisely planned not to impose much structure on the workshop, instead letting the participants drive the conversation with questions and observations. We elders interjected with stories and observations of our own -- and even a question or two of our own, which let the young ones know that they'll still be learning the craft of teaching when they get old like us.

I did end up with one new item for my list of books to read: Mindset, by Carol Dweck. It came up in a discussion about how to help students overcome an unhealthy focus on grades, which eventually turned into a discussion about student motivation and attitudes about what it takes to succeed in CS. As one elder summarized nicely, "It's not about talent. It's about work."

March 10, 2010 8:35 PM

SIGCSE DAY 0 -- Media Computation Workshop

[A transcript of the SIGCSE 2010 conference: Table of Contents]

I headed to SIGCSE a day early this year in order to participate in a couple of workshops. The first draw was Mark Guzdial's and Barbara Ericson's workshop using media computation to teach introductory computing to both CS majors and non-majors. I have long been a fan of this work but have never seen them describe it. This seemed like a great chance to learn a little from first principles and also to hear about recent developments in the media comp community.

Because I taught a CS1 course in Java, using media comp four years ago, I was targeted by other media comp old-timers as a media comp old-timer. They decided, with Mark's blessing, to run a parallel morning session with the goal of pooling experience and producing a resource of value to the community.

When the moment came for the old-timers to break out on their own, I packed up my laptop, stood to leave -- and stopped. I felt like spending the morning as a beginner. This was not an entirely irrational decision. First, while I have done Java media comp, I have never worked with the original Python materials or the JES programming environment students use to do media comp in Python. Second, I wanted to see Mark present the material -- material he has spent years developing and for which he has great passion. I love to watch master teachers in action. Third, I wanted to play with code!

Throughout the morning, I diddled in JES with Python code to manipulate images, doing things I've done many times in Java. It was great fun. Along the way, I picked up a few nice quotes, ideas, and background facts:

- Mark: "For liberal arts students and business students, the computer is not a tool of calculation but a tool of communication."

- The media comp data structures book is built largely on explaining the technology needed to create the wildebeest stampede in The Lion King. (Check out this analysis, which contains a description of the scene in the section "Building the Perfect Wildebeests".)

- We saw code that creates a grayscale version of an image attuned to human perception. The value used for each color in a pixel weights its original values as 0.299*red + 0.587*green + 0.114*blue. This formula reinforces the idea that there are an infinite number of weightings we can use to create grayscale. There are, of course, only a finite number of grayscale versions of an image, though that number is very large: 256 raised to a power equal to the number of pixels in the image.

- After creating several Python methods that modify an image, non-majors eventually bump into the need to return a value, often a new image. Deciding when a function should return a value can be tough, especially for non-CS folks. Mark uses this rule of thumb to get them started: "If you make an image in the method, return it."

- Mark and Barb use Making of "The Matrix" to take the idea of chromakey beyond the example everyone seems to know, TV weather forecasters.

- Using mirroring to "fix" a flawed picture leads to a really interesting liberal arts discussion: How do you know when a picture is fake? This is a concept that every person needs to understand these days, and understanding the computations that can modify an image enables us to understand the issues at a much deeper level.

- Mark showed an idea proposed to him by students at one of his workshops for minority high school boys: when negating an image, change the usual 255 upper bound to something else, say, 180. This forces many of the resulting values to 0 and behaves like a strange posterize function!
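Two of the ideas in this list are easy to try outside of JES. What follows is a small sketch of my own in plain Python, not the workshop's code: pixels are assumed to be (red, green, blue) tuples with components from 0 to 255, and the function names are made up for illustration. The grayscale weights are the widely used luma weights of 0.299 for red, 0.587 for green, and 0.114 for blue. The negation function takes the upper bound as a parameter and clamps negative results to 0, which is one plausible reading of the students' idea.

```python
def luminance(red, green, blue):
    """Perceptually weighted gray level for one RGB pixel (components 0-255)."""
    return round(0.299 * red + 0.587 * green + 0.114 * blue)


def grayscale(pixels):
    """Return a new list of pixels in which every component is the gray level."""
    result = []
    for red, green, blue in pixels:
        gray = luminance(red, green, blue)
        result.append((gray, gray, gray))
    return result


def negate(pixels, upper=255):
    """Negate each component against `upper`, clamping negative results to 0."""
    return [tuple(max(0, upper - c) for c in pixel) for pixel in pixels]


if __name__ == "__main__":
    picture = [(255, 0, 0), (0, 255, 0), (0, 0, 255), (200, 100, 0)]
    print(grayscale(picture))
    print(negate(picture))             # the usual negative
    print(negate(picture, upper=180))  # bright components collapse to 0
```

Both functions build and return a new list of pixels, in keeping with Mark's rule of thumb: if you make an image in the method, return it.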

I also learned about Susan Menzel's work at Indiana University to port media computation to Scheme. This is the second such project I've heard of, after Sam Rebelsky's work at Grinnell College connecting Scheme to Gimp.

Late in the morning, we moved on to sound. Mark demonstrated some wonderful tools for playing with and looking at sound. He whistled, sang, hummed, and played various instruments into his laptop's microphone, and using their MediaTools (written in Squeak) we could see the different mixes of tones available in the different sounds. These simple viewers enable us to see that different instruments produce their own patterns of sounds. As a relative illiterate in music, I only today understood how it is that different musical instruments can produce sounds of such varied character.

The best quote of the audio portion of the morning was, "Your ears are all about logarithms." Note systems built on halving and doubling of frequencies across sets of notes are not an artifact of culture but an artifact of how the human ear works!
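A few lines of Python show what the logarithm claim means in numbers. This is my own sketch, not something from the workshop. It assumes a reference pitch of 440 Hz and doubles or halves it to move by octaves. The frequencies spread out quickly, but their base-2 logarithms are evenly spaced, which matches the way we hear octaves as equal steps.

```python
import math

A4 = 440.0  # assumed reference pitch in Hz


def octave_shift(freq, octaves):
    """Move a frequency up (positive) or down (negative) by whole octaves."""
    return freq * 2 ** octaves


# Five notes, each one octave above the previous one.
freqs = [octave_shift(A4, k) for k in range(-2, 3)]

# Distance between neighboring notes, measured as a base-2 logarithm.
steps = [math.log2(high / low) for low, high in zip(freqs, freqs[1:])]

if __name__ == "__main__":
    print(freqs)
    print(steps)
```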

This was an all-day workshop, but I also had a role as a sage elder at the New Educators Roundtable in the afternoon, so I had to duck out for a few hours beginning with lunch. I missed out on several cool presentations, including advanced image processing ideas such as steganography and embossing, but did get back in time to hear how people are now using media computation to teach data structures ideas such as linked lists and graphs. Even with a gap in the day, this workshop was a lot of fun, and valuable as we consider expanding my department's efforts to teach computing to humanities students.

March 10, 2010 7:40 PM

Notes on SIGCSE 2010: Table of Contents

The set of entries cataloged here records some of my thoughts and experiences at SIGCSE 2010, in Milwaukee, Wisconsin, March 10-13. I'll update it as I post new essays about the conference.

Primary entries:

- SIGCSE Day 0

- SIGCSE Day 1

- SIGCSE Day 2

  - Carl Wiemann on Teaching Science -- including CS -- like a scientist

  - Al Aho on Teaching Compiler Construction ... in the 21st century

  - Reimagining the First Year of Computing ... in the form of a new AP CS course

- SIGCSE Day 3

  - Interdisciplinary Research ... in which CS meets the sciences once again

Ancillary entries:

- What's the Buzz? ... it's out there

- This and That, Volume 1

- This and That, Volume 2 ... in which we remember profs are like everyone else

- Running on the Road: Milwaukee, Wisconsin ... in which Eugene tries to put in a few miles

Posted by Eugene Wallingford | Permalink | Categories: Computing, Running, Software Development, Teaching and Learning

March 08, 2010 8:41 PM

A Knowing-and-Doing Gathering at SIGCSE?

I'm off tomorrow to SIGCSE. I'm looking forward to several events, among them the media computation workshop, the New Educators Roundtable, several sessions on programming languages and compilers, and especially a keynote address by physics Nobel laureate Carl Wiemann, who has lots to say about using science to teach science. It should be a busy and fun week.

A couple of readers have indicated interest in visiting with me over a coffee break at the conference. Reader Matthew Hertz suggested something more: an informal meeting of Knowing and Doing readers. The lack of comments on this blog notwithstanding, I love hearing from readers, whether they have ideas to share or concerns with my frequently sketchy logic. As a reader myself, I often like to put a face on the authors I read. A few readers of this blog feel the same. My guess is that readers of my blog probably have a lot in common, and they might gain as much from meeting each other as meeting me!

So. If you are interested in meeting up with me at SIGCSE, partaking in an informal gathering of Knowing and Doing readers, or both, drop me a line by e-mail or on Twitter @wallingf. I'll gauge interest and let everyone know the verdict. I'm sure that, if there's interest, we can find a time and space to connect.

March 07, 2010 5:45 PM

Programming as Inevitable Consequence

My previous entry talked about mastering tools and improving process on the road to achievement. Garry Kasparov wrote of "programming yourself" as the way to make our processes better. To excel, we must program ourselves!

One way to do that is via computation. Humans use computers all the time now to augment their behavior. Chessplayers are a perfect example. Computers help us do what we do better, and sometimes they reconfigure us, changing who we are and what we do. Reconfigured well, a person or group of people can push their capabilities beyond even what human experts can do -- alone, together, or with computational help.

But what about our tools? How many chessplayers, or any other people for that matter, program their computers these days as a means of making the tools they need, or the tools they use better? This is a common lament among certain computer scientists. Ian Bogost reminds us that writing programs used to be an inevitable consequence of using computers. Computer manufacturers used to make writing programs a natural step in our mastery of the machine they sold us. They even promoted the personal computer as part of how we became more literate. Many of us old-timers tell stories of learning to program so that we could scratch some itch.

It's not obvious that we all need to be able to program, as long as the tools we need to use are created for us by others. Mark Guzdial discusses his encounters with the "user only" point of view in a recent entry motivated by Bogost's article. As Mark points out, though, the computer may be different than a bicycle and our other tools. Most tools extend our bodies, but the computer extends our minds. We can program our bodies by repetition and careful practice, but the body is not as malleable as the mind. With the right sort of interaction with the world, we seem able to amplify our minds in ways much different than what a bicycle can do for our legs.

Daniel Lemire expresses it nicely and concisely: If you understand an idea, you can implement it in software. To understand an idea is to be able to write a program. The act of writing itself gives rise to a new level of understanding, to a new way of describing and explaining the idea. But there is more than being able to write code. Having ideas and being able to program is, for so many people, a sufficient condition to want to program: Sometimes to scratch an itch; sometimes to understand better; and sometimes simply to enjoy the act.

This feeling is universal. As I wrote not long ago, computing has tools and ideas that make people feel superhuman. But there is more! As Thomas Guest reminds us, "ultimately, the power of the programmer is what matters". The tools help to make us powerful, true, but they also unleash power that is already within us.

By the way, I strongly recommend Guest's blog, Word Aligned. Guest doesn't write as frequently as some bloggers, but when he does, it is technically solid, deep, and interesting.

March 05, 2010 9:21 PM

Mastering Tools and Improving Process

Today, a student told me that he doesn't copy and paste code. If he wants to reuse code verbatim, he requires himself to type it from scratch, character by character. This way, he forces himself to confront the real cost of duplication right away. This may motivate him to refactor as soon as he can, or to reconsider copying the code at all and write something new. In any case, he has paid a price for copying and so has to take it seriously.

The human mind is wonderfully creative! I'm not sure I could make this my practice (I use duplication tactically), but it solves a very real problem and helps to make him an even better programmer. When our tools make it too easy to do something that can harm us -- such as copy and paste with wild abandon, no thought of the future pain it will cause us -- a different process can restore some balance to the world.

The interplay between tools and process came to mind as I read Clive Thompson's Garry Kasparov, cyborg this afternoon. Last month, I read the same New York Review of Books essay by chess grandmaster Garry Kasparov, The Chess Master and the Computer, that prompted Thompson's essay. When I read Kasparov, I was drawn in by his analysis of what it takes for a human to succeed, as contrasted to what makes computers good at chess:

The moment I became the youngest world chess champion in history at the age of twenty-two in 1985, I began receiving endless questions about the secret of my success and the nature of my talent. ... I soon realized that my answers were disappointing. I didn't eat anything special. I worked hard because my mother had taught me to. My memory was good, but hardly photographic. ...

Kasparov understood that, talent or no talent, success was a function of working and learning:

There is little doubt that different people are blessed with different amounts of cognitive gifts such as long-term memory and the visuospatial skills chess players are said to employ. One of the reasons chess is an "unparalleled laboratory" and a "unique nexus" is that it demands high performance from so many of the brain's functions. Where so many of these investigations fail on a practical level is by not recognizing the importance of the process of learning and playing chess. The ability to work hard for days on end without losing focus is a talent. The ability to keep absorbing new information after many hours of study is a talent. Programming yourself by analyzing your decision-making outcomes and processes can improve results much the way that a smarter chess algorithm will play better than another running on the same computer. We might not be able to change our hardware, but we can definitely upgrade our software.

"Programming yourself" and "upgrading our software" -- what a great way to describe how it is that so many people succeed by working hard to change what they know and what they do.

While I focused on the individual element in Kasparov's story, Thompson focused on the social side: how can we "program" a system larger than a single player? He relates one of Kasparov's stories, about a chess competition in which humans were allowed to use computers to augment their analysis. Several groups of strong grandmasters entered the competition, some using several computers at the same time. Thompson then quotes this passage from Kasparov:

The surprise came at the conclusion of the event. The winner was revealed to be not a grandmaster with a state-of-the-art PC but a pair of amateur American chess players using three computers at the same time. Their skill at manipulating and "coaching" their computers to look very deeply into positions effectively counteracted the superior chess understanding of their grandmaster opponents and the greater computational power of other participants. Weak human + machine + better process was superior to a strong computer alone and, more remarkably, superior to a strong human + machine + inferior process.

Thompson sees this "algorithm" as an insight into how to succeed in a world that consists increasingly of people and machines working together:

[S]erious rewards accrue to those who figure out the best way to use thought-enhancing software. ... The process matters as much as the software itself.

I see these two stories -- Kasparov the individual laboring long and hard to become great, and "weak human + machine + better process" conquering all -- as complements to one another, and related back to my student's decision not to copy and paste code. We succeed by mastering our tools and by caring about our work processes enough to make them better in whatever ways we can.

Posted by Eugene Wallingford | Permalink | Categories: Computing, Software Development, Teaching and Learning

March 02, 2010 7:05 PM

Working on Advice for New Faculty

Julie Zelenski and Dave Reed have invited me to serve as a "sage elder" at the New Educators Roundtable, a pre-conference workshop at SIGCSE 2010. The roundtable is "designed to mentor college and university faculty who are new to teaching". This must surely be a mistake! I may well be an elder after all these years stalking the front of a classroom, but sage? Ha. In so many ways, I feel like a beginner every time I walk to the front of a class.

Experience does teach us lessons, though, so even if I still have a lot to learn and incorporate into how I teach, I probably do have some lessons I can share with people who are just getting started. If nothing else, I can talk with new faculty about some of the mistakes I have made. Each person must live his or her own experiences, but sometimes we can plant a seed in people's minds that will germinate later, when the time is right for each person. (That sounds a lot like all teaching, actually.)

The website for the workshop will ultimately contain information from the workshop sessions themselves. To start, it will have short biographies of the elders. Actually, "biography" is a bit too formal a name for it. The header on the draft web page currently says "Bio / blurb / career highlights / anecdotes / historical fiction". Here is the retrospective falsification of my own career that I submitted:

In the fourth grade, my favorite teacher of all time told me that I would never be a teacher; I was too impatient with others. For that and many other reasons, I never expected to become a teacher when I grew up. As a graduate student doing AI at Michigan State, I was assigned to teach a few courses. I did fine, I think, but even then I planned to move into industry as a researcher and developer. Somehow, I ended up at UNI, a medium-sized public "teaching university". I've been teaching classes here since 1992. My largest section ever contained 53 students; the smallest, 4.

That is pretty much true, at least as I remember it. I included the size of my largest and smallest sections ever because many new faculty teach at big universities and will face massive CS1 and CS2 sections of several hundred students at a time. The advice I have to offer may not be as helpful in that context, so I want the people who attend the workshop to know my context. Several of my co-panelists will be able to speak more directly to those attendees. On the flip side, I have taught a long list of different courses over the years, so I can connect my experiences with many different content areas and types of course.

We elders were also asked to submit a list of "things you wish you had known" when we started teaching, as a way to jump-start our thinking, as well as that of prospective attendees. Here is a list that I brainstormed:

Be honest. Students value honesty.

Even the best students will fall down occasionally. When they do, it doesn't mean there is something wrong with them, or with you.

Give feedback on assignments promptly.

The previous advice is a specific example of something more general: Most students don't handle uncertainty well. Even students who "get it" might think they do not.

Setting draconian standards and policies does more harm to your learning environment than it buys you.

Instead, set reasonable, firm, and challenging standards. Students appreciate that they are expected to accomplish something meaningful.

A lecture or classroom activity is only as good as what it helps your students do.

Each of these could use some elaboration ("Um, you thought being dishonest with students was a good thing?"), but as a start they reflect some of what I've learned.

As I prepare further for the workshop, I plan to read through the archives of the Teaching and Learning category of this blog. There are plenty of things I have learned and forgotten over the years. I hope that I wrote a few of those things down...

I think being on the New Educators Roundtable might be as valuable for this elder as it is for the new educators. (I repeat: That sounds a lot like all teaching, actually.)