September 29, 2010 9:27 PM

The Manifest Destiny of Computer Science?

The June 2010 issue of Communications of the ACM included An Interview with Ed Feigenbaum, who is sometimes called the father of expert systems. Feigenbaum was always an ardent promoter of AI, and time doesn't seem to have made him less brash. The interview closes with the question, "Why is AI important?" The father of expert systems pulls no punches:

There are certain major mysteries that are magnificent open questions of the greatest import. Some of the things computer scientists study are not. If you're studying the structure of databases -- well, sorry to say, that's not one of the big magnificent questions.

I agree, though occasionally I find installing and configuring Rails and MySQL on my MacBook Pro to be one of the great mysteries of life. Feigenbaum is thinking about the questions that gave rise to the field of artificial intelligence more than fifty years ago:

I'm talking about mysteries like the initiation and development of life. Equally mysterious is the emergence of intelligence. Stephen Hawking once asked, "Why does the universe even bother to exist?" You can ask the same question about intelligence. Why does intelligence even bother to exist?

That is the sort of question that captivates a high school student with an imagination bigger than his own understanding of the world. Some of those young people are motivated by a desire to create an "ultra-intelligent computer", as Feigenbaum puts it. Others are motivated more by the second prize on which AI founders set their eyes:

... a very complete model of how the human mind works. I don't mean the human brain, I mean the mind: the symbolic processing system.

That's the goal that drew the starry-eyed HS student who became the author of this blog into computer science.

Feigenbaum closes his answer with one of the more bodacious claims you'll find in any issue of Communications:

In my view the science that we call AI, maybe better called computational intelligence, is the manifest destiny of computer science.

There are, of course, many areas of computer science worthy of devoting one's professional life to. Over the years I have become deeply interested in questions related to language, expressiveness, and even the science or literacy that is programming. But it is hard for me to shake the feeling, still deep in my bones, that the larger question of understanding what we mean by "mind" is the ultimate goal of all that we do.

September 27, 2010 8:09 PM

Preconception, Priming, and Learning

Earlier today, @tonybibbs tweeted about this Slate article, which describes how political marketers use the way our minds work to slant how we think about candidates. It reports on some studies from experimental psych that found evidence for a disconcerting assertion:

Reminding people of their partisan loyalties makes them more likely to buy smears about their political opponents.

Our willingness to believe in smears is intricately tied to our internal concepts of "us" and "them." It does not matter how the "us" is defined.... The moment you prompt people to see the world in terms of us and them, you instantly make their minds hospitable to slurs about people belonging to the other group.

I wondered out loud on Twitter whether knowing this about how our minds work can help us combat the effects of such manipulation and thus to act more rationally. Given that the cues in these studies were so brief as to bypass conscious thought, I am not especially hopeful.

As you might imagine, the findings of these studies concern me, not only with regard to the possibility of an informed and rational electorate but also for what it means about my own personal behavior in the political marketplace. Walking across campus this afternoon, though, it occurred to me that as much as I care for the political implications, these findings might have a more immediate effect on my life as an instructor.

Every learner comes to a classroom or to a textbook with preconceptions. As far as I know, the physics education community has done more than other science communities to study the preconceptions novice students bring to their classrooms. However, they have also learned that their intro courses tend to make things worse. We in computer science often make things worse, too, but we don't know much about how or why!

The findings about bias and priming in political communication make me wonder what the implications of bias and priming might be for learning, especially computer science. Are students' preconceptions about CS enough like the partisan loyalties people have in politics to make the findings relevant? I doubt students have the same "us versus them" mentality about technical academic subjects as about politics, but research has shown that many non-CS students think that programmers are a different sort of people than themselves. This might lead to something of a "family" effect.

If so, then the priming effect exposed in the studies might also apply in some way. My first thought was of inadvertent priming, in which we send signals unintentionally that reinforce biases against learning to program or that strengthen misconceptions about computing. I realized later that inadvertent priming could also have positive effects. That side of the continuum seems inherently less interesting to me, but perhaps it shouldn't. It is good to know what we are doing right as well as what we are doing wrong.

My second thought was of how we might intentionally prime the mind to improve learning. Intentional priming is the focus of the Slate article, due to the nefarious ways in which political operatives use it to create misinformation and influence voter behavior. We teachers are in the business of shaping minds, too, but in good ways, affecting both the content and the form of student thinking. Educators should use what scientists learn about how the human mind works to do their job more effectively. This may be an opportunity.

Cognitive psychology is the science that underlies learning and teaching. We educators should look for more ways to use it to do our jobs better.

~~~~

(I need to track down citations for some of the claims I reference above, such as studies of naive physics and studies of how non-computing and novice computing students view programmers as a different breed. If you have any at hand, I'd love to hear from you.)

September 26, 2010 7:12 PM

Competition, Imitation, and Nothingness on a Sunday Morning

Joseph Brodsky said:

Another poet who really changed not only my idea of poetry, but also my perception of the world -- which is what it's all about, ya? -- is Tsvetayeva. I personally feel closer to Tsvetayeva -- to her poetics, to her techniques, which I was never capable of. This is an extremely immodest thing to say, but, I always thought, "Can I do the Mandelstam thing?" I thought on several occasions that I succeeded at a kind of pastiche.

But Tsvetayeva. I don't think I ever managed to approximate her voice. She was the only poet -- and if you're a professional that's what's going on in your mind -- with whom I decided not to compete.

Tsvetayeva was one of Brodsky's closest friends in Russia. I should probably read some of her work, though I wonder how well the poems translate into English.

When I am out running 22 miles, I have lots of time to think. This morning, I spent a few minutes thinking about Brodsky's quote, in the world of programmers. Every so often I have encountered a master programmer whose work changes my perception of the world. I remember coming across several programs by Ward Cunningham, including his wiki, and being captivated by its combination of simplicity and depth. Years before that, the ParcPlace Smalltalk image held my attention for months as I learned what object-oriented programming really was. That collection of code seemed anonymous at first, but I later learned its history and became a fan of Ingalls, Maloney, and the team. I am sure this happens to other programmers, too.

Brodsky also talks about his sense of competition with other professional poets. From the article, it's clear that he means not a self-centered or destructive competition. He liked Tsvetayeva deeply, both professionally and personally. The competition he felt is more a call to greatness, an aspiration. He was following the thought, "That is beautiful" with "I can do that" -- or "Can I do that?"

I think programmers feel this all the time, whether they are pros or amateurs. Like artists, many programmers learn by imitating the code they see. These days, the open-source software world gives us so many options! See great code; imitate great code. Find a programmer whose work you admire consistently; imitate the techniques, the style, and, yes, the voice. The key in software, as in art, is finding the right examples to imitate.

Do programmers ever choose not to compete in Brodsky's sense? Maybe, maybe not. There are certainly people whose deep grasp of computer science ideas usually feels beyond my reach. Guy Steele comes to mind. But I think for programmers it's mostly a matter of time. We have to make trade-offs between learning one thing well or another.

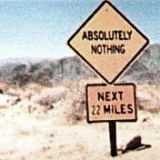

22 miles is a long run. I usually do only one to two runs that long during my training for a given marathon. Some days I start with the sense of foreboding implied by the image above, but more often the run is just there. Twenty-two miles. Run.

This time the morning was chill, 40 degrees with a bright sun. The temperature had fallen so quickly overnight that the previous day's rain had condensed in the leaves of every tree and bush, ready to fall like a new downpour at the slightest breeze.

This is my last long run before taking on Des Moines in three weeks. It felt neutral and good at the same time. It wasn't a great run, like my 20-miler two weeks ago, but it did what it needed to do: stress my legs and mind to run for about as long as the marathon will be. And I had plenty of time to think through the nothingness.

Now begins my taper, an annual ritual leading to a race. The 52 miles I logged this week will seep into my body for the next ten days or so as it acclimates to the stress. Now, I will pare back my mileage and devote a few more short and medium-sized runs to converting strength into speed.

The quote that opens this entry comes from Joseph Brodsky, The Art of Poetry No. 28, an interview in The Paris Review by Sven Birkerts in December 1979. I like very much to hear writers talk about how they write, about other writers, and about the culture of writing. This long interview repaid me several times for the time I spent reading.

September 23, 2010 10:24 PM

Distracted All the Time, or "Warped by YouTube"

A friend sent me an e-mail message last night that said, among other things, "So, you've been recruiting." I began my response with, "It's a hard habit to break." Immediately, this song was in my ear. I had an irrational desire to link to it, or mention it, or at least go play it. But I doubt Rick cared to hear it, or even hear about my sudden obsession with it, and I had too much to do to take the time to surf over to YouTube and look it up.

Something like this happens to me nearly every time I sit down to write, especially when I blog. The desire to pack my entries with a dense network of links is strong. Most of those links are useful, giving readers an opportunity to explore the context of my ideas or to explore a particular idea deeper. But every so often, I want to link to a pop song or movie reference whose connection to my entry is meaningful only to me.

YouTube did this to me. So did Hulu and Wikipedia and Google and Twitter, and the rest of the web.

What an amazing resource. What a joy to be able to meet an unexpected need or desire.

What a complete distraction.

It is hard for someone who remembers the world pre-web to overstate how amazing the resource is. These days, we are far more likely to be surprised not to find what we want than the other way around. Another friend expressed faux distress this morning when he couldn't find a video clip on-line of an old AT&T television commercial from the 70s or 80s with a Viking calling home to Mom. Shocking! The interwebs had failed him. Or Google.

Still, there are days when I wonder how much having ubiquitous information at my fingertips has changed me for the worse, too. The costs of distraction are often subtle, an undertow on the flow of conscious thought. Did I really need to think about Chicago while writing e-mail about ChiliPLoP? The Internet didn't invent distraction, but it did make a cheap, universal commodity out of it.

Ultimately, this all comes back to my own weakness, the peculiar way in which my biology and experience have wired my mind for making connections, whether useful or useless. That doesn't mean the Internet isn't an enabler.

I am in a codependent relationship with the web. And we all know that a codependent relationship can be a hard habit to break.

September 22, 2010 4:38 PM

What Agile Isn't

Traffic on the XP mailing list has been heavy for the last few weeks, with a lot of "meta" discussion spawned by messages from questioners seeking a firmer understanding of this thing we call "agile software development". I haven't had time to read even a small fraction of the messages, but I do check in occasionally. Often, I'll target in on comments from specific writers whose expertise and experience I value. Other times, I'll follow a sub-sub-plot to see a broader spectrum of ideas.

Two recent messages really stood out to me as important signposts in the long-term conversation about agile software development. First, Charlie Poole reminded everyone that Agile Isn't a "Thing".

The ongoing thread about whether [agile] is always/sometimes/not always/never/whatever "right" for a given environment seems to me to be missing something rather important. It seems to be based on the assumption that "agile" is some particular thing that we can talk about unambiguously.

It isn't.

If you come to the "agile" community looking for one answer to any question, or agreement on specific practices, or a dictum that developers or managers can use to change minds, you'll be disappointed. It's much more nebulous than that:

Agile is a set of values. They fit anywhere that those values are respected, including places where folks are trying to move the company culture away from antithetical values and towards those of agile.

If you are working with a group of people who share these values, or who are open to them, then you can "do agile" by looking for ways to bring your group's practices more in alignment with your values. You can accomplish this in almost any environment. But to get specific about agile, Charlie reminds us, you probably have to shift the conversation to specific approaches to agile development, and even specific practices.

When I use the term "agile", I try not to use it solo. I like to say "agile software development" or simply "agile development". Software configuration management guru Brad Appleton wrote the second post to catch my eye and goes a step beyond "Agile Isn't a 'Thing'" to the root of the issue: "agile" is an adjective!

"Agile" is something that you are (or are not), not something that you "do".

So simple. Thanks, Brad.

I can talk about "agility" as a noun, where it is the quality attained by "being agile". I can talk about "agile" [as] a modifier of a noun/thing, even if the "thing" it is modifying is a set of values, principles, beliefs, behaviors, etc.

He doesn't stop there, though, for which I'm glad. You can try to develop software in an agile way -- with an openness to change, typically using short iterations and continuous feedback -- and thus try to be more agile. You can adopt a set of values, but if you don't change what you do then you probably won't be any more agile.

I also liked that Brad points out it's not reasonable to expect to realize the promise of developing software in an agile way if one ignores the premise of the agile approaches. For example, executing a certain method or set of practices won't enable you to respond to change with facility unless you also take actions that keep the cost of change low and pay attention to whether or not these actions are succeeding. Most importantly, "agile" is not a synonym for happiness or success. "Being agile" may be a way to be happier as a developer or person, but we should not confuse the goal of "being agile" with the goal of being happy or successful.

The title of this entry, "What Agile Isn't", ought to sound funny, because it isn't entirely grammatically correct. If we quote the "Agile", at least then we could be honest in indicating that we are talking about a word.

Don't worry too much about whether you fit someone else's narrow definition of "agile". Just keep trying to get better by choosing -- deliberately and with care -- actions and practices that will move you in the direction of your goal. The rest will take care of itself.

September 20, 2010 4:28 PM

Alan Kay on "Real" Object-Oriented Programming

In Alan Kay's latest comment-turned-blog entry, he offers thoughts in response to Moti Ben-Ari's CACM piece about object-oriented programming. Throughout, he distinguishes "real oop" from what passes under the term these days. His original idea of object-oriented software is really quite simple to express: encapsulated modules all the way down, with pure messaging. This statement boils down to its essence many of the things he has written and talked about over the years, such as in his OOPSLA 2004 talks.

This is a way to think about and design systems, not a set of language features grafted onto existing languages or onto existing ways of programming. He laments what he calls "simulated data structure programming", which is, sadly, the dominant style one sees in OOP books these days. I see this style in nearly every OOP textbook -- especially those aimed at beginners, because those books generally start with "the fundamentals" of the old style. I see it in courses at my university, and even dribbling into my own courses.

One of the best examples of an object-oriented system is one most people don't think of as a system at all: the Internet:

It has billions of completely encapsulated objects (the computers themselves) and uses a pure messaging system of "requests, not commands", etc.

Distributed client-server apps make good examples and problems in courses on OO design precisely because they separate control of the client and the server. When we write OO software in which we control both sides of the message, it's often too tempting to take advantage of how we control both objects and to violate encapsulation. These violations can be quite subtle, even when we take into account idioms such as access methods and the Law of Demeter. To what extent does one component depend on how another component does its job? The larger the answer, the more coupled the components.

Encapsulation isn't an end unto itself, of course. Nor are other features of our implementation:

The key to safety lies in the encapsulation. The key to scalability lies in how messaging is actually done (e.g., maybe it is better to only receive messages via "postings of needs"). The key to abstraction and compactness lies in a felicitous combination of design and mathematics.

I'd love to hear Kay elaborate on this "felicitous combination of design and mathematics"... I'm not sure just what he means!

As an old AI guy, I am happy to see Kay make reference to the Actor model proposed by Carl Hewitt back in the 1970s. Hewitt's ideas drew some of their motivation from the earliest Smalltalk and gave rise not only to Hewitt's later work on concurrent programming but also to Scheme. Kay even says that many of Hewitt's ideas "were more in the spirit of OOP than the subsequent Smalltalks."

Another old AI idea that came to my mind as I read the article was blackboard architecture. Kay doesn't mention blackboards explicitly but does talk about how messaging might be better if, instead of sending messages to specific targets, objects could "post their needs". In a blackboard system, objects capable of satisfying needs monitor the blackboard and offer to respond to a request as they are able. The blackboard metaphor maintains some currency in the software world, especially in the distributed computing world; it even shows up as an architectural pattern in Pattern-Oriented Software Architecture. This is a rich metaphor with much room for exploration as a mechanism for OOP.
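To make the "post their needs" idea concrete, here is a minimal sketch in Python. It is only an illustration under my own assumptions, not Kay's design and not the pattern as Pattern-Oriented Software Architecture specifies it. The names Blackboard, Upcaser, and Counter are hypothetical, as are the need labels "shout", "count", and "translate". The point is that the poster never names a receiver; whichever registered object says it can satisfy a need gets to respond.

```python
class Blackboard:
    """Hypothetical shared space where needs are posted, not sent to a target."""

    def __init__(self):
        self.specialists = []   # objects that watch the board
        self.needs = []         # posted needs not yet examined
        self.results = {}       # need label -> answer (None if nobody responded)

    def register(self, specialist):
        self.specialists.append(specialist)

    def post(self, need, payload):
        # The poster says what it needs, never who should do it.
        self.needs.append((need, payload))

    def run(self):
        while self.needs:
            need, payload = self.needs.pop(0)
            for specialist in self.specialists:
                if specialist.can_handle(need):
                    self.results[need] = specialist.handle(payload)
                    break
            else:
                # No specialist volunteered for this need.
                self.results[need] = None


class Upcaser:
    def can_handle(self, need):
        return need == "shout"

    def handle(self, payload):
        return payload.upper()


class Counter:
    def can_handle(self, need):
        return need == "count"

    def handle(self, payload):
        return len(payload.split())


board = Blackboard()
board.register(Upcaser())
board.register(Counter())
board.post("shout", "hello")
board.post("count", "post your needs")
board.post("translate", "hola")   # nobody on this board can do this
board.run()
print(board.results)
```

Adding a translator later would mean registering one more object; none of the code that posts needs would have to change, which is the loose coupling the metaphor is after.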

Finally, as a CS educator, I couldn't help but notice Kay repeating a common theme of his from the last decade, if not longer:

The key to resolving many of these issues lies in carrying out education in computing in a vastly different way than is done today.

That is a tall order all its own, much harder in some ways than carrying out software development in a vastly different way than is done today.

September 14, 2010 9:58 PM

Thinking About Things Your Users Don't Know

Recently, one of the software developers I follow on Twitter posted a link to 10 Things Non-Technical Users Don't Understand About Your Software. It documents the gap the author has noticed between himself as a software developer and the people who use the software he creates. A couple, such as copy and paste and data storage, are so basic that they might surprise new developers. Others, such as concurrency and the technical jargon of software, aren't all that surprising, but developers need to keep them in mind when building and documenting their systems. One, the need for back-ups, eludes even technical users. Unfortunately,

You can mention the need for back-ups in your documentation and/or in the software, but it is unlikely to make much difference. History shows that this is a lesson most people have to learn the hard way (techies included).

Um, yeah.

As I read this article, I began to think that it would be fun and enlightening to write a series of blog entries on the things that CS majors don't understand about our courses. I could start with ten as a target length, but I'm pretty sure that I can identify even more. As the author of the non-technical users paper points out, the value in such a list is most definitely not to demean the students or users. Rather, it is exceedingly useful for professors to remember that their students are not like them and to keep these differences in mind as they design their courses, create assignments, and talk with the students. Like almost everyone who interacts with people, we can do a better job if we understand our audience!

So, I'll be on the look-out for topics specific to CS students. If you have any suggestions, you know how to reach me.

After I finished reading the article, I looked back at the list and realized that many of these things are themselves things that CS majors don't understand about their courses. Consider especially these:

the jargon you use

It took me several years to understand just how often the jargon I used in class sounded like white noise to my students. I'm under no illusion that I now speak in the clearest vocabulary and that all my students understand what I'm saying as I say it. But I think about this often as I prepare and deliver my lectures, and I think I'm better than I used to be.

they should read the documentation

I used to be surprised when, on our student assessments, a student responded to the question "What could I have done better to improve my learning in this course?" with "Read the book". (Even worse, some students say "Buy the book"!) Now, I'm just saddened. I can say only so much in class. Our work in class can only introduce students to the concepts we are learning, not cover them in their entirety. Students simply must read the textbook. In upper-division courses, they may well need to read secondary sources and software documentation, too. But they don't always know that, and we need to help them know it as soon as possible.

Finally, my favorite:

the problem exists between keyboard and chair

Let me sample from the article and substitute students for users:

Unskilled students often don't realize how unskilled they are. Consequently they may blame your course (and lectures and projects and tests) for problems that are of their own making.

For many students, it's just a matter of learning that they need to take responsibility for their own learning. Our K-12 schools often do not prepare them very well for this part of the college experience. Sometimes, professors have to be sensitive in raising this topic with students who don't seem to be getting it on their own. A soft touch can do wonders with some students; with others, polite but direct statements are essential.

The author of this article closes his discussion of this topic with advice that applies quite well in the academic setting:

However, if several people have the same problem then you need to change your product to be a better fit for your users (changing your users to be a better fit to your software unfortunately not being an option for most of us).

You see, sometimes the professor's problem exists between his keyboard and his chair, too!

September 12, 2010 12:49 PM

Two Kinds of Marathon Weekend

I feel so much better right now than I did two weeks ago at this time.

Then, I had just finished a big weekend of running, with more than a marathon's worth of mileage in a little over a day. On Sunday morning, I ran my first 20-miler of the season. The day before, I ran in an 8-mile race. I had never raced at a distance other than 5K, half-marathon, and marathon.

That weekend was unusual, of course. I usually rest on the day before my long runs, but a race that was originally scheduled for last May had been postponed to that Saturday due to flooded trails. So I decided to skip my 8-mile speed workout on Friday, rest that day, run the race on Saturday, and then do my scheduled 20-miler as planned.

The race went much better than I planned. I averaged 8:00 miles. That used to be my marathon goal pace, back in 2005-2006, but after health problems over the last few years, I now consider it more of a marathon fantasy pace. I now have a much friendlier goal pace, 8:30, though that is still a challenge.

I set up the Sunday 20-miler as two loops from my house, the first 11 miles and the second nine. That way, if I were to run into trouble, I could always cut the run off at half and call it a day. I ran slowly, deliberately, and all seemed fine until 15 miles. At that point I started to dehydrate, and the last five miles were as hard a struggle as I've ever had on a training run.

The rest of the day was unpleasant: sore and feeling poorly as my body tried to shake the effects of the dehydration. I wanted to write, but didn't have the energy.

Fast forward to this week. I actually ran more miles during the work week than in any previous week of the training cycle, thirty. But I did only one speed work-out, on Wednesday: 9 miles with 7x800m repeats and 400m recoveries. I decided to take it as easy as my body wanted for Friday's nine miles, and a stiff headwind that affected 40% of the run made it easy to take it easy. On Saturday, I rested.

For this morning's run, I resurrected a 20-mile route from my previous neighborhood, an old friend who I knew would give me a good mental ride. Last evening, I made a plan to drink at specific points in the run, much as I will in the marathon, in hopes of resisting my weakness for running long without taking nutrition.

The morning started at 50 degrees, sunny with a light wind. The temperature rose steadily as I ran, but the morning remained beautiful. My old friend served me well, taking me through new scenery and giving me a new view on some familiar scenery. I warmed up slowly and soon found a steady pace I was able to maintain as the miles fell behind. The last five miles take me out to an urban lake for a couple of hilly laps and back near my house.

I broke 3:10 for the twenty miles, just under a 9:30 per mile pace. That's a good long run speed to prepare for an 8:30/mile marathon. Better still, I feel good -- tired but strong, physically and mentally. Whatever dent the first 20-miler made in my confidence, this run has begun to rebuild it. With five weeks until race day, I again believe that it is possible for me to be ready to make a credible effort.

So, my first 50-mile week of this training period is in the books. I'll probably run one more 50-mile week, and one more 20+ mile training run. I hope that my work so far has prepared me to sustain mileage comfortably and to begin picking up my pace on the longer runs.

But for the rest of today, I will rest, take a good meal with my wife and daughters, and enjoy the feeling of a single good run.

September 10, 2010 2:22 PM

Recursion, Trampolines, and Software Development Process

Yesterday I read an old article on tail calls and trampolining in Scala by Rich Dougherty, which summarizes nicely the problem of recursive programming on the JVM. Scala is a functional language, which lends itself to recursive and mutually recursive programs. Compiling those programs to run on the JVM presents problems because (1) the JVM's control stack is shallow and (2) the JVM doesn't support tail-call optimization. Fortunately, Scala supports first-class functions, which enables the programmer to implement a "trampoline" that avoids growing the stack. The resulting code is harder to understand and so to maintain, but it runs without growing the control stack. This is a nice little essay.
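For readers who have not seen a trampoline, here is a minimal sketch of the idea. It is written in Python rather than Scala, and it is not Dougherty's code; the names trampoline, is_even, and is_odd are my own. Python makes a fair stand-in because it, too, does not eliminate tail calls: CPython's default recursion limit is around a thousand frames, so the direct mutually recursive version of this pair would fail with a RecursionError long before reaching depth 100,000.

```python
def trampoline(step):
    """Keep bouncing: call each returned thunk until a non-callable value appears."""
    while callable(step):
        step = step()
    return step


def is_even(n):
    # Instead of calling is_odd directly (which would add a stack frame),
    # return a zero-argument function that will make the call later.
    return True if n == 0 else (lambda: is_odd(n - 1))


def is_odd(n):
    return False if n == 0 else (lambda: is_even(n - 1))


# The stack never grows beyond a couple of frames, however large n is.
print(trampoline(is_even(100_000)))
```

Each function returns immediately, handing the next step back to the loop in trampoline, so the recursion lives in the loop rather than on the control stack. The cost is the one the essay notes: the logic is now split between the functions and the loop that drives them. This simple version also assumes the final answer is never itself a callable.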

Dougherty's conclusion about trampoline code being harder to understand reminded me of a response by reader Edward Coffin to my July entry on CS faculty sending bad signals about recursion. He agreed that recursion usually is not a problem from a technical standpoint but pointed out a social problem (paraphrased):

I have one comment about the use of recursion in safety-critical code, though: it is potentially brittle with respect to changes made by someone not familiar with that piece of code, and brittle in a way that makes breaking the code difficult to detect. I'm thinking of two cases here: (1) a maintainer unwittingly modifies the code in a way that prevents the compiler from making the formerly possible tail-call optimization and (2) the organization moves to a compiler that doesn't support tail-call optimization from one that did.

Edward then explained how hard it is to warn the programmers that they have just made changes to the code that invalidate essential preconditions. This seems like a good place to comment the code, but we can't rely on programmers paying attention to such comments, or even that the comments will accompany the code forever. The compiler may not warn us, and it may be hard to write test cases that reliably fail when the optimization is missed. Scala's @tailrec annotation is a great tool to have in this situation!

"Ideally," he writes, "these problems would be things a good organization could deal with." Unfortunately, I'm guessing that most enterprise computing shops are probably not well equipped to handle them gracefully, either by personnel or process. Coffin closes with a pragmatic insight (again, paraphrased):

... it is quite possible that [such organizations] are making the right judgement by forbidding it, considering their own skill levels. However, they may be using the wrong rationale -- "We won't do this because it is generally a bad idea." -- instead of the more accurate "We won't do this because we aren't capable of doing it properly."

Good point. I don't suppose it's reasonable for me or anyone to expect people in software shops to say that. Maybe the rise of languages such as Scala and Clojure will help both industry and academia improve the level of developers' ability to work with functional programming issues. That might allow more organizations to use a good technical solution when it is suitable.

That's one of the reasons I still believe that CS educators should take care to give students a balanced view of recursive programming. Industry is beginning to demand it. Besides, you never know when a young person will get excited about a problem whose solution feels so right as a recursion and set off to write a program to grow his excitement. We also want our graduates to be able to create solutions to hard problems that leverage the power of recursion. We need for students to grok the Big Idea of recursion as a means for decomposing problems and composing systems. The founding of Google offers an instructive example of a recursive, inductive definition at work, as discussed in this Scientific American article on web science:

[Page and Brin's] big insight was that the importance of a page -- how relevant it is -- was best understood in terms of the number and importance of the pages linking to it. The difficulty was that part of this definition is recursive: the importance of a page is determined by the importance of the pages linking to it, whose importance is determined by the importance of the pages linking to them. [They] figured out an elegant mathematical way to represent that property and developed an algorithm they called PageRank to exploit the recursiveness, thus returning pages ranked from most relevant to least.

Much like my Elo ratings program that used successive approximation, PageRank may be implemented in some other way, but it began as a recursive idea. Students aren't likely to have big recursive ideas if we spend years giving them the impression it is an esoteric technique best reserved for their theory courses.
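To make the recursive definition concrete, here is a minimal sketch of the successive-approximation approach applied to the idea in the quoted passage. It is not Google's implementation. The function name `pagerank`, the four-page `toy_web`, the damping factor of 0.85, and the fixed iteration count are all assumptions I chose for illustration; the quoted article specifies none of them.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Approximate page importance by repeated refinement.

    `links` maps each page to the list of pages it links to.
    Every page must appear as a key, even if it links nowhere.
    The damping factor and iteration count are illustrative assumptions.
    """
    pages = list(links)
    n = len(pages)

    # Start by guessing that every page is equally important.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Each page keeps a small baseline share of importance.
        new_rank = {page: (1.0 - damping) / n for page in pages}

        for page, outgoing in links.items():
            # A page with no outgoing links spreads its importance evenly.
            targets = outgoing if outgoing else pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                # The recursive idea: a page inherits importance
                # from the pages that link to it.
                new_rank[target] += share

        rank = new_rank

    return rank


# A hypothetical four-page web: "c" is linked to by every other page.
toy_web = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
ranks = pagerank(toy_web)

for page in sorted(ranks, key=ranks.get, reverse=True):
    print(page, round(ranks[page], 3))
```

Each pass uses the previous round's estimates of importance to compute better ones, so the loop unrolls the recursive definition rather than calling itself. The definition stays recursive even though the program is not.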

So, yea! for Scala, Clojure, and all the other languages that are making recursion respectable in practice.

September 07, 2010 4:53 PM

Quick Thoughts on "Computational Thinking"

When Mark Guzdial posted his article Go to the Data, offering "two stories of (really) computational thinking", I thought for sure that I'd write something on the topic soon. Over four and a half weeks have passed, and I have not written yet. That's because, despite thinking about it on and off ever since, I still don't have anything deep or detailed to say. I'm as confused as Mark says he and Barb are, I guess.

Still, I can't seem to shake these two simple thoughts:

- Whatever else it may be, "computational thinking" has to involve, well, um, computation. As several commenters on his piece point out, lots of scientists collect and analyze data. Computer science connects data and algorithm in a way that other disciplines don't.

- If a bunch of smart, well-informed people can discuss a name like "computational thinking" for several years and still be confused, maybe the problem is with the name. Maybe it really is just another name for a kind of computer science, generalized so that we can speak meaningfully of non-CS types and non-programmers doing it. I believe strongly that computing offers a new medium for expressing and creating ideas but, as for the term computational thinking, maybe there's no there there.

I do agree with Mark that we runners can learn a lot by looking back at our running logs! An occasional short program can often expose truths that elude the naked eye.

September 06, 2010 12:45 PM

Empiricism, Bias, and Confidence

This morning, Mike Feathers tweeted a link to an old article by Donald Norman, Simplicity Is Highly Overrated, and mentioned that he disagrees with Norman. Many software folks disagreed with Norman when he first wrote the piece, too. We in software, often being both designers and users, have learned to appreciate simplicity, both functionally and aesthetically. And, as Kent Beck suggested, products such as the iPod are evidence contrary to the claim that people prefer the appearance of complexity. Norman offered examples in support of his position, too, of course, and claimed that he has observed them over many years and in many cultures.

This seems like a really interesting area for study. Do people really prefer the appearance of complexity as a proxy for functionality? Is the iPod an exception, and if so why? Are software developers different from the more general population when it comes to matters of function, simplicity, and use?

When answering these questions, I am leery of relying on self-inspection and anecdote. Norman said it nicely in the addendum to his article:

Logic and reason, I have to keep explaining, are wonderful virtues, but they are irrelevant in describing human behavior.

He calls this the Engineer's Fallacy. I'm glad Norman also mentions economists, because much of the economic theory that drives our world was created from deep analytic thought, often well-intentioned but usually without much evidence to support it, if any at all. Many economists themselves recognize this problem, as in this familiar quote:

If economists wished to study the horse, they wouldn't go and look at horses. They'd sit in their studies and say to themselves, "What would I do if I were a horse?"

This is a human affliction, not just a weakness of engineers and economists. Many academics accepted the Sapir-Whorf Hypothesis, which conjectures that our language restricts how we think, despite little empirical support for a claim so strong. The hypothesis affected work in disciplines such as psychology, anthropology, and education, as well as linguistics itself. Fortunately, others subjected the hypothesis to study and found it lacking.

For a while, it was fashionable to dismiss Sapir-Whorf. Now, as a recent New York Times article reports, researchers have begun to demonstrate subtler and more interesting ways in which the language we speak shapes how we think. The new theories follow from empirical data. I feel a lot more confident in believing the new theories, because we have derived them from more reliable data than we ever had for the older, stronger claim.

(If you read the Times article, you will see that Whorf was an engineer, so maybe the tendency to develop theories from logical analysis and sparse data really is more prominent in those of us trained in the creation of artifacts to solve problems...)

We see the same tendencies in software design. One of the things I find attractive about the agile world is its predisposition toward empiricism. Just yesterday Jason Gorman posted a great example, Reused Abstractions Principle. For me, software abstractions that we discover empirically have a head start toward confident believability over the ones we design aforethought. We have seen them instantiated in actual code. Even more, we have seen them twice, so they have already been reused -- in advance of creating the abstraction.

Given how frequently even domain experts are wrong in their forecasts of the future and their theorizing about the world, how frequently we are all betrayed by our biases and other subconscious tendencies, I prefer when we have reliable data to support claims about human preferences and human behavior. A flip but too often true way to say "design aforethought" is "make up".

September 02, 2010 10:07 PM

Creation and the Beauty of Language

I learned today that my colleague Mark Jacobson created a blog last spring, mostly as a commonplace book of quotes on which he was reflecting. While looking at his few posts, I came across this passage from Rainer Maria Rilke in his inaugural entry:

If your daily life seems poor, do not blame it; blame yourself that you are not poet enough to call forth its riches; for the Creator, there is no poverty.

There were a couple of times this week when I really needed to hear this, and reading it today was good fortune. It's also a great passage for computer programmers, who need never accept the grayness of their tools because they have the powers of a Creator.

I have long been a fan of Rilke's imagery and have quoted him before. I remember reading my first Rilke poem, in my high school German IV course. Our wonderful teacher, Frau Griffith, had the heart of a poet and asked those of us who had survived into the fourth year to read as much original German literature as we could, in order that we might come to understand more fully what it means to be German. (She was a deeply emotional woman who had escaped Nazi Germany on a train in the dead of night, just ahead of the local police.) I came to love Rilke and to be mesmerized by Kafka, whose work I have read in translation many times since. His short fiction is often surprising.

I do not remember just which Rilke poem we read first. I only remember that it showed me German could be beautiful. My German IV classmates and I were often teased by classmates who studied French and Spanish. They praised the fluidity of their languages and mocked the turgid prose of ours. We mocked them back for studying easier languages, but secretly we admitted that German sounded and looked uglier. Rilke showed us that we were wrong, that German syllables could flow as mellifluously as any other. Revelation!

Later Frau introduced us to the popular music of Udo Jürgens, and we were hooked.

Recently, I ran across a reference to Goethe's "The Holy Longing". I tracked it down in English and immediately understood its appeal. But the translation feels so clunky... The original German has a rhythm that is hard to capture. Read:

Keine Ferne macht dich schwierig,

Kommst geflogen und gebannt,

Und zuletzt, des Lichts begierig,

Bist du Schmetterling verbrannt.

That's not quite Rilke to my ears, but it feels right.

September 01, 2010 9:12 PM

The Beginning of a New Project

"Tell me you're not playing chess," my colleague said quizzically.

But I was. My newest grad student and I were sitting in my office playing a quick couple of games of progressive chess, in which I've long been interested. In progressive chess, white makes one move, then black makes two; white makes three moves, then black makes four. The game proceeds in this fashion until one of the players delivers checkmate or until the game ends in any other traditional way.
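For readers who like to see a rule as code, here is a minimal sketch of the turn structure just described. It models only the lengthening sequence of moves, not the legality of moves or how a game ends. The names `progressive_turns` and `total_moves_after` are my own, chosen for illustration.

```python
from itertools import count, islice


def progressive_turns():
    """Yield (player, number_of_moves) for each successive turn.

    White moves on odd-numbered turns and black on even-numbered ones,
    and the nth turn consists of n consecutive moves.
    """
    for turn in count(1):
        player = "white" if turn % 2 == 1 else "black"
        yield player, turn


def total_moves_after(turns):
    """Total individual moves played after the given number of turns."""
    return turns * (turns + 1) // 2


first_four = list(islice(progressive_turns(), 4))
print(first_four)
print(total_moves_after(4))
```

The total grows quadratically with the number of turns, which hints at why searching the state space for this game looks so different from searching it for classical chess.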

This may seem like a relatively simple change to the rules of the game, but the result is something that almost doesn't feel like chess. The values of the pieces change radically, as do the value of space and the meaning of protection. That's why we needed to play a couple of games: to acquaint my student with how different it is from the classical chess I know and love and have played since I was a child.

For his master's project, the grad student wanted to do something in the general area of game-playing and AI, and we both wanted to work on a problem that is relatively untouched, where a few cool discoveries are still accessible to mortals. Chess, the fruit fly of AI from the 1950s into the 1970s, long ago left the realm where newcomers could make much of a contribution. Chess isn't solved in the technical sense, as checkers is, but the best programs now outplay even the best humans. To improve on the state of the art requires specialty hardware or exquisitely honed software.

Progressive chess, on the other hand, has a funky feel to it and looks wide open. We are not yet aware of much work that has been done on it, either in game theory or automation. My student is just beginning his search of the literature and will know soon how much has been done and what problems have been solved, if any.

That is why we were playing chess in my office on a Wednesday afternoon, so that we could discuss some of the ways in which we will have to think differently about this problem as we explore solutions. Static evaluation of positions is most assuredly different from what works in classical chess, and I suspect that the best ways to search the state space will be quite different, too. After playing only a few games, my student proposed a new way to do search to capitalize on progressive chess's increasingly long sequences of moves by one player. I'm looking forward to exploring it further, giving it a try in code, and finding other new ideas!

I may not be an AI researcher first any more, but this project excites me. You never know what you will discover until you wander away from known territory, and this problem offers us a lot of unknowns.

And I'll get to say, "Yes, we are playing chess," every once in a while, too.