February 25, 2014 3:31 PM

Abraham Lincoln on Reading the Comment Section

From Abraham Lincoln's last public address:

As a general rule, I abstain from reading the reports of attacks upon myself, wishing not to be provoked by that to which I cannot properly offer an answer.

These remarks came two days after Robert E. Lee surrendered at Appomattox Court House. Lincoln was facing abuse from the North and the South, and from within his party and without.

The great ones speak truths that outlive their times.

February 22, 2014 2:05 PM

MOOCs: Have No Fear! -- Or Should We?

The Grumpy Economist has taught a MOOC and says in his analysis of MOOCs:

The grumpy response to moocs: When Gutenberg invented moveable type, universities reacted in horror. "They'll just read the textbook. Nobody will come to lectures anymore!" It didn't happen. Why should we worry now?

The calming effect of his rather long entry is mitigated by other predictions, such as:

However, no question about it, the deadly boring hour and a half lecture in a hall with 100 people by a mediocre professor teaching utterly standard material is just dead, RIP. And universities and classes which offer nothing more to their campus students will indeed be pressed.

In downplaying the potential effects of MOOCs, Cochrane seems mostly to be speaking about research schools and more prestigious liberal arts schools. Education is but one of the "goods" being sold by such schools; prestige and connections are often the primary benefits sought by students there.

I usually feel a little odd when I read comments on teaching from people who teach mostly graduate students and mostly at big R-1 schools. I'm not sure their experience of teaching is quite the same as the experience of most university professors. Consequently, I'm suspicious of the prescriptions and predictions they make for higher education, because our personal experiences affect our view of the world.

That said, Cochrane's blog spends a lot of time talking about the nuts and bolts of creating MOOCs, and his comments on fixed and marginal costs are on the mark. (He may be grumpy, but he is an economist!) And a few of his remarks about teaching apply just as well to undergrads at a state teaching university as they do to U. of Chicago's doctoral program in economics. One that stood out:

Most of my skill as a classroom teacher comes from the fact that I know most of the wrong answers as well as the right ones.

All discussions of MOOCs ultimately include the question of revenue. Cochrane reminds us that universities...

... are, in the end, nonprofit institutions that give away what used to be called knowledge and is now called intellectual property.

The question now, though, is how schools can afford to give away knowledge as state support for public schools declines sharply and relative cost structure makes it hard for public and private schools alike to offer education at a price reasonable for their respective target audiences. The R-1s face a future just as challenging as the rest of us; how can they afford to support researchers who spend most of their time creating knowledge, not teaching it to students?

MOOCs are a weird wrench thrown into this mix. They seem to taketh away as much as they giveth. Interesting times.

February 21, 2014 3:35 PM

Sticking with a Good Idea

My algorithms students and I recently considered the classic problem of finding the closest pair of points in a set. Many of them were able to produce a typical brute-force approach, such as:

    minimum ← ∞
    for i ← 1 to n do
        for j ← i+1 to n do
            distance ← sqrt((x[i] - x[j])² + (y[i] - y[j])²)
            if distance < minimum then
                minimum ← distance
                first ← i
                second ← j
    return (first, second)

Alas, this is an O(n²) process, so we considered whether we might do better with a divide-and-conquer approach. It did not look promising, though. Splitting the points into partitions doesn't let us solve the sub-problems independently. What if the closest pair straddles two partitions?

This is a common theme in computing, and problem solving more generally. We try a technique only to find that it doesn't quite work. Something doesn't fit, or a feature of the domain violates a requirement of the technique. It's tempting in such cases to give up and settle for something less.

Experienced problem solvers know not to give up too quickly. Many of the great advances in computing came under conditions just like this. Consider Leonard Kleinrock and the theory of packet switching.

In a Computing Conversations podcast published last year, Kleinrock talks about his Ph.D. research. He was working on the problem of how to support a lot of bursty network traffic on a shared connection. (You can read a summary of the podcast in an IEEE Computer column also published last year.)

His wonderful idea: apply the technique of time sharing from multi-user operating systems. The system could break all messages into "packets" of a fixed size, let messages take turns on the shared line, then reassemble each message on the receiving end. This would give every message a chance to move without making short messages wait too long behind large ones.
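To make the turn-taking idea concrete, here is a toy sketch in Python. It is only an illustration of the description above, not Kleinrock's model or any real network protocol: the function names, the packet layout (message id, sequence number, chunk), and the strict round-robin order are all assumptions made for this example.

```python
from collections import deque


def packetize(message_id, payload, size):
    """Split a payload into (message_id, sequence_number, chunk) packets."""
    return [(message_id, seq, payload[i:i + size])
            for seq, i in enumerate(range(0, len(payload), size))]


def share_line(messages, size):
    """Let messages take turns on one line, a packet at a time.

    `messages` maps a (hypothetical) message id to its payload string.
    Returns the packets in the order they cross the shared line.
    """
    # One queue of packets per message; empty payloads have nothing to send.
    queues = deque(deque(packetize(mid, payload, size))
                   for mid, payload in messages.items() if payload)
    line = []
    while queues:
        current = queues.popleft()
        line.append(current.popleft())   # this message sends one packet
        if current:                      # more to send? go to the back
            queues.append(current)
    return line


def reassemble(line):
    """Rebuild each message from its packets on the receiving end."""
    parts = {}
    for message_id, seq, chunk in line:
        parts.setdefault(message_id, []).append((seq, chunk))
    return {mid: "".join(chunk for _, chunk in sorted(chunks))
            for mid, chunks in parts.items()}
```

With `share_line({"long": "abcdefghij", "short": "xy"}, 2)`, the short message's only packet crosses the line second, right after the long message's first packet, instead of waiting behind all five of the long message's packets.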

Thus was born the idea of packet switching. But there was a problem. Kleinrock says:

I set up this mathematical model and found it was analytically intractable. I had two choices: give up and find another problem to work on, or make an assumption that would allow me to move forward. So I introduced a mathematical assumption that cracked the problem wide open.

His "independence assumption" made it possible for him to complete his analysis and optimize the design of a packet-switching network. But an important question remained: Was his simplifying assumption too big a cheat? Did it skew the theoretical results in such a way that his model was no longer a reasonable approximation of how networks would behave in the real world?

Again, Kleinrock didn't give up. He wrote a program instead.

I had to write a program to simulate these networks with and without the assumption. ... I simulated many networks on the TX-2 computer at Lincoln Laboratories. I spent four months writing the simulation program. It was a 2,500-line assembly language program, and I wrote it all before debugging a single line of it. I knew if I didn't get that simulation right, I wouldn't get my dissertation.

High-stakes programming! In the end, Kleinrock was able to demonstrate that his analytical model was sufficiently close to real-world behavior that his design would work. Every one of us reaps the benefit of his persistence every day.

Sometimes, a good idea poses obstacles of its own. We should not let those obstacles beat us without a fight. Often, we just have to find a way to make it work.

This lesson applies quite nicely to using divide-and-conquer on the closest pairs problem. In this case, we don't make a simplifying assumption; we solve the sub-problem created by our approach:

After finding a candidate for the closest pair, we check to see if there is a closer pair straddling our partitions. The distance between the candidate points constrains the area we have to consider quite a bit, which makes the post-processing step feasible. The result is an O(n log n) algorithm that improves significantly on brute force.
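Here is a minimal Python sketch of that divide-and-conquer idea. Treat it as an illustration under assumptions rather than the code from class: points are (x, y) tuples, the function names are made up for this sketch, and it returns the distance and the two points rather than the indices used in the pseudocode above. It also uses a common refinement, passing y-sorted lists back up through the recursion, so that the strip check stays linear at each level.

```python
from heapq import merge
from math import dist, inf


def brute_force(points):
    """Check every pair, O(n^2). Returns (distance, p, q)."""
    best = (inf, None, None)
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            d = dist(points[i], points[j])
            if d < best[0]:
                best = (d, points[i], points[j])
    return best


def closest_pair(points):
    """Divide-and-conquer closest pair. Returns (distance, p, q)."""
    best, _ = _closest(sorted(points))   # sort once by x (then y)
    return best


def _closest(by_x):
    """Return (best, points sorted by y) for a list already sorted by x."""
    n = len(by_x)
    if n <= 3:
        return brute_force(by_x), sorted(by_x, key=lambda p: p[1])

    mid = n // 2
    mid_x = by_x[mid][0]                 # x-coordinate of the dividing line
    best_left, left_y = _closest(by_x[:mid])
    best_right, right_y = _closest(by_x[mid:])
    best = min(best_left, best_right, key=lambda b: b[0])

    # Merge the two y-sorted halves in linear time, as in merge sort.
    by_y = list(merge(left_y, right_y, key=lambda p: p[1]))

    # Only points closer to the dividing line than the current best
    # distance can form a closer pair that straddles the partitions.
    strip = [p for p in by_y if abs(p[0] - mid_x) < best[0]]
    for i, p in enumerate(strip):
        # A constant number of y-neighbors suffices (the classic bound is 7).
        for q in strip[i + 1:i + 8]:
            if q[1] - p[1] >= best[0]:
                break
            d = dist(p, q)
            if d < best[0]:
                best = (d, p, q)
    return best, by_y
```

For example, `closest_pair([(0, 0), (5, 5), (1, 1), (9, 9), (5, 6)])` returns `(1.0, (5, 5), (5, 6))`. The strip filter is where the candidate distance "constrains the area we have to consider": everything farther than that from the dividing line is ignored.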

This algorithm, like packet switching, comes from sticking with a good idea and finding a way to make it work. This is a lesson every computer science student and novice programmer needs to learn.

There is a complementary lesson to be learned, of course: knowing when to give up on an idea and move on to something else. Experience helps us tell the two situations apart, though never with perfect accuracy. Sometimes, we just have to follow an idea long enough to know when it's time to move on.

February 19, 2014 4:12 PM

Teaching for the Perplexed and the Traumatized

On the need for empathy when writing about math for the perplexed and the traumatized, Steven Strogatz says:

You have to help them love the questions.

Teachers learn this eventually. If students love the questions, they will do an amazing amount of work searching for answers.

Strogatz is writing about writing, but everything he says applies to teaching as well, especially teaching undergraduates and non-majors. If you teach only grad courses in a specialty area, you may be able to rely on the students to provide their own curiosity and energy. Otherwise having empathy, making connections, and providing Aha! moments are a big part of being successful in the classroom. Stories trump formal notation.

This semester, I've been trying a particular angle on achieving this trifecta of teaching goodness: I try to open every class session with a game or puzzle that the students might care about. From there, we delve into the details of algorithms and theoretical analysis. I plan to write more on this soon.

February 16, 2014 10:48 AM

Experience Happens When You Keep Showing Up

You know what they say about good design coming from experience, and experience coming from bad design? That phenomenon is true of most things non-trivial. Here's an example from men's college basketball.

The University of Florida has a veteran team. The University of Kentucky has a young team. Florida's players are very good, but not generally considered to be in the same class as Kentucky's highly-regarded players. Yesterday, the two teams played a close game on Kentucky's home floor.

Once they fell behind by five with less than two minutes remaining, Kentucky players panicked. Florida players didn't. Why not? "Well, we have a veteran group here that's panicked before -- that's been in this situation and not handled it well," [Patric] Young said.

How did Florida's players maintain their composure at the end of a tight game on the road against another good team? They had been in that same situation three times before, and failed. They didn't panic this time in large part because they had panicked before and learned from those experiences.

Kentucky's starters have played a total of 124 college games. Florida's four seniors have combined to play 491. That's a lot of experience -- a lot of opportunities to panic, to guess wrong, to underestimate a situation, or otherwise to come up short. And a lot of opportunities to learn.

The young players at Kentucky hurt today. As the author of the linked game report notes, Florida's players have hurt like that before, for coming up short in much the same way, "and they used that pain to get better".

It turns out that composure comes from experience, and experience comes from lack of composure.

As a teacher, I try to convince students not to shy away from the error messages their compiler gives them, or from the convoluted code they eventually sneak past it. Those are the experiences they'll eventually look back to when they are capable, confident programmers. They just need the opportunity to learn.

Posted by Eugene Wallingford | Permalink | Categories: Patterns, Software Development, Teaching and Learning

February 14, 2014 3:07 PM

Do Things That Help You Become Less Wrong

My students and I debriefed a programming assignment in class yesterday. In the middle of class, I said, "Now for a big question: How do you know your code is correct?"

There were a lot of knowing smiles and a lot of nervous laughter. Most of them don't.

Sure, they ran a few test cases, but after making additions and changes to the code, some were just happy that it still ran. The output looked reasonable, so it must be done. I suggested that they might want to think more about testing.

This morning I read a great quote from Nathan Marz that I will share with my students:

Feedback is everything. Most of the time you're wrong, and feedback is the only way to realize your mistakes and help you become less wrong. This applies to everything.

Most of the time you're wrong. Do things that help you become less wrong. Getting feedback, early and often, is one of the best ways to do this.

A comment by a student earlier in the period foreshadowed our discussion of testing, which made me feel even better. In response to the retrospective question, "What design or programming choices went well for you?", he answered unit tests.

That set me up quite nicely to segue from manual testing into automated testing. If you aren't testing your code early and often, then manual testing is a huge improvement. But you can do even better by pushing those test cases into a form that can be executed quickly and easily, with the code doing the tedious work of verifying the output.

My students are writing code in many different languages, so I showed them testing frameworks in Ruby, Java, and Python. The code looks simple, even with the boilerplate imposed by the frameworks.
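To give a flavor of what that looks like, here is a minimal sketch using Python's built-in `unittest` module. The `median` function is a hypothetical stand-in for student code, not something from the assignment, and the test names are made up for this example.

```python
import unittest


def median(values):
    """Hypothetical function under test: the median of a non-empty list."""
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2


class MedianTest(unittest.TestCase):
    """Each method is one test case; the framework checks the output."""

    def test_odd_length(self):
        self.assertEqual(median([3, 1, 2]), 2)

    def test_even_length(self):
        self.assertEqual(median([4, 1, 3, 2]), 2.5)

    def test_does_not_modify_input(self):
        data = [3, 1, 2]
        median(data)
        self.assertEqual(data, [3, 1, 2])
```

Saved in a file, the tests can be run with `python -m unittest`, and re-run after every change. The boilerplate amounts to one import and one class; the rest is just the test cases the students were already running by hand.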

The big challenges in getting students to write unit tests are the same as for getting professionals to write them: lack of time, and misplaced confidence. I hope that a few of my students will see that the real time sink is debugging bad code and that a fear of changing code is a lack of confidence. The best way to be confident is to have evidence.

The student who extolled unit tests works in Racket and so has test cases in RackUnit. He set me up nicely for a future discussion, too, when he admitted out loud that he wrote his tests first. This time, it was I who smiled knowingly.

February 13, 2014 4:25 PM

Politics in a CS Textbook

Earlier this week, I was looking for inspiration for an exam problem in my algorithms course. I started thumbing through a text I briefly considered considering for adoption this semester. (I ended up opting for no text without considering any of them very deeply.)

The first problem I read was written with a political spin, at the expense of one of the US political parties. I was aghast. I tweeted:

This textbook uses an end-of-chapter exercise to express an opinion about a political party. I will no longer consider it for adoption.

I don't care which party is made light of or demeaned. I'm no longer interested. I don't want a political point unrelated to my course to interfere with my students' learning -- or with the relationship we are building.

In general, I don't want students to think of me in a partisan way, whether the topic is politics, religion, or anything else outside the scope of computer science. It's not my job as a CS instructor to make my students uncomfortable.

That isn't to say that I want students to think of me as bland or one-dimensional. They know me to be a sports fan and a chess player. They know I like to read books and to ride my bike. I even let them know that I'm a Billy Joel fan. All of these give me color and, while they may disagree with my tastes, none of these are likely to create distance between us.

Nor do I want them to think I have no views on important public issues of the day. I have political opinions and actually like to discuss the state of the country and world. Those discussions simply don't belong in a course on algorithms or compiler construction. In the context of a course, politics and religion are two of many unnecessary distractions.

In the end, I did use the offensive problem, but only as inspiration. I wrote a more generic problem, the sort you expect to see in an algorithms course. It included all the relevant features of the original. My problem gave the students a chance to apply what they have learned in class without any noise. The only way this problem could offend students was by forcing them to demonstrate that they are not yet ready to solve such a problem. Alas, that is an offense that every exam risks giving.

... and yes, I still owe you a write-up on possible changes to the undergraduate algorithms canon, promised in the entry linked above. I have not forgotten! Designing a new course for spring semester is a time-constrained operation.

February 12, 2014 11:49 AM

They Are Always Watching

Rand's Inbox Reboot didn't do much for me on the process and tool side of things, perhaps other than rousing a sense of familiarity. Been there. The part that stood out for me was when he talked about the ultimate effect of not being in control of the deluge:

As a leader, you define your reputation all the time. You'd like to think that you could choose the moments that define your reputation, but you don't. They are always watching and learning. They are always updating their model regarding who you are and how you lead with each observable action, no matter how large or small.

He was speaking of technical management, so I immediately thought about my time as department head and how true this passage is.

But it is also true -- crucially true -- of the relationship teachers have with their students. There are few individual moments that define how a teacher is seen by his or her students. Students are always watching and learning. They infer things about the discipline and about the teacher from every action, from every interaction. What they learn in all the little moments comes to define you in their minds.

Of course, this is even more true of parents and children. To the extent I have any regrets as a parent, it is that I sometimes overestimated the effect of the Big Moments and underestimated the effect of all those Little Moments, the ones that flow by without pomp or recognition. They probably shaped my daughters, and my daughters' perception of me, more than any particular action I took.

Start with a good set of values, then create a way of living and working that puts these values at the forefront of everything you do. Your colleagues, your students, and your children are always watching.

Posted by Eugene Wallingford | Permalink | Categories: Managing and Leading, Personal, Teaching and Learning

February 07, 2014 3:25 PM

Technology and Change at the University, Circa 1984

As I mentioned last time, I am re-reading David Lodge's novel Small World. Early on in the story, archetypal academic star and English professor Morris Zapp delivers a eulogy to the research university of the past:

The day of the individual campus has passed. It belongs to an obsolete technology -- railways and the printing press. I mean just look at this campus -- it epitomizes the whole thing: the heavy industry of the mind.

... It's huge, heavy, monolithic. It weighs about a billion tons. You can feel the weight of those buildings, pressing down the earth. Look at the Library -- built like a huge warehouse. The whole place says, "We have learning stored here; if you want it, you have to come inside and get it." Well, that doesn't apply any more.

... Information is much more portable in the modern world than it used to be. So are people. Ergo, it's no longer necessary to hoard your information in one building, or keep your top scholars corralled in one campus.

Small World was published in 1984. The technologies that had revolutionized the universities of the day were the copy machine, universal access to direct-dial telephone, and easy access to jet travel. Researchers no longer needed to be together in one place all the time; they could collaborate at a distance, bridging the distances for short periods of collocation at academic conferences.

Then came the world wide web and ubiquitous access to the Internet. Telephone and Xerox machines were quickly overshadowed by a network of machines that could perform the functions of both phone and copier, and so much more. In particular, they freed information even further from being bound to place. Take out the dated references to Xerox, and most of what Zapp has to say about universities could be spoken today:

And you don't have to grub about in library stacks for data: any book or article that sounds interesting they have Xeroxed and read at home. Or on the plane going to the next conference. I work mostly at home or on planes these days. I seldom go into the university except to teach my courses.

Now, the web is beginning to unbundle even the teaching function from the physical plant of a university and the physical presence of a teacher. One of our professors used to routinely spend hours hanging out with students on Facebook and Google+, answering questions and sharing the sort of bonhomie that ordinarily happens after class in the hallways or the student union. -10 degrees outside? No problem.

Clay Shirky recently wrote that higher education's Golden Age has ended -- long ago, actually: "Then the 1970s happened." Most of Shirky's article deals with the way the economics of universities had changed. Technology is only a part of that picture. I like how Lodge's novel shows an awareness even in the early 1980s that technology was also changing the culture of scholarship and consequently scholarship's preeminent institution, the university.

Of course, back then old Morris Zapp still had to go to campus to teach his courses. Now we are all wondering how much longer that will be true for the majority of students and professors.

~~~~

IMAGE. The cover from the first edition of David Lodge's Small World, by Wendy Edelson. http://en.wikipedia.org/wiki/File:SmallWorldNovel.jpg

February 05, 2014 4:08 PM

Eccentric Internet Holdout Delbert T. Quimby

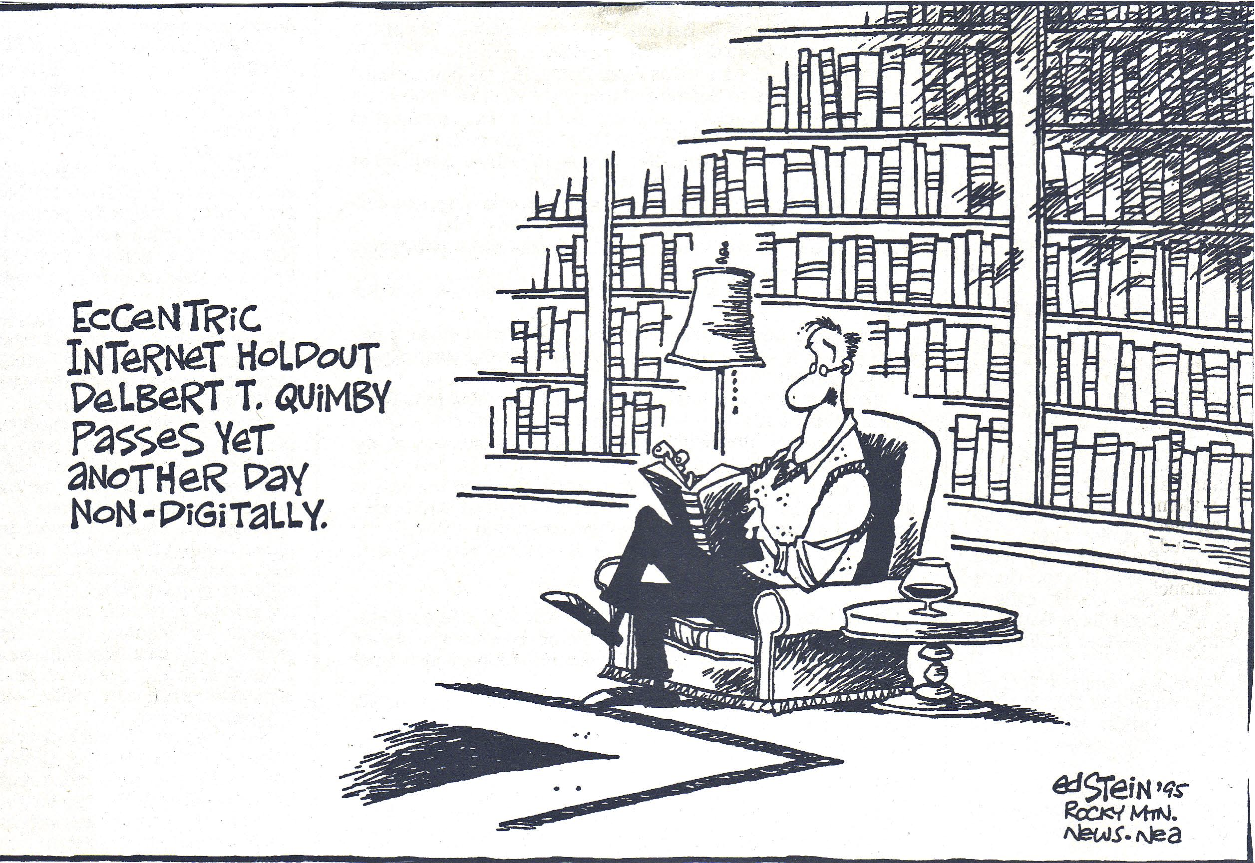

Back in a July 2006 entry, I mentioned a 1995 editorial cartoon by Ed Stein, then of the Rocky Mountain News. The cartoon featured "eccentric Internet holdout Delbert T. Quimby", contentedly passing another day non-digitally, reading a book in his den and drinking a glass of wine. It's always been a favorite of mine.

The cartoon had at least one other big fan. He looked for it on the web but had no luck finding it. When he googled the quote, though, there my blog entry was. Recently, his wife uncovered a newspaper clipping of the cartoon, and he remembered the link to my blog post. In an act of unprovoked kindness, he sent me a scan of the cartoon. So, 7+ years later, here it is:

The web really is an amazing place. Thanks, Duncan.

In 1995, being an Internet holdout was not quite as radical as it would be today. I'm guessing that most of the holdouts in 2014 are Of A Certain Age, remembering a simpler time when information was harder to come by. To avoid the Internet and the web entirely these days is to miss out on a lot of life.

Even so, I am eccentric enough still to appreciate time off-line, a good book in my hand and a beverage at my side. Like my digital devices, I need to recharge every now and then.

(Right now, I am re-reading David Lodge's Small World. It's fun to watch academics made good sport of.)

February 03, 2014 4:07 PM

Remembering Generosity

For a variety of reasons, the following passage came to mind today. It is from a letter that Jonathan Schoenberg wrote as part of the "Dear Me, On My First Day of Advertising" series on The Egotist forum:

You got into this business by accident, and by the generosity of people who could have easily been less generous with their time. Please don't forget it.

It's good for me to remind myself frequently of this. I hope I can be as generous with my time to my students and colleagues as so many of my professors and colleagues were with their time. Even when it means explaining nested for-loops again.

February 02, 2014 5:19 PM

Things That Make Me Sigh

In a recent article unrelated to modern technology or the so-called STEM crisis, a journalist writes:

Apart from mathematics, which demands a high IQ, and science, which requires a distinct aptitude, the only thing that normal undergraduate schooling prepares a person for is... more schooling.

Sigh.

On the one hand, this seems to presume that one needs neither a high IQ nor any particular aptitude to excel in any number of non-math and science disciplines.

On the other, it seems to say that if one does not have the requisite characteristics, which are limited to a lucky few, one need not bother with computer science, math or science. Best become a writer or go into public service, I guess.

I actually think that the author is being self-deprecating, at least in part, and that I'm probably reading too much into one sentence. It's really intended as a dismissive comment on our education system, the most effective outcome of which often seems to be students who are really good at school.

Unfortunately, the attitude expressed about math and science is far too prevalent, even in our universities. It demeans our non-scientists as well as our scientists and mathematicians. It also makes it even harder to convince younger students that, with a little work and practice, they can achieve satisfying lives and careers in technical disciplines.

Like I said, sigh.