March 14, 2024 12:37 PM

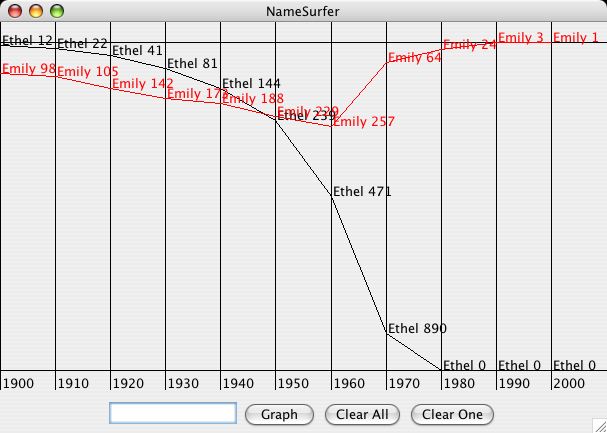

Gene Expression

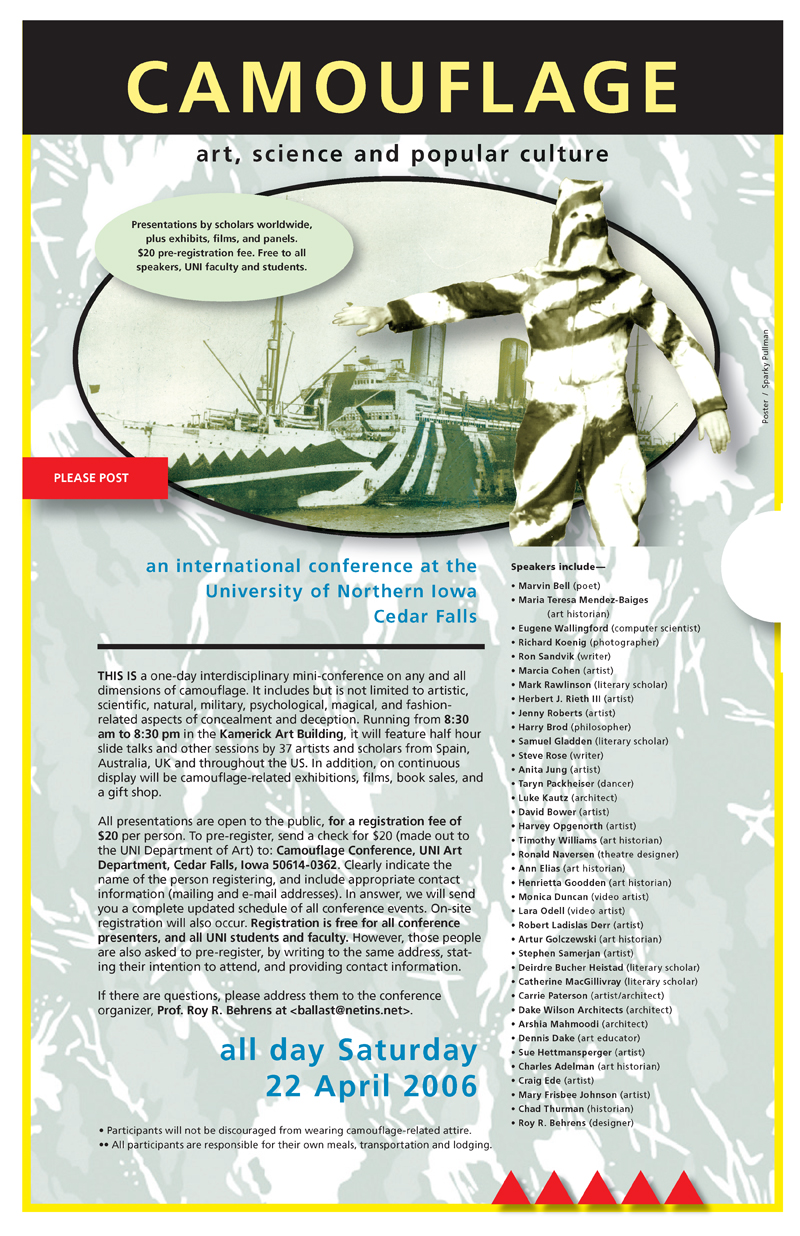

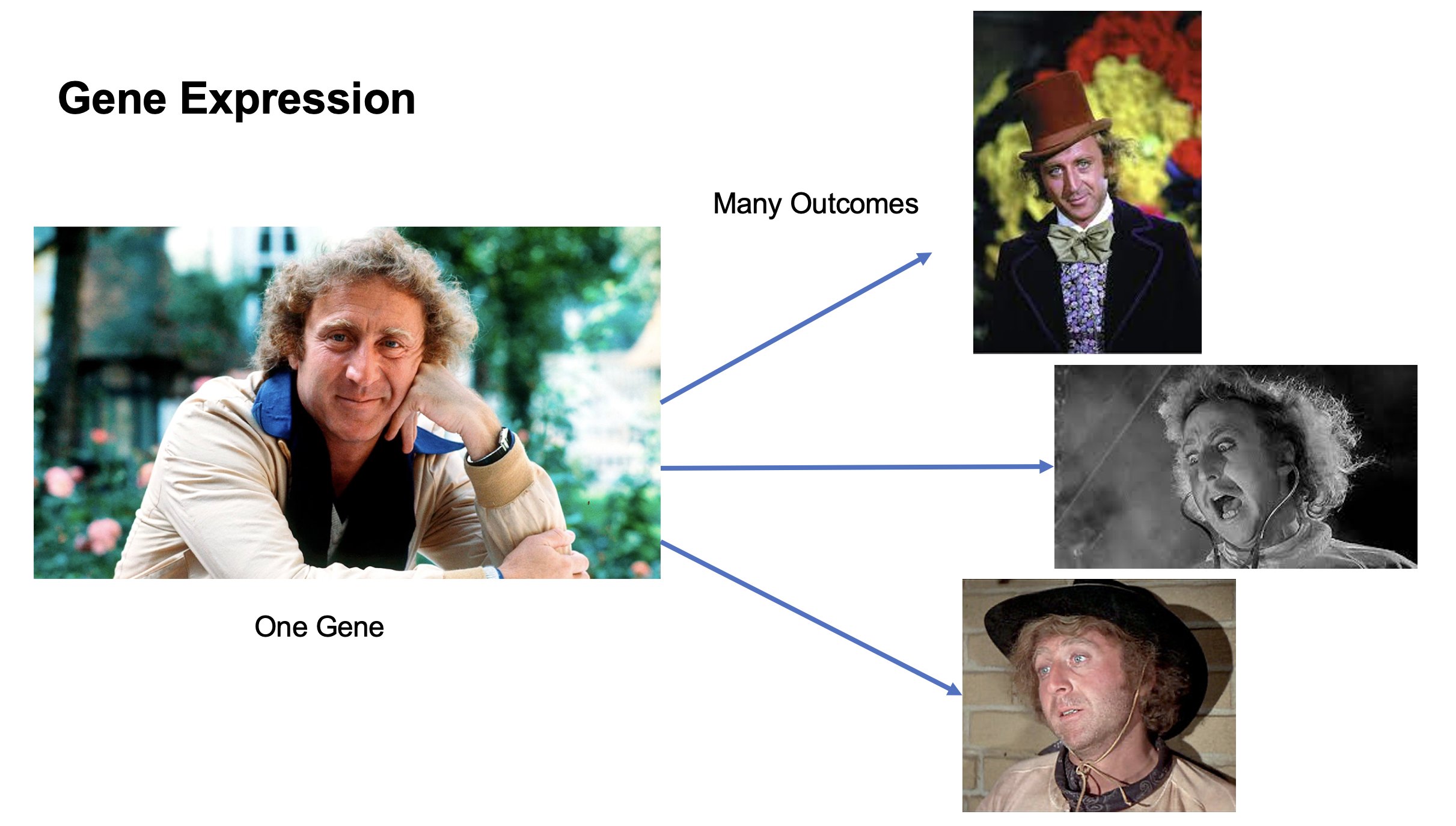

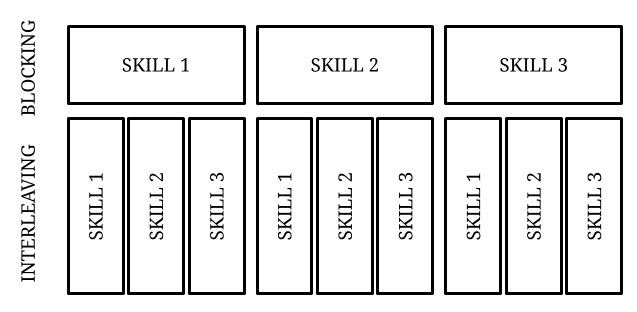

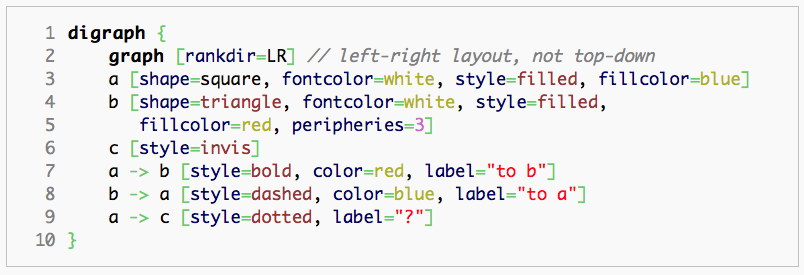

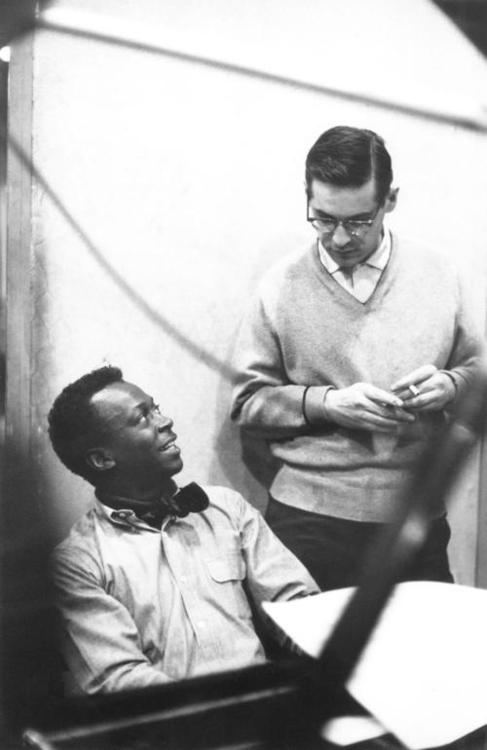

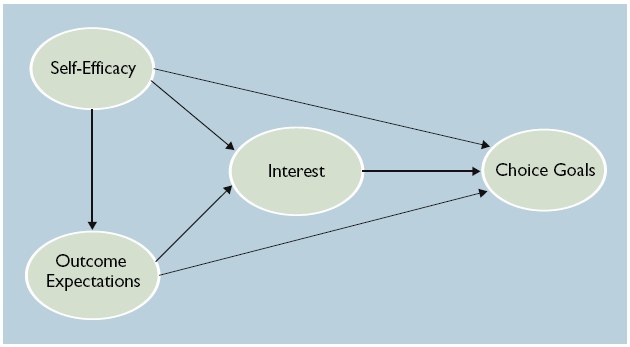

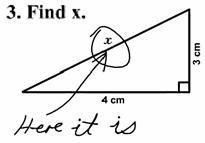

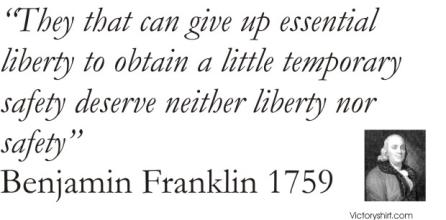

Someone sent me this image, from a slide deck they ran across somewhere:

I don't know what to do with it other than to say this:

As a person named 'Eugene' and an admirer of Mr. Wilder's work, I smile every time I see it. That's a clever way to reinforce the idea of gene expression by analogy, using actors and roles.

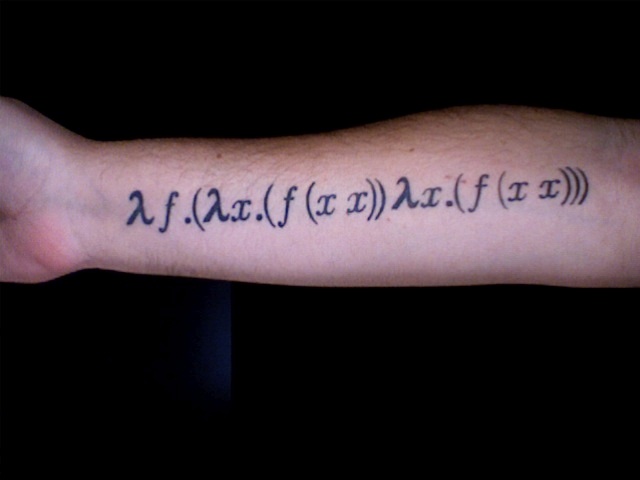

When I teach OOP and FP, I'm always looking for simple analogies like this from the non-programming world to reinforce ideas that we are learning about in class. My OOP repertoire is pretty deep. As I teach functional programming each spring, I'm still looking for new FP analogies all the time.

~~~~~

Note: I don't know the original source of this image. If you know who created the slide, please let me know via email, Mastodon, or Twitter (all linked in the sidebar). I would love to credit the creator.

February 29, 2024 3:45 PM

Finding the Torture You're Comfortable With

At some point last week, I found myself pointed to this short YouTube video of Jerry Seinfeld talking with Howard Stern about work habits. Seinfeld told Stern that he was essentially always thinking about making comedy. Whatever situation he found himself in, even with family and friends, he was thinking about how he could mine it for new material. Stern told him that sounded like torture. Jerry said, yes, it was, but...

Your blessing in life is when you find the torture you're comfortable with.

This is something I talk about with students a lot.

Sometimes it's a current student who is worried that CS isn't for them because too often the work seems hard, or boring. Shouldn't it be easy, or at least fun?

Sometimes it's a prospective student, maybe a HS student on a university visit or a college student thinking about changing their major. They worry that they haven't found an area of study that makes them happy all the time. Other people tell them, "If you love what you do, you'll never work a day in your life." Why can't I find that?

I tell them all that I love what I do -- studying, teaching, and writing about computer science -- and even so, some days feel like work.

I don't use torture as an analogy the way Seinfeld does, but I certainly know what he means. Instead, I usually think of this phenomenon in terms of drudgery: all the grunt work that comes with setting up tools, and fiddling with test cases, and formatting documentation, and ... the list goes on. Sometimes we can automate one bit of drudgery, but around the corner awaits another.

And yet we persist. We have found the drudgery we are comfortable with, the grunt work we are willing to do so that we can be part of the thing it serves: creating something new, or understanding one little corner of the world better.

I experienced the disconnect between the torture I was comfortable with and the torture that drove me away during my first year in college. As I've mentioned here a few times, most recently in my post on Niklaus Wirth, from an early age I had wanted to become an architect (the kind who design houses and other buildings, not software). I spent years reading about architecture and learning about the profession. I even took two drafting courses in high school, including one in which we designed a house and did a full set of plans, with cross-sections of walls and eaves.

Then I got to college and found two things. One, I still liked architecture in the same way as I always had. Two, I most assuredly did not enjoy the kind of grunt work that architecture students had to do, nor did I relish the torture that came with not seeing a path to a solution for a thorny design problem.

That was so different from the feeling I had writing BASIC programs. I would gladly bang my head on the wall for hours to get the tiniest detail just the way I wanted it, either in the code or in the output. When the torture ended, the resulting program made all the pain worth it. Then I'd tackle a new problem, and it started again.

Many of the students I talk with don't yet know this feeling. Even so, it comforts some of them to know that they don't have to find The One Perfect Major that makes all their boredom go away.

However, a few others understand immediately. They are often the ones who learned to play a musical instrument or who ran cross country. The pianists remember all the boring finger exercises they had to do; the runners remember all the wind sprints and all the long, boring miles they ran to build their base. These students stuck with the boredom and worked through the pain because they wanted to get to the other side, where satisfaction and joy are.

Like Seinfeld, I am lucky that I found the torture I am comfortable with. It has made this life a good one. I hope everyone finds theirs.

Posted by Eugene Wallingford | Permalink | Categories: Computing, Personal, Running, Software Development, Teaching and Learning

February 09, 2024 3:45 PM

Finding Cool Ideas to Play With

In a recent post on Computational Complexity, Bill Gasarch wrote up the solution to a fun little dice problem he had posed previously. Check it out. After showing the solution, he answered some meta-questions. I liked this one:

How did I find this question, and its answer, at random? I intentionally went to the math library, turned my cell phone off, and browsed some back issues of the journal Discrete Mathematics. I would read the table of contents and decide what article sounded interesting, read enough to see if I really wanted to read that article. I then SAT DOWN AND READ THE ARTICLES, taking some notes on them.

He points out that turning off his cell phone isn't the secret to his method.

It's allowing yourself the freedom to NOT work on a paper for the next ... conference and just read math for FUN without thinking in terms of writing a paper.

Slack of this sort used to be one of the great attractions of the academic life. I'm not sure it is as much a part of the deal as it once was. The pace of the university seems faster these days. Many of the younger faculty I follow out in the world seem always to be hustling for the next conference acceptance or grant proposal. They seem truly joyous when an afternoon turns into a serendipitous session of debugging or reading.

Gasarch's advice is wise, if you can follow it: Set aside time to explore, and then do it.

It's not always easy fun; reading some articles is work. But that's the kind of fun many of us signed up for when we went into academia.

~~~~~

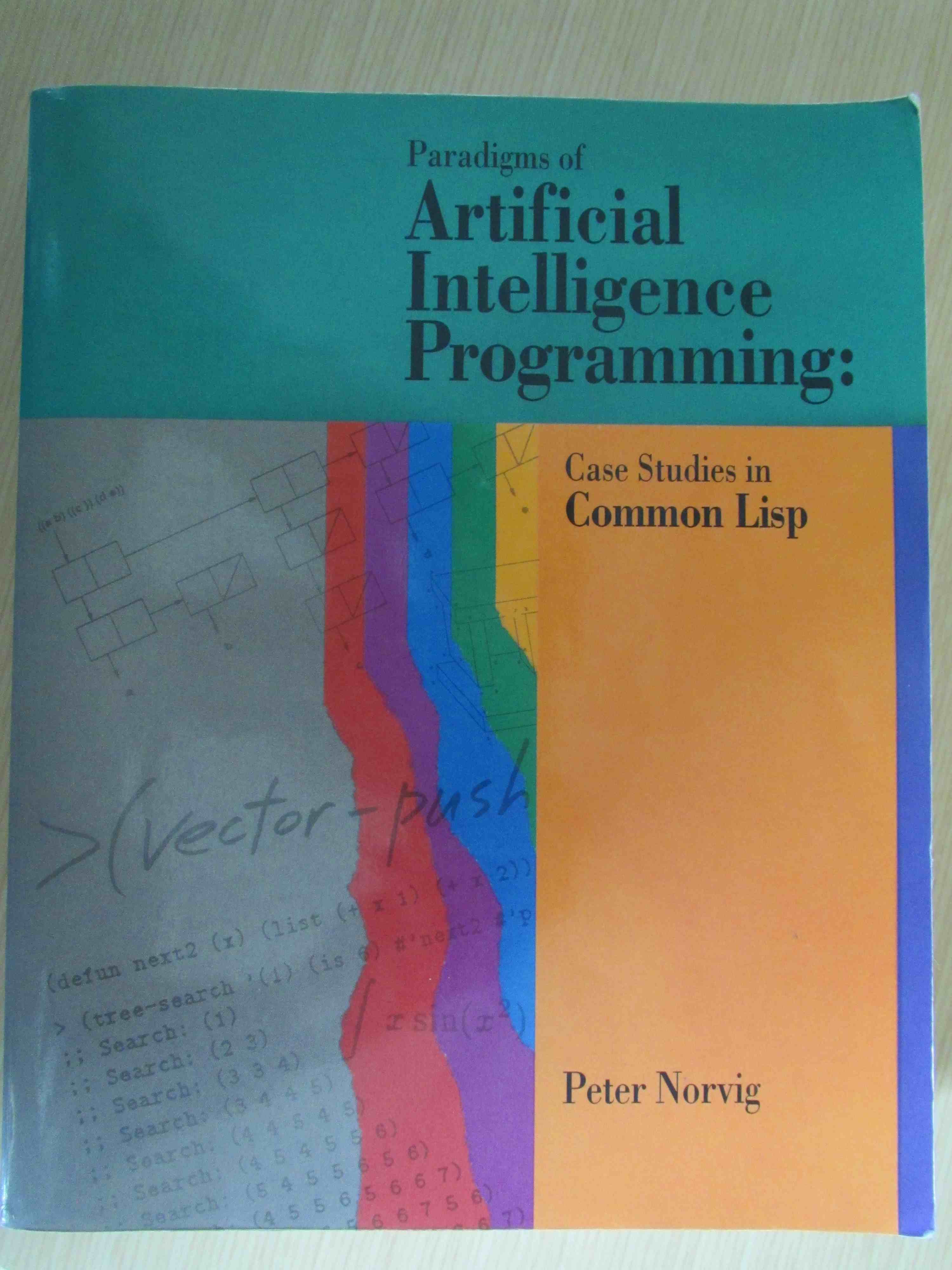

I haven't made enough time to explore recently, but I did get to re-read an old paper unexpectedly. A student came to me to discuss possible undergrad research projects. He had recently been noodling around, implementing his own neural network simulator. I've never been much of a neural net person, but that reminded me of this paper on PushForth, a concatenative language in the spirit of Forth and Joy designed as part of an evolutionary programming project. Genetic programming has always interested me, and concatenative languages seem like a perfect fit...

I found the paper in a research folder and made time to re-read it for fun. This is not the kind of fun Gasarch is talking about, as it had potential use for a project, but I enjoyed digging into the topic again nonetheless.

The student looked at the paper and liked the idea, too, so we embarked on a little project -- not quite serendipity, but a project I hadn't planned to work on at the turn of the new year. I'll take it!

January 21, 2024 8:28 AM

A Few Thoughts on How Criticism Affects People

The same idea popped up in three settings this week: a conversation with a colleague about student assessments, a book I am reading about women writers, and a blog post I read on the exercise bike one morning.

The blog post is by Ben Orlin at Math With Bad Drawings from a few months ago, about an occasional topic of this blog: being less wrong each day [ for example, 1 and 2 ]. This sentence hit close enough to home that I saved it for later.

We struggle to tolerate censure, even the censure of idiots. Our social instrument is strung so tight, the least disturbance leaves us resonating for days.

Perhaps this struck a chord because I'm currently reading A Room of One's Own, by Virginia Woolf. In one early chapter, Woolf considers the many reasons that few women wrote poetry, fiction, or even non-fiction before the 19th century. One is that they had so little time and energy free to do so. Another is that they didn't have space to work alone, a room of one's own. But even women who had those things had to face a third obstacle: criticism from men and women alike that women couldn't, or shouldn't, write.

Why not shrug off the criticism and soldier on? Woolf discusses just how hard that is for anyone to do. Even many of our greatest writers, including Tennyson and Keats, obsessed over every unkind word said about them or their work. Woolf concludes:

Literature is strewn with the wreckage of men who have minded beyond reason the opinions of others.

Orlin's post, titled Err, and err, and err again; but less, and less, and less, makes an analogy between the advance of scientific knowledge and an infinite series in mathematics. Any finite sum in the series is "wrong", but if we add one more term, it is less wrong than the previous sum. Every new term takes us closer to the perfect answer.
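To make the analogy concrete, here is a minimal sketch. The choice of series is an assumption made only for illustration: the geometric series 1/2 + 1/4 + 1/8 + ..., which converges to 1, stands in for whatever series Orlin had in mind. Every partial sum is "wrong", but each new term leaves a smaller error than the one before.

```javascript
// Partial sums of 1/2 + 1/4 + 1/8 + ..., an illustrative series whose limit is 1.
const limit = 1;
let sum = 0;
const errors = [];

for (let k = 1; k <= 8; k++) {
  sum += 1 / 2 ** k;           // add one more term
  errors.push(limit - sum);    // how wrong we still are
  console.log(`after ${k} terms: sum = ${sum}, error = ${limit - sum}`);
}
```

No finite number of terms reaches the limit, yet the error shrinks at every step.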

He then goes on to wonder whether the same is, or could be, true of our moral development. His inspiration is American psychologist and philosopher William James. I have mentioned James as an inspiration myself a few times in this blog, most explicitly in Pragmatism and the Scientific Spirit, where I quote him as saying that consciousness is "not a thing or a place, but a process".

Orlin connects his passage on how humans receive criticism to James's personal practice of trying to listen only to the judgment of ever more noble critics, even if we have to imagine them into being:

"All progress in the social Self," James says, "is the substitution of higher tribunals for lower."

If we hold ourselves to a higher, more noble standard, we can grow. When we reach the next plateau, we look for the next higher standard to shoot for. This is an optimistic strategy for living life: we are always imperfect, but we aspire to grow in knowledge and moral development by becoming a little less wrong each step of the way. To do so, we try to focus our attention on the opinions of those whose standard draws us higher.

Reading James almost always leaves my spirit lighter. After Orlin's post, I feel a need to read The Principles of Psychology in full.

These two threads on how people respond to criticism came together when I chatted with a colleague this week about criticism from students. Each semester, we receive student assessments of our courses, which include multiple-choice ratings as well as written comments. The numbers can be a jolt, but their effect is nothing like that of the written comments. Invariably, at least one student writes a negative response, often an unkind or ungenerous one.

I told my colleague that this is a recurring theme for almost every faculty member I have known: Twenty-nine students can say "this was a good course, and I really like the professor", but when one student writes something negative... that is the only comment we can think about.

The one bitter student in your assessments is probably not the ever more noble critic that James encourages you to focus on. But, yeah. Professors, like all people, are strung pretty tight when it comes to censure.

Fortunately, talking to others about the experience seems to help. And it may also remind us to be aware of how students respond to the things we say and do.

Anyway, I recommend both the Orlin blog post and Woolf's A Room of One's Own. The former is a quick read. The latter is a bit longer but a smooth read. Woolf writes well, and once my mind got on the book's wavelength, I found myself engaged deeply in her argument.

January 06, 2024 10:41 AM

end.

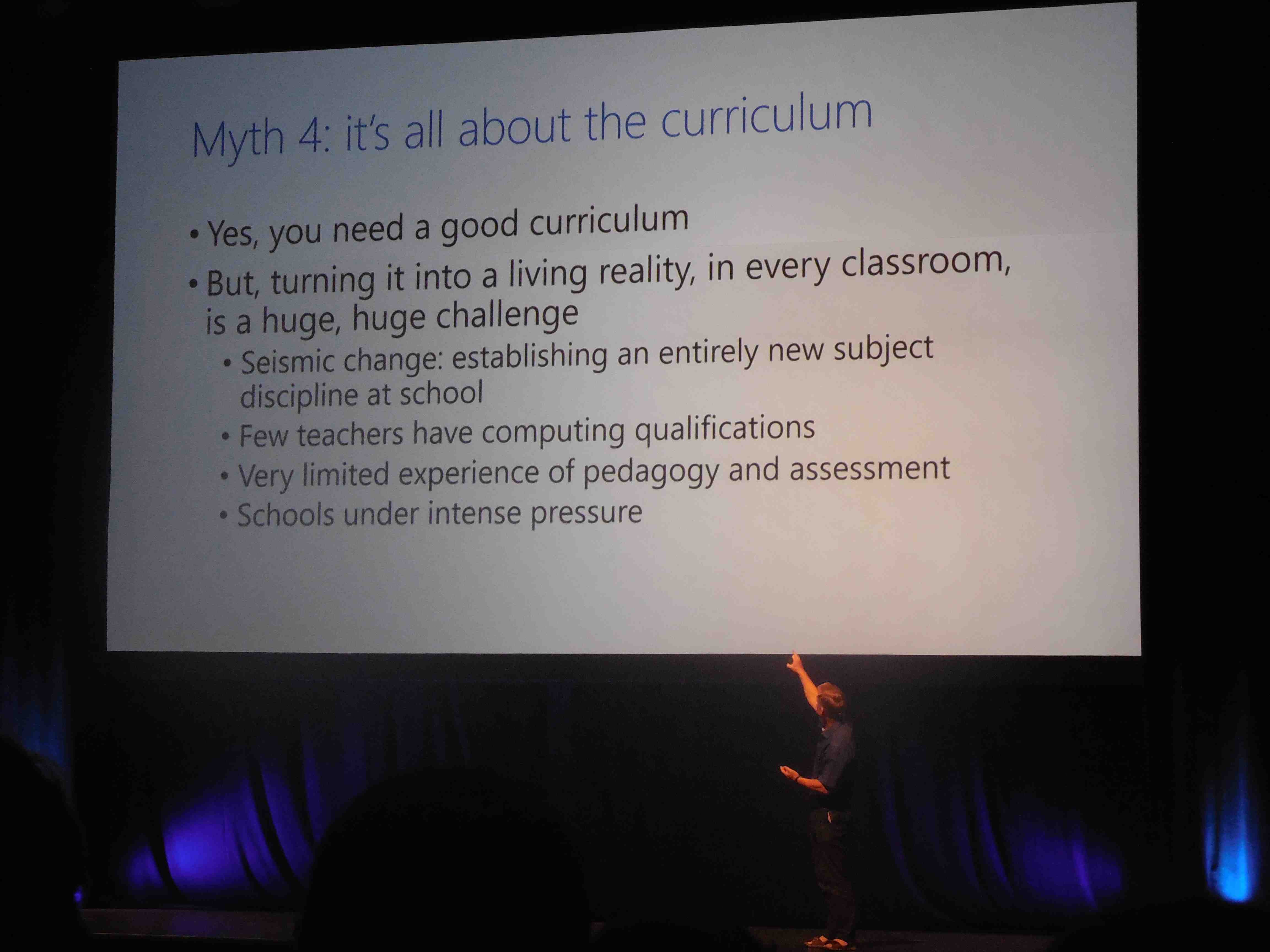

My social media feed this week has included many notes and tributes on the passing of Niklaus Wirth, including his obituary from ETH Zurich, where he was a professor. Wirth was, of course, a Turing Award winner for his foundational work designing a sequence of programming languages.

Wirth's death reminded me of END DO, my post on the passing of John Backus, and before that a post on the passing of Kenneth Iverson. I have many fond memories related to Wirth as well.

Pascal

Pascal was, I think, the fifth programming language I learned. After that, my language-learning history starts to speed up and blur. (I do think APL and Lisp came soon after.)

I learned BASIC first, as a junior in high school. This ultimately changed the trajectory of my life, as it planted the seeds for me to abandon a lifelong dream to be an architect.

Then at university, I learned Fortran in CS 1, PL/I in Data Structures (you want pointers!), and IBM 360/370 assembly language in a two-quarter sequence that also included JCL. Each of these languages expanded my mind a little.

Pascal was the first language I learned "on my own". The fall of my junior year, I took my first course in algorithms. On Day 1, the professor announced that the department had decided to switch to Pascal in the intro course, so that's what we would use in this course.

"Um, prof, that's what the new CS majors are learning. We know Fortran and PL/I." He smiled, shrugged, and turned to the chalkboard. Class began.

After class, several of us headed immediately to the university library, checked out one Pascal book each, and headed back to the dorms to read. Later that week, we were all using Pascal to implement whatever classical algorithm we learned first in that course. Everything was fine.

I've always treasured that experience, even if it was a little scary for a week or so. And don't worry: That professor turned out to be a good guy with whom I took several courses. He was a fellow chess player and ended up being the advisor on my senior project: a program to perform the Swiss system commonly used to run chess tournaments. I wrote that program in... Pascal. Up to that point, it was the largest and most complex program I had ever written solo. I still have the code.

The first course I taught as a tenure-track prof was my university's version of CS 1 -- using Pascal.

Fond memories all. I miss the language.

Wirth sightings in this blog

I did a quick search and found that Wirth has made an occasional appearance in this blog over the years.

• January 2006: Just a Course in Compilers

This was written at the beginning of my second offering of our compiler course, which I have taught and written about many times since. I had considered using as our textbook Wirth's Compiler Construction, a thin volume that builds a compiler for a subset of Wirth's Oberon programming language over the course of sixteen short chapters. It's a "just the facts and code" approach that appeals to me most days.

I didn't adopt the book for several reasons, not least of which was that at the time Amazon showed only four copies available, starting at $274.70 each. With two decades of experience teaching the course now, I don't think I could ever really use this book with my undergrads, but it was a fun exercise for me to work through. It helped me think about compilers and my course.

Note: A PDF of Compiler Construction has been posted on the web for many years, but every time I link to it, the link ultimately disappears. I decided to mirror the files locally, so that the link will last as long as this post lasts: [ Chapters 1-8 | Chapters 9-16 ]

• September 2007: Hype, or Disseminating Results?

... in which I quote Wirth's thoughts on why Pascal spread widely in the world but Modula and Oberon didn't. The passage comes from a short historical paper he wrote called "Pascal and its Successors". It's worth a read.

• April 2012: Intermediate Representations and Life Beyond the Compiler

This post mentions how Wirth's P-code IR ultimately lived on in the MIPS compiler suite long after the compiler which first implemented P-code.

• July 2016: Oberon: GoogleMaps as Desktop UI

... which notes that the Oberon spec defines the language's desktop as "an infinitely large two-dimensional space on which windows ... can be arranged".

• November 2017: Thousand-Year Software

This is my last post mentioning Wirth before today's. It refers to the same 1999 SIGPLAN Notices article that tells the P-code story discussed in my April 2012 post.

I repeat myself. Some stories remain evergreen in my mind.

The Title of This Post

I titled my post on the passing of John Backus END DO in homage to his intimate connection to Fortran. I wanted to do something similar for Wirth.

Pascal has a distinguished sequence to end a program: "end.". It seems a fitting way to remember the life of the person who created it and who gave the world so many programming experiences.

December 31, 2023 1:35 PM

"I Want to Find Something to Learn That Excites Me"

In his year-end wrap-up, Greg Wilson writes:

I want to find something to learn that excites me. A new musical instrument is out because of my hand; I've thought about reviving my French, picking up some Spanish, diving into Postgres or machine learning (yeah, yeah, I know, don't hate me), but none of them are making my heart race.

What he said. I want to find something to learn that excites me.

I just spent six months immersed in learning more about HTML, CSS, and JavaScript so that I could work with novice web developers. Picking up that project was one part personal choice and one part professional necessity. It worked out well. I really enjoyed studying the web development world and learned some powerful new tools. I will continue to use them as time and energy permit.

But I can't say that I am excited enough by the topic to keep going in this area. Right now, I am still burned out from the semester on a learning treadmill. I have a followup post to my early reactions about the course's JavaScript unit in the hopper, waiting for a little desire to finish it.

What now? There are parallels between my state and Wilson's.

- After my first-ever trip to Europe in 2019, for a Dagstuhl seminar (brief mention here), my wife and I talked about a return trip, with a focus this time on Italy. Learning Italian was part of the nascent plan. Then came COVID, along with a loss of energy for travel. I still have learning Italian in my mind.

- In the fall of 2020, the first full semester of the pandemic, I taught a database course for the first time (bookend posts here and here). I still have a few SQL projects and learning goals hanging around from that time, but none are calling me right now.

- LLMs are the main focus of so many people's attention these days, but they still haven't lit me up. In some ways, I envy David Humphrey, who fell in love with AI this year. Maybe something about LLMs will light me up one of these days. (As always, you should read David's stuff. He does neat work and shares it with the world.)

Unlike Wilson, I do not play a musical instrument. I did, however, learn a little basic piano twenty-five years ago when I was a Suzuki piano parent with my daughters. We still have our piano, and I harbor dreams of picking it back up and going farther some day. Right now doesn't seem to be that day.

I have several other possibilities on the back burner, particularly in the area of data analytics. I've been intrigued by the work on data-centric computing in education being done by Kathi Fisler and Shriram Krishnamurthi at Brown. I also will be reading a couple of their papers on program design and plan composition in the coming weeks as I prepare for my programming languages course this spring. Fisler and Krishnamurthi are coming at these topics from the side of CS education, but the topics are also related to my grad-school work in AI. Maybe these papers will ignite a spark.

Winter break is coming to an end soon. Like others, I'm thinking about 2024. Let's see what the coming weeks bring.

November 24, 2023 12:17 PM

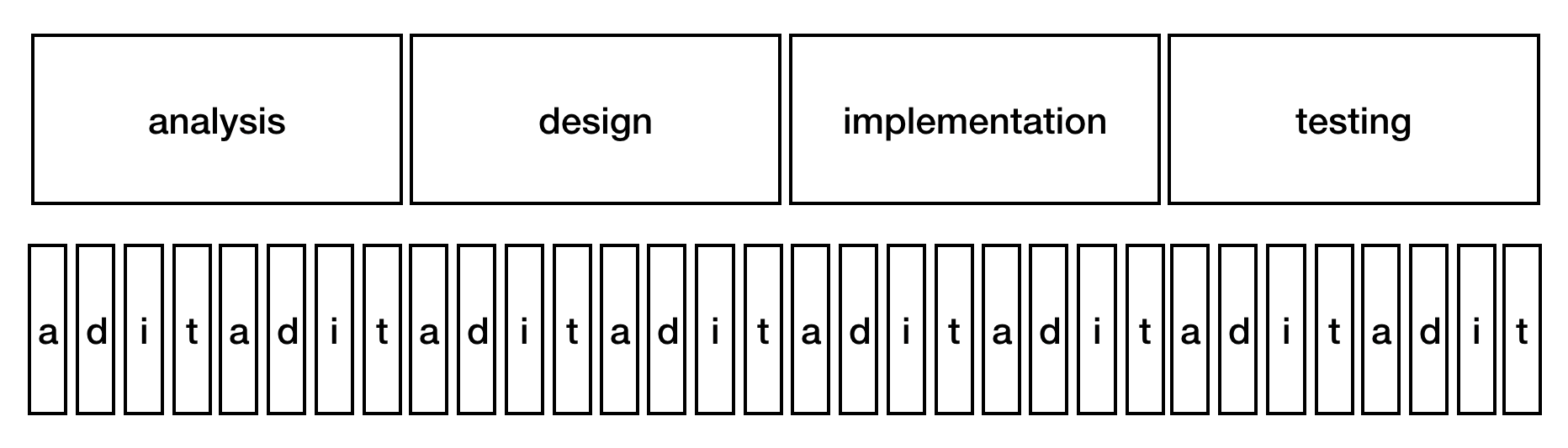

And Then Came JavaScript

It's been a long time again between posts. That seems to be the new normal. On top of that, though, teaching web development this fall for the first time has been soaking up all of my free hours in the evenings and over the weekends, which has left me little time to be sad about not blogging. I've certainly been writing plenty of text and a bit of code.

The course started off with a lot of positive energy. The students were excited to learn HTML and CSS. I made a few mistakes in how I organized and presented topics, but things went pretty well. By all accounts, students seemed to enjoy what they were learning and doing.

And then came JavaScript.

Well, along came real computer programming. It could have been Python or some other language, I think, but the transition to writing programs, even short bits of code, took the wind out of the students' excitement.

I was prepared for the possibility that the mood of the course would change when we shifted from CSS to JavaScript. A previous offering of the course had encountered a similar obstacle. Learning to program is a challenge. I'm still not convinced that learning to program is that much harder than a lot of things people learn to do, but it does take time.

As I prepared for the course last summer, I saw so many different approaches to teaching JavaScript for web development. Many assumed a lot of HTML/CSS/DOM background, certainly more than my students had picked up in six weeks. Others assumed programming experience most of my students didn't have, even when the approach said 'no experience necessary!'. So I had to find a middle path.

My main source of inspiration in the first half of the course was David Humphrey's WEB 222 course, which was explicitly aimed at an audience of CS students with programming experience. So I knew that I had to do something different with my students, even as I used his wonderful course materials whenever I could.

My department had offered this course once many years ago, aimed at much the same kind of audience as mine, and the instructor — a good friend — shared all of his materials. I used that offering as a primary source of ideas for getting started with JavaScript, and I occasionally adapted examples for use in my class.

The results were not ideal. Students don't seem to have enjoyed this part of the course much at all. Some acknowledged that to me directly. Even the most engaged students seemed to lose a bit of their energy for the course. Performance also sagged. Based on homework solutions and a short exam, I would say that only one student has achieved the outcomes I had originally outlined for this unit.

I either expected too much or did not do a good enough job helping students get to where I wanted them to be.

I have to do better next time.

But how?

Programming isn't as hard as some people tell us, but most of us can't learn to do it in five or six weeks, at least not enough to become very productive. We don't expect students to master all of CSS or even HTML in such a short time, so we can't expect them to master JavaScript either. The difference is that there seems to be a smooth on-ramp for learning HTML and CSS on the way to mastery, while JavaScript (or any other programming language) presents a steep climb, with occasional plateaus.

For now, I am thinking that the key to doing better is to focus on an even narrower set of concepts and skills.

If people starting from scratch can't learn all of JavaScript in five or six weeks, or even enough to be super-productive, what useful skills can they learn in that time? For this course I considerably trimmed down the set of topics that we might cover in an intro CS course, but I think I need to trim even more and -- more importantly -- choose topics and examples that are even more embedded in the act of web development.

Earlier this week, a sudden burst of thought outlined something like this:

- document.querySelector() to select an element in a page

- simple assignment statements to modify innerText, innerHTML, and various style attributes

- parameterizing changes to an element to create a function

- document.querySelectorAll() to select collections of elements in a page

- forEach to process every element in a collection

- guarded actions to select items in the collection using if statements, without else clauses
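As a rough sketch of how small that outline is in code, the six items fit in three short functions. The selectors ("h1", "li"), the replacement text, the colors, and the function names are all hypothetical, chosen only for illustration. Everything is wrapped in functions so that nothing touches the page until it is called.

```javascript
// Items 1-2: select one element and modify it with simple assignments.
function retitle() {
  const heading = document.querySelector("h1");
  heading.innerText = "Hello, JavaScript";
  heading.style.color = "darkgreen";
}

// Item 3: parameterize the same change to create a reusable function.
function setTextAndColor(selector, text, color) {
  const element = document.querySelector(selector);
  element.innerText = text;
  element.style.color = color;
}

// Items 4-6: select a collection, process every element with forEach,
// and guard the action with an if statement that has no else clause.
function highlightMatches(selector, word) {
  const items = document.querySelectorAll(selector);
  items.forEach(function (item) {
    if (item.innerText.includes(word)) {
      item.style.backgroundColor = "yellow";
    }
  });
}
```

In a browser console, on a page that has an h1 and some list items, one might try `retitle()` followed by `highlightMatches("li", "CSS")`.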

That is a lot to learn in five weeks! Even so, it cuts way back on several topics I tried to cover this time, such as a more general discussion of objects, arrays, and boolean values, and a deeper look at the DOM. And it eliminates even mentioning several topics altogether:

- if-else statements

- while statements

- counted for loops and, more generally, map-like behavior

- any fiddling with numbers and arithmetic, which are often used to learn assignment statements, if statements, and functions

There are so many things a programmer can't do without these concepts, such as writing an indefinite data validation loop or really understanding what's going on in the DOM tree. But trying to cover all of those topics too did not result in students being able to do them either! I think it left them confused, with so many new ideas jumbled in their minds, and a general dissatisfaction at being unable to use JavaScript effectively.
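For readers who have not seen one, an indefinite data validation loop looks something like the sketch below. The function name, the 1-to-10 range, and the readInput parameter are assumptions made for illustration. In a browser, readInput could be a function that calls prompt(). Here a canned list of replies simulates the user so that the code runs anywhere.

```javascript
// Keep asking until the reply is a whole number from 1 to 10.
// readInput is a hypothetical stand-in for something like prompt().
function readRating(readInput) {
  let answer = Number(readInput());
  while (!Number.isInteger(answer) || answer < 1 || answer > 10) {
    answer = Number(readInput());   // ask again; we can't know how many times
  }
  return answer;
}

// A simulated user who needs three tries to give a valid answer.
const replies = ["ten", "42", "7"];
const rating = readRating(function () {
  return replies.shift();
});
console.log(rating); // prints 7
```

The loop is indefinite because the number of repetitions depends on the user, which is exactly what forEach over a fixed collection cannot express. This sketch has no retry limit, so a real page would probably want one.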

Of course I would want to build hooks into the course for students who want to go deeper and are ready to do so. There is so much good material on the web for people who are ready for more. Providing more enriched opportunities for advanced students is easier than designing learning opportunities for beginners.

Can something like this work?

I won't know for a while. It will be at least a year before I teach this course again. I wish I could teach it again sooner, so that I could try some of my new ideas and get feedback on them sooner. Such is the curse of a university calendar and once-yearly offerings.

It's too late to make any big changes in trajectory this semester. We have only two weeks after the current one-week Thanksgiving break. Next week, we will focus on input forms (HTML), styling (CSS), and a little data validation (HTML+JavaScript). I hope that this return to HTML+CSS helps us end the course on a positive note. I'd like for students to finish with a good feeling about all they have learned and now can do.

October 31, 2023 7:12 PM

The Spirit of Spelunking

Last month, Gus Mueller announced v7.4.3 of his image-editing tool Acorn. This release has a fun little extra built in:

One more super geeky thing I've added is a JavaScript Console:

...

This tool is really meant for folks developing plugins in Acorn, and it is only accessible from the Command Bar, but a part of me absolutely loves pointing out little things like this. I was just chatting with Brent Simmons the other day at Xcoders how you can't really spelunk in apps any more because of all the restrictions that are (justifiably) put on recent MacOS releases. While a console isn't exactly a spelunking tool, I still think it's kind of cool and fun and maybe someone will discover it accidentally and that will inspire them to do stupid and entertaining things like we used to do back in the 10.x days.

I have had JavaScript consoles on my mind a lot in the last few weeks. My students and I have used the developer tools in our browsers as part of my web development course for non-majors. To be honest, I had never used a JavaScript console until this summer, when I began preparing for the course in earnest. REPLs are, of course, a big part of my programming background, from Lisp to Racket to Ruby to Python, so I took to the console with ease and joy. (My past experience with JavaScript was mostly in Node.js, which has its own REPL.)

We just started our fourth week studying JavaScript in class, so my students have started getting used to the console. At the outset, it was new to most of them, who have never programmed before. Our attention has now turned to interacting with the DOM and manipulating the content of a web page. It's been a lot of fun for me. I'm not sure how it feels for all of my students, though. Many came to the course for web design and really enjoy HTML and CSS. JavaScript, on the other hand, is... programming: more syntax, more semantics, and a lot of new details just to select, say, the third h3 on the page.

Sometimes, you just gotta work over the initial hump to sense the power and fun. Some of them are getting there.

Today I had great fun showing them how to add some simple search functionality to a web page. It was our first big exercise using document.querySelectorAll() and processing a collection of HTML elements. Soon we'll learn about text fields and buttons and events, at which point my The Books of Bokonon will become much more useful to the many readers who still find pleasure in it. Just last night that page got its first modern web styling in the form of a CSS style sheet. For its first twenty-four years of existence, it was all 1990s-era HTML and pseudo-layout using <center>, <hr>, and <br> tags.
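A search exercise of this kind might look roughly like the sketch below. It is not the code from class: the function name, the case-insensitive match, and the yellow highlight are assumptions made for illustration.

```javascript
// Highlight every element matching selector whose text contains query.
// Returns the number of matches found.
function searchPage(selector, query) {
  const needle = query.toLowerCase();
  let count = 0;
  document.querySelectorAll(selector).forEach(function (element) {
    element.style.backgroundColor = "";   // clear any earlier highlight
    if (element.innerText.toLowerCase().includes(needle)) {
      element.style.backgroundColor = "yellow";
      count = count + 1;
    }
  });
  return count;
}
```

Typed into the browser console, a call such as `searchPage("p", "chapter")` would highlight the matching paragraphs. Once text fields, buttons, and events are available, the same function could be called from a click handler.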

Anyway, I appreciate Gus's excitement at adding a console to Acorn, making his tool a place to play as well as work. Spread the joy.

September 22, 2023 8:38 PM

Time and Words

Earlier this week, I posted an item on Mastodon:

After a few months studying and using CSS, every once in a while I am able to change a style sheet and cause something I want to happen. It feels wonderful. Pretty soon, though, I'll make a typo somewhere, or misunderstand a property, and not be able to make anything useful happen. That makes me feel like I'm drowning in complexity.

Thankfully, the occasional wonderful feelings — and my strange willingness to struggle with programming errors — pull me forward.

I've been learning a lot while preparing to teach our web development course [ 1 | 2 ]. Occasionally, I feel incompetent, or not very bright. It's good for me.

I haven't been blogging lately, but I've been writing lots of words. I've also been re-purposing and adapting many other words that are licensed to be reusable (thanks, David Humphrey and John Davis). Prepping a new course is usually prime blogging time for me, with my mind in a constant state of churn, but this course has me drowning in work to do. There is so much to learn, and so much new material — readings, session plans and notes, examples, homework assignments — to create.

I have made a few notes along the way, hoping to expand them into posts. Today they become part of this post.

VS Code

This is my first time in a long time using an editor configured to auto-complete and do all the modern things that developers expect these days. I figured, new tool, why not try a new workflow...

After a short period of breaking in, I'm quite enjoying the experience. One feature I didn't expect to use so much is the ability to collapse an HTML element. In a large HTML file, this has been a game changer for me. Yes, I know, this is old news to most of you. But as my brother loves to say when he gets something used or watches a movie everyone else has already seen, "But, hey, it's new to me!"

VS Code's auto-complete across HTML, CSS, and JavaScript, with built-in documentation and links to MDN's more complete documentation, lets me type code much faster than ever before. It made me think of one of my favorite Kent Beck lines:

As the speed of development approaches infinity, reuse becomes irrelevant.

When programming, I often copy, paste, and adapt previous code. In VS Code, I have found myself moving fast enough that copy, paste, and adapt would slow me down. That sort of reuse has become irrelevant.

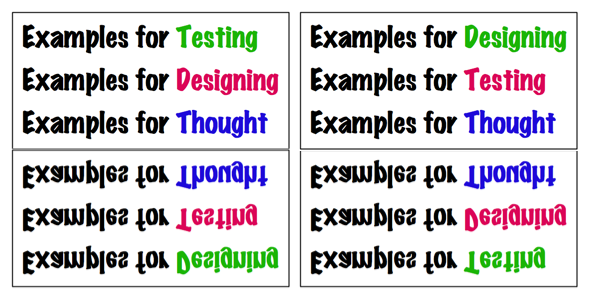

Examples > Prose

The class sessions for which I have written the longest and most complete notes for my students (and me) tend to be the ones for which I have the fewest, or least well-developed, code examples. The reverse is also true: lots of good examples and code tends to mean smaller class notes. Sometimes that is because I run out of time to write much prose to accompany the session. Just as often, though, it's because the examples give us plenty to do live in class, where the learning happens in the flow of writing and examining code.

This confirms something I've observed over many years of teaching: Examples First tends to work better for most students, even people like me who fancy themselves as top-down thinkers. Good examples and code exercises can create the conditions in which abstract knowledge can be learned. This is a sturdy pedagogical pattern.

Concepts and Patterns

There is so, so much to CSS! HTML itself has a lot of details for new coders to master before they reach fluency. Many of the websites aimed at teaching and presenting these topics quickly begin to feel like a downpour of information, even when the authors try to organize it all. It's too easy to fall into, "And then there's this other property...".

After a few weeks, I've settled into trying to help students learn two kinds of things for each topic:

- a couple of basic concepts or principles

- a few helpful patterns

~~~~~

We are only five weeks into a fifteen week semester, so take any conclusions I draw with a grain of salt. We also haven't gotten to JavaScript yet, the teaching and learning of which will present a different sort of challenge than HTML and CSS with students who have little or no experience programming. Maybe I will make time to write up our experiences with JavaScript in a few weeks.

Posted by Eugene Wallingford | Permalink | Categories: Patterns, Software Development, Teaching and Learning

July 31, 2023 2:35 PM

Learning CSS By Doing It

I ran across this blog post earlier this summer when browsing articles on CSS, as one does while learning CSS for a fall course. Near the end, the writer says:

This is thoroughly exciting to me, and I don't wanna whine about improvements in CSS, but it's a bit concerning since I feel like what the web is now capable of is slipping through my fingers. And I guess that's what I'm worried about; I no longer have a good idea of how these things interact with each other, or where the frontier is now.

The map of CSS in my mind is real messy, confused, and teetering with details that I can't keep straight in my head.

Imagine how someone feels as they learn CSS basically from the beginning and tries to get a handle both on how to use it effectively and how to teach it effectively. There is so much there... The good news, of course, is that our course is for folks with no experience, learning the basics of HTML, CSS, and JavaScript from the beginning, so there is only so far we can hope to go in fifteen weeks anyway.

My impressions of HTML and CSS at this point are quite similar: very little syntax, at least for big chunks of the use cases, and lots and lots of vocabulary. Having convenient access to documentation such as that available at developer.mozilla.org via the web and inside VS Code makes exploring all of the options more manageable in context.

I've been watching Dave Humphrey's videos for his WEB 222 course at Seneca College and learning tons. Special thanks to Dave for showing me a neat technique to use when learning -- and teaching -- web development: take a page you use all the time and try to recreate it using HTML and CSS, without looking at the page's own styles. He has done that a couple times now in his videos, and I was able to integrate the ideas we covered about the two languages in previous videos as Dave made the magic work. I have tried it once on my own. It's good fun and a challenging exercise.

Learning layout by viewing page source used to be easier in the old days, when pages were simpler and didn't include dozens of CSS imports or thousands of scripts. Accepting the extra challenge of not looking at a page's styles in 2023 is usually the simpler path.

Two re-creations I have on tap for myself in the coming days are a simple Wikipedia-like page for myself (I'm not notable enough to have an actual Wikipedia page, of course) and a page that acts like my Mastodon home page, with anchored sidebars and a scrolling feed in between. Wish me luck.

July 10, 2023 12:28 PM

What We Know Affects What We See

Last time I posted this passage from Shop Class as Soulcraft, by Matthew Crawford:

Countless times since that day, a more experienced mechanic has pointed out to me something that was right in front of my face, but which I lacked the knowledge to see. It is an uncanny experience; the raw sensual data reaching my eye before and after are the same, but without the pertinent framework of meaning, the features in question are invisible. Once they have been pointed out, it seems impossible that I should not have seen them before.

We perceive in part based on what we know. A lack of knowledge can prevent us from seeing what is right in front of us. Our brains and eyes work together, and without a perceptual frame, they don't make sense of the pattern. Once we learn something, our eyes -- and brains -- can.

This reminds me of a line from the movie The Santa Clause, which my family watched several times when my daughters were younger. The new Santa Claus is at the North Pole, watching magical things outside his window, and comments to the elf who's been helping him, "I see it, but I don't believe it." She replies that adults don't understand: "Seeing isn't believing; believing is seeing." As a mechanic, Crawford came to understand that knowing is seeing.

Later in the book, Crawford describes another way that knowing and perceiving interact with one another, this time with negative results. He had been struggling to figure out why there was no spark at the spark plugs in his VW Bug, and his father -- an academic, not a mechanic -- told him about Ohm's Law:

Ohm's law is something explicit and rulelike, and is true in the way that propositions are true. Its utter simplicity makes it beautiful; a mind in possession of this equation is charmed with a sense of its own competence. We feel we have access to something universal, and this affords a pleasure that is quasi-religious, perhaps. But this charm of competence can get in the way of noticing things; it can displace, or perhaps hamper the development of, a different kind of knowledge that may be difficult to bring to explicit awareness, but is superior as a practical matter. Its superiority lies in the fact that it begins with the typical rather than the universal, so it goes more rapidly and directly to particular causes, the kind that actually tend to cause ignition problems.

Rule-based, universal knowledge imposes a frame on the scene. Unfortunately, its universal nature can impede perception by blinding us to the particular details of the situation we are actually in. Instead of seeing what is there, we see the scene as our knowledge would have it.


This reminds me of a story and a technique from the book Drawing on the Right Side of the Brain, which I first wrote about in the earliest days of this blog. When asked to draw a chair, most people barely even look at the chair in front of them. Instead, they start drawing their idea of a chair, supplemented by a few details of the actual chair they see. That works about as well as diagnosing an engine by diagnosing your mind's eye of an engine, rather than the mess of parts in front of you.

In that blog post, I reported my experience with one of Edwards's techniques for seeing the chair, drawing the negative space:

One of her exercises asked the student to draw a chair. But, rather than trying to draw the chair itself, the student is to draw the space around the chair. You know, that little area hemmed in between the legs of the chair and the floor; the space between the bottom of the chair's back and its seat; and the space that is the rest of the room around the chair. In focusing on these spaces, I had to actually look at the space, because I don't have an image in my brain of an idealized space between the bottom of the chair's back and its seat. I had to look at the angles, and the shading, and that flaw in the seat fabric that makes the space seem a little ragged.

Sometimes, we have to trick our eyes into seeing, because otherwise our brains tell us what we see before we actually look at the scene. Abstract universal knowledge helps us reason about what we see, but it can also impede us from seeing in the first place.

What we know both enables and hampers what we perceive. This idea has me thinking about how my students this fall, non-CS majors who want to learn how to develop web sites, will encounter the course. Most will be novice programmers who don't know what they see when they are looking at code, or perhaps even at a page rendered in the browser. Debugging code will be a big part of their experience this semester. Are there exercises I can give them to help them see accurately?

As I said in my previous post, there's lots of good stuff happening in my brain as I read this book. Perhaps more posts will follow.

Posted by Eugene Wallingford | Permalink | Categories: Patterns, Software Development, Teaching and Learning

July 04, 2023 11:55 AM

Time Out

Any man can call time out, but no man

can say how long the time out will be.

--

Books of Bokonon

I realized early last week that it had been a while since I blogged. June was a morass of administrative work, mostly summer orientation. Over the month, I had made notes for several potential posts, on my web dev course, on the latest book I was reading, but never found -- made -- time to write a full post. I figured this would be a light month, only a couple of short posts, if only I could squeeze another one in by Friday.

Then I saw that the date of my most recent post was May 26, with the request for ideas about the web course coming a week before.

I no longer trust my sense of time.

This blog has certainly become much quieter over the years, due in part to the kind and amount of work I do and in part to choices I make outside of work. I may even have gone a month between posts a few fallow times in the past. But June 2023 became my first calendar month with zero posts.

It's somewhat surprising that a summer month would be the first to shut me out. Summer is a time of no classes to teach, fewer student and faculty issues to deal with, and fewer distinct job duties. This occurrence is a testament to how much orientation occupies many of my summer days, and how at other times I just want to be AFK.

A real post or two are on their way, I promise -- a promise to myself, as well as to any of you who are missing my posts in your newsreader. In the meantime...

On the web dev course: thanks to everyone who sent thoughts! There were a few unanimous, or near unanimous, suggestions, such as to have students use VS Code. I am now learning it myself, and getting used to an IDE that autocompletes pairs such as "". My main prep activity up to this point has been watching David Humphrey's videos for WEB 222. I have been learning a little HTML and JavaScript and a lot of CSS and how these tools work together on the modern web. I'm also learning how to teach these topics, while thinking about the differences between my student audience and David's.

On the latest book: I'm currently reading Shop Class as Soulcraft, by Matthew Crawford. It came out in 2010 and, though several people recommended it to me then, I had never gotten around to it. This book is prompting so many ideas and thoughts that I'm constantly jotting down notes and thinking about how these ideas might affect my teaching and my practice as a programmer. I have a few short posts in mind based on the book, if only I commit time to flesh them out. Here are two passages, one short and one long, from my notes.

Fixing things may be a cure for narcissism.

Countless times since that day, a more experienced mechanic has pointed out to me something that was right in front of my face, but which I lacked the knowledge to see. It is an uncanny experience; the raw sensual data reaching my eye before and after are the same, but without the pertinent framework of meaning, the features in question are invisible. Once they have been pointed out, it seems impossible that I should not have seen them before.

Both strike a chord for me as I learn an area I know only the surface of. Learning changes us.

May 19, 2023 2:57 PM

Help! Teaching Web Development in 2023

With the exception of teaching our database course during the COVID year, I have been teaching a stable pair of courses for the last many semesters: Programming Languages in the spring and our compilers course, Translation of Programming Languages, in the fall. That will change this fall due to some issues with enrollments and course demands. I'll be teaching a course in client-side web development.

The official number and title of the course are "CS 1100 Web Development: Client-Side Coding". The catalog description for the course was written years ago by committee:

Client-side web development adhering to current Web standards. Includes by-hand web page development involving basic HTML, CSS, data acquisition using forms, and JavaScript for data validation and simple web-based tools.

As you might guess from the 1000-level number, this is an introductory course suitable for even first-year students. Learning to use HTML, CSS, and JavaScript effectively is the focal point. It was designed as a service course for non-majors, with the primary audience these days being students in our Interactive Digital Studies program. Students in that program learn some HTML and CSS in another course, but that course is not a prerequisite to ours. A few students will come in with a little HTML5+CSS3 experience, but not all.

So that's where I am. As I mentioned, this is one of my first courses to design from scratch in a long time. Other than Database Systems, we have to go back to Software Engineering in 2009. Starting from scratch is fun but can be daunting, especially in a course outside my core competency of hard-core computer science.

The really big change, though, was mentioned two paragraphs ago: non-majors. I don't think I've taught non-majors since teaching my university's capstone 21 years ago -- so long ago that this blog did not yet exist. I haven't taught a non-majors' programming course in even longer, 25 years or more, when I last taught BASIC. That is so long ago that there was no "Visual" in the language name!

So: new area, new content, new target audience. I have a lot of work to do this summer.

I could use some advice from those of you who do web development for a living, who know someone who does, or who are more up to date on the field than I.

Generally, what should a course with this title and catalog description be teaching to beginners in 2023?

Specifically, can you point me toward...

- similar courses with material online that I could learn from?

- resources for students to use as they learn: websites, videos, even books?

For example, a former student and now friend mentioned that the w3schools website includes a JavaScript tutorial which allows students to type and test code within the web browser. That might simplify practice greatly for non-CS students while they are learning other development tools.

I have so many questions to answer about tools in particular right now: Should we use an IDE or a simple text editor? Which one? Should we learn raw JavaScript or a simple framework? If a framework, which one?

This isn't a job-training course, but to the extent that's reasonable I would like for students to see a reasonable facsimile of what they might encounter out in industry.

Thinking back, I guess I'm glad now that I decided to play around some with JavaScript in 2022... At least I now have more context for evaluating the options available for this course.

If you have any thoughts or suggestions, please do send them along. Sending email or replying on Mastodon or Twitter all work. I'll keep you posted on what I learn.

May 07, 2023 8:36 AM

"The Society for the Diffusion of Useful Knowledge"

I just started reading Joshua Kendall's The Man Who Made Lists, a story about Peter Mark Roget. Long before compiling his namesake thesaurus, Roget was a medical doctor with a local practice. After a family tragedy, though, he returned to teaching and became a science writer:

In the 1820s and 1830s, Roget would publish three hundred thousand words in the Encyclopaedia Britannica and also several lengthy review articles for the Society for the Diffusion of Useful Knowledge, the organization affiliated with the new University of London, which sought to enable the British working class to educate itself.

What a noble goal, enabling the working class to educate itself. And what a cool name: The Society for the Diffusion of Useful Knowledge!

For many years, my university has provided a series of talks for retirees, on topics from various departments on campus. This is a fine public service, though without the grand vision -- or the wonderful name -- of the Society for the Diffusion of Useful Knowledge. I suspect that most universities depend too much on tuition and lower costs these days to mount an ambitious effort to enable the working class to educate itself.

Mental illness ran in Roget's family. Kendall wonders if Roget's "lifelong desire to bring order to the world" -- through his lecturing, his writing, and ultimately his thesaurus, which attempted to classify every word and concept -- may have "insulated him from his turbulent emotions" and helped him stave off the depression that afflicted several of his family members.

Academics often live an obsessive connection with the disciplines they practice and study. Certainly that sort of focus can be bad for a person when taken too far. (Is it possible for an obsession not to go too far?) For me, though, the focus of studying something deeply, organizing its parts, and trying to communicate it to others through my courses and writing has always felt like a gift. The activity has healing properties all its own.

In any case, the name "The Society for the Diffusion of Useful Knowledge" made me smile. Reading has the power to heal, too.

April 23, 2023 12:09 PM

PyCon Day 2

Another way that attending a virtual conference is like the in-person experience: you can oversleep, or lose track of time. After a long morning of activity before the time-shifted start of Day 2, I took a nap before the 11:45 talk, and...

Talk 1: Python's Syntactic Sugar

Grrr. I missed the first two-thirds of this talk, which I greatly anticipated, but I slept longer than I planned. My body must have needed more than I was giving it.

I saw enough of the talk, though, to know I want to watch the video on YouTube when it shows up. This topic is one of my favorite topics in programming languages: What is the smallest set of features we need to implement the rest of the language? The speaker spent a couple of years implementing various Python features in terms of others, and arrived at a list of only ten that he could not translate away. The rest are sugar. I missed the list at the beginning of the talk, but I gathered a few of its members in the ten minutes I watched: while, raise, and try/except.

I love this kind of exercise: "How can you translate the statement if X: Y into one that uses only core features?" Here's one attempt the speaker gave:

    try:
        while X:
            Y
            raise _DONE
    except _DONE:
        None

I was today days old when I learned that Python's bool subclasses int, that True == 1, and that False == 0. That bit of knowledge was worth interrupting my nap to catch the end of the talk. Even better, this talk was based on a series of blog posts. Video is okay, but I love to read and play with ideas in written form. This series vaults to the top of my reading list for the coming days.
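Both ideas are easy to poke at in a few lines of Python. This is only a rough sketch of my own, not the speaker's code: the `_DONE` exception class and the `if_via_while` helper are names I made up so that the translation above has something to run inside.

```python
# Hypothetical sentinel exception, standing in for the talk's _DONE.
class _DONE(Exception):
    pass


def if_via_while(x, y):
    """Call y() when x is truthy, with while/raise/try standing in for if."""
    try:
        while x:
            y()
            raise _DONE  # leave the loop after a single pass
    except _DONE:
        pass


calls = []
if_via_while(True, lambda: calls.append("ran"))
if_via_while(False, lambda: calls.append("skipped"))
print(calls)                   # ['ran']

# bool is a subclass of int, so True and False behave like 1 and 0.
print(issubclass(bool, int))   # True
print(True == 1, False == 0)   # True True
print(True + True)             # 2
```

The `raise` inside the loop is what keeps `while` from repeating: the body runs at most once, just like the body of an `if`.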

Talk 2: Subclassing, Composition, Python, and You

Okay, so this guy doesn't like subclasses much. Fair enough, but... some of his concerns seem to be more about the way Python classes work (open borders with their super- and subclasses) than with the idea itself. He showed a lot of ways one can go wrong with arcane uses of Python subclassing, things I've never thought to do with a subclass in my many years doing OO programming. There are plenty of simpler uses of inheritance that are useful and understandable.

Still, I liked this talk, and the speaker. He was honest about his biases, and he clearly cares about programs and programmers. His excitement gave the talk energy. The second half of the talk included a few good design examples, using subclassing and composition together to achieve various ends. It also recommended the book Architecture Patterns with Python. I haven't read a new software patterns book in a while, so I'll give this one a look.

Toward the end, the speaker referenced the line "Software engineering is programming integrated over time." Apparently, this is a Google thing, but it was new to me. Clever. I'll think on it.

Talk 3: How We Are Making CPython Faster -- Past, Present and Future

I did not realize that, until Python 3.11, efforts to make the interpreter faster had been rather limited. The speaker mentioned one improvement made in 3.7 to optimize the typical method invocation, obj.meth(arg), and one in 3.8 that sped up global variable access by using a cache. There are others, but nothing systematic.

At this point, the talk became mutually recursive with the Friday talk "Inside CPython 3.11's New Specializing, Adaptive Interpreter". The speaker asked us to go watch that talk and return. If I were more ambitious, I'd add a link to that talk now, but I'll let any of you who are interested visit yesterday's post and scroll down two paragraphs.

He then continued with improvements currently in the works, including:

- efforts to optimize over larger regions, such as the different elements of a function call

- use of partial evaluation when possible

- specialization of code

- efforts to speed up memory management and garbage collection

He also mentioned possible improvements related to C extension code, but I didn't catch the substance of this one. The speaker offered the audience a pithy takeaway from his talk: Python is always getting faster. Do the planet a favor and upgrade to the latest version as soon as you can. That's a nice hook.

There was lots of good stuff here. Whenever I hear compiler talks like this, I almost immediately start thinking about how I might work some of the ideas into my compiler course. To do more with optimization, we would have to move faster through construction of a baseline compiler, skipping some or all of the classic material. That's a trade-off I've been reluctant to make, given the course's role in our curriculum as a compilers-for-everyone experience. I remain tempted, though, and open to a different treatment.

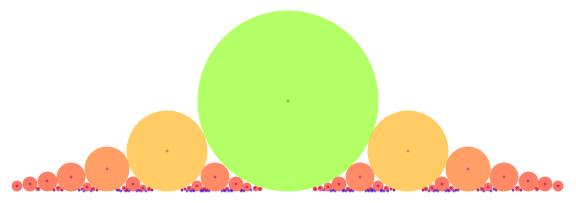

Talk 4: The Lost Art of Diagrams: Making Complex Ideas Easy to See with Python

Early on, this talk contained a line that programmers sometimes need to remember: Good documentation shows respect for users. Good diagrams, said the speaker, can improve users' lives. The talk was a nice general introduction to some of the design choices available to us as we create diagrams, including the use of color, shading, and shapes (Venn diagrams, concentric circles, etc.). It then discussed a few tools one can use to generate better diagrams. The one that appealed most to me was Mermaid.js, which uses a Markdown-like syntax that reminded me of GraphViz's Dot language. My students and I use GraphViz, so picking up Mermaid might be a comfortable task.

~~~~~

My second day at virtual PyCon confirmed that attending was a good choice. I've seen enough language-specific material to get me thinking new thoughts about my courses, plus a few other topics to broaden the experience. A nice break from the semester's grind.

Posted by Eugene Wallingford | Permalink | Categories: Computing, Software Development, Teaching and Learning

April 09, 2023 8:24 AM

It Was Just Revision

There are several revised approaches to "what's the deal with the ring?" presented in "The History of The Lord of the Rings", and, as you read through the drafts, the material just ... slowly gets better! Bit by bit, the familiar angles emerge. There seems not to have been any magic moment: no electric thought in the bathtub, circa 1931, that sent Tolkien rushing to find a pen.

It was just revision.

Then:

... if Tolkien can find his way to the One Ring in the middle of the fifth draft, so can I, and so can you.

-- Robin Sloan, How The Ring Got Good

Posted by Eugene Wallingford | Permalink | Categories: General, Software Development, Teaching and Learning

March 29, 2023 2:39 PM

Fighting Entropy in Class

On Friday, I wrote a note to myself about updating an upcoming class session:

Clean out the old cruft. Simplify, simplify, simplify! I want students to grok the idea and the implementation. All that Lisp and Scheme history is fun for me, but it gets in the students' way.

This is part of an ongoing battle for me. Intellectually, I know to design class sessions and activities focused on where students are and what they need to do in order to learn. Yet it continually happens that I strike upon a good approach for a session, and then over the years I stick a little extra in here and there; within a few iterations I have a big ball of mud. Or I fool myself that some bit of history that I find fascinating is somehow essential for students to learn about, too, so I keep it in a session. Over the years, the history grows more and more distant; the needs of the session evolve, but I keep the old trivia in there, filling up time and distracting my students.

It's hard.

The specific case in question here is a session in my programming languages course called Creating New Syntax. The session has the modest goal of introducing students to the idea of using macros and other tools to define new syntactic constructs in a language. My students come into the course with no Racket or Lisp experience, and only a few have enough experience with C/C++ that they may have seen its textual macros. My plan for this session is to expose them to a few ideas and then to demonstrate one of Racket's wonderful facilities for creating new syntax. Given the other demands of the course, we don't have time to go deep, only to get a taste.

[In my dreams, I sometimes imagine reorienting this part of my course around something like Matthew Butterick's Beautiful Racket... Maybe someday.]

Looking at my notes for this session on Friday, I remembered just how overloaded and distracting the session has become. Over the years, I've pared away the extraneous material on macros in Lisp and C, but now it has evolved to include too many ideas and incomplete examples of macros in Racket. Each by itself might make for a useful part of the story. Together, they pull attention here and there without ever closing the deal.

I feel like the story I've been telling is getting in the way of the one or two key ideas about this topic I want students to walk away from the course with. It's time to clean the session up -- to make some major changes -- and tell a more effective story.

The specific idea I seized upon on Friday is an old idea I've had in mind for a while but never tried: adding a Python-like for-loop:

(for i in lst: (sqrt i))

[Yes, I know that Racket already has a fine set of for-loops! This is just a simple form that lets my students connect their fondness for Python with the topic at hand.]

This functional loop is equivalent to a Racket map expression:

    (map (lambda (i)
           (sqrt i))
         lst)

We can write a simple list-to-list translator that converts the loop to an equivalent map:

    (define for-to-map
      (lambda (for-exp)
        (let ((var (second for-exp))
              (lst (fourth for-exp))
              (exp (sixth for-exp)))
          (list 'map
                (list 'lambda (list var) exp)
                lst))))

This code handles only the surface syntax of the new form. To add it to the language, we'd have to recursively translate the form. But this simple function alone demonstrates the idea of translational semantics, and shows just how easy it can be to convert a simple syntactic abstraction into an equivalent core form.
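For students who think in Python, the recursive version might look something like the sketch below. It is an illustration under a few assumptions, not code from my course: nested Python lists stand in for Racket lists, strings stand in for symbols, the `:` is treated as its own token, and the function name `for_to_map` is simply borrowed from the Racket version.

```python
def for_to_map(exp):
    """Recursively rewrite ['for', var, 'in', lst, ':', body] into map form."""
    if not isinstance(exp, list):
        return exp  # symbols and numbers translate to themselves
    # Translate sub-expressions first, so nested loops are handled too.
    exp = [for_to_map(part) for part in exp]
    if len(exp) == 6 and exp[0] == "for" and exp[2] == "in" and exp[4] == ":":
        _, var, _, lst, _, body = exp
        return ["map", ["lambda", [var], body], lst]
    return exp


nested = ["for", "row", "in", "rows", ":",
          ["for", "i", "in", "row", ":", ["sqrt", "i"]]]
print(for_to_map(nested))
# ['map', ['lambda', ['row'], ['map', ['lambda', ['i'], ['sqrt', 'i']], 'row']], 'rows']
```

Translating the parts before the whole is what lets a loop nested inside another loop's body come out as a map nested inside a lambda.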

Racket, of course, gives us better options! Here is the same transformer using the syntax-rules operator:

    (define-syntax for-p
      (syntax-rules (in :)
        ((for-p var in lst : exp)
         (map (lambda (var) exp) lst))))

So easy. So powerful. So clear. And this does more than translate surface syntax in the form of a Racket list; it enables the Racket language processor to expand the expression in place and execute the result:

    > (for-p i in (range 0 10):
        (sqrt i))
    '(0
      1
      1.4142135623730951
      ...
      2.8284271247461903
      3)

This small example demonstrates the key idea about macros and syntax transformers that I want students to take from this session. I plan to open the session with for-p, and then move on to range-case, a more complex operator that demonstrates more of syntax-rules's range and power.

This sets me up for a fun session in a little over a week. I'm excited to see how it plays with students. Renewed simplicity and focus should help.

March 12, 2023 9:00 AM

A Spectator to Phase Change

Robin Sloan speculates that language-learning models like ChatGPT have gone through a phase change in what they can accomplish.

AI at this moment feels like a mash-up of programming and biology. The programming part is obvious; the biology part becomes apparent when you see AI engineers probing their own creations the way scientists might probe a mouse in a lab.

Like so many people, I find my social media and blog feeds filled with ChatGPT and LLMs and DALL-E and ... speculation about what these tools mean for (1) the production of text and code, and (2) learning to write and program. A lot of that speculation is tinged with fear.

I admire Sloan's effort to be constructive in his approach to the uncertainty:

I've found it helpful, these past few years, to frame my anxieties and dissatisfactions as questions. For example, fed up with the state of social media, I asked: what do I want from the internet, anyway?

It turns out I had an answer to that question.

Where the GPT-alikes are concerned, a question that's emerging for me is:

What could I do with a universal function — a tool for turning just about any X into just about any Y with plain language instructions?

I admit that I am reacting to these developments slowly compared to many people. That's my style in most things: I am more likely to under-react to a change than to over-react, especially at the onset of the change. In this case, there is no chance of immediate peril, so waiting to see what happens as people use these tools seems reasonable. I haven't made any effort to use these tools actively (or even been moved to), so any speculating I do would be uninformed by personal experience.

Instead, I read as people whose work I respect experiment with these tools and try to make sense of them. Occasionally, I draw a small, tentative conclusion, such as that prompting these generators is a lot like prompting students. After a few months of reading and a little reflection, I still think the biggest risk we face is probably that we tend to change the world around us to accommodate our technologies. If we put these tools to work for us in ways that enhance what we do, then the accommodation will pay off. If not, then we may, as Daniel Steinberg wrote in one of his newsletters, stop asking the questions we want to ask and start asking only the questions these tools can answer.

Professionally, I think most about the effect that ChatGPT and its ilk will have on programming and CS education. In these regards, I've been paying special attention to reports from David Humphrey, such as this blog post on his university's attempt to grapple with the widespread availability of these tools. David has approached OpenAI with an open mind and written thoughtfully about the promise and the risk. For example, he has written a lot of code with an LLM assistant and found it improving his ability both to write code and to think about problems. Advanced CS students can benefit from this kind of assistance, too, but David wonders how such tools might interfere with students first learning to program.

What do we educators want from generative programming tools anyway? What do I as a programmer and educator want from them?

So, at this point, my personal interaction with the phase change that Sloan describes has been mostly passive: I read about what others are doing and think about the results of their exploration. Perhaps this post is a guilty conscience asserting that I should be doing more. Really, though, I think of it more as an active form of inaction: an effort to collect some of my thinking as I slowly respond to the changes that are coming. Perhaps some day soon I will feel moved to use these tools as I write code of my own. For now, though, I am content to watch from the sidelines. You can learn a lot just by watching.

Posted by Eugene Wallingford | Permalink | Categories: Personal, Software Development, Teaching and Learning

March 05, 2023 9:47 AM

Context Matters

In this episode of Conversations With Tyler, Cowen asks economist Jeffrey Sachs if he agrees with several other economists' bearish views on a particular issue. Sachs says they "have been wrong ... for 20 years", laughs, and goes on to say:

They just got it wrong time and again. They had failed to understand, and the same with [another economist]. It's the same story. It doesn't fit our model exactly, so it can't happen. It's got to collapse. That's not right. It's happening. That's the story of our time. It's happening.

"It doesn't fit our model, so it can't happen." But it is happening.

When your model keeps saying that something can't happen, but it keeps happening anyway, you may want to reconsider your model. Otherwise, you may miss the dominant story of the era -- not to mention being continually wrong.

Sachs spends much of his time with Cowen emphasizing the importance of context in determining which model to use and which actions to take. This is essential in economics because the world it studies is simply too complex for the models we have now, even the complex models.

I think Sachs's insight applies to any discipline that works with people, including education and software development.

The topic of education even comes up toward the end of the conversation, when Cowen asks Sachs how to "fix graduate education in economics". Sachs says that one of its problems is that they teach econ as if there were "four underlying, natural forces of the social universe" rather than studying the specific context of particular problems.

He goes on to highlight an approach that is affecting every discipline now touched by data analytics:

We have so much statistical machinery to ask the question, "What can you learn from this dataset?" That's the wrong question because the dataset is always a tiny, tiny fraction of what you can know about the problem that you're studying.

Every interesting problem is bigger than any dataset we build from it. The details of the problem matter. Again: context. Sachs suggests that we shouldn't teach econ like physics, with Maxwell's overarching equations, but like biology, with the seemingly arbitrary details of DNA.

In my mind, I immediately began thinking about my discipline. We shouldn't teach software development (or econ!) like pure math. We should teach it as a mix of principles and context, generalities and specific details.

There's almost always a tension in CS programs between timeless knowledge and the details of specific languages, libraries, and tools. Most of our students don't go on to become theoretical computer scientists; they go out to work in the world of messy details, details that keep evolving and morphing into something new.

That makes our job harder than teaching math or some sciences because, like economics:

... we're not studying a stable environment. We're studying a changing environment. Whatever we study in depth will be out of date. We're looking at a moving target.

That dynamic environment creates a challenge for those of us teaching software development or any computing as practiced in the world. CS professors have to constantly be moving, so as not to fall out of date. But they also have to try to identify the enduring principles that their students can count on as they go on to work in the world for several decades.

To be honest, that's part of the fun for many of us CS profs. But it's also why so many CS profs can burn out after 15 or 20 years. A never-ending marathon can wear anyone out.

Anyway, I found Cowen's conversation with Jeffrey Sachs to be surprisingly stimulating, both for thinking about economics and for thinking about software.

Posted by Eugene Wallingford | Permalink | Categories: Computing, Software Development, Teaching and Learning

February 18, 2023 11:16 AM

CS Students Should Take Their Other Courses Seriously, Too

(These days, I'm posting a bit more on Mastodon. It has a higher character limit than Twitter, so I sometimes write longer posts, including quoted passages. Those long posts start to blur in length and content with blog posts. In an effort to blog more, and to preserve writing that may have longer value than a social media post provides, I may start capturing threads there as blog posts here. This post originated as a Mastodon post last week.)

~~~~~

This post contains one of the reasons I tell prospective CS students to take their humanities and social science courses seriously:

In short, the key skill for making sense of the world of information is developing the ability to accurately and neutrally summarize some body of information in your own words.

The original poster responded that it wasn't until going back to pursue a master's degree in library and information science that this lesson hit home for him.

I always valued my humanities and social science courses, both because I enjoyed them and because they let me practice valuable skills that my CS and math courses didn't exercise. But this lesson hit home for me in a different way after I became a professor.

Over my years teaching, I've seen students succeed and struggle in a lot of different ways. The ability to read and synthesize information with facility is one of the main markers of success, one of the things that can differentiate between students who do well and those who don't. It's also hard to develop this skill after students get to college. Nearly every course and major depends on it, even technical courses, even courses and majors that don't foreground this kind of skill. Without it, students keep falling farther behind. It's hard to develop the skill quickly enough to not fall so far behind that success feels impossible.

So, kids and parents, when you ask how to prepare to succeed as a CS student while in high school, one of my answers will almost always be: take four years of courses in every core area, including English and social science. The skills you develop and practice there will pay off many-fold in college.

February 11, 2023 1:53 PM

What does it take to succeed as a CS student?

Today I received an email message similar to this:

I didn't do very well in my first semester, so I'm looking for ways to do better this time around. Do you have any ideas about study resources or tips for succeeding in CS courses?

As an advisor, I'm occasionally asked by students for advice of this sort. As department head, I receive even more queries, because early on I am the faculty member students know best, from campus visits and orientation advising.

When such students have already attempted a CS course or two, my first step is always to learn more about their situation. That way, I can offer suggestions suited to their specific needs.

Sometimes, though, the request comes from a high school student, or a high school student's parent: What is the best way to succeed as a CS student?

To be honest, most of the advice I give is not specific to a computer science major. At a first approximation, what it takes to succeed as a CS student is the same as what it takes to succeed as a student in any major: show up and do the work. But there are a few things a CS student does that are discipline-specific, most of which involve the tools we use.

I've decided to put together a list of suggestions that I can point our students to, and to which I can refer occasionally in order to refresh my mind. My advice usually includes one or all of these suggestions, with a focus on students at the beginning of our program:

0. Go to every class and every lab session. This is #0 because it should go without saying, but sometimes saying it helps. Students don't always have to go to their other courses every day in order to succeed.

1. Work steadily on a course. Do a little work on your course, both programming and reading or study, frequently -- every day, if possible. This gives your brain a chance to see patterns more often and learn more effectively. Cramming may help you pass a test, but it doesn't usually help you learn how to program or make software.

2. Ask your professor questions sooner rather than later. Send email. Visit office hours. This way, you get answers sooner and don't end up spinning your wheels while doing homework. Even worse, feeling confused can lead you to shying away from doing the work, which gets in the way of #1.

3. Get to know your programming environment. When programming in Python, simply feeling comfortable with IDLE, and with the file system where you store your programs and data, can make everything else seem easier. Your mind doesn't have to look up basic actions or worry about details, which enables you to make the most of your programming time: working on the assigned task.

4. Spend some of your study time with IDLE open. Even when you aren't writing a program, the REPL can help you! It lets you try out snippets of code from your reading, to see them work. You can run small experiments of your own, to see whether you understand syntax and operators correctly. You can make up your own examples to fill in the gaps in your understanding of the problem.

Getting used to trying things out in the interactions window can be a huge asset. This is one of the touchstones of being a successful CS student.
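To make the habit of trying things out concrete, here is a minimal sketch of the kind of small experiments a student might run. The specific expressions and the `run_experiments` helper are my own illustrations, not part of any course material; at the IDLE prompt you would simply type each expression and look at the result.

```python
# Hypothetical experiments of the kind one might type at the IDLE prompt.
# The expressions are illustrative assumptions, chosen to check one's
# understanding of a few Python operators.

def run_experiments():
    """Return a dict mapping each expression (as text) to the value Python computes."""
    return {
        "7 / 2": 7 / 2,                # true division always gives a float
        "7 // 2": 7 // 2,              # floor division rounds down
        "-7 // 2": -7 // 2,            # ... toward negative infinity, not toward zero
        "2 + 3 * 4": 2 + 3 * 4,        # * binds more tightly than +
        "'ab' * 3": "ab" * 3,          # * repeats a string
        "[1, 2] + [3]": [1, 2] + [3],  # + concatenates lists
    }

if __name__ == "__main__":
    # Print each experiment next to its result, much as the REPL would show it.
    for expression, value in run_experiments().items():
        print(f"{expression:>14}  ->  {value!r}")
```

Guessing each answer before pressing Enter, and then checking the guess, is what turns a snippet like this into a quick test of your own understanding.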

That's what came to mind at the end of a Friday, at the end of a long week, when I sat down to give advice to one student. I'd love to hear your suggestions for improving the suggestions in my list, or other bits of advice that would help our students. Email me your ideas, and I'll make my list better for anyone who cares to read it.

Posted by Eugene Wallingford | Permalink | Categories: Computing, Software Development, Teaching and Learning

January 29, 2023 7:51 AM

A Thousand Feet of Computing

Cory Doctorow, in a recent New Yorker interview, reminisces about learning to program. The family had a teletype and modem.

My mom was a kindergarten teacher at the time, and she would bring home rolls of brown bathroom hand towels from the kid's bathroom at school, and we would feed a thousand feet of paper towel into the teletype and I would get a thousand feet of computing after school at the end of the day.

Two things:

- Tsk, tsk, Mom. Absconding with school supplies, even if for a noble cause! :-) Fortunately, the statute of limitations on pilfering paper hand towels has likely long since passed.

- I love the idea of doing "a thousand feet of computing" each day. What a wonderful phrase. With no monitor, the teletype churns out paper for every line of code, and every line the code produces. You know what they say: A thousand feet a day makes a happy programmer.

The entire interview is a good read on the role of computing in modern society. The programmer in me also resonated with this quote from Doctorow's 2008 novel, Little Brother:

If you've never programmed a computer, there's nothing like it in the whole world. It's awesome in the truest sense: it can fill you with awe.

My older daughter recommended Little Brother to me when it first came out. I read many of her recommendations promptly, but for some reason this one sits on my shelf unread. (The PDF also sits in my to-read/ folder, unread.) I'll move it to the top of my list.

January 18, 2023 2:46 PM

Prompting AI Generators Is Like Prompting Students

Ethan Mollick tells us how to generate prompts for programs like ChatGPT and DALL-E: give direct and detailed instructions.

Don't ask it to write an essay about how human error causes catastrophes. The AI will come up with a boring and straightforward piece that does the minimum possible to satisfy your simple demand. Instead, remember you are the expert and the AI is a tool to help you write. You should push it in the direction you want. For example, provide clear bullet points to your argument: write an essay with the following points: -Humans are prone to error -Most errors are not that important -In complex systems, some errors are catastrophic -Catastrophes cannot be avoided

But even the results from such a prompt are much less interesting than if we give a more explicit prompt. For instance, we might add:

use an academic tone. use at least one clear example. make it concise. write for a well-informed audience. use a style like the New Yorker. make it at least 7 paragraphs. vary the language in each one. end with an ominous note.