TITLE: A Reflection on Alan Turing, the Turing Test, and Machine Intelligence

AUTHOR: Eugene Wallingford

DATE: March 30, 2012 5:22 PM

DESC:

-----

BODY:

In 1950, Alan Turing published a paper that launched the

discipline of artificial intelligence,

Computing Machinery and Intelligence.

If you have not read this paper, go and do so. Now. 2012

is

the centennial of Turing's birth,

and you owe yourself a read of this seminal paper as part

of the celebration. It is a wonderful work from a wonderful

mind.

This paper gave us the Imitation Game, an attempt to replace

the question of whether a computer could be intelligent

with something more concrete: a probing dialogue. The

Imitation Game became

the Turing Test,

now a staple of modern culture and the inspiration for

contests and analogies and

speculation.

After reading the paper, you will understand something that

many people do not: Turing is not describing a way for us

to tell the difference between human intelligence and machine

intelligence. He is telling us that the distinction is not

as important as we seem to think. Indeed, I think he is

telling us that there is no distinction at all.

I mentioned in

an entry a few years ago

that I always have my undergrad AI students read Turing's

paper and discuss the implications of what we now call the

Turing Test. Students would often get hung up on religious

objections or, as noted in that entry, a deep and a-rational

belief in "gut instinct". A few ended up putting their

heads in the sand, as Turing knew they might, because they

simply didn't want to confront the implication of

intelligences other than our own. And yet they were in an

AI course, learning techniques that enable us to write

"intelligent" programs. Even students with the most diehard

objections wanted to write programs that could learn from

experience.

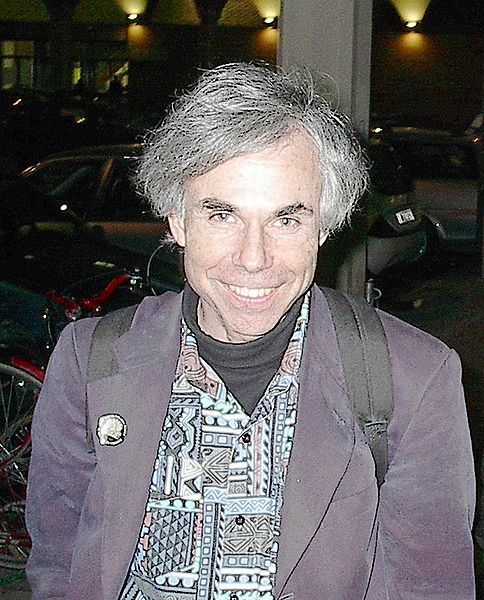

Douglas Hofstadter, who

visited campus this month,

has encountered another response to the Turing Test that

surprised him. On his second day here, in honor of the

Turing centenary, Hofstadter offered a seminar on some ideas

related to the Turing Test. He quoted two snippets of

hypothetical man-machine dialogue from Turing's seminal

paper in his classic

Gödel, Escher, Bach.

Over the years, he has occasionally run into philosophers

who think the Turing Test is shallow, trivial to

pass with trickery and "mere syntax". Some are concerned

that it explores "only behavior". Is behavior all there

is? they ask.

As a computer programmer, I have never been bothered by the idea

that the Turing Test explores only behavior. Certainly, a

computer program is a static construct and, however

complex it is, we can read and understand it. (Students

who take my programming languages course learn that even

another program can read and process programs

in a helpful way.) This was not a problem for Hofstadter

either, growing up as he did in a physicist's household.

Indeed, he found Turing's formulation of the Imitation

Game to be deep and brilliant. Many of us who are drawn

to AI feel the same. "If I could write a program capable

of playing the Imitation Game," we think, "I would have

done something remarkable."

One of Hofstadter's primary goals in writing GEB

was to make a compelling case for Turing's vision.

Those of us who attended the Turing seminar read a section

from Chapter 13 of

Le Ton beau de Marot,

a more recent book by Hofstadter in which he explores many

of the same ideas about words, concepts, meaning, and

machine intelligence as GEB, in the context of

translating text from one language to another. Hofstadter

said the focus in this book is on the subtlety of words

and the ideas they embody, and what that means for

translation. Of course, these are some of the issues

that underlie Turing's use of dialogue as sufficient for

us to understand what it means to be intelligent.

In the seminar, he shared with us some of his efforts to

translate a modern French poem into faithful English. His

source poem had itself been translated from older French

into modern French by a French poet friend of his. I

enjoyed hearing him talk about "the forces" that pushed

him toward and away from particular words and phrases.

Le Ton beau de Marot uses creative dialogues of

the sort seen in GEB, this time between the Ace

Mechanical Translator (his fictional computer program) and

a Dull Rigid Human. Notice the initials of his raconteurs!

They are an homage to Turing. The human translator, Douglas

R. Hofstadter himself, is cast in the role of AMT, which

shares its initials with Alan M. Turing, the man who started

this conversation over sixty years ago.

Like Hofstadter, I have often encountered people who object

to the Turing Test. Many of my AI colleagues are comfortable

with a behavioral test for intelligence but dislike that

Turing considers only linguistic behavior. I am

comfortable with linguistic behavior because it captures what

is for me the most important feature of intelligence: the

ability to express and discuss ideas.

Others object that it sets too low a bar for AI, because it

is agnostic on method. What if a program "passes the test",

and when we look inside the box we don't understand what we

see? Or worse, we do understand what we see and are

unimpressed? I think that this is beside the point.

Not to say that we shouldn't want to understand. If we

found such a program, I think that we would make it an

overriding goal to figure out how it works. But how an

entity manages to be "intelligent" is a different question

from whether it is intelligent. That is precisely

Turing's point!

I agree with Brian Christian, who won the prize for being

"The Most Human Human" in a competition based on Turing's

now-famous test. In

an interview with The Paris Review,

he said,

Some see the history of AI as a dehumanizing narrative;

I see it as much the reverse.

Turing does not diminish what it is to be human when he

suggests that a computer might be able to carry on a rich

conversation about something meaningful. Neither do AI

researchers or teenagers like me, who dreamed of figuring

out just what it is that makes it possible for humans to do

what we do. We ask the question precisely because we

are amazed. Christian again:

We build these things in our own image, leveraging all the

understanding of ourselves we have, and then we get to see

where they fall short. That gap always has something new

to teach us about who we are.

As in science itself, every time we push back the curtain,

we find another layer of amazement -- and more questions.

I agree with Hofstadter. If a computer could do what it

does in Turing's dialogues, then no one could rightly say

that it wasn't "intelligent", whatever that might mean.

Turing was right.

~~~~

PHOTOGRAPH 1: the Alan Turing centenary celebration. Source:

2012 The Alan Turing Year.

PHOTOGRAPH 2: Douglas Hofstadter in Bologna, Italy, 2002. Source:

Wikimedia Commons.

-----