September 29, 2009 5:51 PM

Life, Artificial and Oh So Real

Artificial. Tyler Cowen writes about a new arena of battle for the Turing Test:

I wonder when the average quality of spam comment will exceed the average quality of a non-spam comment.

This is not the noblest goal for AI, but it may be one for which the economic incentive to succeed drives someone to work hard enough to do so.

Oh So Real. I have written periodically over the last sixteen months about being sick with an unnamed and undiagnosed malady. At times, I was sick enough that I was unable to run for stretches of several weeks. When I tried to run, I was able to run only slowly and only for short distances. What's worse, the symptoms always returned; sometimes they worsened. The inability of my doctors to uncover a cause worried me. The inability to run frustrated and disappointed me.

Yesterday I read an essay by a runner about the need to run through a battle with cancer:

I knew, though, if I was going to survive, I'd have to keep running. I knew it instinctively. It was as though running was as essential as breathing.

Jenny's essay is by turns poetic and clinical, harshly realistic and hopelessly romantic. It puts my own struggles into a much larger context and makes them seem smaller. Yet in my bones I can understand what she means: "... that is why I love running: nothing makes me feel more alive. I hope I can run forever."

September 28, 2009 8:32 PM

Two Sides of My Job

Today brought high contrast to my daily duties.

I spent my morning preparing a talk on computer science, careers, and job prospects for an audience of high school counselors from around the state. Then I gave the talk and lunched with them. Both prep and presentation were a lot of fun! I get to take some element of computer science and use it as a way to communicate how much fun CS is and why these counselors should encourage their students to consider CS as a major. Many of the adults in my audience were likely prepared for the worst, to be bored by a hard topic they don't understand. Seeing the looks in their eyes when they saw how an image could be disassembled and reassembled to hide information -- I hope the look in my eyes reflected it.

Early afternoon found me working on next semester's schedule of courses and responding to requests for consultations on curricular changes being made by another department. The former is in most ways paperwork, seemingly for paperwork's sake. The latter requires delicate language and, all too frequently, politics. Every minute seems a grind. This is an experience of getting caught up in stupid details, administration style.

I ended the day by visiting with a local company about a software project that one of our senior project courses might work on. I learned a little about pumps and viscosity and flow, and we discussed the challenges they face in deploying an application that meets all their needs. Working on real problems with real clients is still one of the great things about building software.

Being head of our department has brought me more opportunities like the ones that bookended my day, but it has also thrust me into far too many battles with paperwork and academic process and politics like the ones that filled the in-between time. After four-plus years, I have not come to enjoy that part of the job, even when I appreciate the value it can bring to our department and university when I do it well. I know it's a problem when I have to struggle to maintain my focus on the task at hand just to make progress. Such tasks offer nothing like the flow that comes from preparing and giving a talk, writing code, or working on a hard problem.

A few months ago I read about a tool called Freedom, which "frees you from the distractions of the internet, allowing you time to code, write, or create." (It does this by disabling one's otherwise functional networking for up to eight hours.) I don't use Freedom or any tool like it, but there are moments when I fear I might need one to keep doing my work. Funny, but none of those moments involve preparing and giving a talk, writing code, or working on a hard problem.

Tim Bray said it well:

If you need extra mental discipline or tool support to get the focus you need to do what you have to do, there's nothing wrong with that, I suppose. But if none of your work is pulling you into The Zone, quite possibly you have a job problem not an Internet problem.

Fortunately, some of my work pulls me into The Zone. Days like today remind me how different it feels. When I am mired in some tarpit outside of The Zone, it's most definitely not an Internet problem.

September 27, 2009 11:19 AM

History Mournful and Glorious

While prepping for my software engineering course last summer, I was re-reading some old articles by Philip Greenspun on teaching, especially an SE course focused on building on-line communities. One of the talks he gives is called Online Communities. This talk builds on the notion that "online communities are at the heart of most successful applications of the Internet". Writing in 2006, he cites amazon.com, AOL, and eBay as examples, and the three years since have only strengthened his case. MySpace seems to have passed its peak yet remains an active community. I sit here connected with friends from grade school who have been flocking to Facebook in droves, and Twitter is now one of my primary sources for links to valuable professional articles and commentary.

As a university professor, the next two bullets in his outline evoke both sadness and hope:

- the mournful history of applying technology to education: amplifying existing teachers

- the beauty of online communities: expanding the number of teachers

Perhaps we existing faculty are limited by our background, education, or circumstances. Perhaps we simply choose the more comfortable path of doing what has been done in the past. Even those of us invested in doing things differently sometimes feel like strangers in a strange land.

The great hope of the internet and the web is that it lets many people teach who otherwise wouldn't have a convenient way to reach a mass audience except by textbooks. This is a threat to existing institutions but also perhaps an open door on a better world for all of us.

September 24, 2009 8:07 PM

Always Start With A Test

... AKA Test-Driven X: Teaching

Writing a test before writing code provides a wonderful level of accountability to self and world. The test helps me know what code to write and when I am done. I am often weak and like being able to keep myself honest. Tests also enable me to tell my colleagues and my boss.

These days, I usually think in test-first terms whenever I am creating something. More and more, I find myself wondering whether a test-driven approach might work even better. In a recent blog entry, Ron Jeffries asked for analogues of test-driven development outside the software world. My first thought was, what can't be done test-first or even test-driven? Jeffries is, in many ways, better grounded than I am, so rather than talk about accountability, he writes about clarity and concreteness as virtues of TDD. Clarity, concreteness, and accountability seem like good features to build into most processes that create useful artifacts.

I once wrote about student outcomes assessment as a source of accountability and continuous feedback in the university. I quoted Ward Cunningham at the top of that entry, "It's all talk until the tests run", to suggest to myself a connection to test-first and test-driven development.

Tests are often used to measure student outcomes from courses that we teach at all levels of education. Many people worry about placing too much emphasis on a specific test as a way to evaluate student learning. Among other things, they worry about "teaching to the test". The implication is that we will focus all of our instruction and learning efforts on that test and miss out on genuine learning. Done poorly, teaching to the test limits learning in the way people worry it will. But we can make a similar mistake when using tests to drive our programming, by never generalizing our code beyond a specific set of input values. We don't want to do that in TDD, and we don't want to do that when teaching. The point of the test is to hold us accountable: Can our students actually do what we claim to teach them?
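The programming version of "teaching to the test" can be made concrete with a small sketch. The leap-year example and all of the names below are hypothetical, chosen only for illustration: the first function passes the tests by memorizing the tested inputs, while the second generalizes beyond them.

```python
# Hypothetical illustration: two implementations that pass the same tests.

# The specific inputs the tests happen to check.
TEST_CASES = {2000: True, 1900: False, 2008: True, 2009: False}


def is_leap_year_overfit(year):
    """'Teaches to the test': hard-codes answers for the tested inputs only."""
    known_answers = {2000: True, 1900: False, 2008: True, 2009: False}
    return known_answers.get(year, False)


def is_leap_year(year):
    """Generalizes: applies the Gregorian calendar rule to any year."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def passes_tests(candidate):
    """Return True if the candidate agrees with every test case."""
    return all(candidate(year) == expected
               for year, expected in TEST_CASES.items())


print(passes_tests(is_leap_year_overfit), passes_tests(is_leap_year))
print(is_leap_year_overfit(2012), is_leap_year(2012))
```

Both versions pass every test, yet they disagree about 2012, an input the tests never mention. The tests hold us accountable only if we also do the generalizing.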

Before the student learning outcomes craze, the common syllabus was the closest thing most departments had to a set of tests for a course. The department could enumerate a set of topics and maybe even a set of skills expected of the course. Faculty new to the course could learn a lot about what to teach by studying the syllabus. Many departments create common final exams for courses with many students spread across many sections and many instructors. The common final isn't exactly like our software tests, though. An instructor may well have done a great job teaching the course, but students have to invest time and energy to pass the test. Conversely, students may well work hard to make sense of what they are taught in class, but the instructor may have done a poor or incomplete job of covering the assigned topics.

I thought a lot about TDD as I was designing what is for me a new course this semester, Software Engineering. My department does not have common syllabi for courses (yet), so I worked from a big binder of material given to me by the person who has taught the course for the last few years. The material was quite useful, but it stopped short of enumerating the specific outcomes of the course as it has been taught. Besides, I wanted to put my stamp on the course, too... I thought about what the outcomes should be and how I might help students reach them. I didn't get much farther than identifying a set of skills for students to begin learning and a set of tools with which they should be familiar, if not facile.

Greg Wilson has done a very nice job of designing his Software Carpentry course in the open and using user stories and other target outcomes to drive his course design. In modern parlance, my efforts in this regard can be tagged #fail. I'm not too surprised, though. For me, teaching a course the first time is more akin to an architectural spike than a first iteration. I have to scope out the neighborhood before I know how to build.

Ideally, perhaps I should have done the spike prior to this semester, but neither the world nor I are ideal. Doing the course this way doesn't work all that badly for the students, and usually no worse than taking a course that has been designed up front by someone who hasn't benefitted from the feedback of teaching the course. In the latter case, the expected outcomes and tests to know they have been met will be imperfect. I tend to be like other faculty in expecting too much from a course the first few times I teach it. If I design the new course all the way to the bottom, either the course is painful for students in expecting too much too fast, or it is painful for me as I un-design and re-design huge portions of the course.

Ultimately, writing and running tests come back to accountability. Accountability is in short supply in many circles, university curricula included, and tests help us to have it. We owe it to our users, and we owe it to ourselves.

September 22, 2009 5:18 PM

Agile Hippies?

The last two class days in my Software Engineering course, I have introduced students to agile software development. Here is a recent Twitter exchange between me and a student in the course:

Student: Agile development is the hippy approach to programming.

Eugene: @student: "Agile development is the hippy approach to programming." Perhaps... If valuing people makes me a hippie, so be it.

Student: @wallingf I leave it up to the individual whether they interpret that as good or bad. Personally, I prefer agile, but am also a free spirit

Eugene: @student It's just rare that anyone would mistake me for a hippie...

Student: @wallingf you do like the phrase "roll my own" a lot

I guess I can never be sure what to expect when I tell a story. I've been talking about agile approaches with colleagues for a decade, and teaching them to students off and on since 2002. No student has ever described agile to me as hippie-style development.

As I think about it from the newcomer's perspective, though, it makes sense. Consider Change, and keep changing, a recent article by Liz Keogh:

Sometimes people ask me, "When we've gone Agile ... what will it look like?" ...

Things will be changing. You'll be in a better place to respond to change. Your people will have a culture of courage and respect, and will seek continuous improvement, feedback and learning. ...

I have no idea what skills your people will need. The people you have are good people; start with them. ...

There is no end-state with Agile or Lean. You'll be improving, and continue to improve, trying new things out and discarding the ones which don't work. ...

I love Liz Keogh's stuff. Is this article representative of the agile community? I think so, at least of a vibrant part of it. Reading this article as I did right after participating in the above exchange, I can see what the student means.

I do not think there is a necessary connection between hippie culture and a preference for agile development. I am so not a hippie. Ask anyone who knows me. I see agile ideas from a perspective of pragmatism. Change happens. Responding to it, if possible, is better than trying to ignore it while it happens before my eyes.

Then again, a lot of my friends in the agile community probably were hippies, or could have been. The Lisp and Smalltalk worlds have long been sources of agile practice, and I am willing to speculate that the counterculture has long been over-represented there. Certainly, those worlds are countercultural amid the laced-up corporate world of Cobol, PL/I, and Java.

I decided to google "agile hippie" to see who else has talked about this connection. The top hit talks about common code ownership as "hippie socialism" and highlights the need for a strong team leader to make XP work. Hmm. Then the second hit is an essay by Lisp guru and countercultural thinker Richard Gabriel. It refers to agile methodologies as rising from the world of paid consultants and open-source software as the hippie thing.

One of Gabriel's points in this essay echoes something I told my students today: the difference between traditional and agile approaches to software is a matter of degree and style, not a difference in kind. I told my students that nearly all software development these days is iterative development, more or less. The agile community simply prefers and encourages an even tighter spiral through the stages of development. Feedback is good.

Folks may see a hippie connection in agile's emphasis on conversation, working together, and close teamwork. One thing I like about the Gabriel essay is its mention of transparency -- being more open about the process to the rest of the world, especially the client. I need to bring this element out explicitly in my presentation, too.

Maybe even the most straight-laced of agile developers have a touch of hippie radical inside them.

September 20, 2009 7:20 PM

A Ditty For Running Long

Courtesy of Waylon Jennings:

Straight'nin' the curves

Flat'nin' the hills

Someday the mountain might get 'em

But the law never will

On most of my long runs, the road is rarely straight. Even when I'm not at the edge of comfort, I like to take curves tight. I'm not sure why; I guess it makes me feel like I'm racing. And, inevitably, two things come to mind. In one of those curves, I hear Waylon singing, and then I start thinking of the math that goes with straightening curves in the trail.

This week, I did my long run on Saturday, so that my family could take a day at the fair. This was my first 20-miler in two years. I last ran a distance that long in the Marine Corps Marathon, and I last ran a training run that long three weeks before the marathon, at the peak of my plan.

This 20-miler went well. I started slowly but maintained a steady pace early. I ran a bit faster in the middle and then returned to a slower pace to finish. I ended up doing 20.5 miles or so, averaging 9:30 per mile. Even after years of marathon training, I still haven't completely grasped the idea that most of my long runs should be 45 to 90 seconds per mile slower than the goal pace for my race. If that's true, then this run was in the perfect neighborhood.
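The "perfect neighborhood" claim is easy to check with a little arithmetic. The sketch below assumes the 8:30 goal pace mentioned in the September 13 entry; the helper names are made up for illustration.

```python
def parse_pace(pace):
    """Convert an 'M:SS' pace string to seconds per mile."""
    minutes, seconds = pace.split(":")
    return int(minutes) * 60 + int(seconds)


def format_pace(total_seconds):
    """Convert seconds per mile back to an 'M:SS' string."""
    minutes, seconds = divmod(total_seconds, 60)
    return f"{minutes}:{seconds:02d}"


def long_run_window(goal_pace, slower_by=(45, 90)):
    """Return the (fastest, slowest) long-run paces for a given goal pace."""
    goal = parse_pace(goal_pace)
    return tuple(format_pace(goal + extra) for extra in slower_by)


GOAL_PACE = "8:30"   # assumption: goal pace from the September 13 entry
LONG_RUN_PACE = "9:30"

fast, slow = long_run_window(GOAL_PACE)
in_window = parse_pace(fast) <= parse_pace(LONG_RUN_PACE) <= parse_pace(slow)
print(fast, slow, in_window)
```

With an 8:30 goal, the window runs from 9:15 to 10:00 per mile, so a 9:30 average sits comfortably inside it.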

Afterward, my legs were sore, and they remain so today. I ought not be surprised... I raced a half at marathon pace last Saturday and ran seven miles at near-10K pace on Wednesday. I am tired but feel good. Just hope that I can walk downstairs normally tomorrow!

September 19, 2009 9:09 PM

Quick Hits with an Undercurrent of Change

Yesterday evening, in between volleyball games, I had a chance to do some reading. I marked several one-liners to blog on. I planned a disconnected list of short notes, but after I started writing I realized that they revolve around a common theme: change.

Over the last few months, Kent Beck has been blogging about his experiences creating a new product and trying to promote a new way to think about his design. In his most recent piece, Turning Skills into Money, he talks about how difficult it can be to create change in software service companies, because the economic model under which they operate actually encourages them to have a large cohort of relatively inexperienced and undertrained workers.

The best line on that page, though, is a much-tweeted line from a comment by Niklas Bjørnerstedt:

A good team can learn a new domain much faster than a bad one can learn good practices.

I can't help thinking about the change we would like to create in our students through our software engineering course. Skills and good practices matter. We cannot overemphasize the importance of proficiency, driven by curiosity and a desire to get better.

Then I ran across Jason Fried's The Next Generation Bends Over, a salty and angry lament about the sale of Mint to Intuit. My favorite line, with one symbolic editorial substitution:

Is that the best the next generation can do? Become part of the old generation? How about kicking the $%^& out of the old guys? What ever happened to that?

I experimented with Mint and liked it, though I never convinced myself to go all the way with it. I have tried Quicken, too. It seemed at the same time too little and too much for me, so I've been rolling my own. But I love the idea of Mint and hope to see the idea survive. As the industry leader, Intuit has the leverage to accelerate the change in how people manage their finances, compared to the smaller upstart it purchased.

For those of us who use these products and services, the nature of the risk has just changed. The risk with the small guy is that it might fold up before it spreads the change widely enough to take root. The risk with the big power is that it doesn't really get it and wastes an opportunity to create change (and wealth). I suspect that Intuit gets it and so hold out hope.

Still... I love the feistiness that Fried shows. People with big ideas need not settle. I've been trying to encourage the young people with whom I work, students and recent alumni, to shoot for the moon, whether in business or in grad school.

This story meshed nicely with Paul Graham's Post-Medium Publishing, in which Graham joins in the discussion of what it will be like for creators no longer constrained by the printed page and the firms that have controlled publication in the past. The money line was:

... the really interesting question is not what will happen to existing forms, but what new forms will appear.

Change will happen. It is natural that we all want to think about our esteemed institutions and what the change means for them. But the real excitement lies in what will grow up to replace them. That's where the wealth lies, too. That's true for every discipline that traffics in knowledge and ideas, including our universities.

Finally, Mark Guzdial ruminates on what changes CS education. He concludes:

My first pass analysis suggests that, to make change in CS, invent a language or tool at a well-known institution. Textbooks or curricula rarely make change, and it's really hard to get attention when you're not at a "name" institution.

I think I'll have more to say about this article later, but I certainly know what Mark must be feeling. In addition to his analysis of tools and textbooks and pedagogies, he has his own experience creating a new way to teach computing to non-majors and majors alike. He and his team have developed a promising idea, built the infrastructure to support it, and run experiments to show how well it works. Yet... The CS ed world looks much like it always has, as people keep doing what they've always been doing, for as many reasons as you can imagine. And inertia works against even those with the advantages Mark enumerates. Education is a remarkably conservative place, even our universities.

Posted by Eugene Wallingford | Permalink | Categories: Computing, General, Software Development, Teaching and Learning

September 16, 2009 5:31 PM

The Theory of Relativity, Running Style

A couple of ideas that crystalized for me while racing Saturday.

The Theory of Special Relativity

The faster you run, the closer together the mile markers are.

Later, this made me think of a famous story about Bill Rodgers, one of the greatest marathoners of all time. He was talking with a middle-of-the-pack marathoner, who expressed admiration for Rodgers's being able to finish in a little more than two hours. Rodgers turned the table on the guy and expressed admiration for all the four-hour marathoners. "I can't imagine running for four hours straight," Rodgers said.

Those mile markers are awfully far apart at a four-hour marathoner's pace.

Corollary: Relativity and Accelerating Frames

Runners who accelerate near the finish line pass no one of consequence.

Attempts to gain on competitors later in a race usually fail due to this law. As you change your speed, you find that most of your competitors -- at least the ones you care about -- are changing their speed, too.

At the start of a race, beginners sometimes think that they will save some gas for the end of the race, accelerate over the last few miles, and pass lots of people with the surge. But they don't. Many of those runners are surging, too.

Passing runners who don't surge doesn't count for much, because most of them have slowed down and are easy targets. It's not sporting to revel in passing a stationary object, or one that might as well be.

September 14, 2009 10:53 PM

Old Dreams Live On

"Only one, but it's always the right one."

-- Jose Raoul Capablanca,

when asked how many moves ahead

he looked while playing chess

When I was in high school, I played a lot of chess. That's also when I first learned about computer programming. I almost immediately was tantalized by the idea of writing a program to play chess. At the time, this was still a novelty. Chess programs were getting better, but they couldn't compete with the best humans yet, and I played just well enough to know how hard it was to play the game really well. Like so many people of that era, I thought that playing chess was a perfect paradigm of intelligence. It looked like such a wonderful challenge to the budding programmer in me.

I never wrote a program that played chess well, yet my programming life often crossed paths with the game. My first program written out of passion was a program to implement a ratings system for our chess club. Later, in college, I wrote a program to perform the Swiss system commonly used to run chess tournaments as a part of my senior project. This was a pretty straightforward program, really, but it taught me a lot about data structures, algorithms, and how to model problems.

Though I never wrote a great chessplaying program, that was the problem that mesmerized me and ultimately drew me to artificial intelligence and a major in computer science.

In a practical sense, chess has been "solved", but not in the way that most of us who loved AI as kids had hoped. Rather than reasoning symbolically about positions and plans, attacks and counterattacks, Deep Blue, Fritz, and all of today's programs win by deep search. This is a strategy that works well for serial digital computers but not so well for the human mind.

To some, the computer's approach seems uncivilized even today, but those of us who love AI ought be neither surprised nor chagrined. We have long claimed that intelligence can arise from any suitable architecture. We should be happy to learn how it arises most naturally for machines with fast processors and large memories. Deep Blue's approach may not help us to understand how we humans manage to play the game well in the face of its complexity and depth, but it turns out that this is another question entirely.

Reading David Mechner's All Systems Go last week brought back a flood of memories. The Eastern game of Go stands alone these days among the great two-person board games, unconquered by the onslaught of raw machine power. The game's complexity is enormous, with a branching factor at each ply so high that search-based programs soon drown in a flood of positions. As such, Go encourages programmers to dream the Big Dream of implementing a deliberative, symbolic reasoner in order to create a program that plays the game well. The hubris and well-meaning naivete of AI researchers have promised huge advances throughout the years, only to have ambitious predictions go unfulfilled in the face of unexpected complexity. Well-defined problems such as chess turned out to be complex enough that programs reasoning like humans were unable to succeed. Ill-defined problems involving human language and the interconnected network of implicit knowledge that humans seem to navigate so easily -- well, they are even more resistant to our solutions.
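To get a feel for why a high branching factor drowns a search-based program, here is a back-of-the-envelope sketch. The branching factors of roughly 35 for chess and 250 for Go are commonly cited ballpark figures, not numbers taken from Mechner's article, and a real program would prune its search rather than expand every position.

```python
# Rough, commonly cited average branching factors. These figures are
# assumptions for illustration; they do not come from the article.
BRANCHING = {"chess": 35, "go": 250}


def positions_at_depth(branching_factor, plies):
    """Leaf positions in a full-width tree that looks `plies` moves ahead."""
    return branching_factor ** plies


for plies in (2, 4, 6):
    chess = positions_at_depth(BRANCHING["chess"], plies)
    go = positions_at_depth(BRANCHING["go"], plies)
    print(f"{plies} plies: chess ~{chess:.1e}, go ~{go:.1e}, "
          f"ratio ~{go / chess:.0f}x")
```

Under these assumptions the gap between the two games grows with every ply, which is why a few extra moves of lookahead cost a Go program so much more than they cost a chess program.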

Then, when we write programs to play games like chess well, many people -- including some AI researchers -- move the goal line. Schaeffer et al. solved checkers with Chinook, but many say that its use of fast search and big endgame databases is unfair. Chess remains unsolved in the formal sense, but even inexpensive programs available on the mass market play far, far better than all but a handful of humans in the world. The best programs play better than the best humans.

Not so with Go. Mechner writes:

Go is too complex, too subtle a game to yield to exhaustive, relatively simpleminded computer searches. To master it, a program has to think more like a person.

And then:

Go sends investigators back to the basics--to the study of learning, of knowledge representation, of pattern recognition and strategic planning. It forces us to find new models for how we think, or at least for how we think we think.

Ah, the dream lives!

Even so, I am nervous when I read Mechner talking about the subtlety of Go, the depth of its strategy, and the impossibility of playing it well by search and power. The histories of AI and CS have demonstrated repeatedly that what we think difficult often turns out to be straightforward for the right sort of program, and that what we think easy often turns out to be achingly difficult to implement. What Mechner calls 'subtle' about Go may well just be a name for our ignorance, for our lack of understanding today. It might be wise for Go aficionados to remain humble... Man's hubris survives only until the gods see fit to smash it.

We humans draw on the challenge of great problems to inspire us to study, work, and create. Lance Fortnow wrote recently about the mystique of the open problem. He expresses the essence of one of the obstacles we in CS face in trying to excite the current generation of students about our discipline: "It was much more interesting to go to the moon in the 60's than it is today." P versus NP may excite a small group of us, but when kids walk around with iPhones in their pockets and play on-line games more realistic than my real life, it is hard to find the equivalent of the moon mission to excite students with the prospect of computer science. Isn't all of computing like Fermat's last theorem: "Nothing there left to dream there."?

For old fogies like me, there is still a lot of passion and excitement in the challenge of a game like Go. Some days, I get the urge to ditch my serious work -- work that matters to people in the world -- return to my roots, and write a program to play Go. Don't tell me it can't be done.

September 13, 2009 2:06 PM

An Encore Performance

Marathon coaches commonly recommend that runners training for a marathon run a half marathon four to six weeks prior to their big race. A half marathon offers a way to test both speed and endurance, under conditions that resemble the day of the marathon. If nothing else, a half is a good speed workout on a weekend without an insanely long long run.

While training for my previous marathons, the timing had never seemed quite right for me to run a half marathon. We only have one half in the area during the month of September, and those were weeks when I was trying to work in a final 20-miler.

This year, I decided to run a half as a test: Do I still think I can be ready to run a marathon in six weeks? In past years, this was never a question, but when I drew up my training plan a mere eight weeks ago, I had real doubts that my body would allow me to work up to the speed and mileage I needed for a marathon. The Park to Park Half Marathon, which has quickly become the top distance race in the Cedar Valley, was timed perfectly for me, six weeks before my race. I designed a training schedule that ramped my long-run mileage up very slowly, and I needed a break the weekend of Park to Park between 18- and 20-milers. So I penciled the race in to the plan.

My intention for this was typical: run ten miles at marathon goal pace and then, if my body felt good, speed up and "race" the last 5K. My dream goal pace this year is 8:30, which is quite a bit slower than my goal the last few years of training. 8:30 feels good these days. As the race started, I kept telling myself to stay steady, not to speed up just because I thought I could. My catch phrase became, "Don't breathe hard until the 10-mile mark." While that's a tough request, I did manage to run comfortably over the opening miles of the course.

My run followed the script perfectly. I reached the 10-mile mark in 84 minutes. My body felt good enough to speed up. Mile 11: 7:54, including a drink stop. Mile 12: 7:50. Still good. Mile 13: 7:30.

As we neared the 13-mile mark, I was just trailing a group of five or six people and decided in my good spirits to sprint full-out and try to pass them. I took the first n-1 of them easily, but the last was a younger guy who heard me coming and sped up. Did I have another gear? Yes, but so did he. We each surged a couple of times in that last tenth of a mile, but he was too good for me and stayed ahead. I ended up running that sprint in 36 seconds. Later, I saw on the results board that my rival those last few seconds won the under-19 age group. I am not too surprised that a young guy still had more gas in his tank -- and anaerobic capacity -- than I.

My course time: 1:47:47. (My chip time was a few seconds more, because there was no timing mat at the starting line.) I finished 15th in age group and 95th overall out of 550 half-marathoners.
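For the arithmetically inclined, the splits reported above nearly add up to the course time. A quick sketch, with helper names invented for illustration, and remembering that the 10-mile split is given only to the nearest minute:

```python
def to_seconds(clock):
    """Convert 'M:SS' or 'H:MM:SS' to a number of seconds."""
    total = 0
    for part in clock.split(":"):
        total = total * 60 + int(part)
    return total


def to_clock(seconds):
    """Convert a number of seconds to 'H:MM:SS'."""
    hours, rest = divmod(seconds, 3600)
    minutes, secs = divmod(rest, 60)
    return f"{hours}:{minutes:02d}:{secs:02d}"


# Splits as reported in this entry: first ten miles, miles 11 through 13,
# and the final sprint. The 84-minute split is rounded to the minute.
SPLITS = ["84:00", "7:54", "7:50", "7:30", "0:36"]
COURSE_TIME = "1:47:47"

total = sum(to_seconds(split) for split in SPLITS)
print(to_clock(total))                   # 1:47:50
print(total - to_seconds(COURSE_TIME))   # 3
```

The three-second gap is about what one would expect from rounding the 10-mile split.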

This day felt like a rerun of the best-case scenario: a good time, a better time than I might have hoped. My body and mind felt good after the race. That is the good kind of repeat I am happy to see.

I ran this race without a partner to talk me through the time, as I did in Indianapolis. That is good, too, because I'll be running solo at my marathon next month, which will also be much smaller than any other marathon I've run. I'll need to discipline my own mind in Mason City. I did have one pleasant conversation during the half, around the 7- or 8-mile marker. A former student caught up to me, said 'hello', and told me that he was running his first half marathon. We talked about pace and endurance, and he admitted that he was thinking about dropping out after 9 miles. I encouraged him, and he dropped back.

Later, I was happy to hear his name called out on the intercom as he crossed the finish line. The look of satisfaction on his face, having endured something he feared he could not, was worth as much as my own good feeling. Now that I have run several long races and many more 5Ks, it is easy to view the ups and the downs with a jaded eye. But accomplishing a goal as challenging as this is never ho-hum. Seeing Joe finish reminded me of that.

This was a perfect tune-up for me, and now I have some reason to think that I can run a marathon six weeks from now at a reasonable pace. I have a couple more long runs to do -- a 20-miler, then a second 20 or a 22-miler among them -- along with some speed work.

But today, I do what God did on the seventh day: rest. I ache all over.

September 10, 2009 9:48 PM

Starting to Think

Today one of my students tweeted that he had started doing something new: setting aside time each day to sit and think. This admirable discipline led to a Twitter exchange among students that ended with the sentiment that schools don't teach us how to think.

I'm not sure what I think about this. At one level, I know what they mean. Universities offer a lot of courses in which we expect students to be able to think already, but it's rare when courses aim specifically to teach students how to think. Similarly, we don't often teach students how to read, and in software engineering, we don't often teach students how to do analysis. We demonstrate it and hope they catch on. (Welcome to calculus!)

Some courses take aim at how to think, or at least do on paper, but they tend to be gen ed courses or courses in philosophy that most students don't take, or don't take seriously. Majors courses are the best hope for many, because there is some hope that students will care enough about their majors to want to learn to think like experts in the discipline. In CS, our freshman-level discrete structures course is a place where we purport to help students reason the way computer scientists do. In Software Engineering, I've decided the only way we can possibly learn how to build software is to do it and then analyze what happens. Again, I am not so much teaching students how to think as hoping to put them in a position where they can learn.

This is one part of my attempt to start where students are. But where do we go, or try to go, from there?

That said, lots of people in education and academia spend a lot of their time thinking about how to help students learn to think. Last month, I saved a link to a post at Dangerously Irrelevant on Education and Learning to Think. That post assumes that one goal of education is "higher-order thinking" -- thinking about thinking so that we can learn to do it better. It lists a number of features of higher-order thinking, goals for our students and thus for our courses. The list looks awfully attractive to me right now, because my current teaching assignment aims to prepare prospective software developers to work as professionals, and these are precisely the sorts of skills we would like for them to have before they graduate. Systems analysis and requirements gathering are all about imposing meaning, finding structure in apparent disorder. Building software involves uncertainty. Not everything that bears on the task at hand is known. Judgment and self-regulation are essential skills of the professional, but much of our students' previous fourteen or fifteen years of education has taught them not to self-regulate, if only by giving them so few opportunities to do so. When faced with it for the first time, they tend to balk. Should we be surprised?

There are other possible thinking outcomes we might aim for. A while back, I wrote about a particular HS teacher's experience as a summer CS student in Teaching Is Hard. Mark Guzdial also wrote about that teacher's blog entries, and Alan Kay left a comment on Mark's blog. Alan suggested that one of the key traits that we must help students develop is skepticism. This is one of the defining traits of the scientist, who must question what she sees and hears, run experiments to test ideas, and gather evidence to support claims. One of the great lessons of the Enlightenment is that we all can and should think and act like scientists, even when we aren't "doing science". The methods of science are the most reliable way for us to understand the world in which we live. Skepticism and experiment are the best ways to improve how we think and act.

There is more. People such as Richard Gabriel and Paul Graham tell us that education should help us develop taste. This is one of the defining traits of the maker. Just as all people should be able to think and act like scientists, so should all people be able to think and act like creators. This is how we shape the world in which we live. Alan Kay talks about taste, too, in other writings and would surely find much to agree with in Gabriel's and Graham's work.

All this adds up to a pretty tall order for our schools and universities, for our apprenticeships and our workplaces. I don't think it's too much of a cop-out to say that one of the best ways to learn all of these higher-order skills is to do -- act like scientists, act like creators -- and then reflect on what happens.

If this were not enough, the post at Dangerously Irrelevant linked to above closes with a quote that sets up one last hurdle for students and teachers alike:

It is a new challenge to develop educational programs that assume that all individuals, not just an elite, can become competent thinkers.

100% may be beyond our reach, but in general I start from this assumption. Like I said, teaching is hard. So is learning to think.

September 09, 2009 10:04 PM

Reviewing a Career Studying Camouflage

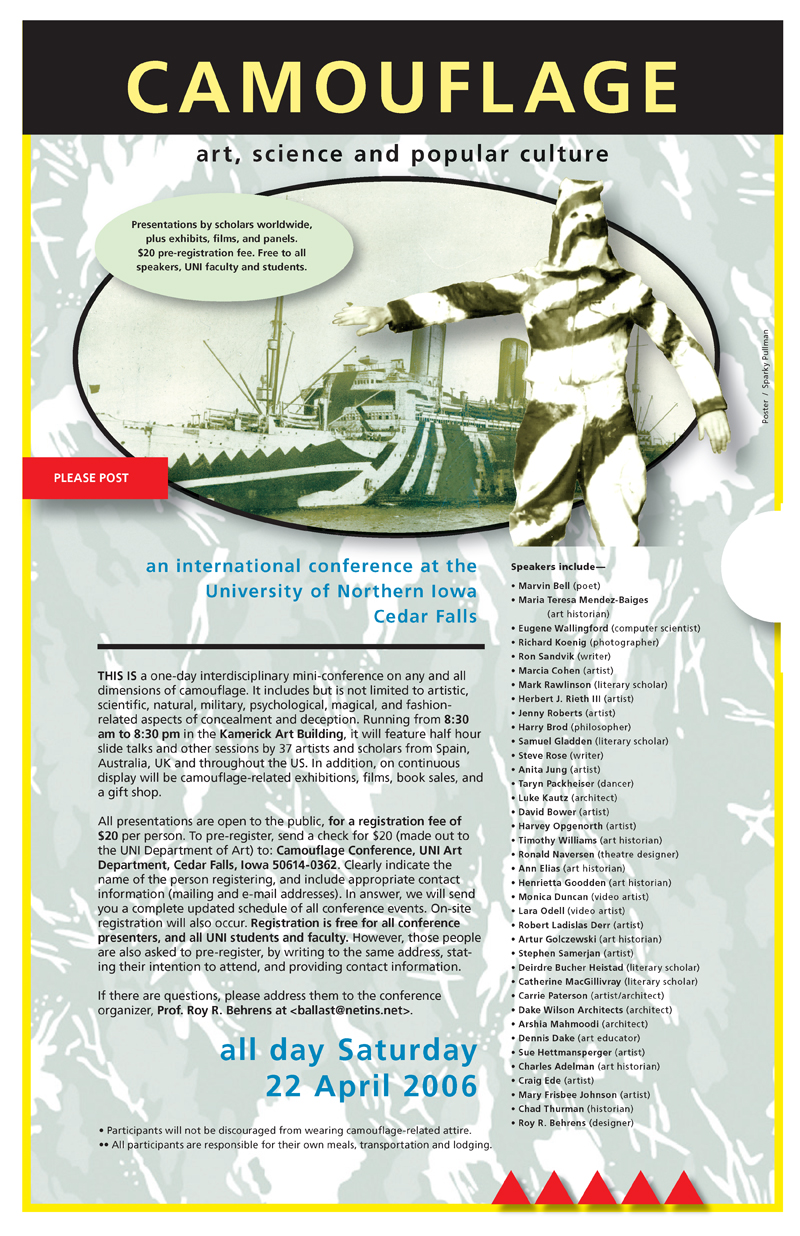

A few years ago I blogged when my university colleague Roy Behrens won a faculty excellence award in his home College of Humanities and Fine Arts. That entry, Teaching as Subversive Inactivity, taught me a lot about teaching, though I don't yet practice it very well. Later, I blogged about A Day with Camouflage Scholars, when I had the opportunity to talk about how a technique of computer science, steganography, related to the idea of camouflage as practiced in art and the military. Behrens is an internationally recognized expert on camouflage who organized an amazing one-day international conference on the subject here at my humble institution. To connect with these scholars, even for a day, was a great thrill. Finally, I blogged about Feats of Association when Behrens gave a mesmerizing talk illustrating "that the human mind is a connection-making machine, an almost unwilling creator of ideas that grow out of the stimuli it encounters."

As you can probably tell, I am a big fan of Behrens and his work. Today, I had a new chance to hear him speak, as he gave a talk associated with his winning another award, this time the university's Distinguished Scholar Award. After hearing this talk, no one could doubt that he is a worthy recipient, whose omnivorous and overarching interest in camouflage reflects a style of learning and investigation that we could all emulate. Today's talk was titled "Unearthing Art and Camouflage" and subtitled "my research on the fence between art and science". It is a fence that more of us should try to work on.

The talk wove together threads from Roy's study of the history and practice of camouflage with bits of his own autobiography. It's a style I enjoyed in Kurt Vonnegut's Palm Sunday and have appreciated at least since my freshman year in college, when in an honors colloquium at Ball State University I was exposed to the idea of history from the point of view of the individual. As someone who likes connections, I'm usually interested in how accomplished people come to do what they do and how they make the connections that end up shaping or even defining their work.

Behrens was in the first generation of his family to attend college. He came from a small Iowa town to study here at UNI, where he first did research in the basement of the same Rod Library where I get my millions. He held his first faculty position here, despite not having a Ph.D. or the terminal degree of his discipline, an M.F.A. After leaving UNI, he earned an M.A. from the Rhode Island School of Design. But with a little lucky timing and a publication record that merited consideration, he found his way into academia.

From where did his interest in camouflage come? He was never interested in the military, though he served as a sergeant in the Vietnam-era Marine Corps. His interest lay in art, but he didn't enjoy the sort of art in which subjective tastes and fashion drove practice and criticism. Instead, he was interested in what was "objective, universal, and enduring" and as such was drawn to design and architecture. He and I share an interest in the latter; I began my undergraduate study as an architecture major. A college professor offered him a chance to do undergraduate research, and his result was a paper titled "Perception in the Visual Arts", in which he first examined the relationship between the art we make and the science that studies how we perceive it. This paper was later published in a major art education journal.

That project marked his first foray into perceptual psychology. Behrens mentioned a particular book that made an impression on him, Aspects of Form, edited by Lancelot Law Whyte. It contained essays on the "primacy of pattern" by scholars in both the arts and the sciences. Readers of this blog know of my deep interest in patterns, especially in software but in all domains. (They also know that I'm a library junkie and won't be surprised to know that I've already borrowed a copy of Whyte's book.)

Behrens noted that it was a short step from "How do people see?" to "How are people prevented from seeing?" Thus began what has been forty years of research on camouflage. He studies not only the artistic side of camouflage but also its history and the science that seeks to understand it. I was surprised to find that as a RISD graduate student he already intended to write a book on the topic. At the time, he contacted Rudolf Arnheim, who was then a perceptual psychologist in New York, with a breathless request for information and guidance. Nothing came of that request, I think, but in 1990 or so Behrens began a fulfilling correspondence with Arnheim that lasted until Arnheim's death in 2007. After Arnheim passed away, Behrens asked his family to send all of his photos so that Behrens could make copies, digitize them, and then return the originals to the family. They agreed, and the result is a complete digital archive of photographs from Arnheim's long professional life. This reminded me of Grady Booch's interest in preservation, both of the works of Dijkstra and of the great software architectures of past and present.

While he was at RISD, Behrens did not know that the school library had 455 original "dazzle" camouflage designs in its collection and so missed out on the opportunity to study them. His ignorance of these works was not a matter of poor scholarship, though; the library didn't realize their significance and so had them uncataloged on a shelf somewhere. In 2007, his graduate alma mater contacted him with news of the items, and he has now begun to study them, forty years later.

As a grad student, Behrens became interested in the analogical link between (perceptual) figure-ground diagrams and (conceptual) Venn diagrams. He mentioned another book that helped him make this connection, Community and Privacy, by Serge Chermayeff and Christopher Alexander, whose diagrams of cities and relationships were Venn diagrams. This story brings to light yet another incidental connection between Behrens's work and mine. Alexander is, of course, the intellectual forebear of the software patterns movement, through his later books Notes On The Synthesis Of Form, The Timeless Way Of Building, A Pattern Language, and The Oregon Experiment.

UNI hired Behrens in 1972 into a temporary position that became permanent. He earned tenure and, fearing the lack of adventure that can come from settling down too soon, immediately left for the University of Wisconsin-Milwaukee. He worked there ten years and earned his tenure anew. It was at UW-M where he finally wrote the book he had begun planning in grad school. Looking back now, he is embarrassed by it and encouraged us not to read it!

At this point in the talk, Behrens told us a little about his area of scholarship. He opened with a meta-note about research in the era of the world wide web and Google. There are many classic papers and papers that scholars should know about. Most of them are not yet on-line, but one can at least find annotated bibliographies and other references to them. He pointed us to one of his own works, Art and Camouflage: An Annotated Bibliography, as an example of what is now available to all on the web.

Awareness of a paper is crucial, because it turns out that often we can find it in print -- even in the periodical archives of our own libraries! These papers are treasures unexplored, waiting to be rediscovered by today's students and researchers.

Camouflage consists of two primary types. The first is high similarity, as typified by figure-ground blending in the arts and mimicry in nature. This is the best known type of camouflage and the type most commonly seen in popular culture.

The second is high difference, or what is often called figure disruption. This sort of camouflage was one of the important lessons of World War I. We can't make a ship invisible, because the background against which it is viewed changes constantly. A British artist named Norman Wilkinson had the insight to reframe the question: We are not trying to hide a ship; we are trying to prevent the ship from being hit by a torpedo!

(Redefining one problem in terms of another is a standard technique in computer science. I remember when I first encountered it as such, in a graduate course on computational theory. All I had to do was find a mapping from a problem to, say, 3-SAT, and -- voilà! -- I knew a lot about it. What a powerful idea.)

This insight gave birth to dazzle camouflage, in which the goal came to be to break an image into incoherent or imperceptible parts. To protect a ship, the disruption need not be permanent; it needed only to slow the attackers sufficiently that they were unable to target it, predict its course, and launch a relatively slow torpedo at it with any success.

Behrens offered that there is a third kind of camouflage, coincident disruption, that is different enough to warrant its own category. Coincident disruption mixes the other two types, both blending into the background and disrupting the viewer's perception. He suggested that this may well be the most common form of camouflage found in nature, using the Gabon viper, pictured here, as one of his examples of natural coincident disruption.

Most of Behrens's work is on modern camouflage, in the 20th century, but study in the area goes back farther. In particular, camouflage was discussed in connection to Darwin's idea of natural selection. Artist Abbott Thayer was a preeminent voice on camouflage in the 19th century who thought and wrote on both blending and disruption as forms in nature. Thayer also recommended that the military use both forms of camouflage in combat, a notion that generated great controversy.

In World War I, the French ultimately employed 3,000 artists as "camoufleurs". The British and Americans followed suit on a smaller scale. Behrens gave a detailed history of military camouflage, most of which was driven by artists and assisted by a smaller number of scientists. He finds World War II's contributions less interesting but is excited by recent work by biologists, especially in the UK, who have demonstrated renewed interest in natural camouflage. They are using empirical methods and computer modeling as ways to examine and evaluate Thayer's ideas from over a hundred years ago. Computational modeling in the arts and sciences -- who knew?

Toward the end of his talk, Behrens told several stories from the "academic twilight zone", where unexpected connections fall into the scholar's lap. He called these the "unsung delights of researching". These are stories best told first hand, but they involved a spooky occurrence of Shelbyville, Tennessee, on a pencil he bought for a quarter from a vending machine, having the niece and nephew of Abbott Thayer in attendance at a talk he gave in 1987, and buying a farm in Dysart, Iowa, in 1992 only then to learn that Everett Warner, whom he had studied, was born in Vinton, Iowa -- 14 miles away. In the course of studying a topic for forty years, the strangest of coincidences will occur. We see these patterns whether we like to or not.

Behrens's closing remarks included one note that highlights the changes in the world of academic scholarship that have occurred since he embarked on his study of camouflage forty years ago. He admitted that he is a big fan of Wikipedia and has been an active contributor on pages dealing with the people and topics of camouflage. Social media and web sites have fundamentally changed how we build and share knowledge, and increasingly they are being used to change how we do research itself -- consider the Open Science and Polymath projects.

Today's talk was, indeed, the highlight of my week. Not only did I learn more about Behrens and his work, but I also ended up with a couple of books to read (the aforementioned Whyte book and Kimon Nicolaïdes's The Natural Way to Draw), as well as a couple of ideas about what it would mean for software patterns to hide something. A good way to spend an hour.

September 05, 2009 3:10 PM

Thoughts Early in a Run

I ran my Sunday long run this morning, so that I can adjust the coming week's schedule for a Saturday half marathon. This week's long run called for 18 miles. After a week of hard runs, I approached this morning gingerly. Slow, steady, nothing fast. Just get the mileage under my belt, get home, rest and move another week toward my marathon.

About four miles in, my legs were feeling a little sore. A lot of miles lay before me. Rather than get down, I had a comforting thought: All I have to do is just keep running. Nothing fancy. Just keep running.

It occurred to me that, at its simplest, this is what a marathon comes down to. Can you just keep running for 26.2 miles? All the training we do prepares us to answer affirmatively.

Racing a marathon is a little different, because now time matters. But the question doesn't disappear; it only takes on a qualifier: How fast can you just keep running for 26.2 miles? In the end, you just keep running.

It was a beautiful late summer morning for a run. Cool. Sunny. I just kept running.

September 04, 2009 4:09 PM

Skepticism and Experiment

There's been a long-running thread on the XP discussion list about a variation of pair programming suggested by a list member, on the basis of an experiment run in his shop and documented in a simple research report. One reader is skeptical; he characterized the research as 'only' an experience report and asked for more evidence. Dave Nicolette responded:

Point me to the study that proves...point me to the study that proves...point me to the study that proves...<yawn>

There is no answer that will satisfy the person who demands studies as proof.

Studies aren't proof. Studies are analyses of observed phenomena. The thing a study analyzes has already happened before the study is performed.

... The proof is in the doing.

This is one of those issues where each perspective has value, and even dominates the other under the right conditions.

Skepticism is good. Asking for data before changing how you, your team, or your organization behaves is reasonable. Change is hard enough on people when it succeeds; when it fails, it wastes time and can dispirit people. At times, skepticism is an essential defense mechanism.

Besides, if you are happy with how things work now, why change at all?

The bigger the change, and the more costly the change, the more valuable is skepticism.

In the case of the XP list discussion, though, we see a different set of conditions. The practice being suggested has been tried at one company, so its research report really is "just" an experience report. But that's fine. We can't draw broadly applicable conclusions from an experiment of one anyway, at least not if we want the conclusions to be reliable. This sort of suggestion is really an invitation to experiment: We tried this, it worked for us, and you might want to try it.

If you are dissatisfied with your current practice, then you might try the idea as a way to break out of a suboptimal situation. If you are satisfied enough but have the freedom and inclination to try to improve, then you might try the idea on a lark. When the cost of giving the practice a test drive is small enough, it doesn't take much of a push to do so.

What a practice like this needs is to have a lot of people try it out, under similar conditions and different conditions, to find out if the first trial's success indicates a practice that can be useful generally or was a false positive. That's where the data for a real study comes from!

This sort of practice is one that professional software developers must try out. I could run an experiment with my undergraduates, but they are not (yet) like the people who will use the practice in industry, and the conditions under which they develop are rarely like the conditions in industry. We could gain useful information from such an experiment (especially about how undergrads work and think), but the real proof will come when teams in industry use the practice and we see what happens.

Academics can add value after the fact, by collecting information about the experiments, analyzing the data, and reporting it to the world. That is one of the things that academics do well. I am reminded of Jim Coplien's exhortations a decade and more ago for academic computer scientists to study existing software artifacts, in conjunction with practitioners, to identify useful patterns and document the pattern languages that give rise to good and beautiful programs. While some CS academics continue to do work of this sort -- the area of parallel programming patterns is justifiably hot right now -- I think we in academic CS have missed an opportunity to contribute as much as we might otherwise to software development.

We can't document a practice until it has been tried. We can't document a pattern until it recurs. First we do, then we document.

This reminds me of a blog entry written by Perryn Fowler about a similar relationship between "best practices" and success:

Practices document success; they don't create it.

A tweet before its time...

As I have scanned through this thread on the discussion list, I have found it interesting that some agile people are as skeptical about new agile practices as non-agile folks were (and still are) about agile practices. You would think that we should be practicing XP as it was writ nearly a decade ago. Even agile behavior tends toward calcification if we don't remain aware of why we do what we do.