July 30, 2013 2:43 PM

Education, Then and Now

... is your job.

Then:

Whatever be the qualifications of your tutors, your improvement must chiefly depend on yourselves. They cannot think or labor for you, they can only put you in the best way of thinking and laboring for yourselves. If therefore you get knowledge, you must acquire it by your own industry.

Now:

School isn't over. School is now. School is blogs and experiments and experiences and the constant failure of shipping and learning.

This works if you interpret "then and now" as in the past (Joseph Priestley, in his dedication of New College, London, 1794) and in the present (Seth Godin, in Brainwashed, from 2008).

It also works if you interpret "then and now" as when you were in school and now that you are out in the real world. It's all real.

July 25, 2013 10:01 AM

Software is Hard...

... but here I am, writing another program.

She could feel her own lungs suspended as she worked, and she forced herself to inhale, suddenly frustrated by the insurmountable inability to make the paint correspond exactly and precisely to what was in her head. It was always doomed from the outset, but here she was, making another goddamned painting.

(From The Great Man, by Kate Christensen.)

July 24, 2013 11:44 AM

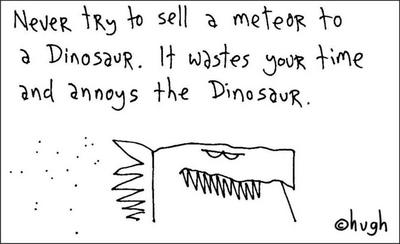

Headline: "Dinosaurs Object to Meteor's Presence"

Nate Silver recently announced that he is leaving the New York Times for ESPN. Margaret Sullivan offers some observations on the departure, including how political writers at the Times viewed Silver and his work:

... Nate disrupted the traditional model of how to cover politics.

His entire probability-based way of looking at politics ran against the kind of political journalism that The Times specializes in: polling, the horse race, campaign coverage, analysis based on campaign-trail observation, and opinion writing. ...

His approach was to work against the narrative of politics. ...

A number of traditional and well-respected Times journalists disliked his work. The first time I wrote about him I suggested that print readers should have the same access to his writing that online readers were getting. I was surprised to quickly hear by e-mail from three high-profile Times political journalists, criticizing him and his work. ...

Maybe Silver decided to acquiesce to Hugh MacLeod's advice. Maybe he just got a better deal.

The world changes, whether we like it or not. The New York Times and its journalists probably have the reputation, the expertise, and the strong base they need to survive the ongoing changes in journalism, with or without Silver. Other journalists don't have the luxury of being so cavalier.

I don't know any more about attitudes inside the New York Times than what I see reported in the press, but Sullivan's article made me think of one of Anil Dash's ten rules of the internet:

When a company or industry is facing changes to its business due to technology, it will argue against the need for change based on the moral importance of its work, rather than trying to understand the social underpinnings.

I imagine that a lot of people at the Times are indeed trying to understand the social underpinnings of the changes occurring in the media and trying to respond in useful ways. But that doesn't mean that everyone on the inside is, or even that the most influential and high-profile people in the trenches are. And that adds an internal social challenge to the external technological challenge.

Alas, we see much the same dynamic playing out in universities across the country, including my own. Some dinosaurs have been around for a long time. Others are near the beginning of their careers. The internal social challenges are every bit as formidable as the external economic and technological ones.

July 23, 2013 9:46 AM

Some Meta-Tweeting Silliness

my previous tweet

missed an opportunity,

should have been haiku

(for @fogus)

July 18, 2013 2:22 PM

AP Computer Science in Iowa High Schools

Mark Guzdial posted a blog entry this morning pointing to a Boston Globe piece, Interest in computer science lags in Massachusetts schools. Among the data supporting this assertion was participation in Advanced Placement:

Of the 85,753 AP exams taken by Massachusetts students last year, only 913 were in computing.

Those numbers are a bit out of context, but they got me to wondering about the data for Iowa. So I tracked down this page on AP Program Participation and Performance Data 2012 and clicked through to the state summary report for Iowa. The numbers are even more dismal than Massachusetts's.

Of the 16,413 AP exams taken by Iowa students in 2012, only sixty-nine were in computer science. The counts for groups generally underrepresented in computing were unsurprisingly small, given that Iowa is less diverse than many US states. Of the sixty-nine, fifty-four self-reported as "white", ten as "Asian", and one as "Mexican-American", with four not indicating a category.

The most depressing number of all: only nine female students took the AP Computer Science exam last year in Iowa.

Now, Massachusetts has roughly 2.2 times as many people as Iowa, but even so Iowa compares unfavorably. Iowans took about one-fifth as many AP exams as Massachusetts students, and for CS the percentage drops to about 7.6%. If AP exams indicate much about the general readiness of a state's students for advanced study in college, then Iowa is at a disadvantage.
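The comparison can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the counts quoted above and the rough 2.2 population ratio; the variable names are my own, and the per-capita figures are a back-of-the-envelope adjustment, not numbers taken from the state reports.

```python
# Exam counts quoted above (Massachusetts from the Globe piece, Iowa from the 2012 state report).
ma_exams, ma_cs = 85_753, 913
ia_exams, ia_cs = 16_413, 69

# Rough population ratio (Massachusetts / Iowa), as stated in the text.
POP_RATIO = 2.2

exam_ratio = ia_exams / ma_exams  # Iowa exams as a fraction of Massachusetts exams
cs_ratio = ia_cs / ma_cs          # the same fraction, for computer science exams only

# Scale Iowa's share up by the population ratio for a crude per-capita comparison.
exam_ratio_per_capita = exam_ratio * POP_RATIO
cs_ratio_per_capita = cs_ratio * POP_RATIO

print(f"All exams: {exam_ratio:.1%} of MA (per capita: {exam_ratio_per_capita:.1%})")
print(f"CS exams:  {cs_ratio:.1%} of MA (per capita: {cs_ratio_per_capita:.1%})")
```

Even with the population adjustment, Iowa students took well under half as many AP exams per capita as Massachusetts students, and only about one-sixth as many in CS.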

I've never been a huge proponent of the AP culture that seems to dominate many high schools these days (see, for instance, this piece), but the low number of AP CS exams taken in Iowa is consistent with what I hear when I talk to HS students from around the state and their parents: Iowa schools are not teaching much computer science at all. The university is the first place most students have an opportunity to take a CS course, and by then the battle for most students' attention has already been lost. For a state with a declared goal of growing its promising IT sector, this is a monumental handicap.

Those of us interested in broadening participation in CS face an even tougher challenge. Iowa's demographics create some natural challenges for attracting minority students to computing. And if the AP data are any indication, we are doing a horrible job of reaching women in our high schools.

There is much work to do.

July 17, 2013 1:41 PM

And This Gray Spirit Yearning

On my first day as a faculty member at the university, twenty years ago, the department secretary sent me to Public Safety to pick up my office and building keys. "Hi, I'm Eugene Wallingford," I told the person behind the window, "I'm here to pick up my keys." She smiled, welcomed me, and handed them to me -- no questions asked.

Back at the department, I commented to one of my new colleagues that this seemed odd. No one asked to see an ID or any form of authorization. They just handed me keys giving me access to a lot of cool stuff. My colleague shrugged. There has never been a problem here with unauthorized people masquerading as new faculty members and picking up keys. Until there is a problem, isn't it nice living in a place where trust works?

Things have changed. These days, we don't order keys for faculty; we "request building access". This phrase is more accurate than a reference to keys, because it includes activating the faculty ID to open electronically-controlled doors. And we don't simply plug a new computer into an ethernet jack and let faculty start working; to get on the wireless network, we have to wait for the Active Directory server to sync with the HR system, which updates only after electronic approval of a Personnel Authorization Form that sets up the employee's payroll record. I leave that as a run-on phrase, because that's what living it feels like.

The paperwork needed to get a new faculty member up and running these days reminds me just how simple life was in 1992. Of course, it's not really "paperwork" any more.

July 15, 2013 2:41 PM

Version Control for Writers and Publishers

Mandy Brown again, this time on writing tools without memory:

I've written of the web's short-term memory before; what Manguel trips on here is that such forgetting is by design. We designed tools to forget, sometimes intentionally so, but often simply out of carelessness. And we are just as capable of designing systems that remember: the word processor of today may admit no archive, but what of the one we build next?

This is one of those places where the software world has a tool waiting to reach a wider audience: the version control system. Programmers using version control can retrieve previous states of their code all the way back to its creation. The granularity of the versions is limited only by the frequency with which they "commit" the code to the repository.
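To make the idea concrete for non-programmers, here is a toy sketch of what "commit" and "retrieve a previous state" mean. The `Repository` class and its methods are hypothetical names of my own; real systems such as Git store history very differently, but the writer-facing idea is the same.

```python
from dataclasses import dataclass, field


@dataclass
class Repository:
    """A toy, in-memory history of one document (illustrative only)."""

    versions: list = field(default_factory=list)  # (message, text) snapshots, oldest first

    def commit(self, text: str, message: str) -> int:
        """Record a snapshot and return its version number (0 is the creation)."""
        self.versions.append((message, text))
        return len(self.versions) - 1

    def checkout(self, version: int) -> str:
        """Return the text exactly as it was at the given version."""
        return self.versions[version][1]

    def log(self) -> list:
        """List the commit messages, oldest first."""
        return [message for message, _ in self.versions]


repo = Repository()
repo.commit("It was a dark night.", "first draft")
repo.commit("It was a dark and stormy night.", "add weather")
repo.commit("The night was dark and stormy.", "reorder opening")

print(repo.log())        # the history of the piece, as a list of messages
print(repo.checkout(0))  # the text as it stood at creation
```

The history contains exactly the states that were committed and nothing in between, which is why the frequency of commits sets the granularity.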

The widespread adoption of version control and the existence of public histories at places such as GitHub have even given rise to a whole new kind of empirical software engineering, in which we mine a large number of repositories in order to understand better the behavior of developers in actual practice. Before, we had to contrive experiments, with no assurance that devs behaved the same way under artificial conditions.

Word processors these days usually have an auto-backup feature to save work as the writer types text. Version control could be built into such a feature, giving the writer access to many previous versions without the need to commit changes explicitly. But the better solution would be to help writers learn the value of version control and develop the habits of committing changes at meaningful intervals.

Digital version control offers several advantages over the writer's (and programmer's) old-style history of print-outs of previous versions, marked-up copy, and notebooks. An obvious one is space. A more important one is the ability to search and compare old versions more easily. We programmers benefit greatly from a tool as simple as diff, which can tell us the textual differences between two files. I use diff on non-code text all the time and imagine that professional writers could use it to better effect than I.
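For writers who have never seen diff output, here is a small sketch using Python's standard difflib module, which produces the same unified format as the command-line tool. The two drafts and the file names are made up for illustration.

```python
import difflib

# Two hypothetical drafts of the same paragraph, split into lines.
draft_1 = """\
Version control is a tool for programmers.
It remembers every state of the code.
Writers have nothing like it.
""".splitlines(keepends=True)

draft_2 = """\
Version control is a tool for programmers.
It remembers every committed state of the code.
Writers could use it, too.
""".splitlines(keepends=True)

# unified_diff yields header lines, then lines prefixed with
# ' ' (unchanged), '-' (only in the old draft), or '+' (only in the new draft).
diff_lines = list(
    difflib.unified_diff(draft_1, draft_2, fromfile="draft_1.txt", tofile="draft_2.txt")
)
print("".join(diff_lines))
```

Unchanged lines appear as context, so a writer can see each edit in place rather than rereading both drafts side by side.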

The use of version control by programmers leads to profound changes in the practice of programming. I suspect that the same would be true for writers and publishers, too.

Most version control systems these days work much better with plain text than with the binary data stored by most word processing programs. As discussed in my previous post, there are already good reasons for writers to move to plain text and explicit mark-up schemes. Version control and text analysis tools such as diff add another layer of benefit. Simple mark-up systems like Markdown don't even impose much burden on the writer, resembling as they do how so many of us used to prepare text in the days of the typewriter.

Some non-programmers are already using version control for their digital research. Check out William Turkel's How To for doing research with digital sources. Others, such as The Programming Historian and A Companion to Digital Humanities, don't seem to mention it. But these documents refer mostly to programs for working with text. The next step is to encourage adoption of version control for writers doing their own thing: writing.

Then again, it has taken a long time for version control to gain such widespread acceptance even among programmers, and it's not yet universal. So maybe adoption among writers will take a long time, too.

July 12, 2013 3:08 PM

"Either Take Control, or Cede it to the Software"

Mandy Brown tells editors and "content creators" to take control of their work:

It's time content people of all stripes recognized the WYSIWYG editor for what it really is: not a convenient shortcut, but a dangerous obstacle placed between you and the actual content. Because content on the web is going to be marked up one way or another: you either take control of it or you cede it to the software, but you can't avoid it. WYSIWYG editors are fine for amateurs, but if you are an editor, or copywriter, or journalist, or any number of the kinds of people who work with content on the web, you cannot afford to be an amateur.

Pros can sling a little code, too.

Brown's essay reminded me of a blog entry I was discussing with a colleague recently, Andrew Hayes's Why Learn Syntax? Hayes tells statisticians that they, too, should take control of their data, by learning the scripting language of the statistical packages they use. Code is a record of an analysis, which allows it to be re-run and shared with others. Learning to write code also hones one's analytical skills and opens the door to features not available through the GUI.

These articles speak to two very different audiences, but the message is the same. Don't just be a user of someone else's tools and be limited to their vision. Learn to write a little code and take back the power to create.

July 11, 2013 2:57 PM

Talking to the New University President about Computer Science

Our university recently hired a new president. Yesterday, he and the provost came to a meeting of the department heads in humanities, arts, and sciences, so that he could learn a little about the college. The dean asked each head to introduce his or her department in one minute or less.

I came in under a minute, as instructed. Rather than read a litany of numbers that he can read in university reports, I focused on two high-level points:

- Major enrollment has recovered nicely since the deep trough after the dot.com bust and is now steady. We have near-100% placement, but local and state industry could hire far more graduates.

- For the last few years we have also been working to reach more non-majors, which is a group we under-serve relative to most other schools. This should be an important part of the university's focus on STEM and STEM teacher education.

I closed with a connection to current events:

We think that all university graduates should understand what 'metadata' is and what computer programs can do with it -- enough so that they can understand the current stories about the NSA and be able to make informed decisions as a citizen.

I hoped that this would be provocative and memorable. The statement elicited laughs and head nods all around. The president commented on the Snowden case, asked me where I thought he would land, and made an analogy to The Man Without a Country. I pointed out that everyone wants to talk about Snowden, including the media, but that's not even the most important part of the story. Stories about people are usually of more interest than stories about computer programs and fundamental questions about constitutional rights.

I am not sure how many people believe that computer science is a necessary part of a university education these days, or at least the foundations of computing in the modern world. Some schools have computing or technology requirements, and there is plenty of press for the "learn to code" meme, even beyond the CS world. But I wonder how many US university graduates in 2013 understand enough computing (or math) to understand this clever article and apply that understanding to the world they live in right now.

Our new president seemed to understand. That could bode well for our department and university in the coming years.

July 08, 2013 1:05 PM

A Random Thought about the Metadata and Government Surveillance

In a recent mischievous mood, I decided it might be fun to see the following.

The next whistleblower with access to all the metadata that the US government is storing on its citizens assembles a broad list of names: Republican and Democrat; legislative, executive, and judicial branches; public official and private citizens. The only qualification for getting on the list is that the person has uttered any variation of the remarkably clueless statement, "If you aren't doing anything wrong, then you have nothing to hide."

The whistleblower then mines the metadata and, for each person on this list, publishes a brief that demonstrates just how much someone with that data can conclude -- or insinuate -- about a person.

If they haven't done anything wrong, then they don't have anything to worry about. Right?

July 07, 2013 9:32 AM

Interesting Sentences, Personal Weakness Edition

The quest for comeuppance is a misallocation of personal resources. -- Tyler Cowen

Far too often, my reaction to events in the world around me is to focus on other people not following rules, and the unfairness that results. It's usually not my business, and even when it is, it's a foolish waste of mental energy. Cowen expresses this truth nicely in neutral, non-judgmental language. That may help me develop a more productive mental habit.

What we have today is a wonderful bike with training wheels on. Nobody knows they are on, so nobody is trying to take them off. -- Alan Kay, paraphrased from The MIT/Brown Vannevar Bush Symposium

Kay is riffing off Douglas Engelbart's tricycle analogy, mentioned last time. As a computer scientist, and particularly one fortunate enough to have been exposed to the work of Ivan Sutherland, Engelbart, Kay and the Xerox PARC team, and so many others, I should be more keenly conscious that we are coasting along with training wheels on. I settle for limited languages and limited tools. Even sadder, when computer scientists and software developers settle for training wheels, we tend to limit everyone else's experience, too. So my apathy has consequences.

I'll try to allocate my personal resources more wisely.

July 06, 2013 9:57 AM

Douglas Engelbart Wasn't Just Another Computer Guy

Bret Victor nails it:

Albert Einstein, Discoverer of Photoelectric Effect, Dies at 76

In the last few days, I've been telling family and friends about Engelbart's vision and effect on the world of computing, and thus on their world. He didn't just "invent the mouse".

It's hard to imagine these days just how big Engelbart's vision was for the time. Watching The Mother of All Demos now, it's easy to think "What's the big deal? We have all that stuff now." or even "Man, that video looks prehistoric." First of all, we don't have all that stuff today. Watch again. Second, in a sense, that demo was prehistory. Not only did we not have such technology at the time, almost no one was thinking about it. It's not that people thought such things were impossible; they couldn't think about them at all, because no one had conceived them yet. Engelbart did.

Engelbart didn't just invent a mouse that allows us to point at files and web links. His ideas helped point an entire industry toward the future.

Like so many of our computing pioneers, though, he dreamed of more than what we have now, and expected -- or at least hoped -- that we would build on the industry's advances to make his vision real. Engelbart understood that skills which make people productive are probably difficult to learn. But they are so valuable that the effort is worth it. I'm reminded of Alan Kay's frequent use of a violin as an example, compared to a simpler music-making device, or even to a radio. Sure, a violin is difficult to play well. But when you can play -- wow.

Engelbart was apparently fond of another example, the tricycle:

Riding a bicycle -- unlike a tricycle -- is a skill that requires a modest degree of practice (and a few spills), but the rider of a bicycle quickly outpaces the rider of a tricycle.

Most of the computing systems we use these days are tricycles. Doug Engelbart saw a better world for us.

July 03, 2013 10:22 AM

Programming for Everyone, Venture Capital Edition

Christina Cacioppo left Union Square Ventures to learn how to program:

Why did I want to do something different? In part, because I wanted something that felt more tangible. But mostly because the story of the internet continues to be the story of our time. I'm pretty sure that if you truly want to follow -- or, better still, bend -- that story's arc, you should know how to write code.

So, rather than settle for her lot as a non-programmer, beyond the accepted school age for learning these things -- technology is a young person's game, you know -- Cacioppo decided to learn how to build web apps. And build one.

When did we decide our time's most important form of creation is off-limits? How many people haven't learned to write software because they didn't attend schools that offered those classes, or the classes were too intimidating, and then they were "too late"? How much better would the world be if those people had been able to build their ideas?

Yes, indeed.

These days, she is enjoying the experience of making stuff: trying ideas out in code, discarding the ones that don't work, and learning new things every day. Sounds like a programmer to me.

Posted by Eugene Wallingford | Categories: Computing, Software Development, Teaching and Learning

July 01, 2013 11:10 AM

Happy in my App

In a typically salty post, Jamie Zawinski expresses succinctly one of the tenets of my personal code:

I have no interest in reading my feeds through a web site (no more than I would tolerate reading my email that way, like an animal).

Living by this code means that, while many of my friends and readers are gnashing their teeth on this first of July, my life goes on uninterrupted. I remain a happy long-time user of NetNewsWire (currently, v3.1.6).

Keeping my feeds in sync with NetNewsWire has always been a minor issue, as I run the app on at least two different computers. Long ago, I wrote a couple of extremely simple scripts -- long scp commands, really -- that do a pretty good job. They don't give me thought-free syncing, but that's okay.

A lot of people tell me that apps are dead, that the HTML5-powered web is the future. I do know that we're very quickly running out of stuff we can't do in the browser and applaud the people who are making that happen. If I were a habitual smartphone-and-tablet user, I suspect that I would be miffed if web sites made me download an app just to read their web content. All that said, though, I still like what a clean, simple app gives me.