August 31, 2013 11:32 AM

A Good Language Conserves Programmer Energy

Game programmer Jeff Wofford wrote a nice piece on some of the lessons he learned by programming a game in forty-eight hours. One of the recurring themes of his article is the value of a high-powered scripting language for moving fast. That's not too surprising, but I found his ruminations on this phenomenon to be interesting. In particular:

A programmer's chief resource is the energy of his or her mind. Everything that expends or depletes that energy makes him or her less effective, more tired, and less happy.

A powerful scripting language sitting atop the game engine is one of the best ways to conserve programmer energy. Sometimes, though, a game programmer must work hard to achieve the performance required by users. For this reason, Wofford goes out of his way not to diss C++, the tool of choice for many game programmers. But C++ is an energy drain on the programmer's mind, because the programmer has to be in a constant state of awareness of machine cycles and memory consumption. This is where the trade-off with a scripting language comes in:

When performance is of the essence, this state of alertness is an appropriate price to pay. But when you don't have to pay that price -- and in every game there are systems that have no serious likelihood of bottlenecking -- you will gain mental energy back by essentially ignoring performance. You cannot do this in C++: it requires an awareness of execution and memory costs at every step. This is another argument in favor of never building a game without a good scripting language for the highest-level code.

I think this is true of almost every large system. I sure wish that the massive database systems at the foundation of my university's operations had scripting languages sitting on top. I even want to script against the small databases that are the lingua franca of most businesses these days -- spreadsheets. The languages available inside the tools I use are too clunky or not powerful enough, so I turn to Ruby.
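As a minimal sketch of what scripting against a spreadsheet can look like, assume the sheet has been exported as CSV. The column names, the sample rows, and the helper `enrollment_by_department` are all invented for illustration; only Ruby's standard `csv` library is real.

```ruby
require "csv"

# Hypothetical spreadsheet export. The columns and values are made up
# for this example and do not come from any real system.
SHEET = <<~CSV
  department,course,enrollment
  CS,Compilers,12
  CS,Algorithms,30
  Math,Calculus,45
CSV

# Sum the enrollment column for each department.
# (Hypothetical helper, named here only for illustration.)
def enrollment_by_department(csv_text)
  totals = Hash.new(0)
  CSV.parse(csv_text, headers: true).each do |row|
    totals[row["department"]] += row["enrollment"].to_i
  end
  totals
end

enrollment_by_department(SHEET).each do |dept, total|
  puts "#{dept}: #{total}"
end
```

Nothing in the sketch attends to machine cycles or memory. That is the trade the passage above describes: for a task with no serious likelihood of bottlenecking, the script spends the programmer's attention on the question being asked rather than on performance.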

Unfortunately, most systems don't come with a good scripting language. Maybe the developers aren't allowed to provide one. Too many CS grads don't even think of "create a mini-language" as a possible solution to their own pain.

Fortunately for Wofford, he has both the skills and the inclination. One of his to-dos after the forty-eight-hour experience is all about language:

Building a SWF importer for my engine could work. Adding script support to my engine and greatly refining my tools would go some of the distance. Gotta do something.

Gotta do something.

I'm teaching our compiler course again this term. I hope that the dozen or so students in the course leave the university knowing that creating a language is often the right next action and having the skills to do it when they feel compelled to do something.

August 29, 2013 4:31 PM

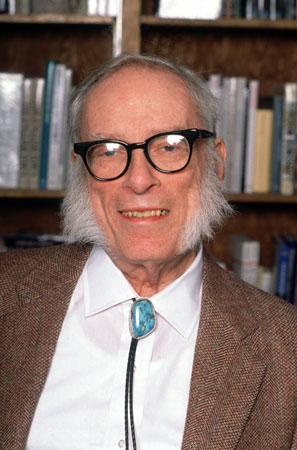

Asimov Sees 2014, Through Clear Eyes and Foggy


A couple of years ago, I wrote Psychohistory, Economics, and AI, in which I mentioned Isaac Asimov and one way that he had influenced me. I never read Asimov or any other science fiction expecting to find accurate predictions of the future. What drew me in was the romance of the stories, dreaming "what if?" for a particular set of conditions. Ultimately, I was more interested in the relationships among people under different technological conditions than I was in the technology itself. Asimov was especially good at creating conditions that generated compelling human questions.

Some of the scenarios I read in Asimov's SF turned out to be wildly wrong. The world today is already more different from the 1950s than the world of the Foundation, set thousands of years in the future. Others seem eerily on the mark. Fortunately, accuracy is not the standard by which most of us judge good science fiction.

But what of speculation about the near future? A colleague recently sent me a link to Visit to the World's Fair of 2014, an article Asimov wrote in 1964 speculating about the world fifty years hence. As I read it, I was struck by just how far off he was in some ways, and by how close he was in others. I'll let you read the story for yourself. Here are a few selected passages that jumped out at me.

General Electric at the 2014 World's Fair will be showing 3-D movies of its "Robot of the Future," neat and streamlined, its cleaning appliances built in and performing all tasks briskly. (There will be a three-hour wait in line to see the film, for some things never change.)

3-D movies are now common. Housecleaning robots are not. And while some crazed fans will stand in line for many hours to see the latest comic-book blockbuster, going to a theater to see a movie has become a much less important part of the culture. People stream movies into their homes and into their hands. My daughter teases me for caring about the time any TV show or movie starts. "It's on Hulu, Dad." If it's not on Hulu or Netflix or the open web, does it even exist?

Any number of simultaneous conversations between earth and moon can be handled by modulated laser beams, which are easy to manipulate in space. On earth, however, laser beams will have to be led through plastic pipes, to avoid material and atmospheric interference. Engineers will still be playing with that problem in 2014.

There is no one on the moon with whom to converse. Sigh. The rest of this passage sounds like fiber optics. Our world is rapidly becoming wireless. If your device can't connect to the world wireless web, does it even exist?

In many ways, the details of technology are actually harder to predict correctly than the social, political, and economic implications of technological change. Consider:

Not all the world's population will enjoy the gadgety world of the future to the full. A larger portion than today will be deprived and although they may be better off, materially, than today, they will be further behind when compared with the advanced portions of the world. They will have moved backward, relatively.

Spot on.

When my colleague sent me the link, he said, "The last couple of paragraphs are especially relevant." They mention computer programming and a couple of its effects on the world. In this regard, Asimov's predictions meet with only partial success.

The world of A.D. 2014 will have few routine jobs that cannot be done better by some machine than by any human being. Mankind will therefore have become largely a race of machine tenders. Schools will have to be oriented in this direction. ... All the high-school students will be taught the fundamentals of computer technology will become proficient in binary arithmetic and will be trained to perfection in the use of the computer languages that will have developed out of those like the contemporary "Fortran" (from "formula translation").

The first part of this paragraph is becoming truer every day. Many people husband computers and other machines as they do tasks we used to do ourselves. The second part is, um, not true. Relatively few people learn to program at all, let alone master a programming language. And how many people understand this t-shirt without first receiving an impromptu lecture on the street?

Again, though, Asimov is perhaps closer on what technological change means for people than on which particular technological changes occur. In the next paragraph he says:

Even so, mankind will suffer badly from the disease of boredom, a disease spreading more widely each year and growing in intensity. This will have serious mental, emotional and sociological consequences, and I dare say that psychiatry will be far and away the most important medical specialty in 2014. The lucky few who can be involved in creative work of any sort will be the true elite of mankind, for they alone will do more than serve a machine.

This is still speculation, but it is already more true than most of us would prefer. How much truer will it be in a few years?

My daughters will live most of their lives post-2014. That worries the old fogey in me a bit. But it excites me more. I suspect that the next generation will figure the future out better than mine, or the ones before mine, can predict it.

~~~~

PHOTO. Isaac Asimov, circa 1991. Britannica Online for Kids. Web. 2013 August 29. http://kids.britannica.com/comptons/art-136777.

August 28, 2013 3:07 PM

Risks and the Entrepreneurs Who Take Them

Someone on the SIGCSE mailing list posted a link to an article in the Atlantic that explores a correlation between entrepreneurship, teenage delinquency, and white male privilege. The article starts with

It does not strike me as a coincidence that a career path best suited for mild high school delinquents ends up full of white men.

and concludes with

To be successful at running your own company, you need a personality type that society is a lot more forgiving of if you're white.

The sender of the link was curious what educational implications these findings have, if any, for how we treat academic integrity in the classroom. That's an interesting question, though my personal tendency to follow rules and not rock the boat has always made me more sensitive to the behavior of students who employ the aphorism "ask for forgiveness, not permission" a little too cavalierly for my taste.

My first reaction to the claims of this article was tied to how I think about the kinds of risks that entrepreneurs take.

When most people in the start-up world talk about taking risks, they are talking about the risk of failure and, to a lesser extent, the risk of being unconventional, not the risk of being caught doing something wrong. In my personal experience, the only delinquent behavior our entrepreneurial former students could be accused of is not doing their homework as regularly as they should. Time spent learning for their business is time not spent on my course. But that's not delinquent behavior; it's curiosity focused somewhere other than my classroom.

It's not surprising, though, that teens who were willing to take legal risks are more likely to be willing to take business risks, and (sadly) legal risks in their businesses. Maybe I've simply been lucky to have worked with students and other entrepreneurs of high character.

Of course, there is almost certainly a white male privilege associated with the risk of failure, too. White males are often better positioned financially and socially than women or minorities to start over when a company fails. It's also easier to be unconventional and stand out from the crowd when you don't already stand out from the crowd due to your race or gender. That probably accounts for the preponderance of highly-educated white men in start-ups better than a greater willingness to partake in "aggressive, illicit, risk-taking activities".

August 22, 2013 2:45 PM

A Book of Margin Notes on a Classic Program?

I recently stumbled across an old How We Will Read interview with Clive Thompson and was intrigued by his idea for a new kind of annotated book:

I've had this idea to write a provocative piece, or hire someone to write it, and print it on-demand it with huge margins, and then send it around to four people with four different pens -- red, blue, green and black. It comes back with four sets of comments all on top of the text. Then I rip it all apart and make it into an e-book.

This is an interesting mash-up of ideas from different eras. People have been writing in the margins of books for hundreds of years. These days, we comment on blog entries and other on-line writing in plain view of everyone. We even comment on other people's comments. Sites such as Findings.com, home of the Thompson interview, aim to bring this cultural practice to everything digital.

Even so, it would be pretty cool to see the margin notes of three or four insightful, educated people, written independently of one another, overlaid in a single document. Presentation as an e-book offers another dimension of possibilities.

Ever the computer scientist, I immediately began to think of programs. A book such as Beautiful Code gives us essays from master programmers talking about their programs. Reading it, I came to appreciate design decisions that are usually hidden from readers of finished code. I also came to appreciate the code itself as a product of careful thought and many iterations.

My thought is: Why not bring Thompson's mash-up of ideas to code, too? Choose a cool program, perhaps one that changed how we work or think, or one that unified several ideas into a standard solution. Print it out with huge margins, and send it to three or four insightful, thoughtful programmers who read it, again or for the first time, and mark it up with their own thoughts and ideas. It comes back with four sets of comments all on top of the text. Rip it apart and create an e-book that overlays them all in a single document.

Maybe we can skip the paper step. Programming tools and Web 2.0 make it so easy to annotate documents, including code, in ways that replace handwritten comments. That's how most people operate these days. I'm probably showing my age in harboring a fondness for the written page.

In any case, the idea stands apart from the implementation. Wouldn't it be cool to read a book that interleaves and overlays the annotations made by programmers such as Ward Cunningham and Grady Booch as they read John McCarthy's first Lisp interpreter, the first Fortran compiler from John Backus's team, QuickDraw, or Qmail? I'd stand in line for a copy.

Writing this blog entry only makes the idea sound more worth doing. If you agree, I'd love to hear from you -- especially if you'd like to help. (And especially if you are Ward Cunningham or Grady Booch!)

August 21, 2013 3:19 PM

Gathering Place

For a while now I've been intending to take more photographs, both for practice and for use on my blog. Some new furniture in the university quad prompted me to snap a few this morning. Here is one; click through for high-res.


Where two or three are gathered...

~~~~

PHOTO. Gathering Place, by Eugene Wallingford, 2013. This work is licensed under a Creative Commons Attribution 3.0 Unported license.

August 13, 2013 3:11 PM

Refactoring is Underrated

I think revision is hugely underrated. It is very seldom recognized as a place where the higher creativity can live, or where it can manifest. I think it was Yeats who said that literary revision was the only place in life where a man could truly improve himself.

-- William Gibson, The Art of Fiction No. 211

I find it a lot easier to come up with clean, simple designs when I have code in my hands to work with, rather than requirements. Even detailed requirements are abstract with respect to our programs. Code is the raw material.