January 30, 2009 3:10 PM

Pop Interview!

The phone rings.

"Hi, I'm [local radio personality]. I'd like to interview you about the grant your department received from State Farm."

"Um, sure." Quick -- compose yourself.

"So, what is this grant all about?"

A short game of Twenty Questions ensued. This was a first for me: a cold call from a radio station requesting an interview. Fortunately the interview was conducted off-line; my answers were recorded and will be used to produce a finished piece later.

I have done phone interviews before, some of which I have discussed here. But those were arranged in advance, so I had time to prepare specific comments and to get into the right frame of mind to answer questions in that context. Also, my previous interviews have always been for my own personal work, which I know at a different level than I know my department work. Even though I wrote the grant proposal in question, it was collective work, not mine, and that shows in how well I feel the project.

A quick word about the grant... State Farm Insurance is based a few hours' drive from here and hires many of our best software engineering students into its systems development division. Through its foundation, State Farm supports universities with grants to support educational work. A few years ago, one of these grants helped us to build our first computational cluster and begin using it in our bioinformatics program, and to support a number of computational science projects on campus. The fact that an insurance company would fund this kind of work shows that it has a long-term view of education, which we at the university appreciate.

We recently received a new grant to purchase two quad-socket, quad-core servers and integrate their use into our architecture, systems, and programming courses. The world is going multi-core, and we would like to give our students some of the experiences they will need to contribute.

Anyway, I now have a new set of skills to work on: the pop interview. Or I at least need to develop a mind quick enough to say, "Hey, can I call you back in five?"

January 29, 2009 7:33 AM

Using Code to Document Lab Procedure

I've been following the idea of open notebook science for a while, both for its meaning to science and for the technological need it creates. Yesterday I read Cameron Neylon's piece on a web-native lab notebook. It was an interesting read, though it did contain a single paragraph that ran for two pages... After he describes how to use a blog to organize an integrated record of lab activities, he says:

What we're left with is the procedures which after all is the core of the record, right? Well no. Procedures are also just documents. Maybe they are text documents, but perhaps they are better expressed as spreadsheets or workflows (or rather the record of running a workflow). Again these may well be better handled by external services, be they word processors, spreadsheets, or specialist services. They just need to be somewhere where we can point at them.

Procedures are, well, procedures. It would be very cool if we could help scientists record their procedures as code. Programs require more precision than free-form text, which would make sharing scientific procedures more reliable, and they support naturally the distinction between static and dynamic presentation. Neylon sees this opportunity when he talks about recording a procedure as a workflow or as a record of running one.
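To make the static/dynamic distinction concrete, here is a minimal sketch in Python of a procedure recorded as code. Everything in it is an assumption made for illustration: the `Step` and `Procedure` classes, the dilution example, and the field names are hypothetical and do not come from Neylon's piece or from any real lab-notebook tool.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Step:
    """One step of a procedure (hypothetical structure, for illustration)."""
    name: str
    action: Callable[[dict], dict]  # takes the current state, returns updates


@dataclass
class Procedure:
    title: str
    steps: list[Step]

    def describe(self) -> list[str]:
        """Static presentation: the procedure as written."""
        return [f"{i}. {s.name}" for i, s in enumerate(self.steps, start=1)]

    def run(self, state: dict) -> list[dict]:
        """Dynamic presentation: a timestamped record of one execution."""
        state = dict(state)  # copy, so the caller's inputs are left untouched
        record = []
        for s in self.steps:
            state.update(s.action(state))
            record.append({
                "step": s.name,
                "at": datetime.now(timezone.utc).isoformat(),
                "state": dict(state),
            })
        return record


# A made-up dilution procedure; the quantities are illustrative only.
dilution = Procedure(
    title="Dilute stock solution",
    steps=[
        Step("Measure 10 mL of stock", lambda s: {"volume_ml": 10.0}),
        Step("Add 90 mL of water", lambda s: {"volume_ml": s["volume_ml"] + 90.0}),
        Step("Compute final concentration",
             lambda s: {"conc_molar": s["stock_molar"] * 10.0 / s["volume_ml"]}),
    ],
)

if __name__ == "__main__":
    print("\n".join(dilution.describe()))          # the procedure as a document
    for entry in dilution.run({"stock_molar": 1.0}):  # the record of running it
        print(entry)
```

The same object yields both views: `describe()` is the document a reader could point at, and `run()` produces the record of one particular execution.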

Just another wild-hair idea at the boundary where CS meets people doing their work.

January 26, 2009 7:52 PM

Our Gods Die Hard

Many of us know this feeling:

When gods die, they die hard. It's not like they fade away, or grow old, or fall asleep. They die in fire and pain, and when they come out of you, they leave your guts burned. It hurts more than anything you can talk about. And maybe worst of all is, you're not sure if there will ever be another god to fill their place. You don't want the fire to go out inside you twice.

I'm tempted to say that the urge to share this came from a couple of experiences I had at the Rebooting Computing summit, but this passage goes beyond the emotions I felt there.

(This paragraph comes from a "young adult" novel called The Wednesday Wars, by Gary D. Schmidt. I did not realize how many good books there are out there for young readers until my daughters started reading them and recommending them to me. If there is a book that can make Shakespeare more relevant to the life of a seventh-grader or more attractive for a teen to read than this one -- all the while being funny and on the mark for its audience -- I'd love to read it.)

January 23, 2009 1:02 PM

Design in Agile Methods

I recently read an early version of a paper by a colleague in the agile world that talks about several different ways he approaches design problems. I like that he is writing about design. After all these years, I still encounter many people who think this statement is an axiom:

In those situations, I try to share (gently) my experience as developer and occasional consultant, but the notion is pretty well ingrained. As I wrote last month, it's a hard slog.

As I read the preprint, I thought more about doing design in a reactive way, responding to new features as we add them to our code. My skeptical colleagues think that the sort of design so many of us do in agile settings is not design at all. But I think they are wrong, and the word "reactive" brought to mind a similar notion from my days in AI: reactive planning.

When I first heard that term in a graduate readings course, it sounded like an oxymoron to me. Planning is the opposite of reaction, right? If an agent does not construct a plan, then how is it planning? Reactive planning contrasts with what then came to be known as classical planning. An agile wag might coin the term "BPUF" -- big planning up-front.

But I learned about the idea and found it wasn't an oxymoron at all, that reactive planning was a real and valuable way for an agent to prepare its actions. I have learned the same is true of "reactive" design. We folks in the agile world probably haven't done enough to teach agile novices to design in this way, which is one reason I'm hoping to see more papers like the draft I just read.

January 22, 2009 4:05 PM

A Story-Telling Pattern from the Summit

At the Rebooting Computing Summit, one exercise called for us to interview each other and then report back to the group about the person we interviewed. The reports my partner and I gave, coupled with some self-reported experiences later in the day, reminded me of a pattern I've experienced in other contexts. Here is a rough first draft. Let me know what you think.

Second-Hand Story

When we need to know a person's story, our first tendency is often to ask him to tell us. After all, he knows it best, because he lived it. He has had a chance to reflect on it, to reconsider decisions, and to evaluate what the story "means".

This approach can disappoint us. Sometimes, the person is too close to the experience and attaches accidental emotions and details. Sometimes, even though he has had a chance to reflect on his experience, he hasn't reflected enough -- or perhaps not at all! Telling the story may be the first time he has thought about some of those experiences in a long time. While trying to tell the story and summarize its meaning at the same time, the storyteller may reach for an easily-found answer. The result can be trite, convenient, or self-protective. Maybe the person is simply too close to an experience to see its true meaning.

Therefore, ask the person to tell his story to someone else, focusing on "just the facts". Then, ask the interviewer to tell the story, perhaps in summary form. Let the interviewer and the listeners look for patterns and themes.

The interviewer has distance and an opportunity to listen objectively. She is less likely to impose well-rehearsed personal baggage over the story.

The result can still be trite. If the listener does not listen carefully, or is too eager to stereotype the story, then the resulting story may well be worse than the original, because it is not only sanitized but sanitized by someone without intimate connection to it.

It can be refreshing to hear someone else tell your own story, to draw conclusions, to summarize what is most important. A good listener can pick up on essential details and remove the shroud of humility or disappointment that too often obscures your own view. You can learn something about yourself!

This technique depends on two people's ability to tell a story, not one. The original story-teller must be open, honest, and willing to describe situations more than evaluate them too much. (A little evaluation is unavoidable and also useful. The listener learns something about the story-teller from that part of the story, too.) The interviewer must be a careful listener and have a similar enough background to be able to put the story into context and form reasonable abstractions about it.

Examples. I found the interviewers' reports at the Rebooting Computing summit to be insightful, including the ones that Joe Carthy and I gave on one another. Hearing someone else "tell my story" let me hear it more objectively than if I had told it myself. Occasionally I felt like chiming in to correct or add something, but I'm not sure that anything I said could have done a better job introducing myself to the rest of the group. Something Joe said during his interview of me made me think more about just how my non-CS profs helped lead me into CS, something I had never thought much about before that.

Later that day, we heard several self-reported stories, and those stories -- told by the same people who had reported on others earlier -- sounded flat and trite. I kept thinking, "What's the real story?" Maybe someone else could have told it better!

Related Ideas. I am reminded of several techniques I learned as an architecture major while studying Betty Edwards's Drawing on the Right Side of the Brain:

- drawing a chair by looking at the negative space around it

- drawing a picture of Igor Stravinsky upside down, in order to trick the visualization mechanism in our minds that jumps right to stereotypes

- drawing a paper wad, which matched no stereotyped form already in our memories

This pattern and these exercises are all examples of techniques for indirect learning. This is perhaps the first time I realized just how well indirect learning can work in a social setting.

January 21, 2009 7:55 AM

Rebooting Computing Summit -- This and That

As always, my report on the Rebooting Computing Summit left out some of the random thoughts and events that made my trip. Here are a few.

• When I was growing up I learned that the prefixes "Mc" and "O'" indicated "son of" when used in names such as McDonald and O'Herlihy. I always wondered who the original ancestors were -- the Donalds and Herlihys. (Even then, I was concerned with the base case...) One of my favorite grad school profs was named Bill McCarthy, but I had never met a Carthy. Now I have... One of my table mates at the summit was Joe Carthy of Dublin! Joe shared some valuable insights on teaching computing.

• In my report, I wrote of my vision for the future of computing, in which children will routinely walk to the computer and write a program.... "Walk to the computer" -- that is so 1990s! Today's children carry their technology in their hands.

• During one of his messages, Peter Denning showed the familiar quote, "Insanity is doing the same thing over again, expecting different results," as a motivation to change. But I think there is more to it than that. I was reminded of a recent Frazz comic, in which the precocious Caulfield pointed out that the world is always changing, so it is also insanity to do the same thing over again, expecting the same results. The world is changing around computing and computing education. There is no particular reason to think that doing the same old things better will get us anywhere useful.

• At one point, Alan Kay said that part of what is wrong with computing is that too many of us "fool around", rather than working to change the world. This, he said, is a feature of a popular culture, not a serious one. First, we had real guitar. Then came air guitar. And now we have Guitar Hero. He is, of course, right, and I have written occasionally of being shamed at coming up short when measured against his vision.

Later that evening, my roommate Robert Duvall and I discussed whether Guitar Hero might have some positives, by motivating some of the people who play it to learn to play a real guitar. I don't have a good feel for the culture around Guitar Hero, so I'll have to wait and see. New technologies often interact with younger generations in ways that we old folks can't predict. (My prurient side wants to say that Guitar Hero can't be all bad if it gives us Heidi Klum playing air guitar in her privates.)

• A Creative Interlude

On the second day of the summit, each table was asked to communicate to the rest of the groups its vision of the future of CS. The facilitators encouraged us to express our vision creatively, via role play or some other non-bullet list medium. One group did a neat job on this, with one ham performer playing the central role in a number of vignettes showing where the computing of tomorrow will have taken us.

This is the sort of exercise for which I am ill-equipped to excel alone, but I am able to do all right if I am in a group. My table decided to gang-write a song -- doggerel, really. With Christmas still close in our memory, we chose the tune to the familiar carol "Angels We Have Heard On High", in part, I think, for its soaring "Gloria"s. The result was "Everyone Now Loves CS".

Our original plan was for Susan Horwitz to sing our creation, as she does this sort of thing in many of her classes and so is used to the attention. A few of us toyed with the idea of playing air guitar in the background, but I'm glad we opted not to; the juxtaposition of our performance with Alan Kay's remarks later that afternoon would have been unfortunate indeed! About five minutes before the performance Susan informed us that we would be singing as a group. So we did. My students should not expect a reprise.

My conference history now includes singing and acting. I don't imagine that dance is in my future, but you never know.

January 20, 2009 4:27 PM

Rebooting Computing Workshop Approach Redux

I commented a couple of times in my review of the Rebooting Computing summit about the process we used and the reaction it created among the participants. We used an implementation of Appreciative Inquiry that emphasized collectively exploring our individual experiences and visions before springing into action.

Some people were skeptical from the outset, as some people will be about anything that is so "touchy-feely". Perhaps some assumed that we all know what the problem is and that enough of us know what the solution should be and so wanted to get down to business. That seems unlikely to me. Were this problem easily understood or solved, we wouldn't have needed a summit to form action groups.

The process began to unravel on the second day, and many people were losing patience. After lunch -- more than halfway through the summit -- the entire group worked together to construct an overlaid set of societal, technical, and personal timelines of computing. For me, time began to drag during this exercise, though I enjoyed hearing the stories and seeing what so many people thought stood out in our technical history.

The 'construct a timeline of computing' exercise was the final straw for some, especially some of the bigger names. While constructing the timeline, we found that many people in the room didn't know the right dates for the events they were adding. One of my colleagues finally couldn't stop himself and moved a couple of items to their right spots. It must have been especially frustrating for the people in the room who were there when these events were happening -- or who were the principal players! This is an example of how collaboration for collaboration's sake often doesn't work.

Later, Alan Kay referred to the summit's process as "participation without progress". Our process emphasized the former but in many ways impeded (or prevented) the latter. Kay said that this approach assumes all people's ideas are equally valid or useful. He called for something more like a representative democracy than what we had, which I would liken to a town hall form of government.

Kay may well be right, though a representative democracy-like approach risks losing buy-in and enthusiasm from the wider audience. Our representatives would need to be able either to solve the problems themselves or to energize "we, the people" to do the work. I'm not sure whether what ails computing can be solved by only a few, so I think bringing a large community on board at some point is necessary.

The next question is, who has the ideas that we should be acting on? I think the summit was at least partly successful in giving people with good ideas a chance to express them to a large group and in particular to let some of the more influential people in the room know about the people and the ideas. Unfortunately, the process throttled some other ideas with lack of interest. One that stands out to me now is the issue of outsourcing and intellectual property, which has been a dominant topic of discussion on the listserv since we left San Jose. Fortunately, people are talking about it now.

(I have to admit that I do not yet fully understand the problem. I either need more time to read or a bigger brain -- maybe both.)

In the end, when we identified and joined action groups, some people asked, "How different is this set of action groups than if we had started the summit with this exercise?" Many people thought we would have ended up with the same groups. I think they might be right, though I'm not sure this means there was no value in the work that led to them.

Perhaps one problem was that this process does not scale well to 200+ people. If it does, then perhaps it just didn't scale well to these 200+ people. The room was full of Type A personalities. The industry people were the kind with strong opinions and a drive to solve problems. The faculty were... Well, most faculty are control freaks, and this group was representative.

Personally, I found the process to be worth at least some of the time we spent. I enjoyed looking back at my life in computing, reflecting on my own history, reliving a few stories, and thinking about what has influenced me. I realized that my interest in computer science wasn't driven by math or CS teachers in high school or my undergraduate years. I had a natural affinity for computing and what it means. The teachers who most affected me were ones who encouraged me to think abstractly and to take ideas seriously, who gave me reason to think I could do those things. The key was to find my passion and run.

I first saw that sort of passion in William Magrath, my honors humanities prof, when I was a freshman in college. As much as I loved humanities and political science and all the rest, I had a sense that CS was where my passion lay. The one undergrad CS prof who comes to mind as an influence, William Brown, was not a researcher. He was a serious systems guy who had come from IBM. In retrospect, I credit him with showing me that CS had serious ideas and was worth deep thought. He encouraged me subtly to go to grad school and answered a lot of questions from a naive student whose background made grad school seem as far away as the moon.

I can't give a definitive review of the Rebooting Computing workshop process because, sadly, I had to return home to do my other day job and so missed the third day. From what I hear, the last day seemed to have clicked better for more people. I will say this. We have to realize that the goal of "rebooting" computing is a big task, and not everyone who needs to be involved shares the same context, history, motivation, or goals. It was worth trying to figure out some of that background before trying to make plans. Even if the process moved slower than some of us thought it should, it did get us all talking. That is a start, and the agile developer in me knows the value in that.

January 19, 2009 9:53 AM

Rebooting the Public Image of Computing

In addition to my more general comments on the Rebooting Computing summit, I made a lot of notes about the image of the discipline, which was, I think, one of the primary motivations for many summit participants. The bad image that most kids and parents have of careers in computing these days came up frequently. How can we make computing as attractive as medicine or law or business?

One of my table mates told us a story of seeing brochures for two bioinformatics programs at the same university. One was housed in the CS department, and the other was housed with the life sciences. The photos used in the two brochures painted strikingly different images in terms of how people were dressed and what the surroundings looked like. One looked like a serious discipline, while the other was "scruffy". Which one do you think ambitious students will choose? Which one will appeal to the parents of prospective students? Which one do you think was housed in CS?

Sometimes, the messages we send about our discipline are subtle, and sometimes not.

Too often, what K-12 students see in school these days under the guise of "computing" is applications. It is boring, full of black boxes with no mystery. It is about tools to use, not ideas for making things. After listening to several people relate their dissatisfaction with this view of computing, it occurred to me that one thing we might do to immediately improve the discipline's image is to get what currently passes for computing out of our schools. It tells the wrong stories!

The more commonly proposed solution is to require CS in K-12 schools and do it right. Cutting computing would be easier... Adding a new requirement to the crowded K-12 curriculum is a tall task fraught with political and economic roadblocks. And, to be honest, our success in presenting a good image of computing through introductory university courses doesn't fill me with confidence that we are ready to teach required CS in K-12 everywhere.

Don't take any of these thoughts too seriously. I'm still thinking out loud, in the spirit of the workshop. But I don't think there are any easy or obvious answers to the problems we face. One thing I liked about the summit was spending a few days with many different kinds of people who care about the problems and who all seem to be trying something to make things better.

The problems facing computing are not just about image. Some seem to think so, but they aren't. Yet image is part of the problem. And the stories we tell -- explicitly and implicitly -- matter.

January 17, 2009 3:09 PM

Notes on the Rebooting Computing Summit

[This is the first of several pieces on my thoughts at the Rebooting Computing summit, held January 12-14 in San Jose, California. Later articles talk about the image of CS, thoughts on approach, thoughts on personal stories and, as always, a little of this and that.]

This was unusual: a workshop with no laptops out. Some participants broke the rule almost immediately, including some big names, but I decided to follow my leaders. That meant no live note-taking, except on paper, nor any interleaved blogging. I decided to expand this opportunity and take advantage of an Internet-free trip, even back in the hotel!

The workshop brought together 225 people or so from all parts of computer science: industry, universities, K-12 education, and government. We had a higher percentage of women than the discipline as a whole, which made sense given our goals, and a large international contingent. Three Turing Award winners joined in: Alan Kay, Vinton Cerf, and Fran Allen, who was an intellectual connection to the compiler course I put on hold for a few days in order to participate myself. There were also a number of other major contributors to computing, people such as Grady Booch, Richard Gabriel, Dan Ingalls, and the most famous of three Eugenes in the room, Gene Spafford.

Most came without knowing what in particular we would do for these three days, or how. The draw was the vision: a desire to reinvigorate computing everywhere.

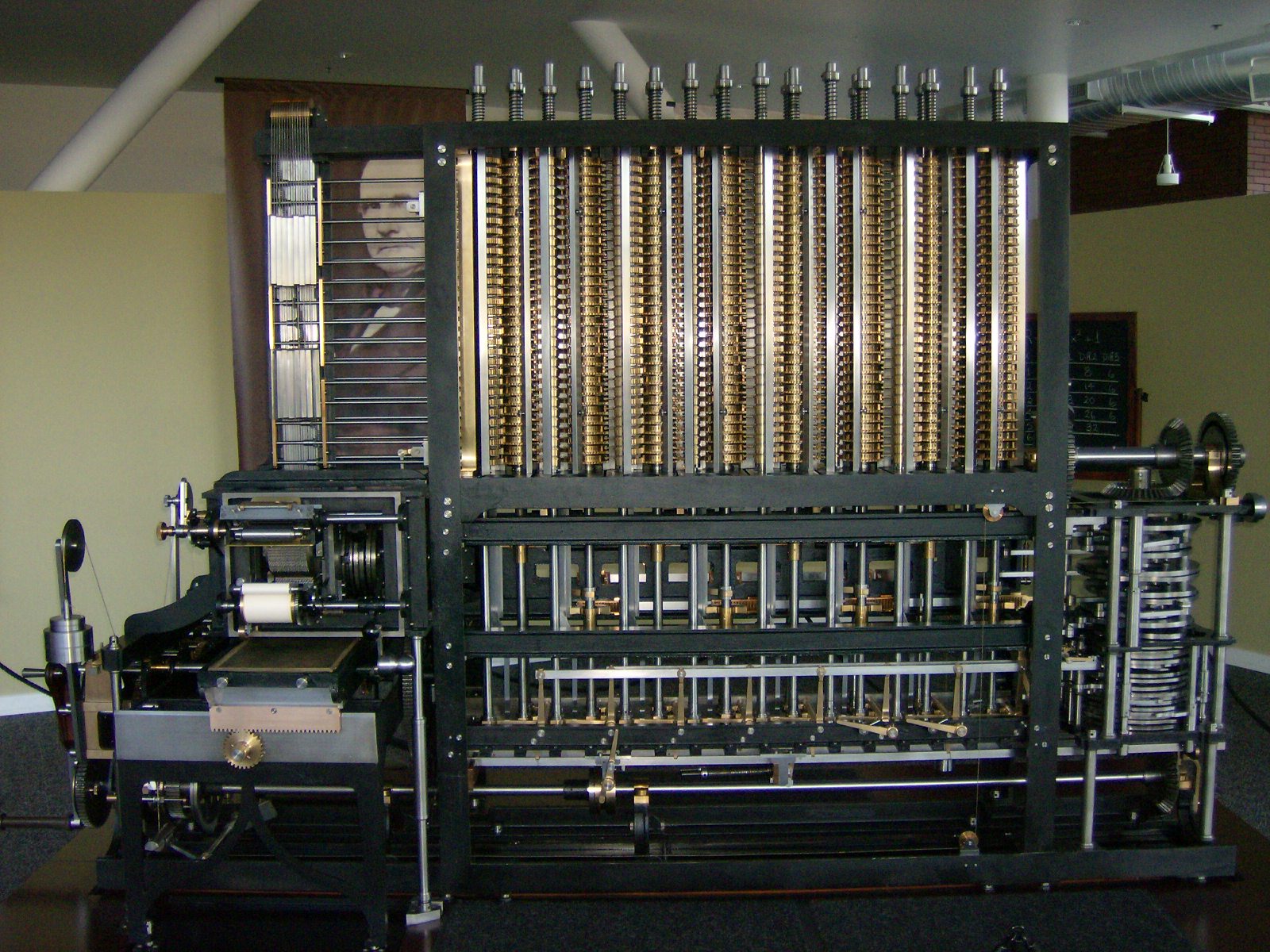

The location was a perfect backdrop, the Computer History Museum. The large printout of a chess program on old green and white computer paper hanging from the rafters near our upper-floor meeting room served as a constant reminder of a day when everything about computers and programming seemed new and exciting, when everything was a challenge to tackle. The working replica of Charles Babbage's Difference Engine on the main floor reminded us that big dreams are good -- and may go unfulfilled in one's lifetime.

The Introduction

Peter Denning opened with a few remarks on what led him to organize the workshop. He expressed his long-standing dissatisfaction with the idea that computer science == programming. Dijkstra famously proclaimed that he was a programmer, and proud of it, but Denning always knew there was something bigger. What is missing if we think only of programming? Using his personal experience as an example, he claimed that the outliers in the general population who enter CS have invested many hours in several kinds of activity: math, science, and engineering.

Throughout much of the history of computer science, people made magic because they didn't know what they were doing was impossible. This gave rise to the metaphor driving the workshop and his current effort -- to reboot computing, to clean out the cruft. We have non-volatile memory, though, so we can start fresh with wisdom accumulated over the first 60-70 years of the discipline. (Later the next day, Alan Kay pointed out that rebooting leaves the same operating system and architecture in place, and that what we need to do is redesign them from the bottom up, too!)

A spark ignited inside each of us once that turned us onto computing. What was it? Why did it catch? How? One of the goals of the summit was to find out how we can create the same conditions for others.

The Approach

The goal of the workshop was to change the world -- to change how people think about computing. The planning process destroyed the stereotypes that the non-CS facilitators held about CS people.

The workshop was organized around Appreciative Inquiry (AI, but not the CS one -- or the one from the ag school!), a process I first heard about in a paper by Kent Beck. It uses an exploration of positive experiences to help understand a situation and choose action. For the summit, this meant developing a shared positive context about computing before moving on to the choosing of specific goals and plans.

Our facilitators used an implementation they characterized as Discovery-Dream-Design-Destiny. The idea was to start by examining the past, then envision the future, and finally to return to the present and make plans. Understanding the past helps us put our dreams into context, and building a shared vision of the future helps us to convert knowledge into actions.

One of our facilitators, Frank Barrett, a former professional jazz musician, said that an old saying from his past career is "Great performances create great listeners." He believes, though, that the converse is true: "Great listeners create great performances." He encouraged us to listen to one another's stories carefully and look for common understanding that we could convert into action.

Frank also said that the goal of the workshop is really to change how people talk, not just think, about computing. Whenever you propose that kind of change, people will see you as a revolutionary -- or as a lunatic.

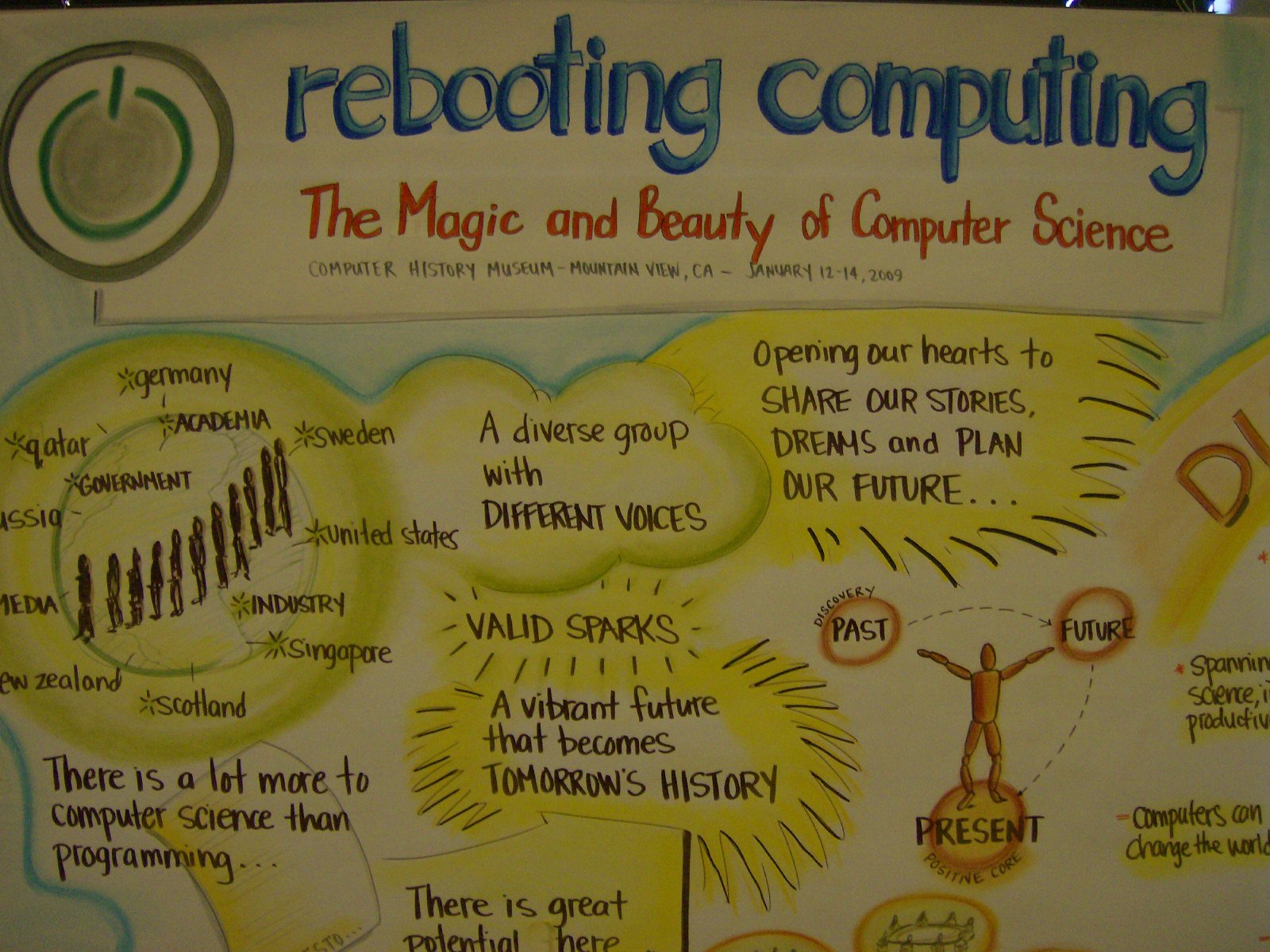

An unusual complement to this humanistic approach to the workshop was a graphic artist who was recording the workshop live before our eyes, in image and word. Even when the record was mostly catchphrases that could have become part of a slide presentation, the colors and shapes added a nice touch to the experience.

The Spark

What excited most of us about computing was solving a problem -- having something that was important to us, sometimes bigger than we could do easily by hand, and doing it with a computer. Sometimes we enjoyed the making of things that could serve our needs. Sometimes we were enlivened by making something we found beautiful.

Still, a lot of people in the room "stumbled" or "drifted" into CS from other places. Those words carry quite different images of people's experiences. However subtle the move, they all seemed to have been working on real problems.

One of the beauties of computer science is that it is in and about everything. For many, computing is a lens through which to study problems and create solutions. Like mathematics, but more.

One particular comment made the first morning stood out in my mind. The gap between what people want to make with a computer and what they can reasonably make has widened considerably in the last thirty years. What they want to make is influenced by what they see and use every day. Back in 1980 I wanted to write a program to compute chess ratings, and a bit of BASIC was all I needed. Kids these days walk around with computational monsters in their pockets, sometimes a couple, and their desires have grown to match. Show them Java or Python, let alone BASIC, and they may well feel deflated before considering just what they could do.

Computing creates a new world. It builds new structures on top of old, day by day. Computing is different today than it was thirty years ago -- and so is the world. What excited us may well not excite today's youth.

What about what excited us might?

(Like any good computer scientist, I have gone meta.)

Educators cannot create learning. Students do that. What can educators provide? A sense of quality. What is good? What is worth doing? Why?

History

A lot of great history was made and known by the people in this room. The rest of us have lived through some of it. Just hearing some of these lessons reignited the old spark inside of me.

Consider Alan Turing's seminal 1936 paper on the Halting Problem. Part of the paper is Turing "thumbing his nose" at his skeptical mathematician colleagues, saying "The very question is computation. You can't escape it."

One time, Charles Babbage was working with his friend, the astronomer Herschel. They were using a set of astronomy tables to solve a problem, and Babbage became frustrated by errors in the tables. He threw the book at a wall and said, "I wish these calculations had been executed by steam!" Steam.

Ada Lovelace referred to Babbage's Difference Engine as a machine that "weaves patterns of ideas".

Alan Kay reminded us of the ultimate importance of what Turing taught us: If you don't like the machine you have, you can make the machine you want.

AI -- the computing kind, which was the source of many of my own initial sparks -- has had two positive effects on the wider discipline. First, it has always offered a big vision of what computing can be. Second, even when it struggles to reach that vision, it spins off new technologies it creates along the way.

At some point in the two days, Alan Kay chastised us. Know your history! Google puts all seventy-five or so of Douglas Engelbart's papers at your fingertips. Do you even type the keywords into the search box, let alone read them?

About Computing

A common thread throughout the workshop was, what are the key ideas and features of computing that we should not lose as we move forward? There were some common answers. People want to solve real problems for real people. They want to find ideas in experience and applications. Another was the virtue of persistence, which one person characterized as "embracing failure" -- a twisted but valuable perspective. Yet another was the idea of "no more black boxes", whether hardware or software. Look inside, and figure out what makes it tick. None of these are unique to CS, but they are in some way essential to it.

Problem solving came up a lot, too. I think that people in most disciplines "solve problems" and so would claim problem solving as an essential feature of the discipline. Is computer science different? I think so. CS is about the process of solving problems. We seek to understand the nature of algorithms and how they manipulate information. Whichever real problem we have just solved, we ask, "How?" and "Why?" We try to generalize and understand the data and the algorithm.

Another common feature that many thought essential to computing is that it is interdisciplinary. CS reaches into everything. This has certainly been one of the things that has attracted me to computing all these years. I am interested in many things, and I love to learn about ideas that transcend disciplines -- or that seem to but don't. What is similar and dissimilar between two problems or solutions? Much of AI comes down to knowing what is similar and what is not, and that idea held my close attention for more than a decade.

While talking with one of my table mates at the summit, I realized that this was one of the biggest influences my Ph.D. advisor, Jon Sticklen, had on me. He approached AI from all directions, from the perspectives of people solving problems in all disciplines. He created an environment that sought and respected ideas from everywhere, and he encouraged that mindset in all who studied in his lab.

Programming

While I respect Denning's dissatisfaction with the idea that computer science == programming, I don't think we should lose the idea of programming whatever we do to reinvigorate computing. Whatever else computing is, in the end, it all comes down to a program running somewhere.

When it was my turn to describe part of my vision for the future of computing, I said something like this:

When they have questions, children will routinely walk to the computer and write a program to find the answers, just as they now use Google, Wikipedia, or IMDB to look up answers.

I expected to have my table mates look at me funny, but my vision went over remarkably well. People embraced the idea -- as long as we put it in the context of "solving a problem". When I ventured further to using a program "to communicate an idea", I met resistance. Something unusual happened, though. As the discussion continued, every once in a while someone would say, "I'm still thinking about communicating an idea with a program...". It didn't quite fit, but they were intrigued. I consider that progress.

Closing

At the end of the second day, we formed action groups around a dozen or so ideas that had a lot of traction across the whole group. I joined a group interested in using problem-based learning to change how we introduce computing to students.

That seemed like a moment when we would really get down to business, but unfortunately I had to miss the last day of the summit. This was the first week of winter semester classes at my university, and I could not afford to miss both sessions of my course. I'm waiting to hear from other members of my group, to see what they discussed on Wednesday and what we are going to do next.

As I was traveling back home the next day, I thought about whether the workshop had been worth missing most of the first week of my semester. I'll talk more about the process and our use of time in a later entry. But whatever else, the summit put a lot of different people from different parts of the discipline into one room and got us talking about why computing matters and how we can help to change how the world thinks -- and talks -- about it. That was good.

Before I left for California, I told a colleague that this summit held the promise of being something special, and that it also bore the risk of being the same old thing, with visionaries, practitioners, and career educators chasing their tails in a vain effort to tame this discipline of ours. In the end, I think it was -- as so many things turn out to be -- a little of both.

January 07, 2009 6:18 PM

Looking Ahead -- To Next Week

The latest edition of inroads, the quarterly publication of SIGCSE, arrived in my mailbox yesterday. The invited editorial was timely for me, as I finally begin to think about teaching this spring. Alfred Aho, co-author of the dragon book and creator of several influential languages and programming tools, wrote about Teaching the Compilers Course. He didn't write a theoretical article or a solely historical one, either; he's been teaching compilers every semester for the last many years at Columbia.

As always, I enjoyed reading what an observant mind has to say about his own work. It's comforting to know that he and his students face many of the same challenges with this course as my students and I do, from the proliferation of powerful, practical tools to the broad range of languages and target machines available. (He also faces a challenge I do not -- teaching his course to 50-100 students every term. Let's just say that my section this spring offers ample opportunity for familiarity and one-on-one interaction!)

Two of Aho's ideas are now germinating in my mind as I settle on my course. The first is something that has long been a feature of my senior project courses other than compilers: an explicit focus on the software engineering side of the project. Back when I taught our Knowledge-Based Systems course (now called Intelligent Systems) every year, we paid a lot of attention to the process of writing a large program as a team, from gathering requirements and modeling knowledge to testing and deploying the final system. We often used a supplementary text on managing the process of building KBS. Students produced documents as well as code and evaluated team members on their contributions and efforts.

When I moved to compilers five or six years ago after a year on sabbatical, I de-emphasized the software engineering process. First of all, I had smaller classes and so no teams of three, four, or five. Instead, I had pairs or even individuals flying solo. Managing team interactions became less important, especially when compared to the more intellectually daunting content of the compiler course. Second, the compiler students tended to skew a little higher academically, and they seemed to be able to handle more effectively the challenge of writing a big program. Third, maybe I got a little lazy and threw myself into the fun content of the course, where my own natural inclinations lie.

Aho has his students work in teams of five and, in addition to writing a compiler and demoing at the end of the semester:

- write a white paper on their source language,

- write a tutorial on using it, and

- close with a substantial project report written by every member of the team.

This short article has reawakened my interest in having my students -- many of whom will graduate into professional careers developing software and managing its development -- attend more to process. I'll keep it light, but these three documents (white paper, tutorial, and project report) will provide structure to tasks the students already have to do, such as to understand their source language well and to explore the nooks and crannies of its use.

The second idea from Aho's article is to have students design their own language to compile. This is something I have never done. It is also a feature that brings more value to the writing of a white paper and a tutorial for the language. I've always given students a language loosely of my own design, adapted from the many source languages I've encountered in colleagues' courses and professional experience. When I design the language, I have to write specs and clarifications; I have to code sample programs to demonstrate the semantics of the language and to test the students' compilers at the end of the semester.

I like the potential benefits of having students design their own language. They will encounter some of the issues that the designers of the languages they use, such as Java, C++, and Ada, faced. They can focus their language on a domain or a technology niche of interest to them, such as music and gaming or targeting a multi-core machine. They may even care more about their project if they are implementing an engine that makes their own creation come to life.

If I adopt these course features, they will shift the burden between instructor and student in some unusual ways. Students will have to put more energy into the languages of the course and write more documentation. I will have to learn about their languages and follow their projects much more intimately as the semester proceeds in order to be able to provide the right kind of guidance at the right moments. But this shift, while demanding different kinds of work on both our parts, should benefit both of us. When I design more of the course upfront, I have greater control over how the projects proceed. This gives me a sense of comfort but deprives the students of experiences with language design and evolution that will serve them well in their careers. The sense of comfort also deprives me of something: the opportunity to step into the unknown in real-time and learn. Besides, my students often surprise me with what they have to teach me.

As I said, I'm just now starting to think about my course in earnest after letting Christmas break be a break. And I'm starting none too soon -- classes begin Monday. Our first session will not be until Thursday, however, as I'll begin the week at the Rebooting Computing summit. This is not the best timing for a workshop, but it does offer me the prospect of a couple of travel days away from the office to think more about my course!

January 02, 2009 10:42 PM

Fly on the Wall

A student wrote to tell me that I had been Reddited again, this time for my entry reflecting on this semester's final exam. I had fun reading the comments. Occasionally, I found myself thinking about how a poster had misread my entry. Even a couple of other posters commented as much. But I stopped myself and remembered what I had learned from writers' workshops at PLoP: they had read my words, which must stand on their own. Reading over a thread that discusses something I've written feels a little bit like a writers' workshop. As an author, I never know how others might interpret what I have written until I hear or read their interpretation in their own words. Interposing clarifying words is a tempting but dangerous trap.

Blog comments, whether on the author's site or on a community site such as Reddit, do tend to drift far afield from the original article. That is different from a PLoP workshop, in which the focus should remain on the work being discussed. In the case of my exam entry, the drift was quite interesting, as people discussed accumulator variables (yes, I was commenting on how students tend to overuse them; they are a wonderful technique when used appropriately) and recursion (yes, it is hard for imperative thinkers to learn, but there are techniques to help...). Well worth the read. But I could also see that sometimes a subthread comes to resemble an exchange in the children's game Telephone. Unless every commenter has read the original article -- in this case, mine -- the discussion tends to drift monotonically away from the content of the original as it loses touch with each successive post. Frankly, that's all right, too. I just hope that I am not held accountable for what someone at the end of the chain says I wrote...