September 22, 2023 8:38 PM

Time and Words

Earlier this week, I posted an item on Mastodon:

After a few months studying and using CSS, every once in a while I am able to change a style sheet and cause something I want to happen. It feels wonderful. Pretty soon, though, I'll make a typo somewhere, or misunderstand a property, and not be able to make anything useful happen. That makes me feel like I'm drowning in complexity.

Thankfully, the occasional wonderful feelings — and my strange willingness to struggle with programming errors — pull me forward.

I've been learning a lot while preparing to teach our web development course. Occasionally, I feel incompetent, or not very bright. It's good for me.

I haven't been blogging lately, but I've been writing lots of words. I've also been re-purposing and adapting many other words that are licensed to be reusable (thanks, David Humphrey and John Davis). Prepping a new course is usually prime blogging time for me, with my mind in a constant state of churn, but this course has me drowning in work to do. There is so much to learn, and so much new material — readings, session plans and notes, examples, homework assignments — to create.

I have made a few notes along the way, hoping to expand them into posts. Today they become part of this post.

VS Code

This is my first time in a long time using an editor configured to auto-complete and do all the modern things that developers expect these days. I figured, new tool, why not try a new workflow...

After a short period of breaking in, I'm quite enjoying the experience. One feature I didn't expect to use so much is the ability to collapse an HTML element. In a large HTML file, this has been a game changer for me. Yes, I know, this is old news to most of you. But as my brother loves to say when he gets something used or watches a movie everyone else has already seen, "But, hey, it's new to me!" VS Code's auto-complete across HTML, CSS, and JavaScript, with built-in documentation and links to MDN's more complete documentation, lets me type code much faster than ever before. It made me think of one of my favorite Kent Beck lines:

As the speed of development approaches infinity, reuse becomes irrelevant.

When programming, I often copy, paste, and adapt previous code. In VS Code, I have found myself moving fast enough that copy, paste, and adapt would slow me down. That sort of reuse has become irrelevant.

Examples > Prose

The class sessions for which I have written the longest and most complete notes for my students (and me) tend to be the ones for which I have the fewest, or least well-developed, code examples. The reverse is also true: lots of good examples and code tend to mean smaller class notes. Sometimes that is because I run out of time to write much prose to accompany the session. Just as often, though, it's because the examples give us plenty to do live in class, where the learning happens in the flow of writing and examining code.

This confirms something I've observed over many years of teaching: Examples First tends to work better for most students, even people like me who fancy themselves as top-down thinkers. Good examples and code exercises can create the conditions in which abstract knowledge can be learned. This is a sturdy pedagogical pattern.

Concepts and Patterns

There is so, so much to CSS! HTML itself has a lot of details for new coders to master before they reach fluency. Many of the websites aimed at teaching and presenting these topics quickly begin to feel like a downpour of information, even when the authors try to organize it all. It's too easy to fall into, "And then there's this other property...".

After a few weeks, I've settled into trying to help students learn two kinds of things for each topic:

- a couple of basic concepts or principles

- a few helpful patterns

~~~~~

We are only five weeks into a fifteen-week semester, so take any conclusions I draw with a grain of salt. We also haven't gotten to JavaScript yet, the teaching and learning of which will present a different sort of challenge than HTML and CSS with students who have little or no experience programming. Maybe I will make time to write up our experiences with JavaScript in a few weeks.

Posted by Eugene Wallingford | Permalink | Categories: Patterns, Software Development, Teaching and Learning

July 10, 2023 12:28 PM

What We Know Affects What We See

Last time I posted this passage from Shop Class as Soulcraft, by Matthew Crawford:

Countless times since that day, a more experienced mechanic has pointed out to me something that was right in front of my face, but which I lacked the knowledge to see. It is an uncanny experience; the raw sensual data reaching my eye before and after are the same, but without the pertinent framework of meaning, the features in question are invisible. Once they have been pointed out, it seems impossible that I should not have seen them before.

We perceive in part based on what we know. A lack of knowledge can prevent us from seeing what is right in front of us. Our brains and eyes work together, and without a perceptual frame, they don't make sense of the pattern. Once we learn something, our eyes -- and brains -- can.

This reminds me of a line from the movie The Santa Clause, which my family watched several times when my daughters were younger. The new Santa Claus is at the North Pole, watching magical things outside his window, and comments to the elf who's been helping him, "I see it, but I don't believe it." She replies that adults don't understand: "Seeing isn't believing; believing is seeing." As a mechanic, Crawford came to understand that knowing is seeing.

Later in the book, Crawford describes another way that knowing and perceiving interact with one another, this time with negative results. He had been struggling to figure out why there was no spark at the spark plugs in his VW Bug, and his father -- an academic, not a mechanic -- told him about Ohm's Law:

Ohm's law is something explicit and rulelike, and is true in the way that propositions are true. Its utter simplicity makes it beautiful; a mind in possession of this equation is charmed with a sense of its own competence. We feel we have access to something universal, and this affords a pleasure that is quasi-religious, perhaps. But this charm of competence can get in the way of noticing things; it can displace, or perhaps hamper the development of, a different kind of knowledge that may be difficult to bring to explicit awareness, but is superior as a practical matter. Its superiority lies in the fact that it begins with the typical rather than the universal, so it goes more rapidly and directly to particular causes, the kind that actually tend to cause ignition problems.

Rule-based, universal knowledge imposes a frame on the scene. Unfortunately, its universal nature can impede perception by blinding us to the particular details of the situation we are actually in. Instead of seeing what is there, we see the scene as our knowledge would have it.


This reminds me of a story and a technique from the book Drawing on the Right Side of the Brain, which I first wrote about in the earliest days of this blog. When asked to draw a chair, most people barely even look at the chair in front of them. Instead, they start drawing their idea of a chair, supplemented by a few details of the actual chair they see. That works about as well as diagnosing an engine by examining your mind's-eye image of an engine, rather than the mess of parts in front of you.

In that blog post, I reported my experience with one of Betty Edwards's techniques for seeing the chair, drawing the negative space:

One of her exercises asked the student to draw a chair. But, rather than trying to draw the chair itself, the student is to draw the space around the chair. You know, that little area hemmed in between the legs of the chair and the floor; the space between the bottom of the chair's back and its seat; and the space that is the rest of the room around the chair. In focusing on these spaces, I had to actually look at the space, because I don't have an image in my brain of an idealized space between the bottom of the chair's back and its seat. I had to look at the angles, and the shading, and that flaw in the seat fabric that makes the space seem a little ragged.

Sometimes, we have to trick our eyes into seeing, because otherwise our brains tell us what we see before we actually look at the scene. Abstract universal knowledge helps us reason about what we see, but it can also impede us from seeing in the first place.

What we know both enables and hampers what we perceive. This idea has me thinking about how my students this fall, non-CS majors who want to learn how to develop web sites, will encounter the course. Most will be novice programmers who don't know what they see when they are looking at code, or perhaps even at a page rendered in the browser. Debugging code will be a big part of their experience this semester. Are there exercises I can give them to help them see accurately?

As I said in my previous post, there's lots of good stuff happening in my brain as I read this book. Perhaps more posts will follow.

Posted by Eugene Wallingford | Permalink | Categories: Patterns, Software Development, Teaching and Learning

December 22, 2022 1:21 PM

The Ability to Share Partial Results Accelerated Modern Science

This passage is from Lewis Thomas's The Lives of a Cell, in the essay "On Societies as Organisms":

The system of communications used in science should provide a neat, workable model for studying mechanisms of information-building in human society. Ziman, in a recent "Nature" essay, points out, "the invention of a mechanism for the systematic publication of fragments of scientific work may well have been the key event in the history of modern science." He continues:

A regular journal carries from one research worker to another the various ... observations which are of common interest. ... A typical scientific paper has never pretended to be more than another little piece in a larger jigsaw -- not significant in itself but as an element in a grander scheme. The technique of soliciting many modest contributions to the store of human knowledge has been the secret of Western science since the seventeenth century, for it achieves a corporate, collective power that is far greater than any one individual can exert [italics mine].

Over the last few decades, sites like arXiv lowered the barrier to publishing and reading the work of other scientists even further. So did blogs, where scientists could post even smaller, fresher fragments of knowledge. Blogs also democratized science, by enabling scientists to explain results for a wider audience and at greater length than journals allow. Then came social media sites like Twitter, which made it even easier for laypeople and scientists in other disciplines to watch -- and participate in -- the conversation.

I realize that this blog post quotes an essay that quotes another essay. But I would never have seen the Ziman passage without reading Thomas. Perhaps you would not have seen the Thomas passage without reading this post? When I was in college, the primary way I learned about things I didn't read myself was by hearing about them from classmates. That mode of sharing puts a high premium on having the right kind of friends. Now, blogs and social media extend our reach. They help us share ideas and inspirations, as well as helping us to collaborate on science.

~~~~

I first mentioned The Lives of a Cell a couple of weeks ago, in If only ants watched Netflix.... This post may not be the last to cite the book. I find something quotable and worth further thought every few pages.

December 04, 2022 9:18 AM

If Only Ants Watched Netflix...

In the essay "On Societies as Organisms", Lewis Thomas says that we "violate science" when we try to read human meaning into the structures and behaviors of insects. But it's hard not to:

Ants are so much like human beings as to be an embarrassment. They farm fungi, raise aphids as livestock, launch armies into wars, use chemical sprays to alarm and confuse enemies, capture slaves. The families of weaver ants engage in child labor, holding their larvae like shuttles to spin out the thread that sews the leaves together for their fungus gardens. They exchange information ceaselessly. They do everything but watch television.

I'm not sure if humans should be embarrassed for still imitating some of the less savory behaviors of insects, or if ants should be embarrassed for reflecting some of the less savory behaviors of humans.

Biology has never been my forte, so I've read and learned less about it than many other sciences. Enjoying chemistry a bit at least helped keep me within range of the life sciences. I was fortunate to grow up in the Digital Age.

But with many people thinking the 21st century will be the Age of Biology, I feel like I should get more in tune with the times. I picked up Thomas's now classic The Lives of a Cell, in which the quoted essay appears, as a brief foray into biological thinking about the world. I'm only a few pages in, but it is striking a chord. I can imagine so many parallels with computing and software. Perhaps I can be as at home in the 21st century as I was in the 20th.

December 03, 2022 2:34 PM

Why Teachers Do All That Annoying Stuff

Most people, when they become teachers, tell themselves that they won't do all the annoying things that their teachers did. If they teach for very long, though, they almost all find themselves slipping back to practices they didn't like as a student but which they now understand from the other side of the fence. Dynomight has written a nice little essay explaining why. Take deadlines. Why have deadlines? Let students learn and work at their own pace. Grade what they turn in, and let them re-submit their work later to demonstrate their newfound learning.

Indeed, why not? Because students are clever and occasionally averse to work. A few of them will re-invent a vexing form of the ancient search technique "Generate and Test". From the essay:

1. Write down some gibberish.

2. Submit it.

3. Make a random change, possibly informed by feedback on the last submission.

4. Resubmit it. If the grade improved, keep it, otherwise revert to the old version.

5. Goto 3.
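Those steps describe a random hill climber more than a strict generate-and-test, since each candidate is a small tweak of the last one kept. A minimal sketch in Python, assuming a hypothetical grader that reveals only a score (here, the number of characters that match a hidden target string); `grade` and `generate_and_test` are illustrative names, not from the essay:

```python
import random


def grade(submission: str, target: str) -> int:
    # Hypothetical grader: one point per character in the right position.
    return sum(s == t for s, t in zip(submission, target))


def generate_and_test(target: str, alphabet: str, steps: int, seed: int = 0) -> str:
    rng = random.Random(seed)
    # Step 1: write down some gibberish of the right length.
    current = "".join(rng.choice(alphabet) for _ in target)
    # Step 2: submit it.
    best = grade(current, target)
    for _ in range(steps):
        # Step 3: make a random change to one position.
        i = rng.randrange(len(current))
        candidate = current[:i] + rng.choice(alphabet) + current[i + 1:]
        # Step 4: resubmit; keep the change only if the grade improved.
        score = grade(candidate, target)
        if score > best:
            current, best = candidate, score
        # Step 5: "goto 3" is simply the next loop iteration.
    return current
```

In this toy setting, the loop reaches a perfect score without ever understanding the problem; the grader does all of the thinking, which is exactly what makes the pattern so vexing for a teacher.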

You may think this is a caricature, but I see this pattern repeated even in the context of weekly homework assignments. A student will start early and begin a week-long email exchange where they eventually evolve a solution that they can turn in when the assignment is due.

I recognize that these students are responding in a rational way to the forces they face: usually, uncertainty and a lack of the basic understanding needed to even start the problem. My strategy is to try to engage them early on in the conversation in a way that helps them build that basic understanding and to quiet their uncertainty enough to make a more direct effort to solve the problem.

Why even require homework? Most students and teachers want grades to reflect the student's level of mastery. If we eliminate homework, or make it optional, students have the opportunity to demonstrate their mastery on the final exam or final project. Why indeed? As the essay says:

But just try it. Here's what will happen:

- Like most other humans, your students will be lazy and fallible.

- So many of them will procrastinate and not do the homework.

- So they won't learn anything.

- So they will get a terrible grade on the final.

- And then they will blame you for not forcing them to do the homework.

Again, the essay is written in a humorous tone that exaggerates the foibles and motivations of students. However, I have been living a variation of this pattern in my compilers course over the last few years. Here's how things have evolved.

I assign the compiler project as six stages of two weeks each. At the end of the semester, I always ask students for ways I might improve the course. After a few years teaching the course, students began to tell me that they found themselves procrastinating at the start of each two-week cycle and then having to scramble in the last few days to catch up. They suggested I require future students to turn something in at the end of the first week, as a way to get them started working sooner.

I admired their self-awareness and added a "status check" at the midpoint of each two-week stage. The status check was not to be graded, but to serve as a milepost they could aim for in completing that cycle's work. The feedback I provided, informal as it was, helped them stay on course, or get back on course, if they had missed something important.

For several years, this approach worked really well. A few teams gamed the system, of course (see generate-and-test above), but by and large students used the status checks as intended. They were able to stay on track time-wise and to get some early feedback that helped them improve their work. Students and professor alike were happy.

Over the last couple of years, though, more and more teams have begun to let the status checks slide. They are busy, overburdened in other courses or distracted by their own projects, and ungraded work loses priority. The result is exactly what the students who recommended the status checks knew would happen: procrastination and a mad scramble in the last few days of the stage. Unfortunately, this approach can lead a team to fall farther and farther behind with each passing stage. It's hard to produce a complete working compiler under these conditions.

Again, I recognize that students usually respond in a rational way to the forces they face. My job now is to figure out how we might remove those forces, or address them in a more productive way. I've begun thinking about alternatives, and I'll be debriefing the current offering of the course with my students over the next couple of weeks. Perhaps we can find something that works better for them.

That's certainly my goal. When a team succeeds at building a working compiler, and we use it to compile and run an impressive program -- there's no feeling quite as joyous for a programmer, or a student, or a professor. We all want that feeling.

Anyway, check out the full essay for an entertaining read that also explains quite nicely that teachers are usually responding in a rational way to the forces they face, too. Cut them a little slack.

Posted by Eugene Wallingford | Permalink | Categories: Managing and Leading, Patterns, Teaching and Learning

October 30, 2022 9:32 AM

Recognize

From Robin Sloan's newsletter:

There was a book I wanted very badly to write; a book I had been making notes toward for nearly ten years. (In my database, the earliest one is dated December 13, 2013.) I had not, however, set down a single word of prose. Of course I hadn't! Many of you will recognize this feeling: your "best" ideas are the ones you are most reluctant to realize, because the instant you begin, they will drop out of the smooth hyperspace of abstraction, apparate right into the asteroid field of real work.

I can't really say that there is a book I want very badly to write. In the early 2000s I worked with several colleagues on elementary patterns, and we brainstormed writing an intro CS textbook built atop a pattern language. Posts from the early days of this blog discuss some of this work from ChiliPLoP, I think. I'm not sure that such a textbook could ever have worked in practice, but I think writing it would have been a worthwhile experience anyway, for personal growth. But writing such a book takes a level of commitment that I wasn't able to make.

That experience is one of the reasons I have so much respect for people who do write books.

While I do not have a book for which I've been making notes in recent years, I do recognize the feeling Sloan describes. It applies to blog posts and other small-scale writing. It also applies to new courses one might create, or old courses one might reorganize and teach in a very different way.

I've been fortunate to be able to create and re-create many courses over my career. I also have some ideas that sit in the back of my mind because I'm a little concerned about the commitment they will require, the time and the energy, the political wrangling. I'm also aware that the minute I begin to work on them, they will no longer be perfect abstractions in my mind; they will collide with reality and require compromises and real work.

(TIL the word "apparate". I'm not sure how I feel about it yet.)

July 28, 2022 11:48 AM

A "Teaching with Patterns" Workshop


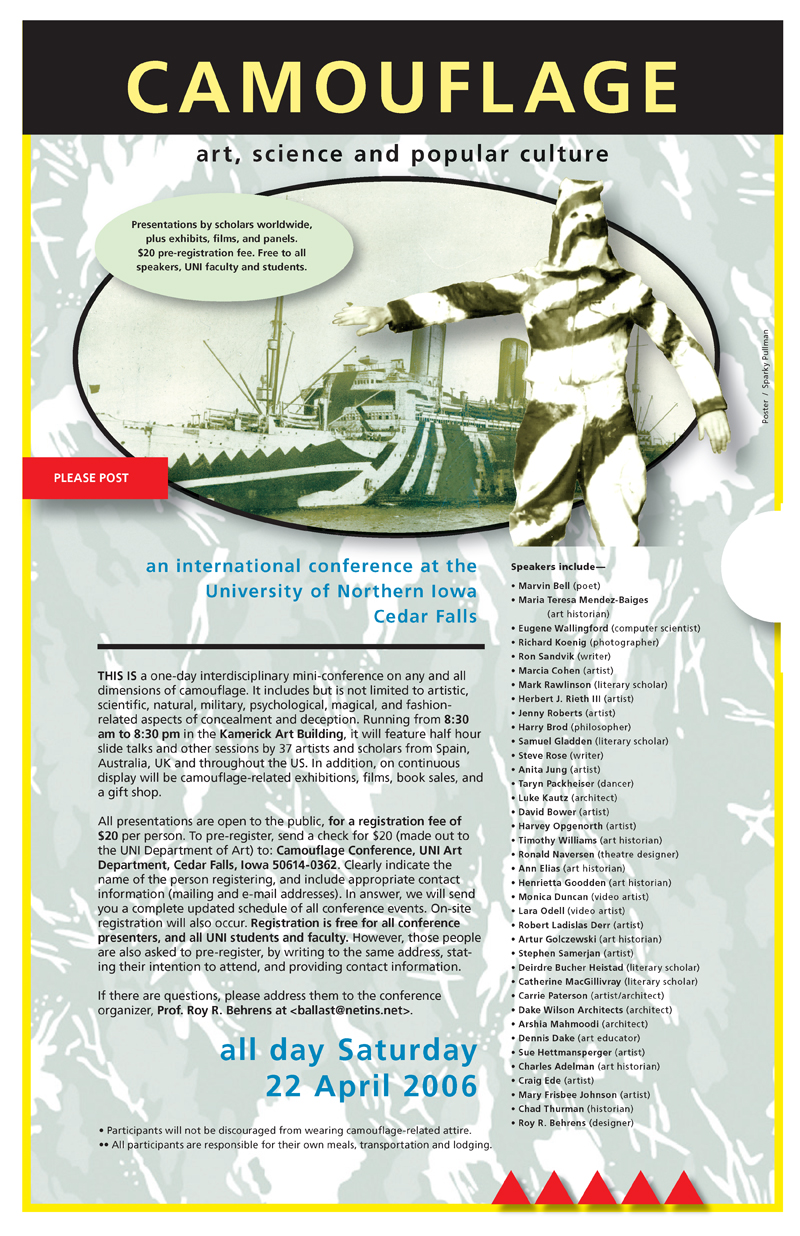

Yesterday afternoon I attended a Teaching with Patterns workshop that is part of PLoP 2022.

When the pandemic hit in 2020, PLoP went virtual, as did so many conferences. It was virtual last year and will be again this year. On the spur of the moment, I attended a couple of the 2020 keynote talks online. Without a real commitment to the conference, though, I let my day-to-day teaching and admin keep me from really participating. (You may recall that I did it right for StrangeLoop last year, as evidenced by several blog posts ending here.)

When I peeked in on PLoP virtually a couple of years ago, it had already been too many years since my last PLoP, which itself came after too many years away! Which is to say: I miss PLoP and my friends and colleagues in the software patterns world.

PLoP 2022 itself is in October. Teaching with Patterns was a part of PLoPourri, a series of events scheduled throughout the year in advance of the conference proper. It was organized by Michael Weiss of Carleton University in Ottawa, a researcher on open source software.

Upon joining the Zoom session for the workshop, I was pleased to see Michael, a colleague I first met at PLoP back in the 1990s, and Pavel Hruby, whom I met at one of the first ChiliPLoPs many years ago. There were a handful of other folks participating, too, bringing experience from a variety of domains. One, Curt McNamara, has significant experience using patterns in his course on sustainable graphic design.

The workshop was a relaxed affair. First, participants shared their experiences teaching with patterns. Then we all discussed our various approaches and shared lessons we have learned. Michael gave a few opening remarks and then asked four questions to prime the conversation:

- How do you use patterns when you teach a topic?

- What format do you use to teach with patterns?

- Do you teach with your own or existing patterns?

- What works best/worst?

My answers may sound familiar to anyone who has read my blog long enough to hear me talk about the courses I teach and, going way back, to my work at PLoP and ChiliPLoP.

I use patterns to teach design techniques and trade-offs. It's easy to lecture students on particular design solutions, but that's not very effective at helping students learn how to do design, to make choices based on the forces at play in any given problem. Patterns help me to think about the context in which a technique applies, the trade-offs it helps us to make, and the state of the resulting solution.

I rarely have students read patterns themselves, at least in one of the stylized pattern forms. Instead, I integrate the patterns into the stories I tell, into the conversations I have with my students about problems and solutions, and finally into the session notes I write up for them and me.

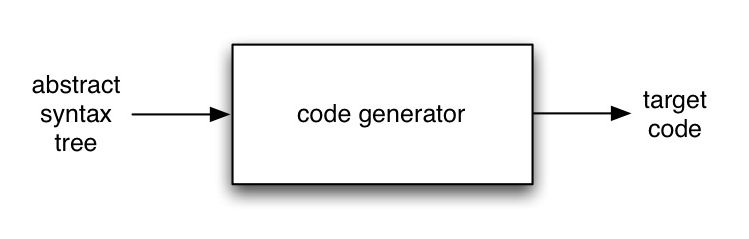

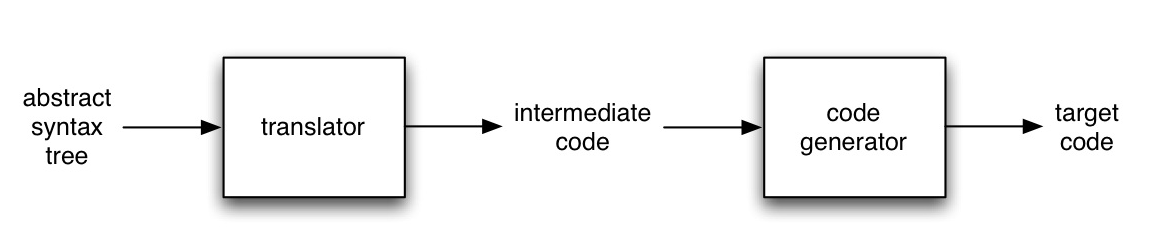

These days, I mostly teach courses on programming languages and compilers. I have written my own patterns for the programming languages course and use them to structure two units, on structurally recursive programming and closures for managing state. I've long dreamed of writing more patterns for the compiler course, which seems primed for the approach: So many of the structures we build when writing a compiler are the result of decades of experience, with tradeoffs that are often left implicit in textbooks and in the heads of experts.

I have also used my own patterns, as well as patterns from the literature, when teaching other courses, back in the days when I taught three courses a semester. When I taught knowledge-based systems every year, I used patterns that I wrote to document the "generic task" approach to KBS in which my work lived. In our introductory OOP courses, I tended to use patterns from the literature both as a way to teach design techniques and trade-offs and as a way to prepare students to graduate into a world where OO design patterns were part of the working vocabulary.

I like how patterns help me discuss code with students and how they help me evaluate solutions -- and communicate how I evaluate solutions.

That is more information than I shared yesterday... This was only a ninety-minute workshop, and all of the participants had valuable experience to share.

I didn't take notes on what others shared or on the conversations that followed, but I did note a couple of things in general. Several people use a set of patterns or even a small pattern language to structure their entire course. I have done this at the unit level of a course, never at the scale of the course. My compiler course would be a great candidate here, but doing so would require that I write a lot of new material to augment and replace some of what I have now.

Likewise, a couple of participants have experimented with asking students to write patterns themselves. My teaching context is different than theirs, so I'm not sure this is something that can work for me in class. I've tried it a time or two with master's-level students, though, and it was educational for both the student and me.

I did grab all the links posted in the chat room so that I can follow up on them to read more later:

- the Pattern Language page on the Communities for the Future wiki

- a revised Bloom's taxonomy

- the SOLO taxonomy, a complement to Bloom's

- the Pattern Language page on the Open Pattern Repository wiki

- System: The Shaping of Modern Knowledge, a book by Clifford Siskin (from the title, this sounds interesting)

I also learned one new tech thing at the workshop: Padlet, a real-time sharing platform that runs in the browser. Michael used a padlet as the workshop's whiteboard, in lieu of Zoom's built-in tool (which I've still never used). The padlet made it quite straightforward to make and post cards containing text and other media. I may look into it as a classroom tool in the future.

Padlet also made it pretty easy to export the whiteboard in several formats. I grabbed a PDF copy of the workshop whiteboard for future reference. I considered linking to that PDF here, but I didn't get permission from the group first. So you'll just have to trust me.

Attending this workshop reminded me that I do not get to teach enough, at least not formal classroom teaching, and I miss it! As I told Michael in a post-workshop thank-you e-mail:

That was great fun, Michael. Thanks for organizing! I learned a few things, but mostly it is energizing simply to hear others talk about what they are doing.

"Energizing" really is a good word for what I experienced. I'm hyped to start working in more detail on my fall course, which starts in less than four weeks.

I'm also energized to look into the remaining PLoPourri events between now and the conference. (I really wish now that I had signed up for The Future of Education workshop in May, which was on "patterns for reshaping learning and the campus".) PLoP itself is on a Monday this year, in late October, so there is a good chance I can make it as well.

I told Michael that Teaching with Patterns would be an excellent regular event at PLoP. He seemed to like that thought. There is a lot of teaching experience out there to be shared -- and a lot of energy to be shared as well.

June 08, 2022 1:51 PM

Be a Long-Term Optimist and a Short-Term Realist

Before I went to bed last night, I stumbled across actor Robert De Niro speaking with Stephen Colbert on The Late Show. De Niro is, of course, an Oscar winner with fifty years working in films. I love to hear experts talk about what they do, so I stayed up a few extra minutes.

I think Colbert had just asked De Niro to give advice to actors who were starting out today, because De Niro was demurring: he didn't like to give advice, and everyone's circumstances are different. But then he said that, when he himself was starting out, he went on lots of auditions but always assumed that he wasn't going to get the job. There were so many ways not to get a job, so there was no reason to get his hopes up.

Colbert related that anecdote to his own experience getting started in show business. He said that whenever he had an acting job, he felt great, and whenever he didn't have a job, pessimism set in: he felt like he was never going to work again. De Niro immediately said, "oh, I never felt that way". He always felt like he was going to make it. He just had to keep going on auditions.

There was a smile on Colbert's face. He seemed to have trouble squaring De Niro's attitude toward auditions with his claimed confidence about eventual success. Colbert moved on with his interview.

It occurred to me that the combination of attitudes expressed by De Niro is a healthy, almost necessary, way to approach big goals. In the short term, accept that each step is uncertain and unlikely to pay off. Don't let those failures get you down; they are the price of admission. For the long term, though, believe deeply that you will succeed. That's the spirit you need to keep taking steps, trying new things when old things don't seem to work, and hanging around long enough for success to happen.

De Niro's short descriptions of his own experiences revealed how both sides of his demeanor contributed to him ultimately making it. He never knew what casting agents, directors, and producers were looking for, so he was willing to read every part in several different ways. Even though he didn't expect to get the job, maybe one of those people would remember him and mention him to a friend in the business, and maybe that connection would pay off. All he could do was audition.

The self-assurance De Niro seemed to feel almost naturally reminded me of things that Viktor Frankl and John McCain said about their ability to survive time in war camps. Somehow, they were able to maintain a confidence that they would eventually be free again. In the end, they were lucky to survive, but their belief that they would survive had given them a strength to persevere through much worse treatment than simply being rejected for a part in a movie. That perseverance helped them stay alive and take actions that would leave them in a position to be lucky.

I realize that the story De Niro tells, like those of Frankl and McCain, is potentially suspect due to survivor bias. We don't get to hear from people who believed that they would make it as actors but never did. Even so, their attitude seems like a pragmatic one to implement, if we can manage it: be a long-term optimist and a short-term realist. Do everything we can to hang around long enough for fortune to find us.

Like De Niro, I am not much one to give advice. In the haze of waking up and going back to sleep last night, though, I think his attitude gives us a useful model to follow.

April 16, 2022 2:32 PM

More Fun, Better Code: A Bug Fix for my Pair-as-Set Implementation

In my previous post, I wrote joyously of a fun bit of programming: implementing ordered pairs using sets.

Alas, there was a bug in my solution. Thanks to Carl Friedrich Bolz-Tereick for finding it so quickly:

Heh, this is fun, great post! I wonder what happens though if a = b? Then the set is {{a}}. first should still work, but would second fail, because the set difference returns the empty set?

Carl Friedrich had found a hole in my small set of tests, which sufficed for my other implementations because the data structures I used separate cells for the first and second parts of the pair. A set will do that only if the first and second parts are different!

Obviously, enforcing a != b is unacceptable. My first code thought was to guard second()'s behavior:

if my formula finds a result
    then return that result
    else return (first p)

This feels like a programming hack. Furthermore, it results in an impure implementation: it uses a boolean value and an if expression. But it does seem to work. That would have to be good enough unless I could find a better solution.

Perhaps I could use a different representation of the pair. Helpfully, Carl Friedrich followed up with pointers to several blog posts by Mark Dominus from last November that looked at the set encoding of ordered pairs in some depth. One of those posts taught me about another possibility: Wiener pairs. The idea is this:

(a,b) = { {{a},∅}, {{b}} }

Dominus shows how Wiener pairs solve the a == b edge case in Kuratowski pairs, which makes it a viable alternative.
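To make the representation concrete, here is a minimal Python sketch of a Wiener pair built from frozensets. The accessors are my own assumption: they decode the pair by inspecting its structure (which member contains ∅) rather than by the set-theoretic formulas Dominus discusses, and all of the names are hypothetical. What it does show is that (a, a) still has two distinct members, so the a == b case does not collapse.

```python
EMPTY = frozenset()

def make_wiener_pair(a, b):
    """Encode (a, b) as { {{a}, ∅}, {{b}} } using frozensets."""
    return frozenset({
        frozenset({frozenset({a}), EMPTY}),  # {{a}, ∅}: the member marked by ∅
        frozenset({frozenset({b})}),         # {{b}}: the unmarked member
    })

def wiener_first(p):
    # The member containing ∅ is {{a}, ∅}; its non-empty element is {a}.
    marked = next(x for x in p if EMPTY in x)
    singleton = next(e for e in marked if e != EMPTY)
    return next(iter(singleton))

def wiener_second(p):
    # The member without ∅ is {{b}}; unwrap it twice to reach b.
    unmarked = next(x for x in p if EMPTY not in x)
    singleton = next(iter(unmarked))
    return next(iter(singleton))

same = make_wiener_pair(3, 3)
print(len(same), wiener_first(same), wiener_second(same))  # 2 3 3
```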

Would I ever have stumbled upon this representation, as I did onto the Kuratowski pairs? I don't think so. The representation is more complex, with higher-order sets. Even worse for my implementation, the formulas for first() and second() are much more complex. That makes it a lot less attractive to me, even if I never want to show this code to my students. I myself like to have a solid feel for the code I write, and this is still at the fringe of my understanding.

Fortunately, as I read more of Dominus's posts, I found there might be a way to save my Kuratowski-style solution. It turns out that the if expression I wrote above parallels the set logic used to implement a second() accessor for Kuratowski pairs: a choice between the set that works for a != b pairs and a fallback to a one-set solution.

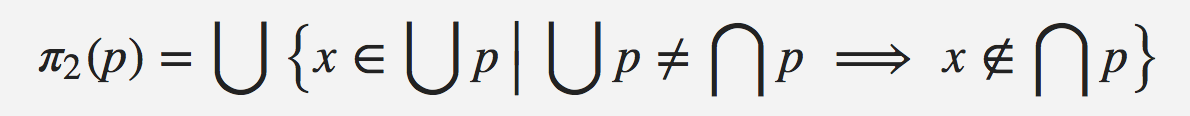

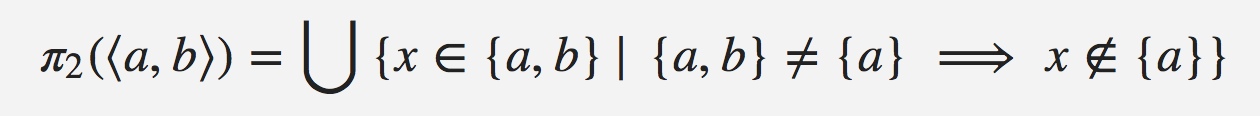

From this Dominus post, we see the correct set expression for second() is:

... which can be simplified to:

The latter expression is useful for reasoning about second(), but it doesn't help me implement the function using set operations. I finally figured out what the former equation was saying: if (∪ p) is the same as (∩ p), then the answer comes from (∩ p); otherwise, it comes from their difference.

I realized then that I could not write this function purely in terms of set operations. The computation requires the logic used to make this choice. I don't know where the boundary lies between pure set theory and the logic in the set comprehension, but a choice based on a set-empty? test is essential.

In any case, I think I can implement my understanding of the set expression for second() as follows. If we define union-minus-intersection as:

(set-minus (apply set-union p)
           (apply set-intersect p))

then:

(second p) = (if (set-empty? union-minus-intersection)
                 (set-elem (apply set-intersect p))
                 (set-elem union-minus-intersection))

The then clause is the same as the body of first(), which must be true: if the union of the sets is the same as their intersection, then the answer comes from the intersection, just as first()'s answer does.

It turns out that this solution essentially implements my first code idea above: if my formula from the previous blog entry finds a result, then return that result. Otherwise, return first(p). The circle closes.

Success! Or, I should say: Success!(?) After having a bug in my original solution, I need to stay humble. But I think this does it. It passes all of my original tests as well as tests for a == b, which is the main edge case in all the discussions I have now read about set implementations of pairs. Here is a link to the final code file, if you'd like to check it out. I include the two simple test scenarios, for both a != b and a == b, as Rackunit tests.
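For readers who would rather see the guarded second() than take my word for it, here is a Python transliteration. It is a sketch, not my Racket file: frozensets stand in for our course's set implementation, and set_elem is a hypothetical stand-in for its set-elem accessor.

```python
def make_pair(a, b):
    # Kuratowski encoding: (a, b) = {{a}, {a, b}}; collapses to {{a}} when a == b.
    return frozenset({frozenset({a}), frozenset({a, b})})

def set_elem(s):
    # Stand-in for set-elem: return some member of a (here, singleton) set.
    return next(iter(s))

def union_minus_intersection(p):
    return frozenset.union(*p) - frozenset.intersection(*p)

def first(p):
    return set_elem(frozenset.intersection(*p))

def second(p):
    diff = union_minus_intersection(p)
    if not diff:
        # (∪ p) equals (∩ p), so p is {{a}}: the answer is the same as first's.
        return set_elem(frozenset.intersection(*p))
    return set_elem(diff)

print(first(make_pair(1, 2)), second(make_pair(1, 2)))  # 1 2
print(first(make_pair(3, 3)), second(make_pair(3, 3)))  # 3 3
```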

So, all in all, this was a very good week. I got to have some fun programming, twice. I learned some set theory, with help from a colleague on Twitter. I was also reacquainted with Mark Dominus's blog, the RSS feed for which I had somehow lost track of. I am glad to have it back in my newsreader.

This experience highlights one of the selfish reasons I like for students to ask questions in class. Sometimes, they lead to learning and enjoyment for me as well. (Thanks, Henry!) It also highlights one of the reasons I like Twitter. The friends we make there participate in our learning and enjoyment. (Thanks, Carl Friedrich!)

April 13, 2022 2:27 PM

Programming Fun: Implementing Pairs Using Sets

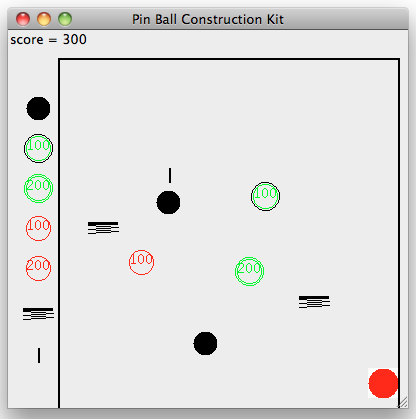

Yesterday's session of my course was a quiz preceded by a fun little introduction to data abstraction. As part of that, I use a common exercise. First, I define a simple API for ordered pairs: (make-pair a b), (first p), and (second p). Then I ask students to brainstorm all the ways that they could implement the API in Racket.

They usually have no trouble thinking of the data structures they've been using all semester. Pairs, sure. Lists, yes. Does Racket have a hash type? Yes. I remind my students about vectors, which we have not used much this semester. Most of them haven't programmed in a language with records yet, so I tell them about structs in C and show them Racket's struct type. This example has the added benefit of showing students that Racket generates constructor and accessor functions that do the work for us.

The big payoff, of course, comes when I ask them about using a Racket function -- the data type we have used most this semester -- to implement a pair. I demonstrate three possibilities: a selector function (which also uses booleans), message passing (which also uses symbols), and pure functions. Most students look on these solutions, especially the one using pure functions, with amazement. I could see a couple of them actively puzzling through the code. That is one measure of success for the session.
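For readers who haven't seen these before, here is a rough Python transliteration of the three function-based implementations. The course code is Racket; the names below are hypothetical, and strings stand in for Racket symbols in the message-passing version.

```python
# 1. Selector function: the pair is a function of one boolean, like (if arg a b).
def make_pair_selector(a, b):
    return lambda arg: a if arg else b

def first_selector(p):
    return p(True)

def second_selector(p):
    return p(False)

# 2. Message passing: the pair answers named messages.
def make_pair_message(a, b):
    def dispatch(msg):
        if msg == "first":
            return a
        if msg == "second":
            return b
        raise ValueError(f"unknown message: {msg}")
    return dispatch

# 3. Pure functions: the pair hands both items to whatever function it is given.
def make_pair_pure(a, b):
    return lambda f: f(a, b)

def first_pure(p):
    return p(lambda x, y: x)

def second_pure(p):
    return p(lambda x, y: y)
```

The third version needs no booleans or symbols at all, which is what tends to surprise students.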

This year, one student asked if we could use sets to implement a pair. Yes, of course, but I had never tried that... Challenge accepted!

While the students took their quiz, I set myself a problem.

The first question is how to represent the pair. (make-pair a b) could return {a, b}, but with no order we can't know which is first and which is second. My students and I had just looked at a selector function, (if arg a b), which can distinguish between the first item and the second. Maybe my pair-as-set could know which item is first, and we could figure out the second is the other.

So my next idea was (make-pair a b) → {{a, b}, a}. Now the pair knows both items, as well as which comes first, but I have a type problem. I can't apply set operations to all members of the set and will have to test the type of every item I pull from the set. This led me to try (make-pair a b) → {{a}, {a, b}}. What now?

My first attempt at writing (first p) and (second p) started to blow up into a lot of code. Our set implementation provides a way to iterate over the members of a set using accessors named set-elem and set-rest. In fine imperative fashion, I used them to start whacking out a solution. But the growing complexity of the code made clear to me that I was programming around sets, but not with sets.

When teaching functional programming style this semester, I have been trying a new tactic. Whenever we face a problem, I ask the students, "What function can help us here?" I decided to practice what I was preaching.

Given p = {{a}, {a, b}}, what function can help me compute the first member of the pair, a? Intersection! I just need to retrieve the singleton member of ∩ p:

(first p) = (set-elem (apply set-intersect p))

What function can help me compute the second member of the pair, b? This is a bit trickier... I can use set subtraction, {a, b} - {a}, but I don't know which element in my set is {a, b} and which is {a}. Serendipitously, I just solved the latter subproblem with intersection.

Which function can help me compute {a, b} from p? Union! Now I have a clear path forward: (∪ p) – (∩ p):

(second p) = (set-elem
               (set-minus (apply set-union p)
                          (apply set-intersect p)))

I implemented these three functions, ran the tests I'd been using for my other implementations... and they passed. I'm not a set theorist, so I was not prepared to prove my solution correct, but the tests going all green gave me confidence in my new implementation.
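Here is a minimal Python sketch of the same idea, using frozensets in place of our course's set implementation; set_elem is a hypothetical stand-in for its set-elem accessor. It mirrors the two formulas above and, like this first version, quietly assumes the two items differ.

```python
def make_pair(a, b):
    # (make-pair a b) -> {{a}, {a, b}}
    return frozenset({frozenset({a}), frozenset({a, b})})

def set_elem(s):
    # Stand-in for set-elem: return some member of the set.
    return next(iter(s))

def first(p):
    # The singleton member of (∩ p) is a.
    return set_elem(frozenset.intersection(*p))

def second(p):
    # (∪ p) - (∩ p) leaves {b}.
    return set_elem(frozenset.union(*p) - frozenset.intersection(*p))

p = make_pair(1, 2)
print(first(p), second(p))  # 1 2
```

When the two items are equal, make_pair(3, 3) collapses to {{3}}, the difference is empty, and second raises StopIteration. That is exactly the hole discussed in the follow-up post.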

Last night, I glanced at the web to see what other programmers had done for this problem. I didn't run across any code, but I did find a question and answer on the mathematics StackExchange that discusses the set theory behind the problem. The answer refers to something called "the Kuratowski definition", which resembles my solution. Actually, I should say that my solution resembles this definition, which is an established part of set theory. From the StackExchange page, I learned that there are other ways to express a pair as a set, though the alternatives look much more complex. I didn't know the set theory but stumbled onto something that works.

My solution is short and elegant. Admittedly, I stumbled into it, but at least I was using the tools and thinking patterns that I've been encouraging my students to use.

I'll admit that I am a little proud of myself. Please indulge me! Department heads don't get to solve interesting problems like this during most of the workday. Besides, in administration, "elegant" and "clever" solutions usually backfire.

I'm guessing that most of my students will be unimpressed, though they can enjoy my pleasure vicariously. Perhaps the ones who were actively puzzling through the pure-function code will appreciate this solution, too. And I hope that all of my students can at least see that the tools and skills they are learning will serve them well when they run into a problem that looks daunting.

March 14, 2022 11:55 AM

Taking Plain Text Seriously Enough

Or, Plain Text and Spreadsheets -- Giving Up and Giving In

One day a couple of weeks ago, a colleague and I were discussing a student. They said, "I think I had that student in class a few semesters ago, but I can't find the semester with his grade."

My first thought was, "I would just use grep to...". Then I remembered that my colleagues all use Excel for their grades.

The next day, I saw Derek Sivers' recent post that gives the advice I usually give when asked: Write plain text files.

Over my early years in computing, I lost access to a fair bit of writing that was done using various word processing applications. All stored data in proprietary formats. The programs are gone, or have evolved well beyond the version and OS I was using at the time, and my words are locked inside. Occasionally I manage to pull something useful out of one of those old files, but for the most part they are a graveyard.

No matter how old, the code and essays I wrote in plaintext are still open to me. I love being able to look at programs I wrote for my undergrad courses (including the first parser I ever wrote, in Pascal) and my senior honors project (an early effort to implement Swiss System pairings for chess tournaments). All those programs have survived the move from 5-1/4" floppies, through several different media, and still open just fine in emacs. So do the files I used to create our wedding invitations, which I wrote in troff(!).

The advice to write in plain text transfers nicely from proprietary formats on our personal computers to tools that run out on the web. The same week as Sivers posted his piece, a prolific Goodreads reviewer reported losing all his work when Goodreads had a glitch. The reviewer may have written in plain text, but his reviews are buried in someone else's system.

I feel bad for non-tech folks when they lose their data to a disappearing format or app. I'm more perplexed when a CS prof or professional programmer does. We know about plain text; we know the history of tools; we know that our plain text files will migrate into the future with us, usable in emacs and vi and whatever other plain text editors we have available there.

I am not blind, though, to the temptation. A spreadsheet program does a lot of work for us. Put some numbers here, add a formula or two over there, and boom! your grades are computed and ready for entry -- into the university's appalling proprietary system, where the data goes to die. (Try to find a student's grade from a forgotten semester within that system. It's a database, yet there are no affordances available to users for the simplest tasks...)

All of my grade data, along with most of what I produce, is in plain text. One cost of this choice is that I have to write my own code to process it. This takes a little time, but not all that much, to be honest. I don't need all of Numbers or Excel; all I need most of the time is the ability to do simple computations and a bit of sorting. If I use a comma-separated values format, all of my programming languages have tools to handle parsing, so I don't even have to do much input processing to get started. If I use Racket for my processing code, throwing a few parens into the mix enables Racket to read my files into lists that are ready for mapping and filtering to my heart's content.
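To give a sense of how little code this takes, here is a Python sketch. The file layout (a header row, a name column, and numeric score columns) is hypothetical, not my actual grade format; the standard csv module does the parsing.

```python
import csv
import io

def load_grades(text):
    """Parse CSV text with a header row into a list of dicts."""
    return list(csv.DictReader(io.StringIO(text)))

def averages(rows, score_columns):
    """Return (name, average) pairs, highest average first."""
    result = []
    for row in rows:
        scores = [float(row[col]) for col in score_columns]
        result.append((row["name"], sum(scores) / len(scores)))
    # Sort by average descending, breaking ties alphabetically by name.
    result.sort(key=lambda pair: (-pair[1], pair[0]))
    return result

GRADES = """name,hw1,hw2,exam
Ada,90,85,95
Grace,80,100,90
Alan,70,75,80
"""

for name, avg in averages(load_grades(GRADES), ["hw1", "hw2", "exam"]):
    print(f"{name:8} {avg:6.2f}")
```

With a real file, the text would come from open(path, newline="") instead of a string literal.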

Back when I started professoring, I wrote all of my grading software in whatever language we were using in the class that semester. That seemed like a good way for me to live inside the language my students were using and remind myself what they might be feeling as they wrote programs. One nice side effect of this is that I have grading code written in everything from Cobol to Python and Racket. And data from all those courses is still searchable using grep, filterable using cut, and processable using any code I want to write today.
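Here is a rough Python equivalent of a recursive grep over old grade files, assuming (hypothetically) one .csv file per course offering somewhere under a single directory. Nothing about it depends on which language wrote the files.

```python
from pathlib import Path

def find_student(root, name):
    """Yield (path, line_number, line) for each line that mentions name,
    searching every .csv file under root, roughly like `grep -rn name root`."""
    for path in sorted(Path(root).rglob("*.csv")):
        with path.open(encoding="utf-8") as f:
            for lineno, line in enumerate(f, start=1):
                if name in line:
                    yield path, lineno, line.rstrip("\n")

# Hypothetical usage: print every line mentioning a student, grep-style.
# for path, lineno, line in find_student("grades", "Alan"):
#     print(f"{path}:{lineno}:{line}")
```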

That is one advantage of plain text I value that Sivers doesn't emphasize: flexibility. Not only will plain text survive into the future... I can do anything I want with it. I don't often feel powerful in this world, but I feel powerful when I'm making data work for me.

In the end, I've traded the quick and easy power of Excel and its ilk for the flexibility and power of plain text, at a cost of writing a little code for myself. I like writing code, so this sort of trade is usually congenial to me. Once I've made the trade, I end up building a set of tools that I can reuse, or mold to a new task with minimal effort. Over time, the cost reaches a baseline I can live with even when I might wish for a spreadsheet's ease. And on the day I want to write a complex function to sort a set of records, one that befuddles Numbers's sorting capabilities, I remember why I like the trade. (That happened yet again last Friday.)

A recent tweet from Michael Nielsen quotes physicist Steven Weinberg as saying, "This is often the way it is in physics -- our mistake is not that we take our theories too seriously, but that we do not take them seriously enough." I think this is often true of plain text: we in computer science forget to take its value and power seriously enough. If we take it seriously, then we ought to be eschewing the immediate benefits of tools that lock away our text and data in formats that are hard or impossible to use, or that may disappear tomorrow at some start-up's whim. This applies not only to our operating system and our code but also to what we write and to all the data we create. Even if it means forgoing our commercial spreadsheets except for the most fleeting of tasks.

March 03, 2022 2:22 PM

Knowing Context Gives You Power, Both To Choose And To Make

We are at the point in my programming languages course where my students have learned a little Racket, a little functional programming, and a little recursive programming over inductive datatypes. Even though I've been able to connect many of the ideas we've studied to programming tasks out in the world that they care about themselves, a couple of students have asked, "Why are we doing this again?"

This is a natural question, and one I'm asked every time I teach this course. My students think that they will be heading out into the world to build software in Java or Python or C, and the ideas we've seen thus far seem pretty far removed from the world they think they will live in.

These paragraphs from near the end of Chelsea Troy's 3-part essay on API design do a nice job of capturing one part of the answer I give my students:

This is just one example to make a broader point: it is worthwhile for us as technologists to cultivate knowledge of the context surrounding our tools so we can make informed decisions about when and how to use them. In this case, we've managed to break down some web request protocols and build their pieces back up into a hybrid version that suits our needs.

When we understand where technology comes from, we can more effectively engage with its strengths, limitations, and use cases. We can also pick and choose the elements from that technology that we would like to carry into the solutions we build today.

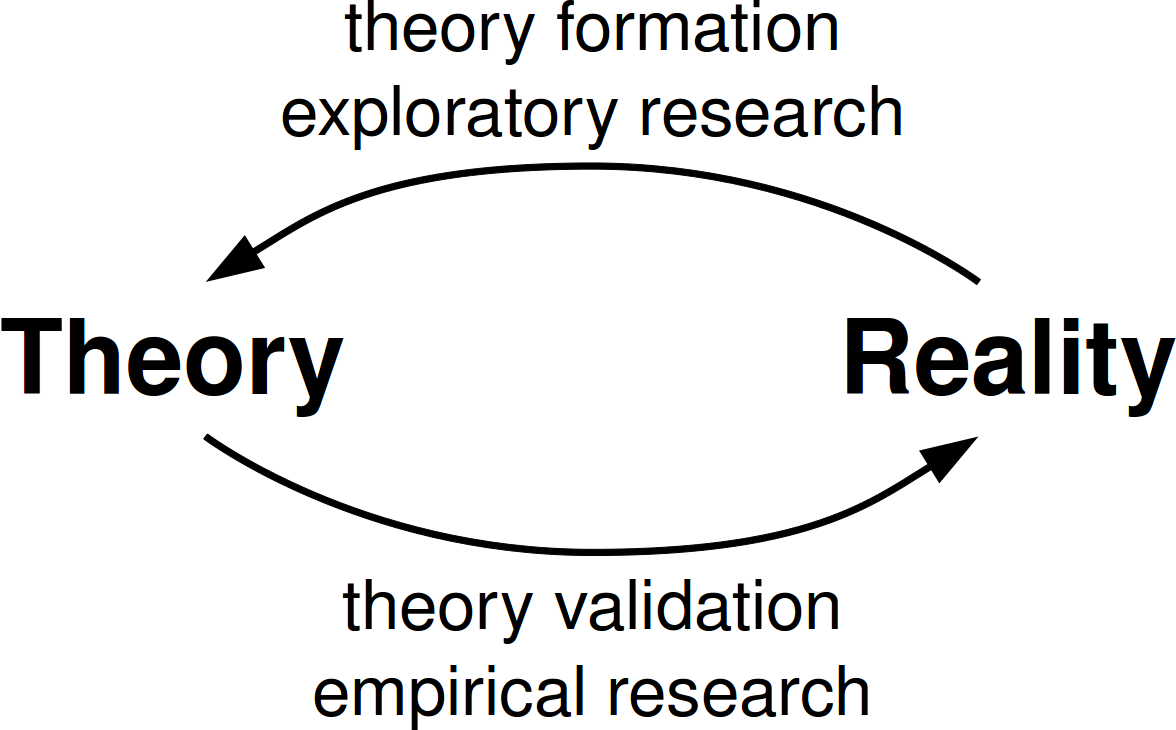

The languages we use were designed and developed in a particular context. Knowing that context gives us multiple powers. One power is the ability to make informed decisions about the languages -- and language features -- we choose in any given situation. Another is the ability to make new languages, new features, and new combinations of features that solve the problem we face today in the way that works best in our current context.

Not knowing context limits us to our own experience. Troy does a wonderful job of demonstrating this using the history of web API practice. I hope my course can help students choose tools and write code more effectively when they encounter new languages and programming styles.

Computing changes. My students don't really know what world they will be working in in five years, or ten, or thirty. Context is a meta-tool that will serve them well.

Posted by Eugene Wallingford | Permalink | Categories: Patterns, Software Development, Teaching and Learning

August 22, 2020 4:09 PM

Learning Something You Thought You Already Knew

Sandi Metz's latest newsletter is about the heuristic not to name a class after the design pattern it implements. Actually, it's about a case in which Metz wanted to name a class after the pattern it implements in her code and then realized what she had done. She decided that she either needed to have a better reason for doing it than "because it just felt right" or she needed to practice what she preaches to the rest of us. What followed was some deep thinking about what makes the rule a good one to follow and her efforts to put her conclusions in writing for the benefit of her readers.

I recognize Metz's sense of discomfort at breaking a rule when it feels right, and her need to step back and understand the rule at a deeper level. Between her setup and her explanation, she writes:

I've built a newsletter around this rule not only because I believe that it's useful, but also because my initial attempts to explain it exposed deep holes in my understanding. This was a revelation. Had I not been writing a book, I might have hand-waved around these gaps in my knowledge forever.

People sometimes say, "If you really want to understand something, teach it to others." Metz's story is a great example of why this is really true. I mean, sure, you can learn any new area and then benefit from explaining it to someone else. Processing knowledge and putting it in your own words helps to consolidate knowledge at the surface. But the real learning comes when you find yourself in a situation where you realize there's something you've taken for granted for months or for years, something you thought you knew, but suddenly you sense a deep hole lying under the surface of that supposed understanding. "I just know breaking the rule is the right thing to do here, but... but..."

I've been teaching long enough to have had this experience many times in many courses, covering many areas of knowledge. It can be terrifying, at least momentarily. The temptation to wave my hands and hurry past a student's question is enormous. To learn from teaching in these moments requires humility, self-awareness, and a willingness to think and work until you break through to that deeper understanding. Learning from these moments is what sets the best teachers and writers apart from the rest of us.

As you might guess from Metz's reaction to her conundrum, she's a pretty good teacher and writer. The story in the newsletter is from the new edition of her book "99 Bottles of OOP", which is now available. I enjoyed the first edition of "99 Bottles" and found it useful in my own teaching. It sounds like the second edition will be more than a cleanup; it will have a few twists that make it a better book.

I'm teaching our database systems course for the first time ever this fall. This is a brand new prep for me: I've never taught a database course before, anywhere. There are so many holes in my understanding, places where I've internalized good practices but don't grok them in the way an expert does. I hope I have enough humility and self-awareness this semester to do my students right.


February 29, 2020 11:19 AM

Programming Healthily

... or at least differently. From Inside Google's Efforts to Engineer Its Food for Healthiness:

So, instead of merely changing the food, Bakker changed the foodscape, ensuring that nearly every option at Google is healthy -- or at least healthyish -- and that the options that weren't stayed out of sight and out of mind.

This is how I've been thinking lately about teaching functional programming to my students, who have experience in procedural Python and object-oriented Java. As with deciding what we eat, how we think about problems and programs is mostly unconscious, a mixture of habit and culture. It is also something intimate and individual, perhaps especially for relative beginners who have only recently begun to know a language well enough to solve problems with some facility. But even for us old hands, the languages and mental tools we use to write programs can become personal. That makes change, and growth, hard.

In my last few offerings of our programming languages course, in which students learn Racket and functional style, I've been trying to change the programming landscape my students see in small ways whenever I can. Here are a few things I've done:

- I've shrunk the set of primitive functions that we use in the first few weeks. A larger set of tools offers more power and reach, but it also can distract. I'm hoping that students can more quickly master the small set of tools than the larger set and thus begin to feel more accomplished sooner.

- We work for the first few weeks on problems with less need for the tools we've moved out of sight, such as local variables and counter variables. If a program doesn't really benefit from a local variable, students will be less likely to reach for one. Instead, perhaps, they will reach for a function that can help them get closer to their goal.

- In a similar vein, I've tried to make explicit connections between specific triggers ("We're processing a list of data, so we'll need a loop.") and new responses ("map can help us here."). We then follow up these connections with immediate and frequent practice.
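To make that trigger/response pair concrete, here is a small illustration in Python, the language these students already know; the squaring task is a hypothetical example. In Racket, the second version would be (map (lambda (n) (* n n)) numbers).

```python
def squares_loop(numbers):
    # Old trigger: "We're processing a list of data, so we'll need a loop."
    result = []
    for n in numbers:
        result.append(n * n)
    return result

def squares_map(numbers):
    # New response: "map can help us here." No counter, no accumulator.
    return list(map(lambda n: n * n, numbers))

print(squares_loop([1, 2, 3]), squares_map([1, 2, 3]))  # [1, 4, 9] [1, 4, 9]
```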

By keeping functional tools closer at hand, I'm trying to make it easier for these tools to become the new habit. I've also noticed how the way I speak about problems and problem solving can subtly shape how students approach problems, so I'm trying to change a few of my own habits, too.

It's hard for me to do all these things, but it's harder still when I'm not thinking about them. This feels like progress.

So far students seem to be responding well, but it will be a while before I feel confident that these changes in the course are really helping students. I don't want to displace procedural or object-oriented thinking from their minds, but rather to give them a new tool set they can bring out when they need or want to.


January 06, 2020 3:13 PM

A Writing Game

I recently started reading posts in the archives of Jason Zweig's blog. He writes about finance for a living but blogs more widely, including quite a bit about writing itself. An article called On Writing Better: Sharpening Your Tools challenges writers to look at each word they write as "an alien object":

As the great Viennese journalist Karl Kraus wrote, "The closer one looks at a word, the farther away it moves." Your goal should be to treat every word you write as an alien object: You should be able to look at it and say, What is that doing here? Why did I use that word instead of a better one? What am I trying to say here? How can I get to where I'm going if I use such stale and lifeless words?

My mind immediately turned this into a writing game, an exercise that puts the idea into practice. Take any piece of writing.

1. Choose a random word in the document.

2. Change the word -- or delete it! -- in a way that improves the text.

3. Go to 1.

Play the game for a fixed number of rounds or for a fixed period of time. A devilish alternative is to play until you get so frustrated with your writing that you can't continue. You could then judge your maturity as a writer by how long you can play in good spirits.

We could even automate the mechanics of the game by writing a program that chooses a random word in a document for us. Every time we save the document after a change, it jumps to a new word.
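The word-picking half of such a program is only a few lines. Here is a minimal Python sketch; the regular expression's notion of "word" is a simplifying assumption, and the editor integration (jumping to a new word on each save) is left out.

```python
import random
import re

WORD = re.compile(r"[A-Za-z']+")  # a deliberately simple notion of "word"

def pick_word(text, rng=random):
    """Return (start, end, word) for one word chosen uniformly at random."""
    matches = list(WORD.finditer(text))
    if not matches:
        raise ValueError("no words to choose from")
    m = rng.choice(matches)
    return m.start(), m.end(), m.group()
```

Returning character offsets, not just the word, is what would let an editor move the cursor to the chosen spot.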

As with most first ideas, this one can probably be improved. Perhaps we should bias word selection toward words whose replacement or deletion is most likely to improve our writing. Changing "the" or "to" doesn't offer the same payoff as changing a lazy verb or deleting an abstract adverb. Or does it? I have a lot of room to improve as a writer; maybe fixing some "the"s and "to"s is exactly what I need to do. The Three Bears pattern suggests that we might learn something by tackling the extreme form of the challenge and seeing where it leads us.
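One way to try the biased version is to weight the random choice. This Python sketch down-weights a hypothetical list of common words; setting common_weight to 1.0 recovers the unbiased, extreme form of the game.

```python
import random
import re

WORD = re.compile(r"[A-Za-z']+")
# Hypothetical list of low-payoff words; tune it to your own habits.
COMMON = {"the", "a", "an", "to", "of", "and", "in", "is", "it"}

def pick_word_biased(text, common_weight=0.1, rng=random):
    """Pick a word at random, making words in COMMON less likely to be chosen."""
    matches = list(WORD.finditer(text))
    if not matches:
        raise ValueError("no words to choose from")
    weights = [common_weight if m.group().lower() in COMMON else 1.0
               for m in matches]
    m = rng.choices(matches, weights=weights, k=1)[0]
    return m.start(), m.end(), m.group()
```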

Changing or deleting a single word can improve a piece of text, but there is a bigger payoff available if we consider the selected word in context. The best way to eliminate many vague nouns is to turn them back into verbs, where they act with vigor. To do that, we will have to change the structure of the sentence, and maybe the surrounding sentences. That forces us to think even more deeply about the text than changing a lone word. It also creates more words for us to fix in following rounds!

I like programming challenges of this sort. A writing challenge that constrains me in arbitrary ways might be just what I need to take time more often to improve my work. It might help me identify and break some bad habits along the way. Maybe I'll give this a try and report back. If you try it, please let me know the results!

And no, I did not play the game with this post. It can surely be improved.

Postscript. After drafting this post, I came across another article by Zweig that proposes just such a challenge for the narrower case of abstract adverbs:

The only way to see if a word is indispensable is to eliminate it and see whether you miss it. Try this exercise yourself:

- Take any sentence containing "actually" or "literally" or any other abstract adverb, written by anyone ever.

- Delete that adverb.

- See if the sentence loses one iota of force or meaning.

- I'd be amazed if it does (if so, please let me know).

We can specialize the writing game to focus on adverbs, another part of speech, or almost any writing weakness. The possibilities...
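As one such specialization, here is a Python sketch of Zweig's exercise: it deletes a hypothetical list of adverbs so you can read the before and after side by side and judge whether anything was lost. It is crude; for example, deleting a sentence-initial adverb leaves the next word uncapitalized.

```python
import re

# Zweig's two examples plus a couple more; the list is a hypothetical starting point.
ADVERBS = ("actually", "literally", "really", "basically")
PATTERN = re.compile(r"\s*\b(?:" + "|".join(ADVERBS) + r")\b,?", re.IGNORECASE)

def without_adverbs(sentence):
    """Return the sentence with the listed adverbs deleted."""
    return PATTERN.sub("", sentence).strip()

for s in ["I actually liked it.", "It was literally the best day."]:
    print(s, "->", without_adverbs(s))
```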

October 25, 2019 3:55 PM

Enjoyment Bias in Programming

Earlier this week, I read this snippet about the benefits of "enjoyment bias" in Morgan Housel's latest blog post:

2. Enjoyment bias: An inefficient investing strategy that you enjoy will outperform an efficient one that feels like work because anything that feels like work will eventually be abandoned.

Getting anything to work requires giving it an appropriate amount of time. Giving it time requires not getting bored or burning out. Not getting bored or burning out requires that you love what you're doing, because that's when the hard parts become acceptable.

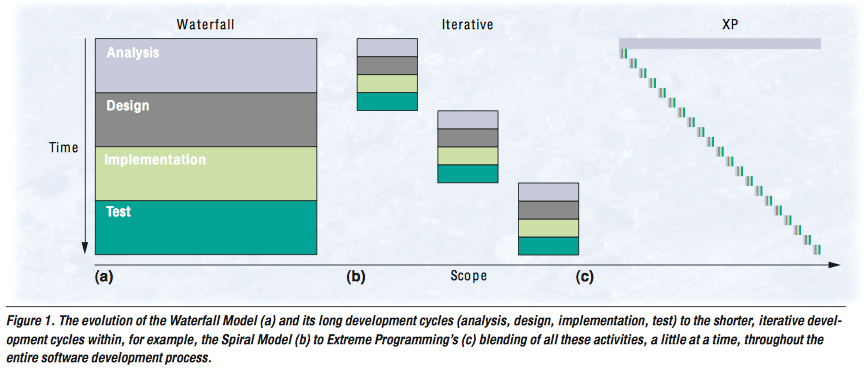

The programmer in me immediately thought, "I have this pattern." My guess is that this bias applies to a lot of things outside of investing. In software development, the choices of development methodology and programming language often benefit from enjoyment bias.

In programming as in investing, we can take this too far and hurt ourselves, our teams, and our users. Anything can be overdone. But, in general, we are more likely to stick with the hard work of building software when we enjoy the way we are building it and the tools we are using. Don't let others shame you away from what works for you.

This bias actually reminded me of a short bit from one of Paul Graham's essays on, of all things, procrastination:

I think the way to "solve" the problem of procrastination is to let delight pull you instead of making a to-do list push you. Work on an ambitious project you really enjoy, and sail as close to the wind as you can, and you'll leave the right things undone.

Delight can keep you happily working when the going gets rough, and it can pull you toward work when a lack of delight would leave you killing time on stuff that doesn't matter.

(By the way, I think that several other biases described by Housel are also useful in programming. Consider the value of reasonable ignorance, number three on his list....)

August 30, 2019 4:26 PM

Unknown Knowns and Explanation-Based Learning

Like me, you probably see references to this classic quote from Donald Rumsfeld all the time:

There are known knowns; there are things we know we know. We also know there are known unknowns; that is to say, we know there are some things we do not know. But there are also unknown unknowns -- the ones we don't know we don't know.

I recently ran across it again in an old Epsilon Theory post that uses it to frame the difference between decision-making under risk (the known unknowns) and decision-making under uncertainty (the unknown unknowns). It's a good read.

Seeing the passage again for the umpteenth time, I realized that no one ever seems to talk about the fourth quadrant in that grid: the unknown knowns. A quick web search turns up a few articles such as this one, which consider unknown knowns from the perspective of others in a community: maybe there are other people who know something that you do not. But my curiosity was focused on the first-person perspective that Rumsfeld was implying. As a knower, what does it mean for something to be an unknown known?

My first thought was that this combination might not be all that useful in the real world, such as the investing context that Ben Hunt writes about in Epsilon Theory. Perhaps it doesn't make any sense to think about things you don't know that you know.

As a student of AI, though, I suddenly made an odd connection ... to explanation-based learning. As I described in a blog post twelve years ago:

Back when I taught Artificial Intelligence every year, I used to relate a story from Russell and Norvig when talking about the role knowledge plays in how an agent can learn. Here is the quote that was my inspiration, from pages 687-688 of their 2nd edition:

Sometimes one leaps to general conclusions after only one observation. Gary Larson once drew a cartoon in which a bespectacled caveman, Zog, is roasting his lizard on the end of a pointed stick. He is watched by an amazed crowd of his less intellectual contemporaries, who have been using their bare hands to hold their victuals over the fire. This enlightening experience is enough to convince the watchers of a general principle of painless cooking.

I continued to use this story long after I had moved on from this textbook, because it is a wonderful example of explanation-based learning.

In a mathematical sense, explanation-based learning isn't learning at all. The new fact that the program learns follows directly from other facts and inference rules already in its database. In EBL, the program constructs a proof of a new fact and adds the fact to its database, so that it is ready-at-hand the next time it needs it. The program has compiled a new fact, but in principle it doesn't know anything more than it did before, because it could always have deduced that fact from things it already knows.

As I read the Epsilon Theory article, it struck me that EBL helps a learner to surface unknown knowns by using specific experiences as triggers to combine knowledge it already has into a piece of knowledge that is usable immediately, without having to repeat the (perhaps costly) chain of inference ever again. Deducing deep truths every time you need them can indeed be quite costly, as anyone who has ever looked at the complexity of search in logical inference systems can tell you.
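To make the mechanism concrete, here is a minimal Python sketch, not taken from any real EBL system: a tiny knowledge base of propositional Horn clauses whose facts and rules are hypothetical, loosely based on Zog's stick. `query` proves a goal by backward chaining and then stores the proved fact, so asking the same question again takes one step instead of five. Real EBL systems usually generalize the explanation into a new rule rather than caching a single ground fact; this sketch keeps only the caching part.

```python
# A minimal sketch of explanation-based learning (EBL) over propositional
# Horn clauses. The facts and rules are hypothetical, loosely based on Zog.

class KnowledgeBase:
    def __init__(self, facts, rules):
        self.facts = set(facts)   # atomic facts already in the database
        self.rules = list(rules)  # (premises, conclusion) pairs
        self.steps = 0            # calls to _prove during the last query

    def _prove(self, goal, seen):
        """Backward chaining: is goal a fact, or the conclusion of a rule
        whose premises can all be proved?"""
        self.steps += 1
        if goal in self.facts:
            return True
        if goal in seen:  # guard against cycles among the rules
            return False
        for premises, conclusion in self.rules:
            if conclusion == goal and all(
                self._prove(p, seen | {goal}) for p in premises
            ):
                return True
        return False

    def query(self, goal):
        self.steps = 0
        proved = self._prove(goal, frozenset())
        if proved:
            # The EBL step: store the deduced fact so the chain of inference
            # never has to be repeated. Nothing new is known in principle;
            # the fact was always derivable from what was already there.
            self.facts.add(goal)
        return proved


RULES = [
    (["stick_is_long"], "hand_far_from_fire"),
    (["hand_far_from_fire"], "hand_not_burned"),
    (["stick_holds_food", "hand_not_burned"], "painless_cooking"),
]

kb = KnowledgeBase({"stick_is_long", "stick_holds_food"}, RULES)
print(kb.query("painless_cooking"), kb.steps)  # True 5
print(kb.query("painless_cooking"), kb.steps)  # True 1
```

The second query is cheap only because the first one did the work of building the proof; the set of things the program could deduce is unchanged.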

When I begin to think about unknown knowns in this way, perhaps it does make sense in some real-world scenarios to think about things you don't know you know. If I can figure it all out, maybe I can finally make my fortune in the stock market.

June 18, 2019 3:09 PM

Notations, Representations, and Names

In The Power of Simple Representations, Keith Devlin takes on a quote attributed to the mathematician Gauss: "What we need are notions, not notations."

While most mathematicians would agree that Gauss was correct in pointing out that concepts, not symbol manipulation, are at the heart of mathematics, his words do have to be properly interpreted. While a notation does not matter, a representation can make a huge difference.

Spot on. Devlin's opening made me think of that short video of Richard Feynman that everyone always shares, on the difference between knowing the name of something and knowing something. I've seen people misinterpret Feynman's words in both directions. The people who share this video sometimes seem to imply that names don't matter. Others dismiss the idea as nonsense: how can you not know the names of things and claim to know anything?

Devlin's distinction makes clear the sense in which Feynman is right. Names are like notations. The specific names we use don't really matter and could be changed, if we all agreed. But the "if we all agreed" part is crucial. Names do matter as a part of a larger model, a representation of the world that relates different ideas. Names are an index into the model. We need to know them so that we can speak with others, read their literature, and learn from them.

This brings to mind an article with a specific example of the importance of using the correct name: Through the Looking Glass, or ... This is the Red Pill, by Ben Hunt at Epsilon Theory:

I'm a big believer in calling things by their proper names. Why? Because if you make the mistake of conflating instability with volatility, and then you try to hedge your portfolio today with volatility "protection" ...., you are throwing your money away.

Calling a problem by the wrong name might lead you to the wrong remedy.

Feynman isn't telling us that names don't matter. He's telling us that knowing only names isn't valuable. Names are not useful outside the web of knowledge in which they mean something. As long as we interpret his words properly, they teach us something useful.

May 19, 2019 10:48 AM

Me and Not Me

At one point in the novel "Outline", by Rachel Cusk, a middle-aged man relates a conversation that he had with his elderly mother, in which she says:

I could weep just to think that I'll never see you again as you were at the age of six -- I would give anything, she said, to meet that six-year-old one more time.

This made me think of two photographs I keep on the wall at my office, of my grown daughters when they were young. In one, my older daughter is four; in the other, my younger daughter is two. Every once in a while, my wife asks why I don't replace them with something newer. My answer is always the same: They are my two favorite pictures in the world. When my daughters were young, they seemed to be infinite bundles of wonder: always curious, discovering things and ideas everywhere they went, making connections. They were restless in a good way, joyful, and happy. We can be all of these things as we grow into adulthood, but I experienced them so much differently as a father, watching my girls live them.

I love the people my daughters are now, and are becoming, and cherish my relationship with them. Yet, like the old woman in Cusk's story, there is a small part of me that would love to meet those little girls again. When I see one of my daughters these days, she is both that little girl, grown up, and not that little girl, a new person shaped by her world and by her own choices. The photographs on my wall keep alive memories not just of a time but also of specific people.

As I thought about Cusk's story, it occurred to me that the idea of "her and not her" does not apply only to my daughters, or to my wife, old pictures of whom I enjoy with similar intensity. I am me and not me.

I'm both the little guy who loved to read encyclopedias and shoot baskets every day, and not him. I'm not the same guy who walked into high school in a new city excited about the possibilities it offered and nervous about how I would fit in, yet I grew out of him. I am at once the person who started college as an architecture major -- who from the time he was eight years old had wanted to be an architect -- and not him. I'm not the same person who defended a Ph.D. dissertation half a life ago, but who I am owes a lot to him. I am both the man my wife married and not, being now the man that man has become.

And, yes, the father of those little girls pictured on my wall: me and not me. This is true in how they saw me then and how they see me now.

I'm not sure how thinking about this distinction will affect future me. I hope that it will help me to appreciate everyone in my life, especially my daughters and my wife, a bit more for who they are and who they have been. Maybe it will even help me be more generous to 2019 me.

April 08, 2019 11:55 AM

TDD is a Means for Delaying Intuition

In one of his "Conversations with Tyler", Tyler Cowen talks with Daniel Kahneman about intuition and its relationship to thinking fast and slow. According to Kahneman, evidence supports his position that most people have not had the experience necessary to develop intuition that is good enough for solving problems directly. So he thinks that most people, including most so-called experts, should slow down.

So I think delaying intuition is a very good idea. Delaying intuition until the facts are in, at hand, and looking at dimensions of the problem separately and independently is a better use of information.

The problem with intuition is that it forms very quickly, so that you need to have special procedures in place to control it except in those rare cases...

...

Break the decision up. It's not so much a matter of time because you don't want people to get paralyzed by analysis. But it's a matter of planning how you're going to make the decision, and making it in stages, and not acting without an intuitive certainty that you are doing the right thing. But just delay it until all the information is available.

This is one of the things that I find most helpful about test-driven design when I practice it faithfully. It's pretty easy for me to think that I know everything I need to implement a program after I've read the spec and thought about it for a few minutes. I mean, I've written a lot of code over the years... If my intuition tells me where to go, I can start right away and have the whole path ahead of me in my mind.

But how often do I really have evidence that my intuitive plan is the correct one? If I'm wrong, I'll spend a bunch of time and write a bunch of code, only later to find out I'm wrong. What's worse, all that code I've written usually ends up feeling like a constraint within which I have to work as I try to dig myself out of the mess.

Writing one test at a time and implementing just the code I need to pass it is a way to force my intuitive mind to slow down. It helps me think about the actual problem I'm solving, rather than the abstraction my expert brain infers from the spec. The program grows slowly alongside my understanding and points me in the direction of the next small step to take.

TDD is a procedure I can put in place to help me control my intuition until the facts are in, and it encourages me to look at different dimensions of the problem independently as I write the code to solve them.
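Here is a small, hypothetical illustration of that rhythm in Python, with leap years standing in for a real problem. Each version of the function is just enough code to pass the tests written so far, and each new test forces the next small change. The function names and test cases are invented for this sketch.

```python
# A hypothetical illustration of growing a function one test at a time.
# Each test is (year, expected); they are listed in the order written.
TESTS = [
    (2024, True),   # test 1: years divisible by 4 are leap years
    (1900, False),  # test 2: century years are not...
    (2000, True),   # test 3: ...unless they are divisible by 400
]

def leap_v1(year: int) -> bool:
    # Just enough code to pass test 1.
    return year % 4 == 0

def leap_v2(year: int) -> bool:
    # Test 2 failed against v1, so add the century exception.
    return year % 4 == 0 and year % 100 != 0

def leap_v3(year: int) -> bool:
    # Test 3 failed against v2, so add the 400-year exception.
    return year % 400 == 0 or (year % 4 == 0 and year % 100 != 0)

def passes(version, tests) -> bool:
    return all(version(year) == expected for year, expected in tests)

# Each version passes the tests written so far; only v3 passes them all.
for n, version in enumerate([leap_v1, leap_v2, leap_v3], start=1):
    print(version.__name__, passes(version, TESTS[:n]), passes(version, TESTS))
# leap_v1 True False
# leap_v2 True False
# leap_v3 True True
```

No version anticipates a rule that a failing test has not yet demanded, which is the sense in which the tests hold intuition in check.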

April 04, 2019 4:40 PM

A Non-Software Example of Preparatory Refactoring

When I refactor my code, I most often think in terms of what Martin Fowler calls preparatory refactoring: if it's difficult to add a new feature to my program, I refactor the existing code into a state where the feature fits naturally somewhere, then I add the feature. As is often the case, Kent Beck captured this notion memorably in a tweet-sized aphorism. I was delighted to see that Martin's blog post, which I remember fondly, cites the same tweet!
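A small, hypothetical Python example of the software version: adding a new shipping region to a growing if/elif chain is awkward, so the first step refactors the rates into a table without changing behavior, and the second step adds the new region as a one-line change. The function names, regions, and rates are all invented for illustration.

```python
# Before: every new region means editing a growing if/elif chain.
def shipping_cost_before(region: str, weight_kg: float) -> float:
    if region == "domestic":
        return 5.0 + 1.0 * weight_kg
    elif region == "canada":
        return 9.0 + 2.0 * weight_kg
    raise ValueError(f"unknown region: {region}")

# Step 1, the preparatory refactoring: same behavior, but the rates
# now live in a table. (Hypothetical values: base fee, per-kg fee.)
RATES = {
    "domestic": (5.0, 1.0),
    "canada": (9.0, 2.0),
}

def shipping_cost(region: str, weight_kg: float) -> float:
    try:
        base, per_kg = RATES[region]
    except KeyError:
        raise ValueError(f"unknown region: {region}") from None
    return base + per_kg * weight_kg

# Step 2, the feature itself: with the code reshaped, it fits naturally.
RATES["mexico"] = (11.0, 2.5)
```

The refactoring step changes no behavior, which is what makes it safe to do first; all of the risk is concentrated in the small, easy change that follows.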

Ideas from programming are usually instances of more general ideas from the broader world, specialized to a world of bits and computation. Back when I taught our object-oriented programming course every semester, I often referred my students to a web site that offered real-life examples of the various design patterns we were learning. I remember an example of Decorator that showed how we can embellish a painting with a matte or a frame, and its use of the U.S. Constitution's specification of the President to illustrate the idea of a Singleton. I can't find that site on the web just now, but there's almost surely a local copy buried in one of my course websites from way back.

The idea of refactoring is useful outside the world of software, too. Just yesterday, my dean suggested what I consider to be a preparatory refactoring.

A committee on campus is charged with proposing ways that the university can restructure its colleges and departments. With the exception of a merger of two colleges a few years ago, we have had essentially the same academic structure for my entire time here. In those years, disciplines have changed and budgets have changed, so now the administration is thinking that the university might be more efficient or more productive with a different structure. Viewed from this perspective, restructuring is largely a reaction to change that has already happened.

My dean suggested another way to approach the task. Think, he said, of the new academic programs that you'd like to create in the future. We may not have money available right now to create a new major or to organize a new research center, but one day we might. What university structure would make adding this program go more smoothly and work better once in place? Which departments would the new program want to work with? Which administrative structures already in place would minimize unnecessary overhead of the new program? As much as possible, he suggested, let's try to create a new academic structure that suits the future programs we'd like to build. That will reduce friction later, which is good: Administrative friction often grinds new academic ideas to a halt before they get off the ground.

In programming terms, this is quite a bit different from the sort of refactoring I prefer. I try to practice YAGNI and refactor only for the specific feature that I want to add right now, not taking into account imagined future features I may never need. In terms of academic structure, though, this sort of up-front design makes a lot of sense. Academic structures are much harder to change than software; getting a few things right now may make future changes at the program level much easier to implement later.

Thinking about academic restructuring this way has another positive side effect: it might entice faculty to be more engaged, because what we do now matters to the future we would like to build. It's not merely a reaction to past changes.

My dean is suggesting that we build academic structures now that make the changes we want to implement later (when the resources and requisite will exist) easier to implement. Building those structures now may take more work than simply responding to past changes, but it will be worth the effort when we are ready to create new programs. I think Kent and Martin might be proud.

Posted by Eugene Wallingford | Permalink | Categories: Managing and Leading, Patterns, Software Development

February 24, 2019 9:35 AM

Normative

In a conversation with Tyler Cowen, economist John Nye expresses disappointment with the nature of discourse in his discipline:

The thing I'm really frustrated by is that it doesn't matter whether people are writing from a socialist or a libertarian perspective. Too much of the discussion of political economy is normative. It's about "what should the ideal state be?"

I'm much more concerned with the questions of "what good states are possible?" And once good states are created that are possible, what good states are sustainable? And that, in my view, is a still understudied and very poorly understood issue.

For some reason, this made me think about software development. Programming styles, static and dynamic typing, software development methodologies, ... So much that is written about these topics tells us what the right thing to do is. "Do this, and you will be able to reliably make good software."

I know I've been partisan on some of these issues over the course of my time as a programmer, and I still have my personal preferences. But these days especially I find myself more interested in "what good states are sustainable?". What has worked for teams? What conditions seem to make those practices work well or not so well? How do teams adapt to changes in the domain or the business or the team's make-up?