November 29, 2007 4:38 PM

Coincidence by Metaphor

I recently wrote that I will be in a play this Christmas season. I'm also excited to have been asked by Brian Marick to serve on a committee for the Agile2008 conference, which takes me in another direction with the performance metaphor. As much as I write about agile methods in my software development entries, I have never been to one of the agile conferences. Well, at least not since they split off from OOPSLA and took on their own identity.

Rather than using the traditional and rather tired notion of "tracks", Agile2008 is organized around the idea of stages, using the metaphor of a music festival. Each stage is designed and organized by a stage producer, a passionate expert, to attract an audience with some common interest. In the track-based metaphor -- a railroad? -- such stages are imposed over the tracks in much the way that aspects cut across the classes in an object-oriented program.

(At a big conference like OOPSLA, defining and promoting the crosscutting themes is always a tough chore. Now that I have made this analogy, I wonder if we could use ideas from aspect-oriented programming to do the job better? Think, think.)

I look forward to seeing how this new metaphor plays out. In many important ways, the typical conference tracks (technical papers, tutorials, workshops, etc.) are implementation details that help the conference organizers do their job but that interfere with the conference participants' ability to access events that interest them. Why not turn the process inside out and focus on our users for a change? Good question!

(Stray connection: This reminds me of an interview I heard recently with comedian Steve Martin, who has written a biography of himself. He described how he developed his own style as a stand-up comedian. Most comedians in that era were driven by the punch line -- tell a joke that gets you to a punch line, and then move on to the next. While taking a philosophy course, he learned that one should question even the things that no one ever questioned, what was taken for granted. What would comedy be like without a punch line? Good question!)

Of course, this changes how the conference organizers work. First of all, it seems that for a given stage the form of activity being proposed could be almost anything: a presentation, a small workshop, a demonstration, a longer tutorial, a roundtable, ... That could be fun for the producers and their minions, and give them some much needed flexibility that is often missing. (Several times in the past I have had to be part of rejecting, say, a tutorial when we might gladly have accepted the submission if reformulated as a workshop -- but we were the tutorials committee!)

Brian tells me that Agile 2008 will try a different sort of submission/review/acceptance process. Submissions will be posted on the open web, and anyone will be able to comment on them. The review period will last several months, during which time submitters can revise their submissions. If the producer and the assistant producers participate actively in reviewing submissions over the whole period, they may well put in more work than in a traditional review process (and certainly over a longer period of time). But the result should be better submissions, shaped by ideas from all comers -- including potential members of the audience that the stage hopes to attract! -- and so better events and a better conference. It will be cool to be part of this experiment.

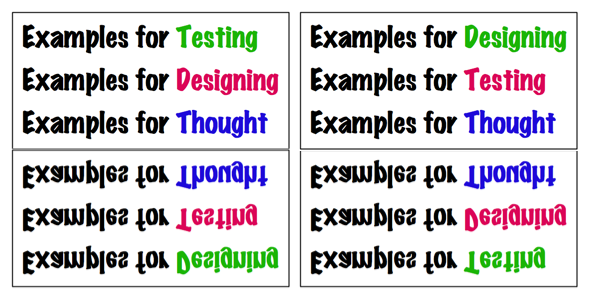

As you can see from the Agile 2008 web site, its stages correspond to themes, not event formats. Brian is producing a stage called "Designing, Testing, and Thinking with Examples", the logo for which you see here. This is an interesting theme that goes beyond particular behaviors such as designing, testing, and teaching to the heart of a way to think about all of them, in terms of concrete examples. The stage will not only accept examples for presentation, demonstration, or discussion, but glory in them. That word conveys the passion that Brian and his assistant producer Adam Geras bring to this theme.

I think Brian asked me to help them select the acts for this stage because I have exhibited some strong opinions about the role of examples and problems in teaching students to program and to learn the rest of computer science. I'm pretty laid back by nature, and so don't often think of myself in terms of passion, but I guess I do share some of the passion for examples that Brian and Adam bring to the stage. This is a great opportunity for me to broaden my thinking about examples and to see what that means for the role they play in my usual arenas.

In a stroke of wisdom, no one has asked me yet to be on the stage, unlike my local director friend. Whatever practice I get channeling Jackson Davies, I am not sure I will be ready for prime time under the bright lights of an Agile stage...

It occurs to me that, following this metaphor one more step, I am not playing the role of a contestant on, say, American Idol, but the role of talent judge. Shall I play Simon or Paula? (Straight up!)

November 28, 2007 3:33 PM

Learning About Software from The Theater, and Vice Versa

Last Sunday was my fourth rehearsal as a newly-minted actor. It was our first run-through of the entire play on stage, and the first time the director had a chance to give notes to the entire cast. The whole afternoon, my mind was making connections between plays and programs -- and I don't mean the playbill. These thoughts follow from my experiences in the play and my experiences as a software developer. Here are a few.

Scenes and Modularity Each scene is like a module that the director debugs. In some plays, the boundaries between scenes are quite clean, with a nice discrete break from one to the next. With a play on stage, the boundaries between scenes may be less clear.

In our play, scenes often blend together. Lights go down on one part of the stage and up on another, shifting the audience's attention, while the actors in the first scene remain in place. Soon, the lights shift back to the first and the action picks up where it left off. Plays work this way in part because they operate in limited real estate, and changing scenery can be time-consuming. But this also

It is easier to debug scenes that are discrete modules. Debugging scenes that run together is difficult for the same reasons that debugging code modules with leaky boundaries is. A change in one scene (say, repositioning the players) can affect the linked scene (say, by changing when and where a player enters).

Actors and Code If the director debugs a scene, then maybe the actors, their lines, and the props are like the code. That just occurred to me while typing the last section!

Time Constraints It is hard to get things right in a rush. Based on what he sees as the play executes, the director makes changes to the content of the show. He also refactors: rearranging the location of a prop or a player, either to "clean things up" or to facilitate some change he has in mind. It takes time to clean things up, and we only know that something needs to be changed as we watch the play run, and think about it.

Mock Objects and Stand-Ins When rehearsing a scene, if you don't have the prop that you will use in the play itself, then use something to stand in its place. It helps you get used to the fact that something will be there when you perform, and it helps the director remember to take into account its use and placement. You have to remember to have it at the right time in the right place, and return it to the prop table when it's not in use.

A good mock prop just needs to have some of the essential features of the actual prop. When rehearsing a scene in which I will be carrying some groceries to the car, I grabbed a box full of odds and ends out of some office. It was big enough and unwieldy enough to help me "get into the part".
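For readers who have not used mock objects, here is a minimal sketch of the same idea in code, using Python's standard unittest.mock. The scene function, the prop's method names, and the actor name are all hypothetical, invented only for illustration. The stand-in has just the essential features the scene depends on, and afterward the "director" can check that the prop was picked up and returned to the table.

```python
from unittest.mock import Mock


def carry_groceries_to_car(actor_name, prop):
    """Hypothetical scene: the actor picks up a prop, carries it, then returns it."""
    prop.pick_up()
    line = f"{actor_name} carries the {prop.name} to the car."
    prop.return_to_table()
    return line


# The real grocery bag is not available at rehearsal, so a stand-in
# supplies only the features the scene actually uses.
stand_in = Mock()
stand_in.name = "box of odds and ends"

result = carry_groceries_to_car("Bob", stand_in)
print(result)

# The director checks that the prop was handled at the right moments.
stand_in.pick_up.assert_called_once()
stand_in.return_to_table.assert_called_once()
```

The stand-in knows nothing about groceries. It is good enough because the scene only cares that something is there to pick up and put back.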

Conclusion for Now The relationship between software and plays or movies is not a new idea, of course. I seem to recall a blog entry a couple of years ago that explored the analogy of developing software as producing a film, though I can't find a link to it just now. Googling for it led me to a page on Ward's wiki and to two entries on the Confused of Calcutta blog. More reading for me... And of course there is Brenda Laurel's seminal Computers As Theatre. You really should read that!

Reading is a great way to learn, but there is nothing quite like living a metaphor to bring it home.

The value that comes from making analogies and metaphors comes in what we can learn from them. I'm looking forward to a couple of weeks more of learning from this one -- in both directions.

November 27, 2007 5:45 PM

Comments on "A Program is an Idea"

Several folks have sent interesting comments on recent posts, especially A Program is an Idea. A couple of comments on that post, in particular, are worth following up on here.

Bill Tozier wrote that learning to program -- just program -- is insufficient.

I'd argue that it's not enough to "learn" how to program, until you've learned a rigorous approach like test-driven development or behavior-driven development. Many academic and scientific colleagues seem to conflate software development with "programming", and as a result they live their lives in slapdash Dilbertesque pointy-haired boss territory.

This is a good point, and goes beyond software development. Many, many folks, and especially university students, conflate programming with computer science, which disturbs academic CS folks to no end, and for good reason. Whatever we teach scientists about programming, we must teach it in a broader context, as a new way of thinking about science. This should help ameliorate some of the issues with conflating programming and computer science. The need for this perspective is also one of the reasons that the typical CS1 course isn't suitable for teaching scientists, since its goals relative to programming and computer science are so different.

I hadn't thought as much about Bill's point of conflating programming with software development. This creates yet another reason not to use CS1 as the primary vehicle for teaching scientists to ("program" | "develop software"), because even more so in this regard are the goals of CS1 for computer science majors very different from the goals of a course for scientists.

Indeed, there is already a strong tension between the goals of academic computer science and the goals of professional software development that makes the introductory CS curriculum hard to design and implement. We don't want to drag that mess into the design of a programming course for scientists, or economists, or other non-majors. We need to think about how best to help the folks who will not be computer scientists learn to use computation as a tool for thinking about, and doing work in, their own discipline.

And keep in mind that most of these folks will also not be professional software developers. I suspect that folks who are not professional software developers -- folks using programs to build models in their own discipline -- probably need to learn a different set of skills to get started using programming as a tool than professional software developers do. Should they ever want to graduate beyond a few dozen lines of code, they will need more and should then study more about developing larger and longer-lived programs.

All that said, I think that scientists as a group might well be more amenable than many others to learning test-driven development. It is quite scientific in spirit! Like the scientific method, it creates a framework for thinking and doing that can be molded to the circumstances at hand.
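To make the comparison concrete, here is a small sketch of what test-first work might look like for a scientist. The free-fall example and the function names are my own assumptions, chosen only for illustration. The test plays the role of a prediction, stated before the code that must satisfy it is written.

```python
import math


def test_fall_time():
    # Prediction, written first: from rest, a drop of 4.905 m
    # under g = 9.81 m/s^2 should take about one second.
    assert math.isclose(fall_time(4.905, g=9.81), 1.0)
    # A drop of zero height should take no time at all.
    assert fall_time(0.0) == 0.0


def fall_time(height_m, g=9.81):
    """Seconds for an object to fall height_m metres from rest, ignoring drag."""
    return math.sqrt(2.0 * height_m / g)


# Run the "experiment": the code either confirms the prediction or fails loudly.
test_fall_time()
print("prediction confirmed")
```

If the model changes later, say to account for drag, the old predictions remain as a record of what the code was expected to do.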

The other comment that I must respond to came from Mike McMillan. He recalled having run across a video of Gerald Sussman giving a talk on the occasion of Daniel Friedman's 60th birthday, and hearing Sussman comment on expressing scientific ideas in Scheme. This brought to his mind Minsky's classic paper "Why Programming Is a Good Medium for Expressing Poorly-Understood and Sloppily-Formulated Ideas", which is now available on-line in a slightly revised form.

This paper is the source of the oft-quoted passage that appears in the preface of Structure and Interpretation of Computer Programs:

A computer is like a violin. You can imagine a novice trying first a phonograph and then a violin. The latter, he says, sounds terrible. That is the argument we have heard from our humanists and most of our computer scientists. Computer programs are good, they say, for particular purposes, but they aren't flexible. Neither is a violin, or a typewriter, until you learn how to use it.

(Perhaps Minsky's paper is where Alan Kay picked up his violin metaphor and some of his ideas on computation-as-medium.)

Minsky's paper is a great one, worthy of its own essay sometime. I should certainly have mentioned it in my essay on scientists learning to program. Thanks to Mike for the reminder! I am glad to have had reason to track down links to the video and the PDF version of the paper, which until now I've only had in hardcopy.

November 26, 2007 8:05 PM

A Quick Thought on Minimesters

Yes, that is a word used by an accredited institution of higher education.

In my previous entry, I discussed what lecture was good for. One of the best uses of lecture, I concluded, was to motivate students for the work that they would do outside of class. The best use of class time, whether lecture or not, is to support students in the work they do at home and in the lab. That's because learning takes time, and 150 minutes a week in class just isn't enough.

After the wedding I mentioned last time, I took my family out for dinner. While dining, I could not help overhearing some of the conversation in the booth behind us. An instructor from the local community college was describing her unabashed love of the minimester. "The students were so focused!"

"Minimester" is a portmanteau that refers to a very short and concentrated term of study. In recent years, our local community college has begun offering eight-day courses in the interim between real semesters. Eight days.

The appeal to students is obvious. They can knock out an entire course in a couple of weeks. To students looking to get out into the workforce quickly, or to transfer to a four-year school as soon as possible, the minimester is a boon.

The students are so focused... They have to be, or the course will be over in the blink of an eye. But do they learn??

Like lecture, this is an idea that may sound good but does not work in practice. It may work great for the professor, who can free up a teaching slot during a traditional semester for other activities. It may work great for the school, which can sell courses to students who might be unable to commit a whole semester to a course. But I do not think that minimesters work for students -- at least not the ones who want to learn something.

The minimester course reminds me of the many one-week OOD/OOP courses offered by consulting groups for professional developers. No one should think that these courses do anything more than introduce students to the topic and prepare them for a lot of work afterwards, learning on their own. Too many managers seem to think that these one-week courses are sufficient on their own.

Stretching the idea in the other direction, there is a private four-year college in our state that teaches all of its courses in terms of three weeks. Its faculty believe that this sort of immersive experience benefits student learning. Three weeks is quite a bit different than 5 or 8 days, but it still seems to be so short...

So I got to thinking, what sort of course can be taught -- learned -- effectively in such a short period? Without much experience teaching these courses, though, my mind quickly turned to the sort of course that cannot be learned effectively in such a short time frame.

Not design. Not creating. Not writing. Not programming. (Just ask Peter Norvig!)

Let's see. Students can retarget existing knowledge in a short course. A student can learn a fourth or tenth programming language in a short time, if she already knows another language like it. Most students can't even approach mastering the new language in a week, but they can be prepared to master the language with practice at home. And if the new language is in a new programming style, all bets are off. A week or two almost certainly isn't enough.

Students can learn some facts in a short period, so courses that are heavy on facts are a possibility. But then, that takes us back to the discussion of lecture. If the course is "just the facts, ma'am", why not just give the student a book to read? The thing is, learning facts is only one of the desired outcomes of even a fact-laden course. It is also the one most easily achieved.

The problem with minimesters is that one cannot very easily learn practices, or any behavior that takes time to develop and become habitual. Practice takes, well, practice! And practice takes time.

The one benefit of a three-week immersive experience is that the deep focus it affords allows the learner a chance to get a good start on a new habit. Students who take 5 courses in a traditional 15-week semester often are stretched so thin that they have a hard time creating new habits in any of them, unless they make a concerted effort.

Many of us at the university detest the notion of students transferring credit for one of our courses from a community college if the course was taken during a minimester. But there is not much we can do. Fortunately, the community colleges aren't doing CS courses this way -- at least yet.

Most people know that training the body takes time (though some students hope against hope). We need to respect the same truth about how the mind works.

November 25, 2007 10:55 AM

A Quick Thought on Lecture

Yesterday I ran into two thoughts on teaching and learning that may end up being longer pieces later. This entry is me thinking out loud about the first, and next I'll think out loud about the second.

Yesterday I was filling some time before a wedding in a geeky way by reading Carl Wieman's Why Not Try a Scientific Approach to Science Education? from the September/October 2007 issue of Change magazine, on the recommendation of a fellow department head in my college. Wieman won the 2001 Nobel Prize in physics and has also spent a lot of time thinking about teaching physics to the general population. The article nicely summarizes many of the ways we can improve general science education, most of which won't be new to anyone who has read in this area before.

Wieman explains why lecture is not an effective instructional strategy, even in situations where you think it might be (for example, when the audience consists of other experts in the field). The thought that occupied me for much of the afternoon was, okay, so, what is lecture good for? Or should we just retire it from our repertoires entirely?

Two customized forms of lecture can be quite useful. A short lecture -- 10 to 15 minutes max -- can set up another activity. A lecture that includes heavy doses of solving problems can illustrate a technique that students must do, or have done and now have questions about.

Straight lecture is, as Wieman says, a distillation of understanding: "First I thought very hard about the topic and got it clear in my own mind. Then I explained it to my students so that they would understand it with the same clarity I had." This is exposition which is well-suited to be read by the student. This is why so many instructors end up turning their awesome lecture notes into a book. So a third use of lecture is to fine-tune material that will become a book. That may be good for the lecturer/author and even future readers, but it doesn't do as much for the students sitting in the classroom.

I think that the fourth use of lecture is motivation. A great lecture can fire up the troops, rousing their passions to leave class -- and work hard. That's how learning really happens, through hard work outside of class. Time in class can help streamline the process, heading off potential dead ends and guiding students down more fruitful paths. What a lecture during class time can do is to excite students about the material and prepare them for the work they need to do on their own.

So: I don't think we should retire lecture from our repertoires entirely. We should use it in a targeted, limited fashion to prepare other activities in class, and occasionally we should use it as a motivational speaker would, to excite students about what is possible and why the hard work is worth the effort.

I really wish I were a little better at this sort of lecturing. I think my students this semester could use a spiritual revival as they enter the last two weeks writing their compilers.

November 24, 2007 5:43 AM

A Program is an Idea

Recently I claimed that scientists should learn to program. Why? I think that there are reasons beyond the more general reasons that others should learn.

As more and more science becomes computational, or relies on computation in some fundamental way, scientists will build models of their theories using programs. There are, of course, many modeling tools to support this activity. But a scientist should understand something about how these tools work in order to understand better the models built with them. Scientists may not need to understand their software tools as well as the computer scientists who build them, but they should probably understand them better than my grandma knows how her e-mail tool works.

Actually, scientists may want to know a lot about their tools. My physicist and chemist friends talk and write often about how they build their own instruments and mock up equipment for special-purpose experiments. There is great power in building the instruments one uses -- something I've written about as a computer scientist writing his own tools. Then again, my scientist friends don't blow their own glass or make their own electrical wire, so there is some point at which the process of tool-building bottoms out. I'm not sure we have a very good sense where the process of a scientist writing her own software will bottom out just yet.

Being able to write code, even if only short scripts, puts a lot of power into the scientist's hands. It is the power to express an idea. And that's what programs are: ideas. I read that line in an article by Paul Graham about how programmers work best. But the crux of his whole argument comes down to that one line: a program is an idea.

As a scientist creates an idea, explores it, shapes it, and communicates it, a program can be the evolving idea embodied -- concrete, unambiguous, portable. That is part of what I inferred from the presentations of earth scientist Noah Diffenbaugh and physics educators Ruth Chabay and Bruce Sherwood at the SECANT workshop. Programs are part of how the scientist works and part of how the scientist communicates the result.

Reader Darius Bacon sent me some neat comments in response to my Programming Scientists entry on just this point. Darius expressed frustration with the way that many scientific papers formally explain their experiments and results. "Why don't you just *write a program*?" he asks. He agrees that scientists especially should learn how to program, because it may help them learn to communicate their ideas better. I look forward to him saying more about this in his blog.

A scientist can communicate an idea to others with a program, but they can also think better with a program. Graham captured this from the perspective of the software developer in the article I quoted earlier:

Your code is your understanding of the problem you're exploring.

Every programmer knows what this means. I can think all the Big Thoughts I like, but until I have a working program, I'm never quite certain that these thoughts make sense. Writing a program forces me to specify and clarify; it rejects fuzziness and incoherence. As my program grows and evolves, it reflects the growth and evolution of my idea. Graham says it more strongly: the program is the growth and evolution of my idea. Whether this is truth or merely literary device, thinking of the program as the idea is a useful mechanism for holding myself accountable to making my idea clear enough to execute.

This is one of the senses in which Darius Bacon's notion of program-as-explanation works. A program might be clearer than the prose, "and even if it isn't at first, at least I could reverse-engineer it, because it has to be precise enough to execute" (my emphasis). And what helps us communicate better can also help us to think better, because the program in which we embody our thoughts has to be precise enough to execute.

I know this sounds like a lot of high-falutin' philosophizing. But I think it is one of the Big Ideas that computing has given the world. It's one of the reasons that teaching everyone a little programming would be good.

There are practical reasons for learning to program, too, and I think we can be more practical and concrete in saying why programming is especially important to scientists. In science, the 20th century was the century of the mathematical formula. The ideas we explored, and the level at which we understand them, were in most ways expressible with small systems of equations. But as we tackle more complex problems and try to understand more complex systems, individual formulae become increasingly unsatisfactory as models. The 21st century will be the century of the algorithm! And the best way to express and test an algorithm is... write a program.

November 23, 2007 9:09 AM

Now Appearing at a Theater Near You...

In a stunning departure from my ordinary behavior, I have taken an acting role in a play. My daughters were recently cast in a production of Barbara Robinson's classic children's story The Best Christmas Pageant Ever, being put on by a local church. The director is well known in our area as an actor and as the long-time director of a tremendous local children's theater, and he has just returned to the area as youth director of said church. He is also the virtual training partner to whom I have referred a few times in my entries on marathon preparation.

This play is mostly about kids and ladies, and plenty of folks auditioned for those roles. But when the one guy who auditioned for the part of the father dropped out, the production was left with a big hole. My daughters joked that I should fill in; it would be fun. My running partner-as-director assured me that I could handle what is really a small supporting role, even though I have no acting experience to speak of. After some hemming and hawing, I decided to give it a go. A compressed rehearsal schedule and a relaxed venue were enough to lower my fears, and the chance to work with my daughters -- who love to perform and who are getting pretty good at it -- was enough to convince me to take a risk.

So, in a few weeks, I will appear on stage as father Bob Bradley, immortalized in a made-for-TV film starring Loretta Swit by veteran character actor Jackson Davies.

Fortunately, my role in the play is a bit larger than the dad's role in the movie. Davies played a small, straight part, and I get to go for a laugh or two. The dad, though, also gets to deliver a key passage in the story, what I call my "Linus moment", in analogy to A Charlie Brown Christmas. My lines are neither as extensive nor quite as poignant as the spotlighted soliloquy of Linus's Biblical passage, but still it is a pivotal moment. How is that for pressure on a first-time actor with no discernible natural skill? May I rise to the challenge!

I'm still not sure what to expect. I figure in the worst case we have a little fun. In the best case, perhaps learning a bit about how to deliver a line and mug for the audience will improve my "stage presence" as a teacher and as a public speaker. I usually live my life on a rather narrow path, so stretching my boundaries is almost certainly a good thing.

November 22, 2007 6:16 PM

For the Fruits of This Creation

On this and every day:

For the harvests of the Spirit, Thanks be to God;

For the good we all inherit, Thanks be to God;

For the wonders that astound us,

For the truths that will confound us,

Most of all that love has found us, Thanks be to God.

(Lyric by Fred Pratt Green, copyright 1970. Sung to a traditional Welsh melody.)

Among so many things, I'm thankful for the chance to write here and to have people read what I write.

Happy Thanksgiving.

November 21, 2007 3:28 PM

Small World

Recently I mentioned the big pharmaceutical company Eli Lilly in an entry on the next generation of scientists, because one of its scientists spoke at the SECANT workshop I was attending. I have some roundabout personal connections to Lilly. It is based in my hometown.

When I was in high school and had moved to a small town in the next county, I used to go with some adult friends to play chess at the Eli Lilly Chess Club, which was the only old-style corporate chess club of its kind that I knew of. (Clubs like it used to exist in many big cities in the 19th and early 20th centuries. I don't know how common they are these days. The Internet has nearly killed face-to-face chess.) I recall quite a few Monday nights losing quarters for hours while playing local masters at speed chess, at 1:30-vs-5:00 odds!

Coincidentally, my high school hometown was also home to a Lilly Research Laboratories facility, which does work on vaccines, toxins, and agricultural concerns. Parents of several friends worked there, in a variety of capacities. When I was in college, I went out on a couple of dates with a girl from back home. Her father was a research scientist at Lilly in Greenfield. (A quick google search on his name even uncovers a link to one of his papers.) He is the sort of scientist that Kumar, our SECANT presenter, works with at Lilly. Interesting connection.

But I can go one step further and bring this even closer to my professional life these days. My friend's last name was Gries. It turns out that her father, Christian Gries, is brother to none other than distinguished computer scientist David Gries. I've mentioned Gries a few times in this blog and even wrote an extended review of one of his classic papers.

I don't think I was alert enough at the time to be sufficiently impressed that Karen's uncle was such a famous computer scientist. In any case, hero worship is hardly the basis for a long-term romantic relationship. Maybe she was wise enough to know that dating a future academic was a bad idea...

November 20, 2007 4:30 PM

Workshop 5: Wrap-Up

[A transcript of the SECANT 2007 workshop: Table of Contents]

The last bit of the SECANT workshop focused on how to build a community at this intersection of CS and science. The group had a wide-ranging discussion which I won't try to report here. Most of it was pretty routine and would not be of interest to someone who didn't attend. But there were a couple of points that I'll comment on.

On how to cause change. At one point the discussion turned philosophical, as folks considered more generally how one can create change in a larger community. Should the group try to convince other faculty of the value of these ideas first, and then involve them in the change? Should the group create great materials and courses first and then use them to convince other faculty? In my experience, these don't work all that well. You can attract a few people who are already predisposed to the idea, or who are open to change because they do not have their own ideas to drive into the future. But folks who are predisposed against the idea will remain so, and resist, and folks who are indifferent will be hard to move simply because of inertia. If it ain't broke, don't fix it.

Others expressed these misgivings. Ruth Chabay suggested that perhaps the best way to move the science community toward computational science is by producing students who can use computation effectively. Those students will use computation to solve problems. They will learn deeper. This will catch the eye of other instructors. As a result, these folks will see an opportunity to change how they teach, say, physics. We wouldn't have to push them to change; they would pull change in. Her analogy was to the use of desktop calculators in math, chemistry, and physics classes in the 1970s and 1980s. Such a guerilla approach to change might work, if one could create a computational science course good enough to change students and attractive enough to draw students to take it. This is no small order, but it is probably easier than trying to move a stodgy academic establishment with brute force.

On technology for dissemination. Man, does the world change fast. Folks talked about Facebook and Twitter as the primary avenues for reaching students. Blogs and wikis were almost an afterthought. Among our students, e-mail is nearly dead, only 20 years or so after it began to enter the undergraduate mainstream. I get older faster than the calendar says because the world is changing faster than the days are passing.

Miscellaneous. Purdue has a beautiful new computer science building, the sort of building that only a large, research school can have. What we might do with a building at an appropriate scale for our department! An extra treat for me was a chance to visit a student lounge in the building that is named for the parents of a net acquaintance of mine, after he and his extended family made a donation to the building fund. Very cool.

I might trade my department's physical space for Purdue CS's building, but I would not trade my campus for theirs. It's mostly buildings and pavement, with huge amounts of auto traffic in addition to the foot traffic. Our campus is smaller, greener, and prettier. Being large has its ups and its downs.

Thanks to a recommendation of the workshop's local organizer, I was able to enjoy some time running on campus. Within a few minutes I found my way to some trails that head out into more serene places. A nice way to close the two days.

All in all, the workshop was well worth my time. I'll share some of the ideas among my science colleagues at UNI and see what else we can do in our own department.

November 20, 2007 1:28 PM

Workshop 4: Programming Scientists

[A transcript of the SECANT 2007 workshop: Table of Contents]

Should scientists learn to program? This question arose several times throughout the SECANT workshop, and it was an undercurrent to most everything we talked about.

Most of the discipline-area folks at the workshop currently use programming in their courses. Someone pointed out that this can be an attractive feature in an elective science course that covers computational material -- even students in the science disciplines want to learn a tool or skill they know to be marketable. (I am guessing that at least some of the time it may be the only thing that convinces them to take the course!)

Few require a standard programming course from the CS catalog, or a CS-like course heavy in abstraction. That is usually not the most useful skill for the science students to learn. In practice, the scientists need to learn to write only small procedural programs. They don't really need OOP (which was the topic of a previous talk at the workshop), though they almost certainly will be clients of rich and powerful objects.

Python was a popular choice among attendees and is apparently quite popular as a scripting language in the sciences. The Matter and Interactions curriculum in physics, developed by Chabay and Sherwood, depends intimately on simulations programmed -- by physics students -- in VPython, which provides an IDE and modules for fast array operations and some quite beautiful 3-D visualization. I'm not running VPython yet, because it currently requires X11 on my Mac and I've tried to stay native Mac whenever possible. This package looks like it might be worth the effort.

A scripting language augmented with the right libraries seems like a slam-dunk for programmers in this context. Physics, astronomy, and other science students don't want to learn the overhead of Java or the gotchas of C pointers; they want to solve problems. Maybe CS students would benefit from learning to program this way? We are trying a Python-based version of a media computation CS1 in the spring and should know more about how our majors respond to this approach soon. The Java-based CS1 using media computation that we ran last year went well. In the course that followed, we did observe a few gaps that CS students don't usually have after CS1, so we will need to address those in the data structures course that will follow the Python-based offering. But that was to be expected -- programming for CS students is different than programming for end users. Non-computer scientists almost certainly benefit from a scripting language introduction. If they ever need more, they know where to go...

The next question is, should CS faculty teach the programming course for non-CS students? CS faculty almost always say, "Yes!" Someone at the workshop said that otherwise programming will be "taught BioPerl badly in a biology course by someone who only knows how to hack Perl." Owen Astrachan dared to ask "'taught badly' -- but is it?" The science students learn what they need to solve problems in their lab. CS profs responded, well, but their knowledge will be brittle, and it won't scale, and .... But do the scientists know that -- or even care!? If they ever need to write bigger, less brittle, more scalable programs, they know where to go to learn more.

I happen to believe that science students will benefit by learning to program from computer science professors -- but only if we are willing to develop courses and materials for them. I see no reason to believe that the run-of-the-mill CS1 course at most schools is the right way to introduce non-CS students to programming, and lots of reasons to believe otherwise.

And, yes, I do think that science students should learn how to program, for two reasons. One is that science in the 21st century is science of computation. That was one of the themes of this workshop. The other is that -- deep in my heart -- I think that all students should learn to program. I've written about this before, in terms of Alan Kay's contributions, and I'll write about it again soon. In short, I have at least two reasons for believing this:

- Computation is a new medium of communication, and one with which we should empower everyone, not just a select few.

- Computer programming is a singular intellectual achievement, and all educated people should know that, and why.

Big claims to close an entry!

November 19, 2007 4:41 PM

Workshop 3: The Next Generation

[A transcript of the SECANT 2007 workshop: Table of Contents]

The highlight for me of the final morning of the SECANT workshop was a session on the "next generation of scientists in the workforce". It consisted of presentations on what scientists are doing out in the world and how computer scientists are helping them.

Chris Hoffman gave a talk on applications of geometric computing in industry. He gave examples from two domains, the parametric design of mechanical systems and the study of the structure and behaviors of proteins. I didn't follow the virus talk very well, but the issue seems to lie in understanding the icosahedral symmetry that characterizes many viruses. The common theme in the two applications is constraint resolution, a standard computational technique. In the design case, the problem is represented as a graph, and graph decomposition is used to create local plans. Arriving at a satisfactory design requires solving a system of constraint equations, locally and globally. A system of constraints is also used to model the features of a virus capsid and then solved to learn how the virus behaves.

Essential to both domains is the notion of a constraint solver. This won't be a surprise to folks who work on design systems, even CAD systems, but the idea that a biologist needs to work with a constraint solver might surprise many. Hoffman's take-home point was that we cannot do our work locked within our disciplinary boundaries, because we don't usually know where the most important connections lie.

The next two talks were by computer scientists working in industry. Both gave wonderful glimpses of how scientists work today and how computer scientists help them -- and are helping them redefine their disciplines.

First was Amar Kumar of Eli Lilly, who drew on his work in bioinformatics with biologists and chemists in drug discovery. He views his primary responsibility as helping scientists interpret their data.

The business model traditionally used by Lilly and other big pharma companies is unsustainable. If Lilly creates 10,000 new compounds, roughly 1,000 will show promise, 100 will be worth testing, and 1 will make it to market. The failure rate, the time required by the process, the cost of development -- all result in an unsustainable model.

Kumar's point is that Lilly and its scientists must undergo a transformation in thought process about how to find candidates and how to discover effects. He gave two examples. Biologists must move from "How does this particular gene respond to this particular drug?" to "How do all human genes respond to this panel of 35,000 drugs?" There are roughly 30,000 human genes, which means that the new question produces 1 billion data points. Similarly, drug researchers must move from "What does Alzheimer's do to the levels of amyloid protein in the brain?" to "When I compare a healthy patient with an Alzheimer's patient, what is the difference in the level of every brain-specific protein over time?" Again, the new question produces a massive number of data points.

Drug companies must ask new kinds of questions -- and design new ways to find answers. The new paradigm shifts the power from pure lab biologists to bioinformatics and statistics. This is a major shift in culture at a place like Lilly research labs. In terms of a table of gene/drug interactions, the first adjustment is from cell thinking (1 gene/1 drug) to column thinking (1 drug/all genes). Ultimately, Kumar believes, the next step -- to grid thinking (m drugs/n genes) and finding patterns throughout -- is necessary, too.
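To make the three ways of thinking concrete, here is a minimal Python sketch over a toy table. The gene names, drug names, response values, and function names are all invented for illustration; none of them come from Kumar's talk. The last line simply multiplies the two counts quoted above.

```python
# Toy gene/drug response table: rows are genes, columns are drugs.
# Every name and number here is made up for illustration.
genes = ["geneA", "geneB", "geneC"]
drugs = ["drug1", "drug2"]
response = [
    [0.1, 0.9],
    [0.4, 0.2],
    [0.8, 0.7],
]

def cell(gene, drug):
    """Cell thinking: one gene, one drug."""
    return response[genes.index(gene)][drugs.index(drug)]

def column(drug):
    """Column thinking: one drug across all genes."""
    j = drugs.index(drug)
    return {g: row[j] for g, row in zip(genes, response)}

def grid_hits(threshold):
    """Grid thinking: scan every gene/drug pair for a pattern (here, strong responses)."""
    return [(g, d)
            for g, row in zip(genes, response)
            for d, value in zip(drugs, row)
            if value >= threshold]

print(cell("geneA", "drug2"))
print(column("drug1"))
print(grid_hits(0.7))

# Size of the full grid for the figures quoted above: 30,000 genes x 35,000 drugs.
print(30_000 * 35_000)
```

The product is 1,050,000,000, which is where the "1 billion data points" figure comes from.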

What the bioinformatician can do is to help convert information into knowledge. Kumar said that a friend used to ask him what he would do with infinite computational power. He thinks the challenge these days is not to create more computational power. We already have more data in our possession than we know what to do with. More than more raw power, we need new ways to understand the data that we gather. For example, we need to use clustering techniques more effectively to find patterns in the data, to help scientists see the ideas. Scientists do this "in the small", by hand, but programs can do so much better. Kumar showed an example, a huge spreadsheet with a table of genes crossed with metabolites. Rather than look at the data in the small he converted the numbers to a heat map so that the scientist could focus on critical areas of relationship. That is a more fruitful way to identify possible experiments than to work through the rows of the table by hand.
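The heat map idea is easy to sketch, even in plain text. The following is only an illustration of the general technique, not a reconstruction of Kumar's tool: the function name `heat_map`, the five shade characters, and the sample table are my own assumptions.

```python
SHADES = " .:*#"  # lightest to darkest; an arbitrary choice of five levels

def heat_map(table):
    """Render a table of numbers as rows of shade characters."""
    flat = [v for row in table for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a constant table
    lines = []
    for row in table:
        chars = []
        for v in row:
            # Scale each value into 0..len(SHADES)-1 and pick the matching shade.
            level = int((v - lo) / span * (len(SHADES) - 1))
            chars.append(SHADES[level])
        lines.append("".join(chars))
    return lines

# Hypothetical genes-by-metabolites values.
for line in heat_map([[0, 5, 10], [10, 0, 5]]):
    print(line)
```

Even at this scale, the darkest cells stand out at a glance in a way that the raw numbers do not.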

Kumar suggests that future scientists require some essential computational skills:

- data integration (across data sets)

- data visualization

- clustering

- translation of problems from one space to another

- databases

- software development lifecycle

Do CS students learn about clustering as undergrads? Biologists need to. On the last two items, other scientists usually know remarkably little. Knowing a bit about the software lifecycle will help them work better with computer scientists. Knowing a bit about databases will help them understand the technology decisions the CS folks make. If all you know is a flat text file or maybe a spreadsheet, then you may not understand why it is better to put the data in a database -- and how much better that will support your work.

The second speaker was Bob Zigon from Beckman Coulter, a company that works in the area of flow cytometry. Most of us in the room didn't know that flow cytometry studies the properties of cells as they flow through a liquid. Zigon is a software tech lead for the development of flow cytometry tools. He emphasized that to do his job, he has to act like the lab scientists. He has to learn their vocabulary, how to run their equipment, how to build their instruments, and how to perform experiments. The software folks at Beckman Coulter spend a lot of time observing scientists.

... and students chuckle at me when I tell them psychology, anthropology, and sociology make great minors or double majors for CS students! My experience came in the world of knowledge-based systems, which require a deep understanding of the practice and implicit knowledge of domain experts. Back in the early 1990s, I remember AI researcher John McDermott, of R1/XCON fame, describing how his expert systems team had evolved toward cultural anthropology as the natural next step in their work. I think that all software folks must be able to develop a deep cultural understanding of the domains they work in, if they want to do their jobs well. As software development becomes more and more interdisciplinary, it becomes more important. Whether they learn these skills in the trenches or with some formal training is up to them.

Enjoying this sort of work helps a software developer, too. Zigon clearly does. He and his team implement computations and build interfaces to support the scientists who use flow cytometry to study blood cancer and other health conditions. He gave a great two-minute description of one of the basic processes that I can't do justice here. First they put blood into a tube that narrows down to the thickness of a hair. The cells line up, one by one. Then the scientists run the blood across a laser beam, which causes the cells to fluoresce. Hardware measures the fluorescent energy, and software digitizes it for analysis. The equipment processes 10k cells/second, resulting in 18 data points for each of anywhere between 1 and 20 million cells.
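A quick back-of-the-envelope calculation shows what those figures imply for a single run. The function name `run_size` is mine; the rate and the 18 values per cell are the numbers quoted above.

```python
def run_size(n_cells, values_per_cell=18, cells_per_second=10_000):
    """Return (total data values, acquisition time in seconds) for one run."""
    return n_cells * values_per_cell, n_cells / cells_per_second

for n_cells in (1_000_000, 20_000_000):
    values, seconds = run_size(n_cells)
    print(n_cells, values, seconds / 60)  # cells, data values, minutes
```

At the top of the range, one run yields 360 million values and takes a bit over half an hour of instrument time.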

What do scientists working in this area need? Data management across the full continuum: acquisition, organization, querying, and visualization. Eight years of research data amount to about 15 gigabytes. Eight years of pharmaceutical data reach 185 GB. And eight years of clinical data is 3 terabytes. Data is king.

Zigon's team moves all the data into relational databases, converting the data into fifth normal form to eliminate as much redundancy as possible. Their software makes the data available to the scientists for online transactional processing and online analytical processing. Even with large data sets and expensive computations, the scientists need query times in the range of 7-10 seconds.

With so much data, the need for ways to visualize data sets and patterns is paramount. In real time, they process 750 MB data sets at 20 frames per second. The biologists would still use histograms and scatter plots as their only graphical representations if the software guys couldn't do better. Zigon and his team build tools for n-dimensional manipulation and review of the data. They also work on data reduction, so that the scientists can focus on subsets when appropriate.

Finally, to help find patterns, they create and implement clustering algorithms. Many of the scientists tend to fall back on k-means clustering, but in highly multidimensional spaces that technique imposes a false structure on the data. They need something better, but the alternatives are O(n²) -- which is, of course, intractable on such large sets. So Zigon needs better algorithms and, contrary to Kumar's case, more computational power! At the top of his wish list are algorithms whose complexity scales to studying 15 million cells at a time and ways to parallelize these algorithms in cost- and resource-effective ways. Cluster computing is attractive -- but expensive, loud, hot, .... They need something better.
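To see why quadratic algorithms hurt at this scale, compare rough operation counts. This is only a sketch of the arithmetic: the choices of 10 clusters and 100 iterations for k-means are assumptions of mine, not figures from Zigon's talk.

```python
def pairwise_comparisons(n):
    """Number of distinct pairs among n points: the work an O(n^2) method implies."""
    return n * (n - 1) // 2

def kmeans_distance_evals(n, k, iterations):
    """Point-to-centroid distance evaluations for k-means: n * k per iteration."""
    return n * k * iterations

n_cells = 15_000_000
print(pairwise_comparisons(n_cells))            # 112499992500000
print(kmeans_distance_evals(n_cells, 10, 100))  # 15000000000
```

Under these assumptions the pairwise approach needs several thousand times more distance computations than k-means, before even counting the memory for the pairs.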

What else do scientists need? The requirements are steep. The ability to integrate cellular, proteomic, and genomic data. Usable HCI. On a more pedestrian tack, they need to replace paper lab notebooks with electronic notebooks. That sounds easy but laws on data privacy and process accountability make that a challenging problem, too. Zigon's team draws on work in the areas of electronic signatures, data security on a network, and the like.

From these two talks, it seems clear that domain scientists and computer scientists of the future will need to know more about the other discipline than may have been needed in the past. Computing is redefining the questions that domain scientists must ask and redefining the tasks performed by the CS folks. The domain scientists need to know enough about computer science, especially databases and visualization, to know what is possible. Computer scientists need to study algorithms, parallelism, and HCI. They also need to take more seriously the soft skills of communication and teamwork that we have been encouraging for many years now.

The Q-n-A session that followed pointed out an interesting twist on the need for communication. It seems that clustering algorithms are being reinvented across many disciplines. As each discipline encounters the need, the scientists and mathematicians -- and even computer scientists -- working in that area sometimes solve their problems from scratch without reference to the well-developed results on clustering and pattern recognition from CS and math. This seems like a potentially valuable place to initiate dialogue across the sciences in places looking to increase their interdisciplinary focus.

November 16, 2007 10:52 AM

Workshop 2: Exception Gnomes, Garbage Collection Fairies, and Problems

[A transcript of the SECANT 2007 workshop: Table of Contents]

Thursday afternoon at the NSF workshop saw a hodgepodge of sessions around the intersection of science ed and computing.

The first session was on computing courses for science majors. Owen Astrachan described some of the courses being taught at Duke, including his course using genomics as the source of problems to learn programming. He entertained us all with pictures of scientists and programmers, in part to demonstrate how many of the people who matter in the domains where real problems live are not computer geeks. The problems that matter in the world are not the ones that tend to excite CS profs...

Unbelievable but true! Not everyone knows about the Towers of Hanoi.

... or cares.

John Zelle described curriculum initiatives at Wartburg College to bring computing to science students. Wartburg has taken several small steps along the way:

- a more friendly CS1 course

- an introductory course in computational science

- integration of computing into the physics curriculum

- a CS senior project course that collaborates with the sciences

- (coming) a revision of the calculus sequence

At first, Zelle said a "CS1 course friendlier to scientists", but then he backed up to the more general statement. The idea of needing a friendlier intro course even for our majors is something many of us in CS have been talking about for a while, and something I wrote about a while back. I was also interested in hearing about Wartburg's senior projects. More recently, I wrote about project-based computer science education. Senior project courses are a great idea, and one that CS faculty can buy into at many schools. That makes it a good first step to perhaps changing the whole CS program, if a faculty were so inclined. The success of such project-centered courses is just about the only way to convince some faculty that a project focus is a good idea in most, if not all, CS courses.

Wartburg's computational science course covers many of the traditional topics, including modeling, differential equations, numerical methods, and data visualization. It also covers the basics of parallel programming, which is less common in such a course. Zelle argued that every computational scientist should know a bit about parallel programming, given the pragmatics of computing over massive data sets.

The second session of the afternoon dealt with issues of programming "in the small" versus "in the large". It seemed like a bit of a hodgepodge itself. The most entertaining of these talks was by Dennis Brylow of Marquette, called "Object Dis-Oriented". He said that his charge was to "take a principled stand that will generate controversy". Some in the room found this to be a novelty, but for Owen and me, and anyone in the SIGCSE crowd, it was another in a long line of anti-"objects first" screeds: Students can't learn how to decompose into methods until they know what goes into a method; students can't learn to program top-down, because then "it's all magic boxes".

I reported on a similarly entertaining panel at SIGCSE a couple of years ago. Brylow did give us something new, a phrase for the annals of anti-OO snake oil: students who learn OOP first see their programs as

... full of exception gnomes and garbage collection fairies.

Owen asked the natural question, reductio ad absurdum: Why not teach gates then? The answer from the choir around the room was, good point, we have to choose a level, but that level is below objects -- focused on "the fundamentals". Sigh. Abacus-early, anyone?

Brylow also offered a list of what he thinks we should teach first, which contains some important ideas: a small language, a heavy focus on data representation, functional decomposition, and the fundamental capabilities of machine computation.

This list tied well into the round-table discussion that followed, on what computational concepts science students should learn. I didn't get a coherent picture from this discussion, but one part stood out to me. Bruce Sherwood said that many scientists view analytical solution as privileged over simulation, because it is exact. He then pointed out that in some domains the situation is being turned on its head: a faithful discrete simulation is a more real depiction of the world than the closed-form analytical solution -- which is, in fact, only an approximation created at a time when our tools were more limited. The best quote of this session came from John Zelle: Continuity is a hack!

The day closed with another hodgepodge session on the role of data visualization. Ruth Chabay spoke about visualizing models, which in physics are as important as -- more important than!? -- data. Michael Coen gave a "great quote"-laden presentation on the question of whether computer science is the servant of science or the queen of the sciences, à la Gauss on math before him. Chris Hoffman gave a concise but convincing motivational talk:

- There is great power in visualizing data.

- With power comes risk, the risk of misleading.

- Visualization can be tremendously effective.

- Techniques for visual data analysis must account for the coming data deluge. (He gave some great examples...)

- The challenges of massive data are coming in all of the sciences.

When Coen's and Hoffman's slides become available on-line, I will point to them. They would be worth a glance.

Hodgepodge sessions and hodgepodge days are okay. Sometimes we don't know where the best connections will come from...

November 15, 2007 9:13 PM

Making Time to Do What You Love

Earlier this week, I read The Geomblog's A day in the life..., in which Suresh listed what he did on Monday. Research did not appear on the list.

I felt immediate and intense empathy. On Monday, I had spent all morning on our college's Preview Day, on which high school students who are considering studying CS at my university visit campus with their parents. It is a major recruiting effort in our college. I spent the early morning preparing my discussion with them and the rest of the morning visiting with them. The afternoon was full of administrative details, computer labs and registration and prospective grad students. On Tuesday, when I read the blog entry, I had taught compilers -- an oasis of CS in the midst of my weeks -- and done more administration: graduate assistantships, advising, equipment purchases, and a backlog of correspondence. Precious little CS in two days, and no research or other scholarly activity.

Alas, that is all too typical. Attending an NSF workshop this week is a wonderful chance to think about computer science, its application in the sciences, and how to teach it. Not research, but computer science. I only wish I had a week or five after it ends to carry to fruition some of the ideas swirling around my mind! I will have an opportunity to work more on some of these ideas when I return to the office, as a part of my department's curricular efforts, but that work will be spread over many weeks and months.

That is not the sort of intense, concentrated work that I and many other academics prefer to do. Academics are bred for their ability to focus on a single problem and work intensely on it for long periods of time. Then come academic positions that can spread us quite thin. An administrative position takes that to another level.

Today at the workshop, I felt a desire to bow down before an academic who understands all this and is ready to take matters into his own hands. Some folks were discussing the shortcomings of the current Mac OS X version of VPython, the installation of which requires X11, Xcode, and Fink. Bruce Sherwood is one of the folks in charge of VPython. He apologized for the state of the Mac port and explained that the team needs a Mac guru to build a native port. They are looking for one, but such folks are scarce. Never fear, though... If they can't find someone soon, Sherwood said,

I'm retiring so that I can work on this.

Now that is commitment to a project. We should all have as much moxie! What do you say, Suresh?

November 15, 2007 8:34 PM

Workshop 1: Creating a Dialogue Between Science and CS

[A transcript of the SECANT 2007 workshop: Table of Contents]

The main session this morning was on creating a dialogue between science and CS. There seems to be a consensus among scientists and computer scientists alike that the typical introductory computer science course is not what other science students need, but what do they need? (Then again, many of us in CS think that the typical introductory computer science course is not what our computer science students need!)

Bruce Sherwood, a physics professor at North Carolina State, addressed the question, "Why computation in physics?" He said that this was one prong in an effort to admit to physics students "that the 20th century happened". Apparently, this is not common enough in physics. (Nor in computer science!) To be authentic to modern practice, even intro physics must show theory, experiment, and computation. Physicists have to build computational models, because many of their theories are too complex or have no analytical solution, at least not a complete one.

What excited me most is that Sherwood sees computation as a means for communicating the fundamental principles of physics, even its reductionist nature. He gave as an example the time evolution of Newtonian synthesis. The closed form solution shows students the idea only at a global level. With a computer simulation, students can see change happen over time. Even more, it can be used to demonstrate that the theory supports open-ended prediction of future behavior. Students never really see this when playing with analytical equations. In Sherwood's words, without computation, you lose the core of Newtonian mechanics!
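Here is a minimal sketch of the kind of time-stepping loop this describes, in plain Python rather than VPython. The mass-on-a-spring system, the constants, and the name `simulate_spring` are my own illustrative assumptions, not something taken from Sherwood's talk. The loop applies the same two update rules over and over, and the closed-form answer is printed alongside for comparison.

```python
import math

def simulate_spring(k=1.0, m=1.0, x0=1.0, v0=0.0, dt=0.001, t_end=1.0):
    """Step a mass on a spring forward in time (a hypothetical example system).

    Each step applies the momentum principle: the force changes the momentum,
    and the updated momentum changes the position.
    """
    x, p = x0, m * v0
    steps = int(round(t_end / dt))
    for _ in range(steps):
        force = -k * x        # Hooke's law for the assumed spring
        p = p + force * dt    # update momentum from the net force
        x = x + (p / m) * dt  # update position from the new momentum
    return x

# Closed-form solution for comparison: x(t) = x0 * cos(sqrt(k/m) * t) when v0 = 0.
print(simulate_spring(), math.cos(1.0))
```

Nothing in the loop knows about cosines. Swap in a different force law and the same few lines keep predicting the future, which is the open-ended quality that the closed form hides.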

He even argued that physics students should learn to program. Why?

- So there are "no black boxes". He wants his students to program all the physics in their simulations.

- So that they see common principles, in the form of recurring computations.

- So that they can learn the relationship between the different representations they use: equations, code, animation, ...

More on science students and programming in a separate entry.

Useful links from his talk include comPADRE, a part of the National Science Digital Library for educational resources in physics and astronomy, and VPython, dubbed by supporters as "3D Programming for Ordinary Mortals". I must admit that the few demos and programs I saw today were way impressive.

The second speaker was Noah Diffenbaugh, a professor in earth and atmospheric sciences at Purdue. He views himself as a modeler dependent on computing. In the last year or so, he has collected 55 terabytes of data as a part of his work. All of his experiments are numerical simulations. He cannot control the conditions of the system he studies, so he models the system and runs experiments on the model. He has no alternative.

Diffenbaugh claims that anyone who wants a career in his discipline must be able to do computing -- as a consumer of tools, builder of models. He goes farther, calling himself a black sheep in his discipline for thinking that learning computing is critical to the intellectual development of scientists and non-scientists alike.

When most scientists talk of computation, they talk about a tool -- their tool -- and why it should be learned. They do not talk about principles of computing or the intellectual process one practices when writing a program. This concerns Diffenbaugh, who thinks that scientists must understand the principles of computing on which they depend, and that non-scientists must understand them, too, in order to understand the work of scientists. Of course, scientists are not the only ones who fixate on their computational tools to the detriment of discussing ideas. CS faculty do it, too, when they discuss CS1 in terms of the languages we teach. What's worse, though, is that some of us in CS do talk about principles of computing and intellectual process -- but only as the sheep's clothing that sneaks our favorite languages and tools and programming ideas into the course.

The session did include some computer scientists. Kevin Wayne of Princeton described an interdisciplinary "first course in computer science for the next generation of scientists and engineers". On his view, both computer science students and students of science and engineering are shortchanged when they do not study the other discipline. One of his colleagues (Sedgewick?) argues that there should be a common core in math, science, and computation for all science and engineering students, including CS.

What do scientists want in such a course? Wayne and his colleagues asked and found that they wanted the course to cover simulation, data analysis, scientific method, and transferrable programming skills (C, Perl, Matlab). That list isn't too surprising, even the fourth item. That is a demand that CS folks hear from other CS faculty and from industry all the time.

The course they have built covers the scientific method and a modern programming model built on top of Java. It is infused with scientific examples throughout. This includes not only examples from the hard sciences, such as sequence alignment, but also cool examples from CS, such as Google's PageRank scheme. In the course, they use real data and so experience the sensitivity to initial conditions in the models they build. He showed examples from financial engineering and political science, including the construction of a red/blue map of the US by percentage of the vote won by each candidate in each state. Data of this sort is available at National Atlas of the US, a data source I've already added to my list.

The fourth talk of the session was on the developing emphasis on modeling at Oberlin College, across all the sciences. I did not take as many notes on this talk, but I did grab one last link from the morning, to the Oberlin Center for Computation and Modeling. Occam -- a great acronym.

My main takeaway points from this session came from the talks by the scientists, perhaps because I know relatively less about what scientists think about and want from computer science. I found the examples they offered fascinating and their perspectives on computing to be surprisingly supportive. If these folks are at all representative, the dialogue between science and CS is ripe for development.

November 15, 2007 6:59 PM

Workshop Intro: Teaching Science and Computing

[A transcript of the SECANT 2007 workshop: Table of Contents]

I am spending today and tomorrow at an NSF Workshop on Science Education in Computational Thinking put on by SECANT, a group at Purdue University funded by a grant from NSF's Pathways to Revitalized Undergraduate Computing Education (CPATH) program. SECANT's goals are to build a community that is asking and answering questions such as these:

- What should science majors know about computing?

- How can computer science be used to teach science?

- Can we integrate computer science effectively into other majors?

- What will the implications of answers to these questions be for how we teach computer science and engineering themselves?

The goal of this workshop is to begin building a community, to share ideas and to make connections. I'll share in my next few entries some of the ideas I encounter here, as well as some of the thoughts I have along the way. This entry is mostly about the background of the workshop and a few miscellaneous impressions.

First, I am impressed with the wide range of attendees. Folks come from big state schools such as Ohio State, Purdue, and Iowa, from private research schools such as Princeton and Notre Dame, and from small liberal arts schools such as Wartburg and Kalamazoo.

We started with introductions from the workshop organizers at Purdue and the NSF itself. Joseph Urban from NSF spoke a bit about the challenges addressed by the CPATH program. I think its most interesting goal is to move "beyond curriculum revision" to "institution transformation models" -- avoiding the curse of incremental change. This reminded me of something that Guy Kawasaki said in his talk The Art of Innovation: Revolution, then evolution. To completely change how we teach sciences and intro computer science -- revolution first, or evolution? Given the deep strain of academic conservatism that dominates most colleges and universities, this raises an interesting question about which approach will work best. From what I've seen here today, different schools are trying each, with various levels of success.

The introductory remarks by Jeff Vitter, dean of the College of Sciences -- and a computer scientist by training -- included a comment that is a theme underlying this workshop and driving the scientists who are here to explore computer science more deeply: Computing is now a fundamental component in the cycle of science: theory followed by experimentation. For many scientists, building models is the next step after experiment, or even a hand-in-hand partner to experiment. For many scientists, visualizing the results of experiments is essential -- we cannot understand them otherwise.

The workshop made a few personal connections for me. Also in attendance are neighbors of mine, Alberto Segre from Iowa and John Zelle from Wartburg College. But there are connections to my past, too. Another attendee is an old grad school colleague of mine, Pat Flynn, who is now at Notre Dame. Finally, from Urban's NSF presentation I learned that one of the big CPATH awards was made to a team at Michigan State -- including my old advisor. I'm not too surprised that his professional interests have evolved in this direction, though he might be.

Some here expressed surprise that so many folks are already doing interesting work in this arena. I wasn't, because there's been a lot of buzz in the last couple of years, but I was interested to see the diversity of new courses and new programs already in place. That is, of course, one of the great benefits of attending workshops such as this one.

November 12, 2007 7:27 AM

Notes to My Bloglines Readers

My apologies to the 130-odd of you who read this blog via Bloglines. A couple of you have alerted me to a technical issue with links disappearing from my posts when you read Knowing and Doing through the Bloglines interface. The problem is intermittent, which makes it frustrating for you all and harder for me to track down.

I've validated my RSS feed at http://feedvalidator.org and looked for some clues in the HTML source. No luck. At this point, I have asked the folks at Bloglines to see if they can find something in my feed that interacts badly with their software. I'll keep you posted.

November 10, 2007 4:21 PM

Programming Challenges

Gerald Weinberg's recent blog Setting (and Character): A Goldilocks Exercise describes a writing exercise that I've mentioned here as a programming exercise, a pedagogical pattern many call Three Bears. This is yet another occurrence of a remarkably diverse pattern.

Weinberg often describes exercises that writers can use to free their minds and words. It doesn't surprise me that "freeing" exercises are built on constraints. In one post, Weinberg describes The Missing Letter, in which the writer writes (or rewrites) a passage without using a randomly chosen letter. The most famous example of this form, known as a lipogram, is La disparition, a novel written by Georges Perec without using the letter 'e' -- except to spell the author's name on the cover.

When I read that post months ago, I immediately thought of creating programming exercises of a similar sort. As I quoted someone in a post on a book about Open Court Publishing, "Teaching any skill requires repetition, and few great works of literature concentrate on long 'e'." We can design a set of exercises in which the programmer surrenders one of her standard tools. For instance, we could ask her to write a program to solve a given problem, but...

- with no if statements. This is exactly the idea embodied in the Polymorphism Challenge that my friend Joe Bergin and I used to teach a workshop at SIGCSE a couple of years ago and which I often find useful in helping programmers new to OOP see what is possible.

- with no for statements. I took a big step in understanding how objects worked when I realized how the internal iterators in Smalltalk's collection classes let me solve repetition tasks with a single message -- and a description of the action I wanted to take. It was only many years later that I learned the term "internal iterator" or even just "iterator", but by then the idea was deeply ingrained in how I programmed.

Recursion is the usual path for students to learn how to repeat actions without a for statement, but I don't think most students get recursion the way most folks teach it. Learning it with a rich data type makes a lot more sense.

- with no assignment statements. This exercise is a double-whammy. Without assignment statements, there is no call for explicit sequences of statements, either. This is, of course, what pure functional programming asks of us. Writing a big app in Java or C using a pure functional style is wonderful practice.

- with no base types. I nearly wrote about this sort of exercise a couple of years ago when discussing the OOP anti-pattern Primitive Obsession. If you can't use base types, all you have left are instances of classes. What objects can do the job for you? In most practical applications, this exercise ultimately bottoms out in a domain-specific class that wraps the base types required to make most programming languages run. But it is a worthwhile practice exercise to see how long one can put off referring to a base type and still make sense. The overkill can be enlightening.
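Here is a minimal sketch of the first two constraints, written in Python rather than the Java or Smalltalk mentioned above. The class and function names (Circle, Rectangle, total_area) are hypothetical, invented only for illustration; this is not the code from the Polymorphism Challenge workshop. Python's sum and map stand in, loosely, for the kind of internal iterator that Smalltalk's collections provide.

```python
import math


class Circle:
    def __init__(self, radius):
        self.radius = radius

    def area(self):
        return math.pi * self.radius ** 2


class Rectangle:
    def __init__(self, width, height):
        self.width = width
        self.height = height

    def area(self):
        return self.width * self.height


def total_area(shapes):
    # No if statement: each object chooses its own formula through
    # dynamic dispatch on area().
    # No for statement: sum and map walk the collection for us; we only
    # describe the action to take on each element.
    return sum(map(lambda shape: shape.area(), shapes))


print(total_area([Rectangle(2, 3), Rectangle(1, 4)]))  # prints 10
```

Adding a new kind of shape means adding a class, not editing a chain of conditionals, which is much of what the exercise is meant to reveal.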

Of course, one can start with a language that provides only the most meager set of base types, thus forcing one to build up nearly all the abstractions demanded by a problem. Scheme feels like that to most students, but only a few of mine seem to grok how much they can learn about programming by working in such a language. (And it's of no comfort to them that Church built everything out of functions in his lambda calculus!)
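To make the Church reference concrete, here is a small sketch of Church numerals, in which a number n is represented as "apply a function n times". It is written in Python only for convenience; the names zero, succ, add, mul, and to_int are the conventional ones, chosen here for illustration. The helper to_int falls back on a built-in integer, but only so that we can inspect the result.

```python
# Church numerals: the number n is the function that applies f n times.
zero = lambda f: lambda x: x
succ = lambda n: lambda f: lambda x: f(n(f)(x))
add = lambda m: lambda n: lambda f: lambda x: m(f)(n(f)(x))
mul = lambda m: lambda n: lambda f: m(n(f))


def to_int(n):
    # Escape hatch back to a base type, used only to look at the answer.
    return n(lambda k: k + 1)(0)


one = succ(zero)
two = succ(one)
three = add(one)(two)

print(to_int(mul(two)(three)))  # prints 6
```

Everything above to_int is built from one-argument functions alone, with no numbers, booleans, or loops in sight.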

This list operates at the level of programming construct. It is just the beginning of the possibilities. Another approach would be to forbid the use of a data structure, a particularly useful class, or an individual base type. One could even turn this idea into a naming challenge by hewing close to Weinberg's exercise and forbidding the use of a selected letter in identifiers. As an instructor, I can design an exercise targeted at the needs of a particular student or class. As a programmer, I can design an exercise targeted at my own blocks and weaknesses. Sometimes, it's worth dusting off an old challenge and doing it for its own sake, just to stay sharp or shake off some rust.
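The naming challenge is easy to check mechanically. What follows is one possible sketch, assuming the programs under test are written in Python; the function name identifiers_using is hypothetical. It uses the standard ast module to collect variable, function, class, and parameter names and reports those that contain the forbidden letter. It flags built-ins such as len, too, so a stricter or looser rule would need a small change.

```python
import ast


def identifiers_using(source, letter):
    """Return the sorted names in `source` that contain `letter`."""
    tree = ast.parse(source)
    names = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            names.add(node.id)            # variables and called functions
        elif isinstance(node, (ast.FunctionDef, ast.ClassDef)):
            names.add(node.name)          # definitions
        elif isinstance(node, ast.arg):
            names.add(node.arg)           # parameters
    return sorted(name for name in names if letter in name.lower())


sample = (
    "def total(items):\n"
    "    count = 0\n"
    "    return count + len(items)\n"
)

print(identifiers_using(sample, "e"))  # prints ['items', 'len']
```

The checker itself leans on if and for, of course. Rewriting it without them would make a fine follow-up exercise.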

Posted by Eugene Wallingford | Permalink | Categories: Computing, Patterns, Software Development, Teaching and Learning

November 07, 2007 8:02 PM

Magic Books and Connections to Software

Lest you all think I have strayed so far from software and computer science with my last note that I've fallen off the appropriate path for this blog, let me reassure you. I have not. In fact, there is even a connection between my last post and the world of software, though it is sideways.

Richard Bach, the writer whom I quoted last time, is best known for his bestselling Jonathan Livingston Seagull. I read a lot of his stuff back in high school and college. It is breezy pop philosophy wrapped around thin plots, which offers some deep truths that one finds in Hinduism and other Eastern philosophies. I enjoyed his books, including his more straightforward books on flying small planes.

But one of Richard Bach's sons is James, a software tester with whose work I came into contact via Brian Marick's. James is a good writer, and I enjoy both his blog and his other writings about software, development methods, and testing. Another of Richard Bach's sons, Jon, is also a software guy, though I don't know about his work. I think that James and Jon have published together.

Illusions offers a book nested inside another book -- a magic book, no less. All we see of it are the snippets that our protagonist needs to read each moment he opens it. One of the snippets from this book-within-a-book might be saying something important about an ongoing theme here:

There is no such thing as a problem without a gift for you in its hands. You seek problems because you need their gifts.

Here is the magic page that grabbed me most as I thumbed through the book again this morning:

Live never to be ashamed if anything you do or say is published around the world -- even if what is published is not true.

Now that is detachment.

How about one last connection? This one is not to software. It is an unexpected connection I discovered between Bach's work and my life after I moved to Iowa. The title character in Bach's most famous book was named for a real person, John Livingston, a world-famous pilot circa 1930. He was born in Cedar Falls, Iowa, my adopted hometown, and once even taught flying at my university, back when it was a teachers' college. The terminal of the local airport, which he once managed, is named for Livingston. I have spent many minutes waiting to catch a plane there, browsing pictures and memorabilia from his career.

November 07, 2007 7:45 AM

Magic Books

Last Saturday morning, I opened a book at random, just to fill some time, and ended up writing a blog entry on electronic communities. It was as if the book were magic... I opened to a page, read a couple of sentences, and was launched on what seemed like the perfect path for that morning. That experience echoed one of the things Vonnegut himself has often said: there is something special about books.

This is one reason that I don't worry about getting dumber by reading books, because for me books have always served up magic.

I remember reading just that back in high school, in Richard Bach's Illusions:

I noticed something strange about the book. "The pages don't have numbers on them, Don."

"No," he said. "You just open it and whatever you need most is there."

"A magic book!"

These days, I often have just this experience on the web, as I read blogs and follow links off to unexpected places. An academic book or conference proceedings can do the same. Bach would have said, "But of course."

"No you can do it with any book. You can do it with an old newspaper, if you read carefully enough. Haven't you done that, hold some problem in your mind, then open any book handy and see what it tells you?"

I do that sometimes, but I'm just as likely to catch a little magic when my mind is fallow, and I grab a paper off one of my many stacks for a lunch jaunt. Holding a particular problem in my mind sometimes puts too much pressure on whatever might happen.

Indeed, this comes back to the theme of the article I wrote on Saturday morning. On one hand there are traditional media and traditional communities, and on the other are newfangled electronic media and electronic communities. The traditional experiences often seem to hold some special magic for us. But the magic is not in any particular technology; it is in the intersection between ideas out there and our inner lives.

When I feel something special in the asynchronicity of a book's magic, and think that the predetermination of an RSS feed makes it less spontaneous, that just reflects my experience, maybe my lack of imagination. If I look back honestly, I know that I have stumbled across old papers and old blog posts and old web pages that served up magic to me in much the same way that books have done. And, like electronic communities, the digital world of information creates new possibilities for us. A book can be magic for me only if I have a copy handy. On the web, every article is just a click away. That's a powerful new sort of magic.

November 06, 2007 6:53 AM

Lack of Confidence and Teamwork