August 31, 2012 3:22 PM

Two Weeks Along the Road to OOP

The month has flown by, preparing for and now teaching our "intermediate computing" course. Add to that a strange and unusual set of administrative issues, and I've found no time to blog. I did, however, manage to post what has become my most-retweeted tweet ever:

I wish I had enough money to run Oracle instead of Postgres. I'd still run Postgres, but I'd have a lot of cash.

That's an adaptation of a tweet originated by @petdance and retweeted my way by @logosity. I polished it up, sent it off, and -- it took off for the sky. It's been fun watching its ebb and flow, as it reaches new sub-networks of people. From this experience I must learn at least one lesson: a lot of people are tired of sending money to Oracle.

The first two weeks of my course have led the students a few small steps toward object-oriented programming. I am letting the course evolve, with a few guiding ideas but no hard-and-fast plan. I'll write about the course's structure after I have a better view of it. For now, I can summarize the first four class sessions:

- Run a simple "memo pad" app, trying to identify behavior (functions) and state (persistent data). Discuss how different groupings of the functions and data might help us to localize change.

- Look at the code for the app. Discuss the organization of the functions and data. See a couple of basic design patterns, in particular the separation of model and view.

- Study the code in greater detail, with a focus on the high-level structure of an OO program in Java.

- Study the code in greater detail, with a focus on the lower-level structure of classes and methods in Java.
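To make the separation of model and view from the second session concrete, here is a minimal Java sketch. It is not the course's memo pad code, which this post does not show; the class names `MemoPad` and `MemoPadTextView` and all of their methods are assumptions made up for illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Model (hypothetical): owns the state (the memos) and the behavior that changes it.
class MemoPad {
    private final Map<String, String> memos = new LinkedHashMap<>();

    void insert(String key, String text) { memos.put(key, text); }

    // Returns null when no memo is stored under the key.
    String find(String key) { return memos.get(key); }

    // Returns true only if a memo was actually removed.
    boolean delete(String key) { return memos.remove(key) != null; }

    int size() { return memos.size(); }
}

// View (hypothetical): knows how to present a memo, nothing about how memos are stored.
class MemoPadTextView {
    String render(String key, String text) {
        return text == null ? key + ": (no memo)" : key + ": " + text;
    }
}
```

With this grouping, a change to how memos are stored touches only `MemoPad`, and a change to how they are displayed touches only the view. That is the kind of localized change the first session asks students to think about.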

The reason we can spend so much time talking about a simple program is that students come to the course without (necessarily) knowing any Java. Most come with knowledge of Python or Ada, and their experiences with such different languages create an interesting space in which to encounter Java. Our goal this semester is for students to learn their second language as much as possible, rather than having me "teach" it to them. I'm trying to expose them to a little more of the language each day, as we learn about design in parallel. This approach works reasonably well with Scheme and functional programming in a programming languages course. I'll have to see how well it works for Java and OOP, and adjust accordingly.

Next week we will begin to create things: classes, then small systems of classes. Homework 1 has them implementing a simple array-based class to an interface. It will be our first experience with polymorphic objects, though I plan to save that jargon for later in the course.
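The post does not say what Homework 1 actually specifies, so the following is only a sketch of the general shape of "an array-based class written to an interface". The interface `IntBag` and the class `ArrayIntBag` are hypothetical names chosen for this example.

```java
import java.util.Arrays;

// Hypothetical interface: the contract that client code programs against.
interface IntBag {
    void add(int value);
    boolean contains(int value);
    int size();
}

// Array-based implementation (hypothetical); doubles its backing array when full.
class ArrayIntBag implements IntBag {
    private int[] items = new int[4];
    private int count = 0;

    @Override
    public void add(int value) {
        if (count == items.length) {
            items = Arrays.copyOf(items, items.length * 2);
        }
        items[count++] = value;
    }

    @Override
    public boolean contains(int value) {
        // Only the first `count` slots hold real data.
        for (int i = 0; i < count; i++) {
            if (items[i] == value) return true;
        }
        return false;
    }

    @Override
    public int size() {
        return count;
    }
}
```

Client code that declares `IntBag bag = new ArrayIntBag();` never mentions the array. A second implementation could later stand in without changing that client, which is the polymorphism in question even before the word is used in class.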

Finally, this is the new world of education: my students are sending me links to on-line sites and videos that have helped them learn programming. They want me to check them out and share them with the other students. Today I received a link to The New Boston, which has among its 2500+ videos eighty-seven beginning Java and fifty-nine intermediate Java titles. Perhaps we'll come to a time when I can out-source all instruction on specific languages and focus class time on higher-level issues of design and programming...

Posted by Eugene Wallingford | Permalink | Categories: General, Software Development, Teaching and Learning

August 27, 2012 12:53 PM

My Lack of Common Sense

In high school, I worked after school doing light custodial work for a local parochial school for a couple of years. One summer, a retired guy volunteered to lead me and a couple of other kids doing maintenance projects at the school and church.

One afternoon, he found me trying to loosen the lid on a paint can using one of my building keys. Yeah, that was stupid. He looked at me as if I were an alien, got a screwdriver, and opened the can.

Later that summer, I overheard him talking to the secretary. She asked how I was working out, and he said something to the effect of "nice kid, but he has no common sense".

That stung. He was right, of course, but no one likes to be thought of as not capable, or not very smart. Especially someone who likes to be someone who knows stuff.

I still remember that eavesdropped conversation after all these years. I knew just what he meant at the time, and I still do. For many years I wondered, what was wrong with me?

It's true that I didn't have much common sense as a handyman back then. To be honest, I probably still don't. I didn't have much experience doing such projects before I took that job. It's not something I learned from my dad. I'd never seen a bent key before, at least not a sturdy house key or car key, and I guess it didn't occur to me that one could bend.

The A student in me wondered why I hadn't deduced the error of my ways from first principles. As with the story of Zog, it was immediately obvious as soon as it was pointed out to me. Explanation-based learning is for real.

Over time, though, I have learned to cut myself some slack. Voltaire was right: Common sense is not so common. These days, people often say that to mean there are far too many people like me who don't have the sense to come in out of the rain. But, as the folks at Wikipedia recognize, that sentence can mean something else even more important. Common sense isn't shared whenever people have not had the same experiences, or have not learned it some other way.

Maybe there are still some things that most of us can count on as common, by virtue of living in a shared culture. But I think we generally overestimate how much of any given person's knowledge is like that. With an increasingly diverse culture, common experience and common cultural exposure are even harder to come by.

That gentleman and secretary probably forgot about their conversation within minutes, but the memory of his comment still stings a little. I don't think I'd erase the memory, though, even if I could. Every so often, it reminds me not to expect my students to have too much common sense about programs or proofs or programming languages or computers.

Maybe they just haven't had the right experiences yet. It's my job to help them learn.

August 13, 2012 3:56 PM

Lessons from Unix for OO Design

Pike and Kernighan's Program Design in the UNIX Environment includes several ideas I would like for my students to learn in my Intermediate Computing course this fall. Among them:

... providing the function in a separate program makes convenient options ... easier to invent, because it isolates the problem as well as the solution.

In OO, objects are the packages that create possibilities for us. The beauty of this lesson is the justification: because a class isolates the problem as well as the solution.

This solution affects no other programs, but can be used with all of them.

This is one of the great advantages of polymorphic objects.

The key to problem-solving on the UNIX system is to identify the right primitive operations and to put them at the right place.

Methods should live in the objects whose data they manipulate. One of the hard lessons for novice OO programmers coming from a procedural background is putting methods with the thing, not a faux actor.
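A small Java sketch of "putting methods with the thing", using a made-up `Rectangle` class (nothing here comes from the paper or the course):

```java
// Hypothetical example: the data and the behavior that uses it live together.
class Rectangle {
    private final double width;
    private final double height;

    Rectangle(double width, double height) {
        this.width = width;
        this.height = height;
    }

    // The rectangle answers questions about itself...
    double area() {
        return width * height;
    }

    // ...and builds related rectangles from its own data.
    Rectangle scaledBy(double factor) {
        return new Rectangle(width * factor, height * factor);
    }
}

// The "faux actor" alternative would be something like a separate
// AreaCalculator class with a method area(Rectangle r) that pulls width
// and height out through getters. The data then sits in one class and
// the logic in another, so a change to the representation ripples outward.
```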

UNIX programs tend to solve general problems rather than special cases.

Objects that are too specific should be rare, at least for beginning programmers. Specificity in interface often indicates that implementation detail is leaking out.

Merely adding features does not make it easier for users to do things -- it just makes the manual thicker.

Keep objects small and focused. A big interface is often evidence of an object waiting to be born.

~~~~

In many ways, The Unix Way is contrary to object-oriented programming. Or so many of my Linux friends tell me. But I'm quite comfortable with the parallels found in these quotes, because they are more about good design in general than about Unix or OOP themselves.

August 09, 2012 1:36 PM

Sentences to Ponder

In Why Read?, Mark Edmundson writes:

A language, Wittgenstein thought, is a way of life. A new language, whether we learn it from a historian, a poet, a painter, or a composer of music, is potentially a new way to live.

Or from a programmer.

In computing, we sometimes speak of Perlis languages, after one of Alan Perlis's best-known epigrams: A language that doesn't affect the way you think about programming is not worth knowing. A programming language can change how we think about our craft. I hope to change how my students think about programming this fall, when I teach them an object-oriented language.

But for those of us who spend our days and nights turning ideas into programs, a way of thinking is akin to a way of life. That is why the wider scope of Wittgenstein's assertion strikes me as so appropriate for programmers.

Of course, I also think that programmers should follow Edmundson's advice and learn new languages from historians, writers, and artists. Learning new ways to think and live isn't just for humanities majors.

(By the way, I'm enjoying reading Why Read? so far. I read Edmundson's Teacher many years ago and recommend it highly.)

August 08, 2012 1:50 PM

Examples First, Names Last

Earlier this week, I reviewed a draft chapter from a book a friend is writing, which included a short section on aspect-oriented programming. The section used common AOP jargon: "cross cutting", "advice", and "point cut". I know enough about AOP to follow his text, but I figured that many of his readers -- young software developers from a variety of backgrounds -- would not. On his use of "cross cutting", I commented:

Your ... example helps to make this section concrete, but I bet you could come up with a way of explaining the idea behind AOP in a few sentences that would be (1) clear to readers and (2) not use "cross cutting". Then you could introduce the term as the name of something they already understand.

This may remind you of the famous passage from Richard Feynman about learning names and understanding things. (It is also available on a popular video clip.) Given that I was reviewing a chapter for a book of software patterns, it also brought back memories of advice that Ralph Johnson gave many years ago on the patterns discussion list. Most people, he said, learn best from concrete examples. As a result, we should write software patterns in such a way that we lead with a good example or two and only then talk about the general case. In pattern style, he called this idea "Concrete Before Abstract".

I try to follow this advice in my teaching, though I am not dogmatic about it. There is a lot of value in mixing up how we organize class sessions and lectures. First, different students connect better with some approaches than others, so variety increases the chances of connecting with everyone a few times each semester. Second, variety helps to keep students interested, and being interested is a key ingredient in learning.

Still, I have a preference for approaches that get students thinking about real code as early as possible. Starting off by talking about polymorphism and its theoretical forms is a lot less effective at getting the idea across to undergrads than showing students a well-chosen example or two of how plugging a new object into an application makes it easier to extend and modify programs.
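Here is the sort of small example I have in mind, sketched in Java. All of the names (`MessageStyle`, `PlainStyle`, `StarredStyle`, `Announcer`) are hypothetical and invented for this illustration.

```java
// Hypothetical plug-in point: anything that can style a message.
interface MessageStyle {
    String apply(String text);
}

class PlainStyle implements MessageStyle {
    @Override
    public String apply(String text) {
        return text;
    }
}

// A new behavior added later, without editing Announcer.
class StarredStyle implements MessageStyle {
    @Override
    public String apply(String text) {
        return "*" + text + "*";
    }
}

// The "application": it knows only the interface, not which style it was given.
class Announcer {
    private final MessageStyle style;

    Announcer(MessageStyle style) {
        this.style = style;
    }

    String announce(String message) {
        return "[" + style.apply(message) + "]";
    }
}
```

Swapping `new Announcer(new PlainStyle())` for `new Announcer(new StarredStyle())` changes the program's behavior without touching `Announcer`. Students can see that happen before anyone says "polymorphism".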

So, right now, I have "Concrete Before Abstract" firmly in mind as I prepare to teach object-oriented programming to our sophomores this fall.

Classes start in twelve days. I figured I'd be blogging more by now about my preparations, but I have been rethinking nearly everything about the way I teach the course. That has left my mind more muddled than settled for long stretches. Still, my blog is my outboard brain, so I should be rethinking more in writing.

I did have one crazy idea last night. My wife learned Scratch at a workshop this summer and was talking about her plans to use it as a teaching tool in class this fall. It occurred to me that implementing Scratch would be a fun exercise for my class. We'll be learning Java and a little graphics programming as a part of the course, and conceptually Scratch is not too many steps from the pinball game construction kit in Budd's Understanding Object-Oriented Programming with Java, the textbook I have used many times in the course. I'm guessing that Budd's example was inspired by Bill Budge's game for Electronic Arts, Pinball Construction Set. (Unfortunately, Budd's text is now almost as out of date as that 1983 game.)

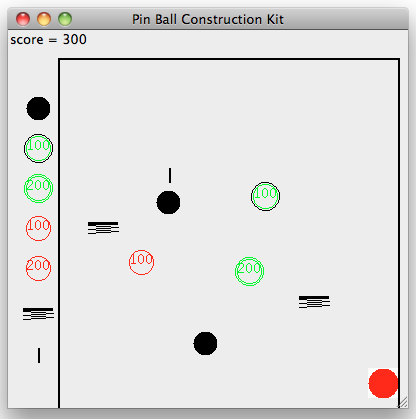

Here is an image of a game constructed using the pinball kit and Java's AWT graphics framework:

The graphical ideas needed to implement Scratch are a bit more complex, including at least:

- The items on the canvas must be clickable and respond to messages.

- Items must be able to "snap" together to create units of program. This could happen when a container item such as a choice or loop comes into contact with an item it is to contain.

The latter is an extension of collision-detecting behavior that students would be familiar with from earlier "ball world" examples. The former is something we occasionally do in class anyway; it's awfully handy to be able to reconfigure the playing field after seeing how the game behaves with the ball in play. The biggest change would be that the game items are little pieces of program that know how to "interpret" themselves.
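Leaving the graphics aside, the idea of items that "interpret" themselves might be sketched in Java like this. It is only a rough guess at a design, not code from Budd's kit or from Scratch; `Block`, `SayBlock`, `RepeatBlock`, and the `snap` method are all hypothetical names, and output goes to a `StringBuilder` rather than a canvas.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical: every item on the canvas is a little piece of program.
interface Block {
    void interpret(StringBuilder output);
}

// A simple item: appends its text as one line of output.
class SayBlock implements Block {
    private final String text;

    SayBlock(String text) {
        this.text = text;
    }

    @Override
    public void interpret(StringBuilder output) {
        output.append(text).append('\n');
    }
}

// A container item: other blocks "snap" into its body.
class RepeatBlock implements Block {
    private final int times;
    private final List<Block> body = new ArrayList<>();

    RepeatBlock(int times) {
        this.times = times;
    }

    // In a graphical version, collision detection would trigger this call.
    RepeatBlock snap(Block child) {
        body.add(child);
        return this;
    }

    @Override
    public void interpret(StringBuilder output) {
        for (int i = 0; i < times; i++) {
            for (Block child : body) {
                child.interpret(output);
            }
        }
    }
}
```

Because a `RepeatBlock` is itself a `Block`, loops can snap inside other loops with no extra code, and running a program is just a matter of asking the outermost block to interpret itself.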

As always, the utility of a possible teaching idea lies in the details of implementing it. I'll give it a quick go over the next week to see if it's something I think students would be able to handle, either as a programming assignment or as an example we build and discuss in class.

I'm pretty excited by the prospect, though. If this works out, it will give me a nice way to sneak basic language processing into the course in a fun way. CS students should see and think about languages and how programs are processed throughout their undergrad years, not only in theory courses and programming languages courses.

August 03, 2012 3:23 PM

How Should We Teach Algebra in 2012?

Earlier this week, Dan Meyer took to task a New York Times opinion piece from the weekend, Is Algebra Necessary?:

The interesting question isn't, "Should every high school graduate in the US have to take Algebra?" Our world is increasingly automated and programmed and if you want any kind of active participation in that world, you're going to need to understand variable representation and manipulation. That's Algebra. Without it, you'll still be able to clothe and feed yourself, but that's a pretty low bar for an education. The more interesting question is, "How should we define Algebra in 2012 and how should we teach it?" Those questions don't even seem to be on Hacker's radar.

"Variable representation and manipulation" is a big part of programming, too. The connection between algebra and programming isn't accidental. Matthias Felleisen won the ACM's Outstanding Educator Award in 2010 for his long-term TeachScheme! project, which has now evolved into Program by Design. In his SIGCSE 2011 keynote address, Felleisen talked about the importance of a smooth progression of teaching languages. Another thing he said in that talk stuck with me. While talking about the programming that students learned, he argued that this material could be taught in high school right now, without displacing as much material as most people think. Why? Because "This is algebra."

Algebra in 2012 still rests fundamentally on variable representation and manipulation. How should we teach it? I agree with Felleisen. Programming.