April 30, 2011 10:05 AM

A Shock to the System

I received some bad news from the doctor yesterday. It looks like my running career is over.

As I wrote earlier this month, I haven't run since March 4, when I came down with the flu. As that was ending, my right knee started to hurt and swell, though I was not aware of any injury or trauma that might have caused the symptoms.

In the few weeks since that entry, the pain and swelling have decreased but not disappeared. We finally had an MRI done on Thursday so that an orthopedic surgeon could examine me yesterday.

The diagnosis: Osteochondritis dissecans (OCD). This is a condition in which articular cartilage in a joint and the bone to which it attaches crack or pull away from the rest of the bone. OCD occurs when the subchondral bone is deprived of blood. As near as I can tell from my reading thus far, the cause of the blood deprivation itself is less well understood.

Depending on the size of the lesion and the state of the bone tissue, there are several potential reparative and restorative steps that my surgeon can take. Unfortunately, even with the best outcomes, the new tissue is more fragile than the original tissue and usually is not able to withstand high-impact activity over a long period.

That's where we get to the bad news. I almost surely cannot run any more.

After the doctor told me his diagnosis and showed me the MRI, he said, "You're taking this awfully well." To be honest, as the doctor and I talked about this, it felt as if we were discussing someone else. I'm not the sort of person who tends to show a lot of emotion in such situations anyway, but in this case the source of my dispassion was easy enough to see. In an instant, I was jolted from trying to get better to never getting better. On top of that jolt, it wasn't all that long ago that I went from running 30+ peaceful miles a week to not running at all. I was stunned.

On the walk back to my office, my conscious and subconscious minds began to process the news, trying to make sense of it. I have had a lot of thoughts since then. My first was that there was still a small chance that the lesion wouldn't look so bad under the scope, that it could heal and that I would be back on the road soon. I chuckled when I realized that I had already entered the first stage of grief, denial. That small chance does exist, but it is not a rational one on which to plan my future. The expected value of this condition is much closer to long-term problems with the knee than to "yeah, I'm running again". I chuckled because my mind was so predictable.

Not being able to run is a serious lifestyle change for someone who has run 13,000 miles in the last eight years. It also means that I will have to make other changes to my lifestyle. My modest hope is that eventually I will still be able to take walks with my wife. In the grand scheme, I would probably miss those more than I miss the running.

I'm also going to have to change my diet. As a runner, I have been able to consume a lot of calories, and it will be impossible to burn that many calories without running or other high-impact exercise. This may actually be a good thing for my health, as strange as that may sound. Burning 4000 extra calories a week covers up a multitude of eating sins. I'll have to do the right thing now. Maybe this is what people mean when they see a misfortune as an opportunity?

I've had only two really sad thoughts since hearing the news. First, I wish I had known that my last run was going to be my last run. Perhaps I could have savored it a bit more. As it is, it's already receding into the fogginess of my memory. Second, I wonder what this will mean for my sense of identity. For the last decade, I have been a runner. Being a runner was part of who I was. That is probably gone now.

Of course, when I put this into context, it's not all that bad. There could have been much worse diagnoses with much more dire consequences for my future. Much worse events could have happened to me or a loved one. As one of my followers on Twitter recently put it, this is a First World Problem: a relatively healthy, economically and politically secure white male won't be able to spend hours each week indulging himself in frivolous athletic activity for purely personal gain. I think I'll survive.

Still, it's a shock, one that I may not get used to for a while. I'm not a runner any more.

April 27, 2011 6:12 PM

Teachers and Mentors

I'm fortunate to have good relationships with a number of former students, many of whom I've mentioned here over the years. Some are now close friends. To others, I am still their teacher, and we interact in much the same way as we did in the classroom. They keep me apprised of their careers and occasionally ask for advice.

I'm honored to think that a few former students might think of me as their mentor.

Steve Blank recently captured the difference between teacher and mentor as well as I've seen. Reflecting back to the beginning of his career, he considered what made his relationships with his mentors different from the relationship he had with his teachers, and different from the relationship his own students have with him now. It came down to this:

I was giving as good as I was getting. While I was learning from them -- and their years of experience and expertise -- what I was giving back to them was equally important. I was bringing fresh insights to their data. It wasn't that I was just more up to date on current technology, markets or trends; it was that I was able to recognize patterns and bring new perspectives to what these very smart people already knew. In hindsight, mentorship is a synergistic relationship.

In many ways, it's easier for a teacher to remain a teacher to his former students than to become a mentor. The teacher still feels a sense of authority and shares his wisdom when asked. The role played by both teacher and student remains essentially the same, and so the relationship doesn't need to change. It also doesn't get to grow.

There is nothing wrong with this sort of relationship, nothing at all. I enjoy being a teacher to some of my once and future students. But there is a depth to a mentorship that makes it special and harder to come by. A mentor gets to learn just as much as he teaches. The connection between mentor and young colleague does not feel as formal as the teacher/learner relationship one has in a classroom. It really is the engagement of two colleagues at different stages in their careers, sharing and learning together.

Blank's advice is sound. If what you need is a coach or a teacher, then try to find one of those. Seek a mentor when you need something more, and when you are ready and willing to contribute to the relationship.

As I said, it's an honor when a former student thinks of me as a mentor, because that means not only do they value my knowledge, expertise, and counsel but also they are willing to share their knowledge, expertise, and experience with me.

April 26, 2011 4:41 PM

Students Getting There Faster

I saw a graphic over at Gaping Void this morning that incited me to vandalism:

A lot of people at our colleges and universities seem to operate under the assumption that our students need us in order to get where they need to be. In A.D. 1000, that may have been true. Since the invention of the printing press, it has been becoming increasingly less true. With the invention of the digital computer, the world wide web, and more and more ubiquitous network access, it's false, or nearly so. I've written about this topic from another perspective before.

Most students don't need us, not really. In my discipline, a judicious self-study of textbooks and all the wonderful resources available on-line, lots of practice writing code, and participation in on-line communities of developers can give most students a solid education in software development. Perhaps this is less true in other disciplines, but I think most of us greatly exaggerate the value of our classrooms for motivated students. And changes in technology put this sort of self-education within reach of more students in more disciplines every day.

Even so, there has never been much incentive for people not to go to college, and plenty of non-academic reasons to go. The rapidly rising cost of a university education is creating a powerful financial incentive to look for alternatives. As my older daughter prepares to head off to college this fall, I appreciate that incentive even more than I did before.

Yet McLeod's message resonates with me. We can help most students get where they need to be faster than they would get there without us.

In one sense, this has always been true. Education is more about learning than teaching. In the new world created by computing technologies, it's even more important that we in the universities understand that our mission is to help people get where they need to be faster and not try to sell them a service that we think is indispensable but which students and their parents increasingly see as a luxury. If we do that, we will be better prepared for reality as reality changes, and we will do a better job for our students in the meantime.

April 21, 2011 8:10 PM

Agile Approaches and the Small

Seth Godin recently blogged on the economies of small:

I think we embraced scale as a goal when the economies of that scale were so obvious that we didn't even need to mention them. Now that it's so much easier to produce a product in the small and market a product in the small, and now that it's so beneficial to offer a service to just a few, with focus and attention, perhaps we need to rethink the very goal of scale.

Agile approaches to software development exploit the economies of small:

- small promises

- short cycles

- small teams

- small steps

- small changes

- continuous integration

Traditional software engineering, based as it is on an engineering metaphor, seems invested in traditional economies of scale. That was a natural result of the metaphor but also the tools of the time. Back when hardware and software made it more difficult to write software rapidly over short iterations, scale in the traditional sense -- large -- helped to make processes and maybe even people more efficient.

Things have changed.

April 19, 2011 6:04 PM

A New Blog on Patterns of Functional Programming

(... or, as my brother likes to say about re-runs, "Hey, it's new to me.")

I was excited this week to find, via my Twitter feed, a new blog on functional programming patterns by Jeremy Gibbons, especially an entry on recursion patterns. I've written about recursion patterns, too, though in a different context and for a different audience. Still, the two pieces are about a common phenomenon that occurs in functional programs.
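For readers who have not met the term, here is a minimal sketch of what a "recursion pattern" can look like. It is my own illustration in Python, not code from Gibbons's blog or from my earlier piece, and the function names are hypothetical. Two functions share the same recursive shape, and a small helper captures that shape so it can be reused.

```python
# A hypothetical illustration: the same recursive shape appears in both
# functions, so it can be factored out into a reusable "fold" helper.

def total(items):
    """Sum a list by structural recursion."""
    if not items:
        return 0
    return items[0] + total(items[1:])

def length(items):
    """Count the elements of a list by structural recursion."""
    if not items:
        return 0
    return 1 + length(items[1:])

def fold_right(combine, base, items):
    """Capture the shared pattern: a base case plus a combining step."""
    if not items:
        return base
    return combine(items[0], fold_right(combine, base, items[1:]))

def total_via_fold(items):
    """Same result as total, written with the shared pattern."""
    return fold_right(lambda head, rest: head + rest, 0, items)

def length_via_fold(items):
    """Same result as length, written with the shared pattern."""
    return fold_right(lambda _head, rest: 1 + rest, 0, items)

print(total_via_fold([1, 2, 3, 4]), length_via_fold([1, 2, 3, 4]))
```

The helper is only the code part of the idea. Knowing when to reach for it, and what it costs, is the part that still needs prose.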

I poked around the blog a bit and soon ran across articles such as Lenses are the Coalgebras for the Costate Comonad. I began to fear that the patterns on this blog would not be able to help the world come to functional programming in the way that the Gang of Four book helped the world come to object-oriented programming. As difficult as the GoF book was for every-day programmers to grok, it eventually taught them much about OO design and helped to make OO programming mainstream. Articles about coalgebras and the costate comonad are certainly of value, but I suspect they will be most valuable to an audience that is already savvy about functional programming. They aren't likely to reach every-day programmers in a deep way or help them learn The Functional Way.

But then I stumbled across an article that explains OO design patterns as higher-order datatype-generic programs. Gibbons didn't stop with the formalism. He writes:

Of course, I admit that "capturing the code parts of a pattern" is not the same as capturing the pattern itself. There is more to the pattern than just the code; the "prose, pictures, and prototypes" form an important part of the story, and are not captured in a HODGP representation of the pattern. So the HODGP isn't a replacement for the pattern.

This is one of the few times that I've seen an FP expert speak favorably about the idea that a design pattern is more than just the code that can be abstracted away via a macro or a type class. My hope rebounds!

There is work to be done in the space of design patterns of functional programming. I look forward to reading Gibbons's blog as he reports on his work in that space.

April 18, 2011 4:44 PM

At the Penumbra of Intersections

Three comments on my previous post, Intersections, in decreasing order of interest to most readers.

On Mediocrity. Mediocrity is a risk if we add so many skills to our portfolio that we don't have the ability or energy to be good at all of them. This article talks about start-up companies, but I think its lesson applies more broadly to the idea of carving out one's niche. For start-ups as in life, mediocrity is often a worse outcome than failure. When we fail, we know to move on and do. When we achieve mediocrity, sometimes we are just good enough to feel comfortable. It's hard to come up with the willingness to give up the security, or the energy it takes to push ourselves out of the local maximum. But then we miss out on the chance to reach our full potential.

Who Is "Non-Technical"? My post said, "my talk considered the role of programming in the future of people who study human communication, history, and other so-called non-technical fields". I qualified "non-technical", but still I wonder: How many disciplines are non-technical these days, in the era of big data and computation everywhere? How many of these disciplines will be non-technical in the same way 20 years from now?

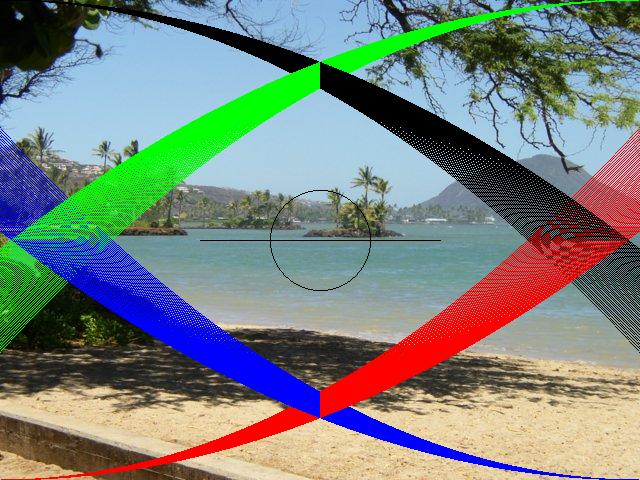

Yak Shaving. I went looking for Venn diagrams to illustrate my post, and then realized I should just create my own. As I played with a couple of tools, I remembered a cool CS 1 assignment I used several years ago and one student's solution in particular. Suddenly I was obsessed with using my own homegrown tool. That meant finding the course archive and Keller's solution. Then finding my own solution, which had a couple of extra features. Then digging out Dr. Java and making it work with a current version of the media comp tools. Then extending the simple graphics language we used, and refactoring my code, and... The good news is that I now have running again a nice, very simple tool for drawing simple graphics, one that can be used to annotate existing images. And I got to have fun tinkering with code for a while on a cloudy Sunday afternoon.

April 17, 2011 12:43 PM

Intersections

It seems I've been running into intersections everywhere.

In one of Richard Feynman's letters, he wrote of two modes for scientists: deep and broad. Scientists who focus on one thing typically win the big awards, but Feynman reassured his correspondent that scientists who work broadly in the intersections of multiple disciplines can make valuable contributions.

Scott Adams wrote about the value of combining skills. John Cook commented on Adams's idea, and one of Cook's readers commented on Cook's comment.

A week ago Friday, I spoke at a gathering of professors, students, and local business people who are interested in interactive digital technologies. Among other things, my talk considered the role of programming in the future of people who study human communication, history, and other so-called non-technical fields. One of my friends and former students, now a successful entrepreneur who employs many of our current and former students, spoke about how to succeed in business as a start-up. His talk inspired the audience with the power of passion, but he also gave some practical advice. It is difficult to be the best at any one thing, but if you are very good at two or three or five, then you can be the best in a particular market niche. The power of the intersection.

Wade used a Venn diagram to express his idea:

The more skills -- "core competencies", in the jargon of business and entrepreneurship -- you add, the more unique your niche:

As I thought about intersections in all these settings, a few ideas began to settle in my mind:

Adding more circles to your Venn diagram is a good thing, even if you feel they limit your ability to excel in one of the other areas. Each circle adds depth to your niche at the intersection. Having several skills gives you the agility to shift your focus as the world changes -- and as you change.

At some point, adding more circles to your Venn diagram starts to hurt you, not help you. For most of us, there is a limit to the number of different areas we can realistically be good in. If we are unable to perform at a high level in all the areas, or keep up with the changes as they evolve, we end up being mediocre. Mediocrity isn't usually good enough to excel in the market, and it isn't a fun place to live.

The fact that we can create intersections in which to excel is a great opportunity for people who do not have the interest or inclination to focus on any one area too narrowly. Perhaps we can't all be Nobel Prize-winning physicists, but in principle we all can make our own niche.

The challenge is that you still have to work hard. This isn't about being the sort of dilettante who skims along the surface of knowledge without ever getting wet. It's about being good at several things, and that takes time and energy.

Of course, that is what makes Nobel Prize winners, too: hard work. They simply devote nearly all of their time and energy to one discipline.

I think it's good news that hard work is the common denominator of nearly all success. We may not control many things in this world, but we have control over how hard we work.

April 14, 2011 10:20 PM

Al Aho, Teaching Compiler Construction, and Computational Thinking

Last year I blogged about Al Aho's talk at SIGCSE 2010. Today he gave a major annual address sponsored by the CS department at Iowa State University, one of our sister schools. When former student and current ISU lecturer Chris Johnson encouraged me to attend, I decided to drive over for the day to hear the lecture and to visit with Chris.

Aho delivered a lecture substantially the same as his SIGCSE talk. One major difference was that he repackaged it in the context of computational thinking. First, he defined computational thinking as the thought processes involved in formulating problems so that their solutions can be expressed as algorithms and computational steps. Then he suggested that designing and implementing a programming language is a good way to learn computational thinking.

With the talk so similar to the one I heard last year, I listened most closely for additions and changes. Here are some of the points that stood out for me this time around, including some repeated points:

- One of the key elements for students when designing a domain-specific language is to exploit domain regularities in a way that delivers expressiveness and performance.

- Aho estimates that humans today rely on somewhere between 0.5 and 1.0 trillion lines of software. If we assume that the total cost associated with producing each line is $100, then we are talking about a most serious investment. I'm not sure where he found the $100/LOC number, but...

- Awk contains a fast, efficient regular expression matcher. He showed a figure from the widely read Regular Expression Matching Can Be Simple And Fast, with a curve showing Awk's performance -- quite close to the Thompson NFA curve from the paper. Algorithms and theory do matter.

- It is so easy to generate compiler front ends these days using good tools in nearly every implementation language. This frees up time in his course for language design and documentation. This is a choice I struggle with every time I teach compilers. Our students don't have as strong a theory background as Aho's do when they take the course, and I think they benefit from rolling their own lexers and parsers by hand. But I'm tempted by what we could do with the extra time, including processing a more compelling source language and better coverage of optimization and code generation.

- An automated build system and a complete regression test suite are essential tools for compiler teams. As Aho emphasized in both talks, building a compiler is a serious exercise in software engineering. I still think it's one of the best SE exercises that undergrads can do.

- The language for quantum looks cool, but I still don't understand it.
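To put the software estimate above in perspective, here is the back-of-the-envelope arithmetic as a small Python sketch. The line counts and the $100-per-line figure are the ones quoted in the talk; the names are my own, and the result is only as good as those assumed inputs.

```python
# Back-of-the-envelope check of the figures quoted in the talk.
# Inputs are the numbers reported above; the names are illustrative.
LOW_LINES = 0.5e12    # 0.5 trillion lines of software
HIGH_LINES = 1.0e12   # 1.0 trillion lines of software
COST_PER_LINE = 100   # assumed total cost per line, in US dollars

def total_cost(lines, cost_per_line=COST_PER_LINE):
    """Return the implied total investment in dollars."""
    return lines * cost_per_line

low = total_cost(LOW_LINES)
high = total_cost(HIGH_LINES)
print(f"${low / 1e12:.0f} trillion to ${high / 1e12:.0f} trillion")
```

If the assumed inputs hold, the implied investment is somewhere between $50 trillion and $100 trillion.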

After the talk, someone asked Aho why he thought functional programming languages were becoming so popular. Aho's answer revealed that he, like any other person, has biases that cloud his views. Rather than answering the question, he talked about why most people don't use functional languages. Some brains are wired to understand FP, but most of us are wired for, and so prefer, imperative languages. I got the impression that he isn't a fan of FP and that he's glad to see it lose out in the social Darwinian competition among languages.

If you'd like to see an answer to the question that was asked, you might start with Guy Steele's StrangeLoop 2010 talk. Soon after that talk, I speculated that documenting functional design patterns would help ease FP into the mainstream.

I'm glad I took most of my day for this visit. The ISU CS department and chair Dr. Carl Chang graciously invited me to attend a dinner this evening in honor of Dr. Aho and the department's external advisory board. This gave me a chance to meet many ISU CS profs and to talk shop with a different group of colleagues. A nice treat.

Posted by Eugene Wallingford | Permalink | Categories: Computing, Software Development, Teaching and Learning

April 12, 2011 7:55 PM

Commas, Refactoring, and Learning to Program

The most important topic in my Intelligent Systems class today was the comma. Over the last week or so, I had been grading their essays on communicating the structure and intent of programs. I was not all that surprised to find that their thoughts on communicating the structure and intent of programs were not always reflected in their essays. Writing well takes practice, and these essays are for practice. But the thing that stood out most glaringly from most of the papers was the overuse, misuse, and occasional underuse of the comma. So after I gave a short lecture on case-based reasoning, we talked about commas. Fun was had by all, I think.

On a more general note, I closed our conversation with a suggestion that perhaps they could draw on lessons they learn writing, documenting, and explaining programs to help them write prose. Take small steps when writing new content, not worrying as much about form as about the idea. Then refactor: spend time reworking the prose, rewriting, condensing, and clarifying. In this phase, we can focus on how well our text communicates the ideas it contains. And, yes, good structure can help, whether at the level of sentences, paragraphs, or the whole essay.

I enjoyed the coincidence of later reading this passage in Roy Behrens's blog, The Poetry of Sight:

Fine advice from poet Richard Hugo in The Triggering Town: Lectures and Essays on Poetry and Writing (New York: W.W. Norton, 1979)--

Lucky accidents seldom happen to writers who don't work. You will find that you may rewrite and rewrite a poem and it never seems quite right. Then a much better poem may come rather fast and you wonder why you bothered with all that work on the earlier poem. Actually, the hard work you do on one poem is put in on all poems. The hard work on the first poem is responsible for the sudden ease of the second. If you just sit around waiting for the easy ones, nothing will come. Get to work.

This is an important lesson for programmers, especially relative beginners, to learn. The hard work you do on one program is put in on all programs. Get to work. Write code. Refactor. Writing teaches writing.

~~~~

Long-time readers of this blog may recall that I once recommended The Triggering Town in an entry called Reading to Write. It is still one of my favorites -- and due for another reading soon!

April 10, 2011 9:23 PM

John McPhee on Writing, Teaching, and Programming

John McPhee is one of my favorite non-fiction writers. He is a long-form journalist who combines equal measures of detailed fact gathering and a literary style that I enjoy as a reader and aspire to as a writer. For years, I have used selections from The John McPhee Reader in advice to students on how to gather requirements for software, including knowledge acquisition for AI systems.

This weekend I enjoyed Peter Hessler's interview of McPhee in The Paris Review, John McPhee, The Art of Nonfiction No. 3. I have been thinking about several bits of McPhee's wisdom in the context of both writing and programming, which is itself a form of writing. I also connected with a couple of his remarks about teaching young writers -- and programmers.

One theme that runs through the interview serves as a universal truth connecting writing and programming:

Writing teaches writing.

In order to write or to program, one must first learn the basics, low-level skills such as grammar, syntax, and vocabulary. Both writers and programmers typically go on to learn higher-level skills that deal with the structure of larger works and the patterns that help creators create and readers understand. In the programming world, we call these "design" skills, though I imagine that's too much an engineering term to appeal to writers.

Once you have these skills under your belt, there isn't much more to teach, but there is plenty to learn. We help newbies learn by sharing what we create, by reading and critiquing each other's work, and by talking about our craft. But doing it -- writing, whether it's stories, non-fiction, or computer programs -- that's the thing.

McPhee learned this in many ways, not the least of which was one of the responses he received to his first novel, which he wrote in lieu of a dissertation (much to the consternation of many Princeton English professors!). McPhee said

It had a really good structure and was technically fine. But it had no life in it at all. One person wrote a note on it that said, You demonstrated you know how to saddle a horse. Now go find the horse.

He still had a lot to learn. This is a challenge for many young programmers whom I teach. As they learn the skills they need to become competent programmers, even excellent ones, they begin to realize they also need a purpose. At a miniconference on campus last week, a successful former student encouraged today's students to find and nurture their own passions. In those passions they will also find the energy and desire to write, write, write, which is the only way he knew of to master the craft of programming.

Finding passion is hard, especially for students who come through an educational system that sometimes seems more focused on checking off boxes than on growing a person.

Luckily, though, finding problems to work on (or stories to write) can be much less difficult. It requires only that we are observant, that we open our eyes and pay attention. As McPhee says:

There are zillions of ideas out there--they stream by like neutrons.

For McPhee, most of the ideas he was willing to write about, spending as much as three years researching and writing, relate to things he did when he was a kid. That's not too far from the advice we give young software developers: write the programs you need or want to use. It's okay to start with what you like and know even if no one else wants those things. First of all, maybe they do. And second, even if they really don't, those are the problems on which you will be willing to work. Programming teaches programming.

Keep in mind: finding ideas isn't enough. You have to do the work. In the end, that is the measure of a writer as well as the measure of a programmer.

If you have already found your passion, then finding cool things to do gets even easier. Passion and obsession seem to heighten our senses, making it easier to spot potential new ideas and solutions. I just saw a great example of this in the movie The Social Network, when an exhausted Mark Zuckerberg found the insight for adding Relationship Status to Facebook from a friend's plaintive request for help finding out whether a girl in his Art History class was available.

So, you have an idea. How long does it take to write?

... It takes as long as it takes. A great line, and it's so true of writing. It takes as long as it takes.

Despite what we learn in school, this is true of most things. They take however long they take. This was a hard lesson for me to learn. I was a pretty good student in school, and I learned early on how to prosper in the rhythm of the quarter, semester, and school year. Doing research in grad school helped me to see that real problems are much messier, much less predictable than the previous sixteen years of school had led me to believe.

As a CS major, though, I began to learn this lesson in my last year as an undergrad, writing the program at the core of my senior project. It takes as long as it takes, whatever the university's semester calendar says. Get to work.

As a teacher, I found most touching an answer McPhee gave when asked why he still teaches writing courses at Princeton. He is well past the usual retirement age and might be expected to slow down, or at least spend all of his time on his own writing. Every teacher who reads the answer will feel its truth:

But above all, interacting with my students--it's a tonic thing. Now I'm in my seventies and these kids really keep me alive. To talk to a nineteen-year-old who's really a good writer, and he's sitting in here interested in talking to me about the subject--that's a marvelous thing, and that's why I don't want to stop.

As I read this, my mind began to recount so many students who have changed me as a programmer and teacher. The programmer, artist, and musician who wanted to write a program that could express artistic style, who is now a filmmaker inflamed with understanding man and his relationship to the world. The high school kid with big ideas about fonts, AI, and design whose undergrad research qualified for a national ACM competition and who is now a research scientist at Apple. The brash PR student who wanted to become a programmer and did, writing a computer science thesis and an even more important communications studies thesis, who is now set on changing how we study and understand human communication in the age of the web. The precocious CS student whose ideas were bigger than my courses before he set foot in my classroom, who worked hard learning things beyond what we were teaching and eventually doubling back to learn what he had missed, an entrepreneur with a successful tech start-up who is now helping a new generation of students learn and dream.

The list could go on. Teaching keeps us alive. Students learn, we hope, and so do we. They keep us in the present, where the excitement of new ideas is fresh. And, as McPhee admits with no sense of shame or embarrassment, it is flattering, too.

April 08, 2011 6:07 AM

Perfectly Reasonable Deviations

I recently mentioned discovering a 2005 collection of Richard Feynman's letters. In a letter in which Feynman reviews grade-school science books for the California textbook commission, I ran across the sentence that gives the book its title. It stood out to me for more than just the title:

[In parts of a particular book] the teacher's manual doesn't realize the possibility of correct answers different from the expected ones and the teacher instruction is not enough to enable her to deal with perfectly reasonable deviations from the beaten track.

I occasionally see this in my daughters' math and science instruction, but mostly I've been surprised at how well their teachers do. The textbooks often suffer from the ills that Feynman complains about (too many words, rules, and laws to memorize, with little emphasis on understanding). The teachers do a reasonable job making sense of it all. It's a tough gig.

In many ways, university teachers have an easier job, but we face this problem, too. I'm not a great teacher, but one thing I think I've learned since the beginning of my time in the classroom is that students deviate from the beaten track in perfectly reasonable ways all the time. This is true of strong students and weak students alike.

Sometimes the reasonableness of the deviation is a result of my own teaching. I have been imprecise, or I've taught using implicit assumptions my students don't share. These students are learning in an uncertain space, and sometimes they learn differently than I intended. Of course, sometimes they learn the wrong thing, and I need to fix that. But when their deviations are reasonable, I need to recognize that. Sometimes we recognize the new idea and applaud the student for the deduction. Sometimes we discuss the deviation in detail, using the differences as an opportunity to learn more deeply.

Sometimes a reasonable deviation results simply from the creativity of the students. That's a good result, too. It creates a situation in which I am likely to learn as much as, or more than, my students do from the detour.

April 06, 2011 7:10 PM

Programming for All: The Programming Historian

Knowing how to program is crucial for doing advanced research with digital sources.

This is the opening line of William Turkel's how-to for digital researchers in the humanities, A Workflow for Digital Research Using Off-the-Shelf Tools. This manual helps such researchers "Go Digital", which is, he says, the future in disciplines such as history. Turkel captures one of the key changes in mindset that faces humanities scholars as they make the move to the digital world:

In traditional scholarship, scarcity was the problem: travel to archives was expensive, access to elite libraries was gated, resources were difficult to find, and so on. In digital scholarship, abundance is the problem. What is worth your attention or your trust?

Turkel suggests that programs -- and programming -- are the only way to master the data. Once you do master the data, you can be an even more productive researcher than you were in the paper-only world.

I haven't read any of Turkel's code yet, but his how-to shows a level of sophistication as a developer. I especially like that his first step for the newly-digital is:

Start with a backup and versioning strategy.

There are far too many CS grads who need to learn the version control habit, and a certain CS professor has been bitten badly by a lack of back-up strategy. Turkel wisely makes this Job #1 for digital researchers.

Though the how-to manual does not currently have a chapter on programming itself, he does talk about using RSS feeds to do work for you and about measuring and refactoring constantly -- though at this point he is talking about one's workflow, not one's programs. Still, it's a start.

As soon as I have some time, I'm going to dig into Turkel's The Programming Historian, "an open-access introduction to programming in Python, aimed at working historians". I think there is a nice market for many books like this.

This how-to pointed me toward a couple of tools I might add to my own workflow. One is Feed43, a web-based tool to create RSS feeds for any web page (an issue I've discussed here before). On first glance, Feed43 looks a little complex for beginners, but it may be worth learning. The manual also reminded me of Mendeley, an on-line reference manager. I've been looking for a new tool to manage bibliographies, so I'll give it another look.

But the real win here is a path for historians into the digital world and then into programming -- because it makes historians more powerful at what they do. Superhuman strength for everyone!

April 04, 2011 7:26 PM

Saying "Hell Yeah! or No" to "Hell Yeah! or No"

Sometimes I find it hard to tell someone 'no',

but I rarely regret it.

I have been thinking a lot lately about the Hell Yeah! or No mindset. This has been the sort of year that makes me want to live this way more readily. It would be helpful when confronting requests that come in day to day, the small stuff that so quickly clutters a day. It would also be useful when facing big choices, such as "Would you like another term as department head?"

Of course, like most maxims that wrap up the entire universe in a few words, living this philosophy is not as simple as we might like it to be.

The most salient example of this challenge for me right now has to do with granularity. Some "Hell Yeah!"s commit me to other yesses later, whether I feel passionate about them or not. If I accept another term as head, I implicitly accept certain obligations to serve the department, of course, and also the dean. As a department head, I am a player on the dean's team, which includes serving on certain committees across the college and participating in college-level discussions of strategy and tactics. The 'yes' to being head is, in fact, a bundle of yesses, more like a project in Getting Things Done than a next action.

Another thought came to mind while ruminating on this philosophy, having to do with opportunities. If I do not find myself with the chance to say "Hell Yeah!" very often, then I need to make a change. Perhaps I need to change my attitude about life, to accept the reality of where and who I am. More likely, though, I need to change my environment. I need to put myself in more challenging and interesting situations, and hang out with people who are more likely to ask the questions that provoke me to say "Hell Yeah!"

April 03, 2011 10:07 AM

Runner, Interrupted

Have you ever confused a dream with life?

I haven't blogged about running in a long while. Then again, I haven't run in a while now.

If you'd rather not hear me whine about that, you should skip the rest of this post!

I was getting through winter well enough, running 30+ miles each week. The coldest weather wasn't even stopping me; long, cold runs were the norm on Sunday mornings.

On March 4, I ran a good track workout, but as the day wore on I knew I wasn't feeling well. What ensued was the worst flu or something that I can remember. It knocked me out for two full weeks, through SIGCSE and spring break. I made it to Dallas for the conference, but my paucity of SIGCSE-themed posts was certainly a product of just how bad I felt.

Just as I was ready to start running again, my right knee became the problem. I don't recall suffering any particular injury to it at the time. I woke up one day with a stiff knee. Within a couple of days I felt pain while walking, and soon it was swollen and stiffer.

It's now been two weeks more. I'm still hobbling around, knee wrapped tightly to immobilize it. The swelling and pain have decreased, and I hope that means I am on a trajectory to normal function. I have a second doctor's appointment this coming week. With any luck, an MRI will be able to tell us what is going on in there.

While I don't recall suffering any particular injury to the knee recently, I suspect that this is related to an injury I do remember. In 1999, I was at ChiliPLoP. We were playing doubles tennis after a long day working on elementary patterns. About an hour in, I was back and my partner was at the net. One of our opponents hit a bunny that floated enticingly over the net on my side of the court. I called my partner off and ran in for what would be an impressive smash. My partner must not have heard me. He ran along the net, apparently with the same idea in mind. As I stretched out to strike the ball, he struck me -- solidly on the inside of my knee, which buckled outwards.

For the rest of ChiliPLoP, I was pretty well immobile. After I returned home, I went to a highly respected orthopedic surgeon. He suggested a conservative plan, letting the knee heal and then strengthening it with targeted exercise. If all seemed well, we would skip surgery and see what happened.

After rest and exercise, the knee seemed fine, so we let it be. And so it was for twelve years. And not just twelve years of a sedentary lifestyle. Since 2003, I have kept close records of my running, and by my tally I have run about 13,000 miles. In all that time, I've never had any knee pain, and scant few days of running lost to injury. This injury could be the result of cumulative wear and tear, but I really would have expected to see symptoms of the wearing down over time.

I do hope that the doctor can figure out what's going on. With any luck, it's an aberration, and I'll be back on the trail soon!

(Writing this reminds me that I still haven't posted my Running Year in Review for 2010. I have started it a time or two and need only to finish. You'd think that not running would give me plenty of time...)